The Truth About LLM Evals: Why Your AI Model Might Be Better (or Worse) Than You Think

Last Updated on January 2, 2026 by Editorial Team

Author(s): Nikhil

Originally published on Towards AI.

When you’re building or deploying a large language model (LLM), one critical question emerges: how do you know if it’s actually good?

Unlike traditional software, where you can measure success with clear checks like “did it crash?”, evaluating LLMs is more nuanced. You need to assess whether the model generates accurate, coherent, and relevant responses. That’s where evaluations, or “evals”, come in.

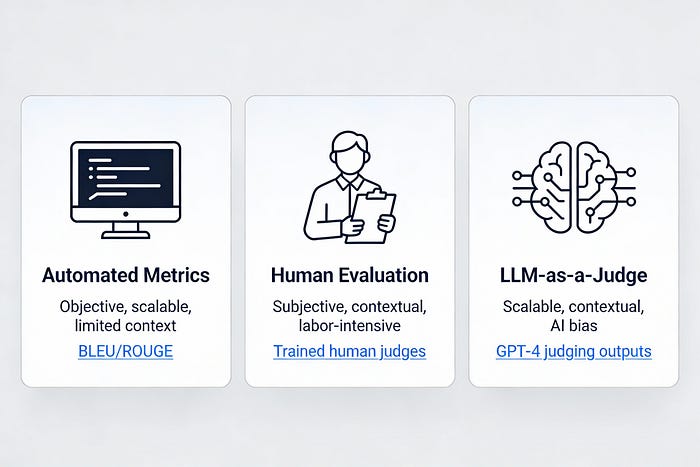

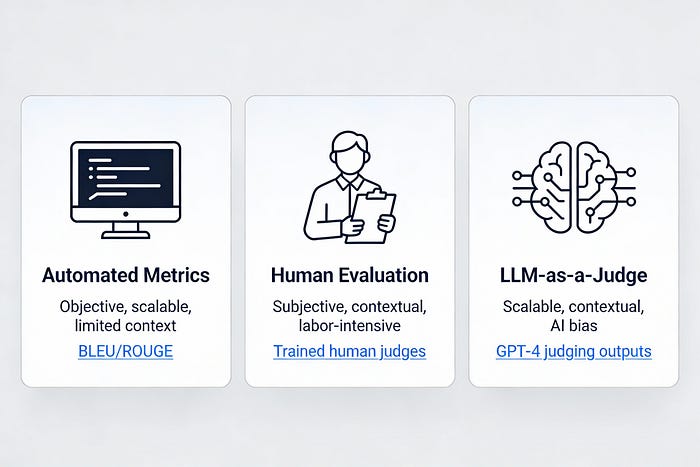

This article covers how to evaluate large language models (LLMs) through systematic assessments, called “evals”, of their effectiveness, ethics, and safety. It describes three main approaches: automated metrics, human evaluation, and using another LLM as a judge. Each approach has its own advantages and challenges, and the article argues that combining all three gives the most accurate picture. It also highlights popular benchmarks used for standardized comparisons.
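To make the "automated metrics" approach concrete, here is a minimal sketch in Python. It is an illustration, not the article's own method: the choice of exact match and token-level F1, the function names (`normalize`, `exact_match`, `token_f1`, `run_eval`), and the sample data are all assumptions made for the example.

```python
from collections import Counter


def normalize(text: str) -> list[str]:
    """Lowercase and split on whitespace (a deliberately simple normalizer)."""
    return text.lower().split()


def exact_match(prediction: str, reference: str) -> float:
    """Return 1.0 if the normalized token sequences are identical, else 0.0."""
    return float(normalize(prediction) == normalize(reference))


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token precision and recall against the reference."""
    pred, ref = normalize(prediction), normalize(reference)
    if not pred or not ref:
        # Both empty counts as a match; one empty counts as a miss.
        return float(pred == ref)
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


def run_eval(pairs: list[tuple[str, str]]) -> dict[str, float]:
    """Average both metrics over (model_output, reference) pairs."""
    if not pairs:
        raise ValueError("need at least one (prediction, reference) pair")
    n = len(pairs)
    return {
        "exact_match": sum(exact_match(p, r) for p, r in pairs) / n,
        "token_f1": sum(token_f1(p, r) for p, r in pairs) / n,
    }


if __name__ == "__main__":
    # Hypothetical model outputs paired with hand-written references.
    samples = [
        ("Paris", "paris"),
        ("The capital is Paris", "Paris"),
    ]
    print(run_eval(samples))
```

Metrics like these are cheap and repeatable, but they only reward surface overlap with a reference answer, which is one reason the article recommends pairing them with human review and an LLM judge.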
