The Prompting Language Every AI Engineer Should Know: A BAML Deep Dive

Last Updated on November 25, 2025 by Editorial Team

Author(s): Lorre Atlan, PhD

Originally published on Towards AI.

What is BAML?

As LLM applications move to production, developers face a critical challenge: LLMs frequently generate malformed JSON and unparseable outputs, leading to application failures and degraded user experiences. BAML addresses this by providing a type-safe prompting language with robust parsing that handles LLM output errors automatically, while reducing token costs and improving developer productivity.

BAML stands for Boundary AI Markup Language, a new language for writing prompts that can be called from any language (Python, Go, TypeScript, Ruby, etc.). Every prompt is a function, so Language Server Protocol (LSP) tooling can be used to find and test prompts (including multi-modal assets). BAML is both a configuration file format and a prompting language that prioritizes type-safety and an exceptional developer experience. It simplifies building great UX (e.g. streaming) by reducing boilerplate code, while ensuring high accuracy and consistency with LLMs. The Next.js demos with streaming and generative UIs are worth a look.

Use Cases

Classification

- Binary, e.g. SPAM vs. NOT_SPAM

- Multi-label, e.g. a support ticket classifier that can assign multiple relevant categories to each ticket.

- Symbol tuning during classification can help LLMs focus on descriptions instead of enum or property names. Aliasing field names to abstract symbols like “k1”, “k2”, etc. can improve classification results. See the paper Symbol Tuning Improves In-Context Learning in Language Models for more details.
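The aliasing idea can be sketched in plain Python, independent of BAML: the model only ever sees abstract symbols plus descriptions, and the application maps the returned symbol back to the real label. The category names, descriptions, and helper functions below are hypothetical illustrations, not BAML's API.

```python
# Hypothetical categories for a support-ticket classifier (not from the article).
CATEGORIES = {
    "REFUND": "Customer wants a refund for a product",
    "CANCEL_ORDER": "Customer wants to cancel an order that has not shipped",
    "TECH_SUPPORT": "Customer needs help using or setting up a product",
}


def build_aliases(categories: dict[str, str]) -> dict[str, str]:
    """Map each real label to an abstract symbol k1, k2, ... in a stable order."""
    return {label: f"k{i}" for i, label in enumerate(categories, start=1)}


def render_prompt(ticket: str, categories: dict[str, str]) -> str:
    """Show the model only the abstract symbols and their descriptions."""
    aliases = build_aliases(categories)
    lines = [f"{aliases[label]}: {desc}" for label, desc in categories.items()]
    return (
        "Classify the ticket into one of these categories.\n"
        + "\n".join(lines)
        + f"\n\nTicket: {ticket}\nAnswer with the symbol only (e.g. k1)."
    )


def decode(answer: str, categories: dict[str, str]) -> str:
    """Map the symbol the model returned back to the real label."""
    reverse = {sym: label for label, sym in build_aliases(categories).items()}
    return reverse[answer.strip()]


print(render_prompt("I was charged twice, please send my money back", CATEGORIES))
print(decode(" k1 ", CATEGORIES))  # REFUND
```

Because the label names never appear in the prompt, the model has to rely on the descriptions, which is the effect symbol tuning aims for.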

Structured Generation

- LLMs are just bad at producing JSON when left to their own devices.

- Not every LLM supports tool calling or structured output methods.

- Most APIs rely on a JSON schema, which is incredibly wasteful in terms of tokens.

- Models that do support tool/function calling often show degraded accuracy with function calling compared to prompting-based techniques.

- Structured generation uses an LLM to produce parsed data that follows a data model, starting from unstructured content.

- LLM libraries like LangChain, Instructor, and Marvin rely on retries: they call the model until it produces parseable output, or feed a parse error back to the model so it can be fixed on the next attempt. This leads to higher latency and token costs.

- Applications include adding citations to RAG results, PII data extraction/scrubbing, and extracting action items from meeting transcripts.
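To make the cost of the retry strategy concrete, here is a minimal sketch of a naive "re-call until `json.loads` succeeds" loop, plus the expected number of calls under the simplifying assumption that each attempt fails independently with the same probability. The function names and the fake model are hypothetical; this is not how any particular library implements retries.

```python
import json
from typing import Callable


def call_until_parseable(
    call_llm: Callable[[str], str], prompt: str, max_attempts: int = 3
) -> tuple[dict, int]:
    """Naive retry strategy: re-call the model until json.loads succeeds.

    Returns the parsed object and the number of model calls spent.
    """
    current_prompt = prompt
    for attempt in range(1, max_attempts + 1):
        raw = call_llm(current_prompt)
        try:
            return json.loads(raw), attempt
        except json.JSONDecodeError as err:
            # Feed the parse error back so the next attempt can correct it.
            current_prompt = f"{prompt}\n\nYour last answer was invalid JSON ({err.msg}). Try again."
    raise ValueError(f"no parseable output after {max_attempts} attempts")


def expected_calls(p_fail: float, max_attempts: int) -> float:
    """Expected model calls if each attempt fails independently with probability p_fail."""
    # Attempt k (1-indexed) happens only if the previous k - 1 attempts all failed.
    return sum(p_fail ** k for k in range(max_attempts))


# Fake model for illustration: the first reply has a trailing comma, the second is valid.
replies = iter(['{"name": "Ada",}', '{"name": "Ada"}'])
obj, calls = call_until_parseable(lambda _prompt: next(replies), "Extract the name.")
print(obj, calls)                         # {'name': 'Ada'} 2
print(round(expected_calls(0.05, 3), 4))  # 1.0525
```

With the ~5% failure rate cited in the Summary, the average overhead is only about 5% more calls, but every failed attempt adds a full round-trip of latency for that request.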

Using Chain-of-Thought (CoT) Prompting During Structured Extraction

There are a few different ways to implement chain-of-thought prompting, especially for structured outputs.

- Require the model to reason before outputting the structured object.

- Require the model to flexibly reason before outputting the structured object.

- Embed reasoning in the structured object.

- Ask the model to embed reasoning as comments in the structured object.
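The first pattern (reason before the structured object) needs a parser that skips the free-form reasoning and keeps only the final object. A minimal sketch, assuming the prompt ends with an instruction like `COT_SUFFIX` and that braces inside JSON string values are balanced; both the constant and the helper are hypothetical, and BAML's own parser handles this differently.

```python
import json

# Hypothetical instruction appended to the prompt: brief reasoning first, then the object.
COT_SUFFIX = (
    "First, think step by step in a few short lines.\n"
    "Then output the answer as a single JSON object on the final lines."
)


def extract_final_object(response: str) -> dict:
    """Return the last top-level {...} object in a response that starts with reasoning.

    Scans backwards from the last '}' and tracks brace depth; assumes braces
    inside JSON string values are balanced (a simplification).
    """
    end = response.rfind("}")
    if end == -1:
        raise ValueError("no JSON object found")
    depth = 0
    for i in range(end, -1, -1):
        if response[i] == "}":
            depth += 1
        elif response[i] == "{":
            depth -= 1
            if depth == 0:
                return json.loads(response[i : end + 1])
    raise ValueError("unbalanced braces")


reply = """Reasoning:
- mentions a double charge
- asks for money back -> refund
{"category": "REFUND", "confidence": 0.9}"""
print(extract_final_object(reply))  # {'category': 'REFUND', 'confidence': 0.9}
```

The reasoning lines are still visible in the raw response, which is what makes this pattern useful for inspecting why a model chose a label.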

You can use BAML to better understand the performance of seemingly good LLMs via Chain-of-Draft (CoD) prompting. CoD is a less verbose form of CoT reasoning in which the LLM is asked to keep only a minimal draft of its reasoning process; the technique is described in the paper Chain of Draft: Thinking Faster by Writing Less. AI engineers can interrogate examples across many LLMs to understand how each LLM came to a decision and why it may misclassify an input.

What BAML does differently

1. Problem: JSON schemas are verbose and hard to read.

Solution: BAML replaces JSON schemas with TypeScript-like definitions, e.g. string[] is easier to understand than {"type": "array", "items": {"type": "string"}}.
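The difference in verbosity is easy to see by rendering the same schema both ways. This sketch converts a small subset of JSON Schema into TypeScript-like notation in the spirit of BAML's definitions; it is not BAML's actual prompt renderer, the `Resume` fields are hypothetical, and it compares characters rather than tokens because no tokenizer is assumed. It also ignores `required`, treating every field as required.

```python
import json

# A JSON Schema for a hypothetical Resume object (illustrative, not from the article).
schema = {
    "type": "object",
    "properties": {
        "name": {"type": "string"},
        "skills": {"type": "array", "items": {"type": "string"}},
        "years_experience": {"type": "integer"},
    },
    "required": ["name", "skills", "years_experience"],
}


def to_type_notation(node: dict) -> str:
    """Render a small subset of JSON Schema as TypeScript-like notation."""
    kind = node["type"]
    if kind == "array":
        return to_type_notation(node["items"]) + "[]"
    if kind == "object":
        fields = ", ".join(
            f"{key}: {to_type_notation(sub)}" for key, sub in node["properties"].items()
        )
        return "{ " + fields + " }"
    return {"string": "string", "integer": "int", "number": "float", "boolean": "bool"}[kind]


compact = to_type_notation(schema)
verbose = json.dumps(schema)
print(compact)  # { name: string, skills: string[], years_experience: int }
print(len(verbose), len(compact))
```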

2. Problem: JSON.parse fails when LLMs produce malformed output with missing quotes, brackets, or commas, causing application crashes.

Solution: BAML uses Schema-Aligned Parsing (SAP), a Rust-based algorithm that automatically corrects common LLM output errors in under 10ms. SAP replaces JSON.parse, so output that is not strictly valid JSON (and therefore uses fewer tokens) still parses without errors. BAML doesn't use json_schema but instead uses baml_schema, which is more compressed. It avoids characters like quotes, leading to up to 4x fewer tokens.

SAP handles:

- Unquoted strings

- Unescaped quotes and newlines

- Missing commas, colons, and brackets

- Misnamed keys

- Type casting (fractions to floats)

- Removing superfluous keys

- Stripping extraneous text

- Selecting the best output when multiple candidates exist

- Completing partial objects during streaming
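To get a feel for what "schema-aligned" parsing means, here is a toy parser that handles four of the cases listed above: stripping extraneous text, tolerating trailing commas, matching misnamed keys loosely, dropping superfluous keys, and casting fractions to floats. It is a far simpler cousin of SAP written for illustration only; BAML's real implementation is in Rust, covers many more cases (unquoted strings, missing brackets, streaming partials), and nothing below is its API. The `SCHEMA` and helper names are hypothetical.

```python
import json
import re
from fractions import Fraction

# Target schema for a hypothetical Ingredient object: field name -> Python type.
SCHEMA = {"name": str, "quantity": float, "unit": str}


def _normalize(key: str) -> str:
    """Compare keys loosely: 'Unit', 'unit ' and 'UNIT' all become 'unit'."""
    return re.sub(r"[^a-z0-9]", "", key.lower())


def lenient_parse(raw: str, schema: dict[str, type]) -> dict:
    """Toy schema-aligned parse, for illustration only."""
    # 1. Strip extraneous text (prose, markdown fences) around the outermost {...} span.
    start, end = raw.find("{"), raw.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no object found")
    body = raw[start : end + 1]
    # 2. Remove trailing commas before a closing brace or bracket.
    body = re.sub(r",\s*([}\]])", r"\1", body)
    data = json.loads(body)
    result = {}
    lookup = {_normalize(k): k for k in schema}
    for key, value in data.items():
        target = lookup.get(_normalize(key))
        if target is None:
            continue  # 3. Drop superfluous keys the schema does not define.
        expected = schema[target]
        if expected is float and isinstance(value, str):
            value = float(Fraction(value.strip()))  # 4. Cast "3/4" or "0.5" to a float.
        result[target] = expected(value)
    return result


reply = 'Sure! Here is the data:\n```json\n{"Name": "flour", "quantity": "3/4", "Unit": "cup", "notes": "sifted",}\n```'
print(lenient_parse(reply, SCHEMA))  # {'name': 'flour', 'quantity': 0.75, 'unit': 'cup'}
```

A strict `json.loads` on the same reply would fail on the leading prose alone, which is exactly the failure mode that pushes other libraries toward retries.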

3. Problem: JSON schemas require verbose formatting with quotes and special characters, consuming excessive tokens.

Solution: BAML’s compressed schema format avoids unnecessary characters, reducing token usage by up to 4x compared to traditional JSON schemas.

Engineering problems with Agents

1. Prompts should be easy to find and read. Fundamentally they are strings like HTML, but they are often incredibly dynamic, closer to TSX. Strings scattered everywhere are horrible and not maintainable.

2. Swapping models should be easy. Models get better every week, and so should your app.

3. Agents need a hot-reload loop. When building a frontend, any code change reloads the browser; agent development should work the same way, persisting state from parent agent components across reloads.

4. Build prompts with any language (C++, Java, Ruby, etc.). Adding agentic capability should be easy and should not require a Python microservice. BAML has a configuration file format that transpiles into native Python, TypeScript, Ruby, and other languages, providing autocomplete and static analysis without additional dependencies.

5. You need tool calling and/or structured outputs. Many smaller and open-source LLMs do not support tool calling natively. BAML supports it anyway, without degrading quality, by injecting your function schemas into the prompt. According to BoundaryML's benchmarks, BAML outperforms function calling across all benchmarks for major providers.

Amongst other limitations, function-calling APIs will at times:

- Return a schema instead of an expected error

- Struggle with tools with more than 100 parameters.

- Use many more tokens than prompting.

6. Enabling agents to stream requires a different type system. If the LLM returns a T[], then while streaming we likely want a Partial<T>[]. This is even more complicated for multi-step agents.

7. No internet dependency: LLM-based apps should not require a paid SaaS to call a REST API.

#1, #3 (for faster prompt testing, since iteration speed matters!), #5, and #7 are compelling reasons to dig deeper into BAML. Reformatting the prompts is a good starting place, so that we can benefit from better token efficiency, lower latency, and faster prompt testing. My concerns are how difficult it is to integrate BAML into a production LLM pipeline and where to start to realize the benefits.
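Item 5 above (tool calling for models without native support) comes down to describing the tools inside the prompt and validating the model's answer yourself. A minimal plain-Python sketch of that idea: the `Tool` class, the `tool` discriminator field, and the rendering format are assumptions made for illustration and do not reproduce how BAML renders function schemas.

```python
import json
from dataclasses import dataclass


@dataclass
class Tool:
    name: str
    description: str
    params: dict[str, str]  # parameter name -> type name, e.g. {"query": "string"}


# Hypothetical tools, echoing the research-agent example later in this article.
TOOLS = [
    Tool("web_search", "Search the web", {"query": "string"}),
    Tool("coin_price", "Look up a crypto coin price", {"symbol": "string"}),
]


def render_tools(tools: list[Tool]) -> str:
    """Describe each tool in compact type notation so it can be pasted into a prompt."""
    lines = []
    for tool in tools:
        args = ", ".join(f"{name}: {kind}" for name, kind in tool.params.items())
        lines.append(f'{{ tool: "{tool.name}", {args} }}  // {tool.description}')
    return "Answer with exactly one of:\n" + "\n".join(lines)


def parse_call(raw: str, tools: list[Tool]) -> tuple[str, dict]:
    """Parse the model's JSON answer and validate it against the tool definitions."""
    data = json.loads(raw)
    by_name = {tool.name: tool for tool in tools}
    tool = by_name[data.pop("tool")]  # KeyError if the model invents a tool
    missing = set(tool.params) - set(data)
    if missing:
        raise ValueError(f"missing arguments: {sorted(missing)}")
    return tool.name, {k: data[k] for k in tool.params}


print(render_tools(TOOLS))
print(parse_call('{"tool": "coin_price", "symbol": "BTC"}', TOOLS))  # ('coin_price', {'symbol': 'BTC'})
```

Because the tool list is just prompt text, this works with any chat-completion model, at the cost of doing the validation on your side.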

Considerations on integrating BAML with AI Agent frameworks like LangGraph

When do you pivot away from Pydantic data models while using LangGraph? I would continue using Pydantic for graph state, but move all the prompts and LLM definitions to BAML for controlled structured output and prompt testing.

Try out BAML online, with no installs, at promptfiddle.com.

To see this integration approach in practice, I examined a real-world implementation that demonstrates how BAML and LangGraph can work together: a deep research agent built with LangGraph and BAML. It clarifies the user’s query, breaks it into sub-parts, and uses tools (web search, crypto coin price lookup) to gather information, which is then synthesized into a final answer. LangGraph manages the agentic flow of information from node to node and into states, while BAML handles the prompts, functions, and data structures for interacting with the LLM.

You have to put all prompts into BAML (rather than LangGraph/LangChain), so there will be some conversion from JSON/dicts to BAML objects and/or vice versa. The repo author used BAML for all prompts and LLM interactions, but BAML really shines on the most complex prompts, such as filtering and re-ranking results and planning the next steps. The unit tests make the expected behavior of each node easy to see. This leads to a nice separation of LLMs and prompts from the core LangGraph code (agent.py), at the cost of adding another dependency to your tech stack.
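The conversion mentioned above happens at the node boundary: a LangGraph-style node reads graph state, calls a prompt function, and returns a partial state update built from the typed result. A minimal sketch with everything stubbed so it runs without LangGraph, BAML, or an LLM: `SubQueries` and `plan_sub_queries` are hypothetical stand-ins for a BAML-generated class and function, and `TypedDict` stands in for the Pydantic state model the author recommends keeping, only to avoid third-party dependencies.

```python
from dataclasses import dataclass
from typing import TypedDict


@dataclass
class SubQueries:
    """Hypothetical stand-in for a class BAML would generate from a .baml definition."""
    questions: list[str]


def plan_sub_queries(query: str) -> SubQueries:
    """Hypothetical stub for a BAML function call.

    A real version would call the generated BAML client; here we split on ' and '
    so the example runs offline.
    """
    return SubQueries(questions=[part.strip() for part in query.split(" and ")])


class ResearchState(TypedDict, total=False):
    query: str
    sub_queries: list[str]


def plan_node(state: ResearchState) -> dict:
    """Node: read graph state, call the prompt function, return a partial update.

    The BAML object is converted to plain data before it goes back into graph state.
    """
    result = plan_sub_queries(state["query"])
    return {"sub_queries": list(result.questions)}


print(plan_node({"query": "latest BTC price and recent ETF news"}))
# {'sub_queries': ['latest BTC price', 'recent ETF news']}
```

Keeping this conversion inside each node is what lets the graph code stay unaware of how the prompts are written.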

BAML excels at writing and testing complex LLM functions faster than traditional methods. However, test suite performance needs evaluation as projects scale to hundreds of tests.

My recommendation for adoption: Start by migrating your structured-output prompts to BAML; this is where you’ll see immediate benefits from robust parsing and token efficiency. For simple prompts that don’t require structured outputs, BAML’s primary value is organizational: it reduces prompt sprawl by centralizing prompts as code, creating a clear separation between AI logic and application code while enabling reuse across projects and languages. With the move to BAML, developers will need to change how prompt tests are written and run. A test command runs all BAML function tests, which makes this easy, and you can filter tests and run them in parallel, somewhat akin to pytest or unittest. Consider whether these organizational benefits justify the learning curve for your team.

Cons of working with BAML

Limited IDE choice: BAML doesn’t have a JetBrains extension comparable to its VS Code extension, which offers:

- Real-time prompt previews

- Schema validation

- Interactive prompt execution

Learning curve for new users: You may face an initial adjustment period if you’re new to BAML and/or domain-specific languages. The documentation offers several examples, but it has a few gaps when you need to combine features, and the relevant material is spread across several different sections.

Added dependency in legacy LLM app tech stack: Integrating BAML into a current AI workflow may require quite a bit of time, depending on the choice of agentic framework and the complexity of the current pipeline. Using BAML for real-time prompt testing is efficient, but retrofitting BAML into a legacy LangGraph architecture will take longer to implement and test.

Evolving ecosystem: BAML is a new and evolving tool, so there will be frequent updates. These developments might lead to some breaking changes every now and then, so make sure to stay on top of version updates.

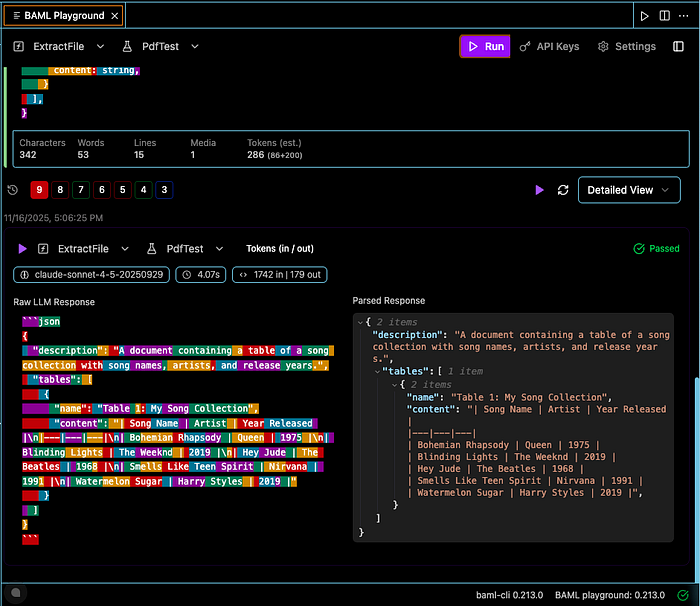

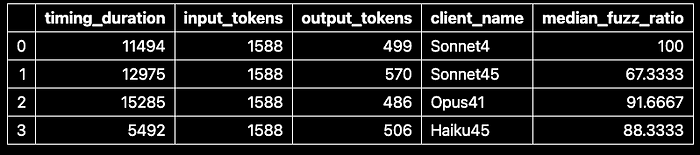

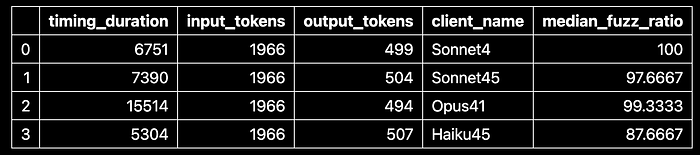

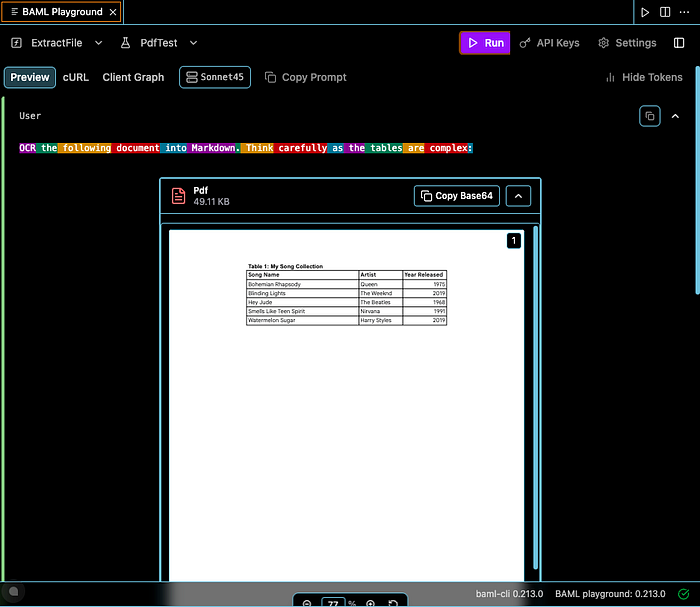

PDF Parsing Experiment: Comparing Models and Extraction Methods

Experiment Design: I tested four Claude models (Haiku-4.5, Sonnet-4.5, Sonnet-3.7, Opus-4.1) using two extraction methods (PDF-as-image vs. direct PDF input) to parse a one-page, table-heavy document into Markdown and HTML. The goal was to identify which combination optimizes for token efficiency, latency, and accuracy. Note: This is a preliminary experiment (n=1) to demonstrate BAML’s testing capabilities.

BAML provides an interactive playground, available online or by installing the VS Code extension, which is helpful for quickly iterating on prompts or LLMs. But what about automating this process once we know all of our tuning parameters (LLM, extraction method, and prompts)? In this example, I wanted an analysis that would directly report the best LLM for my use case (by extraction method, latency, or accuracy), so I wrote a small script using BAML that covers this experiment design space. I also created ground-truth versions of all tables in Markdown in order to calculate accuracy across LLMs.
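The shape of such a script is a grid over (model, extraction method) with per-run metrics. This is a minimal sketch, not the author's script: `extract` and `score` are injected callables, and the fake versions below (with made-up token counts and accuracies) exist only so the sketch runs offline. In practice `extract` would call a BAML function with a per-model client override and `score` would compare against the Markdown ground truth.

```python
import time
from itertools import product
from typing import Callable, NamedTuple


class Row(NamedTuple):
    model: str
    method: str
    total_tokens: int
    latency_s: float
    accuracy: float


def run_grid(
    extract: Callable[[str, str], tuple[str, int]],  # (model, method) -> (markdown, total tokens)
    score: Callable[[str], float],                  # markdown -> accuracy vs. ground truth
    models: list[str],
    methods: list[str],
) -> list[Row]:
    """Run every (model, method) combination once and record tokens, latency, and accuracy."""
    rows = []
    for model, method in product(models, methods):
        start = time.perf_counter()
        markdown, tokens = extract(model, method)
        rows.append(Row(model, method, tokens, time.perf_counter() - start, score(markdown)))
    return rows


def best(rows: list[Row]) -> Row:
    """Highest accuracy first; break ties with fewer tokens."""
    return max(rows, key=lambda r: (r.accuracy, -r.total_tokens))


# Fake extractor and scorer with made-up numbers so the sketch runs offline.
def fake_extract(model: str, method: str) -> tuple[str, int]:
    return f"| {model} | {method} |", (2000 if method == "image" else 2400)


def fake_score(markdown: str) -> float:
    return 0.9 if "pdf" in markdown else 0.85


rows = run_grid(fake_extract, fake_score, ["model-a", "model-b"], ["image", "pdf"])
print(len(rows), best(rows).method)  # 4 pdf
```

Changing the `best` key (for example, tokens first) is how you would encode a different trade-off, such as the token-efficiency preference in the conclusion below.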

You can find all of the Github code here.

Results

Submitting PDFs as images used fewer total tokens than sending the raw PDF content directly (on average 1,588 input + 515 output tokens vs. 1,966 input + 501 output tokens).
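Working through the averages above shows the size of the gap: the image route saves roughly 15% of total tokens, almost entirely on the input side (it actually produces slightly more output tokens).

```latex
\begin{aligned}
\text{image:} \quad & 1588 + 515 = 2103 \text{ tokens} \\
\text{direct PDF:} \quad & 1966 + 501 = 2467 \text{ tokens} \\
\text{total saving:} \quad & \frac{2467 - 2103}{2467} = \frac{364}{2467} \approx 14.8\% \\
\text{input-only saving:} \quad & \frac{1966 - 1588}{1966} = \frac{378}{1966} \approx 19.2\%
\end{aligned}
```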

However, the mean accuracy (as measured by fuzz ratio, a derivative of the Levenshtein distance metric) was much higher for direct PDF input (96%) than for PNG images (86%). Haiku-4.5 made more mistakes than Sonnet-4 with both extraction methods, which is not too surprising. Opus-4.1 was not the best performer in the Claude family: Sonnet-4 generally performed best across all models and extraction methods. Better prompt tuning, with a custom prompt for each LLM, could have led to higher scores across the models.

In conclusion, I would use Sonnet-4 for parsing PDFs via the image extraction method, because this LLM seemed to offer the best balance of low token usage and high accuracy.
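Assuming "fuzz ratio" refers to the `fuzz.ratio` score from libraries such as RapidFuzz/thefuzz, it is a normalized Indel similarity: Levenshtein restricted to insertions and deletions. A from-scratch sketch using the longest common subsequence, since Indel distance equals `len(a) + len(b) - 2 * LCS(a, b)`; the sample table strings are made up for illustration.

```python
def indel_ratio(a: str, b: str) -> float:
    """Similarity in [0, 100] based on Indel distance (insertions/deletions only).

    Indel distance = len(a) + len(b) - 2 * LCS(a, b), so the ratio
    100 * (1 - distance / (len(a) + len(b))) reduces to 200 * LCS / (len(a) + len(b)).
    """
    if not a and not b:
        return 100.0
    # Classic dynamic program for the longest common subsequence, keeping one row at a time.
    prev = [0] * (len(b) + 1)
    for ch_a in a:
        curr = [0]
        for j, ch_b in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if ch_a == ch_b else max(prev[j], curr[j - 1]))
        prev = curr
    return 200.0 * prev[-1] / (len(a) + len(b))


truth = "| Item | Qty |\n| Apples | 3 |"
parsed = "| Item | Qty |\n| Apples | 8 |"  # one wrong digit
print(round(indel_ratio(truth, parsed), 1))  # 96.6
```

Note that a single wrong digit still scores above 96, so character-level similarity can hide errors that matter in tables; a cell-level exact-match check is a useful complement.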

Being able to add the test cases to the same .baml file as the core prompt definition is nice. However, the first test always seemed to hang for some reason; once I re-tried the run on another test, it worked. Ideally, these test cases would cover the core and edge-case functionality expected from the prompt. Some other test cases that I could have added for this PDF extraction example were:

- Test PDFs with larger and/or more complex tabular formatting (e.g. more colors, ambiguous cell boundaries, etc.)

- Test PDFs with heavy text usage

- Test PDFs with embedded images (e.g. charts, graphs, or pictures) between tables/text

- Verify successful extraction of details from header and footer sections

Common Pitfalls

File Type Unions: BAML cannot accept Union types for different file inputs (e.g., image | pdf) because each file type requires different source-specific parameters. Attempting this results in the function defaulting to the first type, causing 400 errors when the other type is submitted. The solution is to create separate functions for each file type, even if they share the same prompt. The BAML Playground's ability to display the raw prompt sent to the LLM provider was essential for diagnosing this issue.

Model Iteration: I initially found iterating over different LLMs cumbersome because I couldn't pass model names directly; I was forced to set a primary model and use with_options to override it. However, the documentation shows you can pass the client parameter directly at runtime:
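On the application side, the "one function per file type" fix pairs naturally with a small dispatcher that picks the function from the file extension. A minimal sketch: `extract_tables_from_image` and `extract_tables_from_pdf` are hypothetical stubs standing in for two BAML functions that share a prompt but declare different input types, and the extension mapping is an assumption.

```python
from pathlib import Path
from typing import Callable


# Hypothetical stubs; each would call its own generated BAML function in a real project.
def extract_tables_from_image(path: Path) -> str:
    return f"image-extract:{path.name}"


def extract_tables_from_pdf(path: Path) -> str:
    return f"pdf-extract:{path.name}"


DISPATCH: dict[str, Callable[[Path], str]] = {
    ".png": extract_tables_from_image,
    ".jpg": extract_tables_from_image,
    ".jpeg": extract_tables_from_image,
    ".pdf": extract_tables_from_pdf,
}


def extract_tables(path: str) -> str:
    """Route to the function for this file type instead of one function taking image | pdf."""
    suffix = Path(path).suffix.lower()
    try:
        handler = DISPATCH[suffix]
    except KeyError:
        raise ValueError(f"unsupported file type: {suffix or '(none)'}") from None
    return handler(Path(path))


print(extract_tables("report.PDF"))  # pdf-extract:report.PDF
```

Rejecting unknown extensions up front gives a clear local error instead of a 400 from the provider.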

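from baml_client.async_client import b  # async client, because the calls below are awaited

async def extract_all(resume_text: str, complex_text: str, simple_text: str):
    # Use the default client declared for ExtractResume in the .baml file
    resume = await b.ExtractResume(resume_text)

    # Override the client at runtime; "Claude" and "GPT4Mini" must be clients defined in your .baml files
    resume_complex = await b.ExtractResume(complex_text, baml_options={"client": "Claude"})
    resume_simple = await b.ExtractResume(simple_text, baml_options={"client": "GPT4Mini"})
    return resume, resume_complex, resume_simple

# Run with: asyncio.run(extract_all(resume_text, complex_text, simple_text))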

Logging via Collector: Using the Collector was a bit opaque, as it was unclear whether I should use a separate Collector for each model. Either approach seems to work, but I opted for one Collector per model for clarity.
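The one-log-per-model choice can be sketched with a plain-Python stand-in: each model gets its own log object, so per-model summaries never need filtering by model name afterwards. `UsageLog` is hypothetical and is not BAML's `Collector` class, whose API differs; the recorded numbers are dummy values.

```python
from dataclasses import dataclass, field


@dataclass
class UsageLog:
    """Hypothetical stand-in for a per-model collector (not BAML's Collector)."""
    name: str
    calls: list[dict] = field(default_factory=list)

    def record(self, input_tokens: int, output_tokens: int, latency_ms: float) -> None:
        self.calls.append({"in": input_tokens, "out": output_tokens, "ms": latency_ms})

    def summary(self) -> dict:
        n = len(self.calls)
        return {
            "calls": n,
            "mean_total_tokens": sum(c["in"] + c["out"] for c in self.calls) / n if n else 0.0,
            "mean_latency_ms": sum(c["ms"] for c in self.calls) / n if n else 0.0,
        }


# One log per model; dummy values for illustration.
logs = {model: UsageLog(model) for model in ["model-a", "model-b"]}
logs["model-a"].record(100, 50, 800.0)
logs["model-a"].record(120, 40, 900.0)
logs["model-b"].record(100, 60, 1200.0)
print(logs["model-a"].summary())  # {'calls': 2, 'mean_total_tokens': 155.0, 'mean_latency_ms': 850.0}
```

A single shared log would work too, but every summary would then have to tag and filter calls by model.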

Summary

- Good for current open-source/local models that suffer from weak tool-calling capability, though this will change within the next few months to a year. Either way, frontier LLMs fail to produce valid structured output ~5% of the time when prompted, so if your product requires near-perfect parsing of LLM output, BAML may be the answer.

- Good for business cases that can benefit from ~50% faster and/or cheaper output at the same quality level, compared to naive prompting without BAML. It may be possible to reduce calls to larger, more expensive LLMs without sacrificing quality, leading to significant reductions in cost and latency.

- Function calling and chat methods conflict with the approaches used by LangGraph/LangChain and may not be worth switching to if you are embedded in that framework. Most of the value comes from prompt management and testing; there are no net-new features here.

- We can implement CoT prompting in various ways outside of BAML — it doesn’t really add a new capability that developers couldn’t code into their legacy process, but it makes testing more transparent.

- Scalable way to manage and test hundreds of prompts as code (rather than as strings, JSON, or YAML objects) across different LLMs. Adds rigor and structure to prompt engineering, leading to fewer errors and inconsistencies and easier debugging. You can easily use the Collector to log usage, timing, and other details of the LLM calls for informed, performance-based decision making.

- Enhanced debugging and prompt visibility for multi-language projects. BAML offers full control and visibility over the entire prompt, ensuring transparency and aiding in debugging during prompt tuning. Unlike libraries that modify prompts behind the scenes, BAML allows developers to see the exact prompt and web request, reducing unexpected model behavior and building trust in the system. This transparency has helped developers identify and resolve errors caused by hidden prompt modifications, leading to more reliable applications.

When to Adopt BAML

Adopt BAML if your application requires reliable structured outputs, you’re managing dozens of prompts across multiple models, or you need multi-language support for prompt code. The investment pays off quickly when token efficiency and parsing reliability directly impact your product quality or costs.

When to Skip BAML

Skip BAML if you’re building simple prototypes with a handful of prompts, you’re deeply embedded in LangChain/LangGraph patterns and don’t need structured outputs, or your team lacks capacity to learn a domain-specific language. For these cases, native framework tools will suffice until complexity justifies the switch.

Published via Towards AI


Note: Article content contains the views of the contributing authors and not Towards AI.