The AI Bubble Is in the Wrong Place: Why Vertical AI/Integrators Will Win the Next Decade

Last Updated on November 25, 2025 by Editorial Team

Author(s): Ina Hanninger

Originally published on Towards AI.

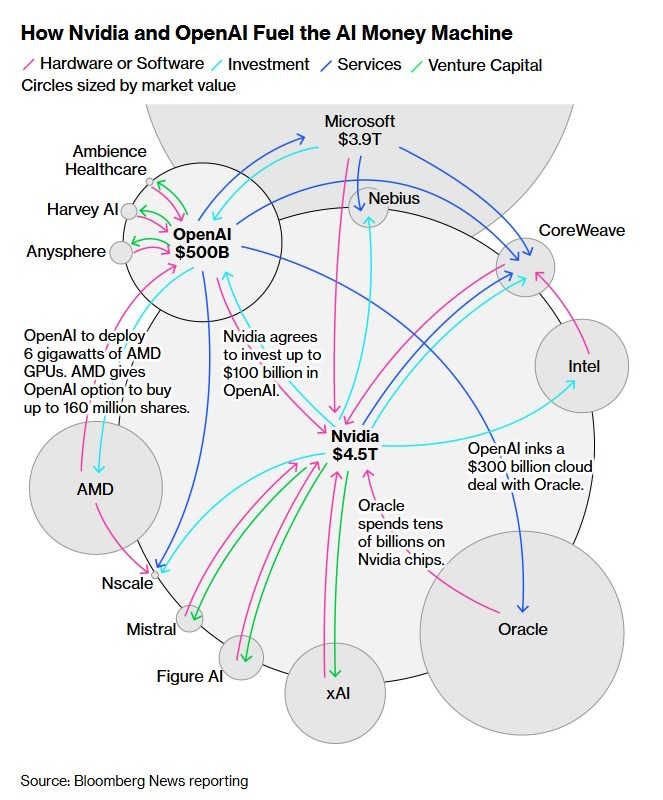

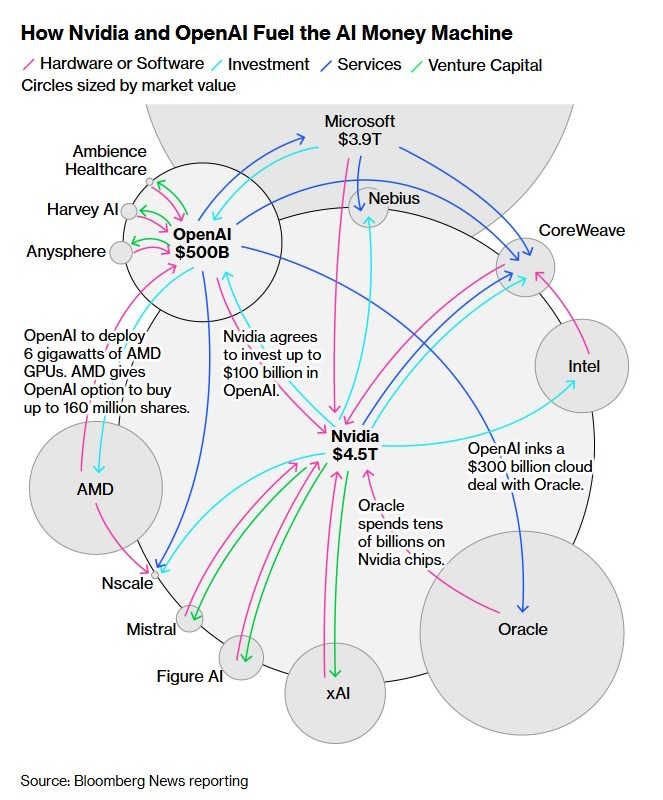

The latest AI boom has pushed valuations into territory we’ve only seen a handful of times in modern market history. At $5 trillion, Nvidia has become the most valuable company in the world, with a market capitalisation that exceeds the GDP of every country except the US and China. OpenAI is trading on private valuations that rival century-old incumbents. CAPE ratios, Buffett-style market-cap-to-GDP measures, and household equity allocation all suggest we are at or near historical extremes. And a tightly interwoven set of self-referential investments (GPU suppliers funding model labs that buy more GPUs, clouds building data centres to host models that justify the data centres) only heightens the sense of circularity.

So the question keeps arising: is this a bubble?

I think the more useful question isn’t “Is AI a bubble?”

It’s “At which layer of the AI stack is the bubble?”

In my view, AI as a technology wave is real and durable. But the distribution of value across the layers of the stack — chips, models, platforms, integrators, vertical applications — is still highly uncertain. If history is any guide, the best long-term returns may not flow to the frontier model labs or GPU providers currently attracting the most attention, but to the quieter layer above them: the companies integrating AI into real industries.

The AI stack and the surplus question

To understand where long-run value might accrue, it helps to break the AI economy into a simplified stack:

- Hardware / compute: GPUs, networking, data centres (Nvidia, AMD, hyperscalers)

- Foundation models: general-purpose GPT-style systems (OpenAI, Anthropic, DeepMind, Meta, open-source models)

- Horizontal platforms: clouds, copilots, orchestration frameworks (Azure, AWS, Google Cloud, Microsoft Copilot)

- Integrators & vertical applications: domain-specific products and services that embed AI into actual workflows (health, law, finance, policing, manufacturing, logistics, etc.)

- End-users & organisations: people and institutions whose productivity, accuracy, costs and decisions change because AI becomes part of what they do.

The central economic question is: which layer captures the surplus?

In other words, who turns AI’s technological potential into durable value and profit?

Historically, the answer has very often been: not the core technology providers. Instead, it is the companies that translate general capabilities into domain-specific, workflow-specific, socially and legally acceptable forms — the integrators and vertical AI companies — that accumulate the most resilient value.

What history actually teaches

- Telecom fibre (mid-1990s–2002): infrastructure lost, platforms won

  The fibre-optic boom of the 1990s briefly turned Lucent and Nortel into giants of the new internet economy (Lucent hit roughly $38 billion in revenue and over 150,000 employees by 1999) before they collapsed to a fraction of that size by 2006. Aggressive network build-outs and cheap capital fuelled huge expectations, but equipment vendors were quickly commoditised: prices fell, margins evaporated and many ended up in bankruptcy or forced mergers. The durable value instead flowed to the services built on top of that bandwidth: Google’s search and ads business, Amazon’s e-commerce (and later AWS), early portals like Yahoo and AOL, and broadband providers like Comcast, AT&T, Verizon, BT and NTT that monetised the connection through recurring subscriptions. The pipes were essential, but the strongest economics emerged where bandwidth was turned into distribution, advertising and recurring services.

- The personal computer era (1981–2000): hardware faded, software and ecosystems dominated

  In the PC boom that followed the IBM PC’s launch in 1981, hardware manufacturers and chip vendors captured early headlines and unit growth. Over time, though, intense competition and standardisation squeezed margins at the box level. The centre of gravity shifted upward to Microsoft and a handful of enterprise software vendors who owned the operating system, productivity suite and developer ecosystem. Windows and Office became effectively non-optional in corporate environments by the mid-1990s, while most PC hardware became interchangeable and low-margin. What mattered was control over the ecosystem and workflows, not the metal and plastic on the desk.

- Cloud computing (2006–2015): servers became commodity, SaaS captured the surplus

  The launch of AWS in 2006, followed by Azure and Google Cloud, transformed compute and storage from scarce, capex-heavy resources into on-demand utilities. Traditional on-prem server and storage vendors found themselves competing in a declining-margin, price-sensitive market, even as total compute consumption exploded. The real surplus shifted to cloud-native and SaaS businesses (Salesforce, ServiceNow, Workday and hundreds of others) that sat above raw compute and embedded themselves in billing, CRM, HR, analytics and line-of-business workflows. Customers stopped paying for hardware; they paid for outcomes wrapped in subscriptions.

- Smartphones & mobile OS (2007–present): hardware lost, platforms and apps won

  When the iPhone (2007) and Android phones first appeared, much of the market’s attention was on handset makers like Nokia, BlackBerry, HTC and Motorola. Within a decade, most of those early leaders had exited, been acquired, or become marginal as smartphones themselves commoditised. The enduring value instead accrued to Apple and Google, which controlled iOS and Android, the app stores and the developer ecosystems, and to mobile-native applications like Instagram, WhatsApp, Uber, Spotify and TikTok that turned that distribution into engagement, data and revenue. Once again, the high ground was not “who makes the device?” but “who owns the platform and the everyday workflows that sit on it?”

Across these waves, the pattern is not a rigid law but a strong tendency: the core enabling technology is transformative, yet the most durable profits accrue one layer higher — where someone turns that technology into sticky, high-value workflows and business models in specific contexts. That is exactly the question hanging over AI today.

Why integrators and vertical AI matter

In practice, the hardest problems in AI deployment are rarely “Is the model good enough?” but “Does it actually work in this environment?” Most organisations — especially in regulated or safety-critical domains — face challenges such as:

- integrating AI with legacy systems and data flows,

- satisfying compliance, auditability and professional standards,

- adapting workflows and roles,

- designing interfaces people will reliably use,

- aligning outputs with domain-specific logic, policy and regulation.

That is precisely the work done by integrators and vertical AI companies. They orchestrate models, wrap them in domain-appropriate logic, manage risk, and embed them into operations. Their value comes from a combination of:

- domain expertise,

- deep integration,

- long-tail edge-case handling,

- compliance and governance,

- and practical user experience work.

Once embedded, these systems become difficult to dislodge, provide stable recurring revenue, and often command high margins. In many real deployments — particularly in health, legal, finance and public sector contexts — improvements in model quality beyond a competent threshold matter far less than this integration itself.

If history is any guide, this is the layer where the most resilient long-run returns accumulate.

The case for the frontier — and its limits

AI does contain some genuinely novel characteristics, and it is important not to overextend historical analogies. There are plausible scenarios in which frontier model labs and GPU suppliers retain an unusually large share of economic value.

The strongest counterargument is the possibility that the application layer collapses into the model. If future models internalise vast amounts of domain knowledge and automate their own integration — generating connectors, workflows, edge-case handling and policy logic — then many vertical applications may reduce to thin interfaces over a centralised intelligence layer. In such a world, substitution risk is high, and the frontier layer may remain dominant.

A related argument is that the stack may not fragment into many specialists, but rather consolidate into a small number of generalist AI super-platforms. If Microsoft, or an alliance of cloud + model providers, successfully bundles compute, models, orchestration, safety and enterprise tooling into a unified offering, the surplus could remain concentrated at the bottom of the stack.

These scenarios deserve serious consideration. They describe a world in which the historical “integrators win” story weakens, and in which owning frontier models or GPUs yields superior long-term returns.

But here’s why they are far from guaranteed…

Why the integrator thesis still matters

Even if models continue to improve rapidly, there are several structural forces that support the integrator and vertical layer.

First, models cannot dissolve institutional, regulatory and professional constraints. In health, law, policing and social care, the hardest problems are organisational and legal, not purely technical. The NHS illustrates this vividly: government reviews repeatedly found that thousands of fax machines, pagers and decades-old EHRs remained in daily use well into the late 2010s, despite the availability of far superior technology (NHS Digital 2018; DHSC 2019). Similar issues appear across policing and justice, where the Police National Computer and other core systems are routinely described as outdated, fragile and costly just to maintain (NAO 2020; Home Office 2021). These failures persist not because software or models are inadequate, but because public institutions change only through domain-specific procurement, governance and workforce reform — work that general-purpose models in practice cannot automate.

Second, generalist platforms struggle to achieve domain depth. Large tech firms excel at horizontal tools, but their record in specialised, regulated domains is mixed. IBM Watson Health, once marketed as the future of AI-driven medicine, was ultimately sold after years of underperformance and internal restructuring (STAT News 2021). Google Health has been formed, re-formed and dismantled multiple times (The Verge 2021). Microsoft’s Amalga hospital information system was discontinued and sold to a specialist vendor after failing to gain traction (Healthcare IT News 2012). Even early deployments of Microsoft Copilot, which promised ‘general purpose intelligence’, show persistent limitations for nuanced, high-stakes tasks and require substantial human oversight (Bano et al. 2025). The consistent pattern is that horizontal excellence does not automatically translate into domain-specific product–market fit.

Third, integrators will evolve into full-stack vertical platforms. They already sit closest to end-users, hold domain-specific data, and understand the real workflows, edge cases and compliance demands of their sectors. As large models commoditise — thanks to open-weight families like LLaMA and Mistral, and increasingly accessible fine-tuning and deployment tooling — the strategic bottleneck shifts from “owning the best model” to “owning the workflow, the data and the customer”. These are precisely the assets that domain-native integrators are accumulating, positioning them to become the defensible AI incumbents of the next decade.

Even if the frontier labs and compute providers retain more value than in past cycles, that does not ensure they are attractively priced today. Much of their expected dominance is already priced into valuations that assume exceptional, sustained growth. The integrator and vertical layer is still comparatively early and often valued on more grounded assumptions.

AI isn’t the bubble — misallocation is

I believe the AI revolution is real. The technological wave is too powerful, too widely adopted, and too economically meaningful for the label “bubble” to describe the underlying phenomenon.

But bubbles are not about technologies; they are about where capital flows, and what stories investors tell themselves about who will capture the value.

If history is any guide, investors should be cautious about assuming that the frontier model labs and GPU suppliers — the most visible and spectacular parts of the stack — will hold the strongest long-term economic positions. The more robust, less crowded opportunities may sit one layer higher, in the firms that translate raw capability into workable transformations inside real institutions.

In that sense, AI is not a bubble. But portfolios that treat only the frontier providers as investable beneficiaries of AI’s rise may well be. Over the next decade, the surprise is unlikely to be that AI worked. The surprise will be which companies end up owning the gains — and history suggests that the integrators and vertical AI platforms deserve far more attention than they currently receive.

Sources / Further Reading:

- CAPE ratio near historical extremes

  YCharts, “S&P 500 Shiller CAPE Ratio (Cyclically Adjusted PE).”
  https://ycharts.com/indicators/cyclically_adjusted_pe_ratio

- Household equity allocation at record highs

  Schroders, “The case for and against US stock market exceptionalism.”
  https://www.schroders.com/en-gb/uk/institutional/insights/the-case-for-and-against-us-stock-market-exceptionalism/

- Telecom fibre boom: Lucent revenue/employee peak & collapse

  William Lazonick, “The Rise and Demise of Lucent Technologies.”
  https://core.ac.uk/download/pdf/6485780.pdf

- Telecom boom & bust dynamics (overbuild, bankruptcies)

  Federal Reserve Bank of Richmond, “Boom and Bust in Telecommunications.”
  https://www.richmondfed.org/publications/research/economic_quarterly/2003/fall/couper-hejkal-wolman

- Cloud compute shift: “From scarcity to abundance”

  K.E. Kushida, “Cloud Computing: From Scarcity to Abundance.”
  https://papers.ssrn.com/sol3/papers.cfm?abstract_id=2568049

- SaaS capturing surplus above hardware

  Lightspeed, “Welcome to the Hypersonic Innovation Cycle.”
  https://lsvp.com/stories/welcome-to-the-hypersonic-innovation-cycle-how-scale-factors-are-redefining-innovation/

- Smartphone OS consolidation (Android + iOS dominance)

  Statista, “The Smartphone Platform War Is Over.”
  https://www.statista.com/chart/4112/smartphone-platform-market-share/

- NHS reliance on fax machines & outdated tech (2018–2019)

  UK DHSC, “Health and Social Care Secretary bans fax machines in NHS.”
  https://www.gov.uk/government/news/health-and-social-care-secretary-bans-fax-machines-in-nhs

  DigitalHealth.net, “Trusts set to miss Axe the Fax deadline due to lack of progress.”
  https://www.digitalhealth.net/2019/09/trusts-set-to-miss-axe-the-fax-deadline-due-to-concerning-lack-of-progress/

- Police National Computer & legacy policing systems issues

  Police Foundation, “The Power of Information.”
  https://www.police-foundation.org.uk/wp-content/uploads/2010/10/power-of-information-FINAL.pdf

- IBM Watson Health underperformance & sale

  IBM, “Francisco Partners to Acquire IBM’s Healthcare Data and Analytics Assets.”
  https://newsroom.ibm.com/2022-01-21-Francisco-Partners-to-Acquire-IBMs-Healthcare-Data-and-Analytics-Assets

  IEEE Spectrum, “How IBM Watson Overpromised and Underdelivered on AI Health Care.”
  https://spectrum.ieee.org/how-ibm-watson-overpromised-and-underdelivered-on-ai-health-care

- Google Health repeatedly dismantled

  MobiHealthNews, “Google dismantles health division in strategy overhaul.”
  https://www.mobihealthnews.com/news/google-dismantles-health-division-strategy-overhaul

- Microsoft Amalga discontinued / sold to Caradigm

  DigitalHealth.net, “Microsoft sheds Health Solutions Group.”
  https://www.digitalhealth.net/2012/02/microsoft-sheds-health-solutions-group/

- Microsoft 365 Copilot limitations (human oversight required)

  Bano et al. (2025), “A Qualitative Study of User Perception of M365 AI Copilot.”
  https://arxiv.org/abs/2503.17661

- AI Commoditisation Curve (value moves up-stack)

  Amadeus Capital, “AI Commoditisation Curve: Where LLM Value Goes Next.”
  https://www.amadeuscapital.com/ai-commoditisation-curve/

Published via Towards AI

Note: Article content contains the views of the contributing authors and not Towards AI.