The A/B Testing Cheatsheet for Product Managers

Last Updated on December 2, 2025 by Editorial Team

Author(s): Padmaja Kulkarni

Originally published on Towards AI.

I have run experiments that crashed and burned and others that unlocked millions in revenue. The difference was not the variant or the model. It was how the experiment was set up and what the data was actually saying.

Here is the cheat sheet I use to make sure I am reading experiments the way a product manager should and asking the right questions before calling something a “win.”

I will dive deeper into each concept in my next article, but this is the high-level version I keep to make sure all the checks are in place.

🧭 1. Don’t Start With a Test. Start With a Decision.

This is the single biggest mistake I see teams make. They start with a cool idea for a test. They argue about button colors. They build a variant. They start in the wrong place. Before running any test, you must be able to answer four questions. If you can’t, stop.

- What behavior are we trying to change? (e.g., “We want new users to complete onboarding.”)

- What metric best represents it? (e.g., “Day 1 retention,” not “clicks on the ‘Next’ button.”)

- What decision will we make based on this result? (e.g., “If B wins, we roll it out. If A wins or it’s inconclusive, we kill the feature and try a new hypothesis.”)

- What’s our Minimum Detectable Effect (MDE)? (This is the most critical question. What is the smallest change that is both detectable with our traffic and worth caring about? Is a 0.5% lift even worth the engineering cost? I have seen experiments go to waste because the business and data teams were not aligned on the MDE they could realistically detect with the available sample size. As a PM, keep this question in mind.)
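To make the MDE question concrete, here is a minimal sketch of the standard sample-size approximation for comparing two conversion rates. The function name, the 10% baseline, and the defaults (5% significance level, 80% power, absolute lift) are my assumptions for illustration, not values from any particular testing tool.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_arm(baseline_rate, mde_abs, alpha=0.05, power=0.80):
    """Approximate users needed in EACH arm for a two-sided two-proportion test.

    baseline_rate: control conversion rate, e.g. 0.10 for 10%
    mde_abs: smallest absolute lift worth detecting, e.g. 0.005 for +0.5 points
    """
    p1 = baseline_rate
    p2 = baseline_rate + mde_abs
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_power = NormalDist().inv_cdf(power)          # critical value for power
    variance_sum = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_power) ** 2 * variance_sum / mde_abs ** 2)


# Hypothetical scenario: 10% baseline conversion, two candidate MDEs.
small_mde = sample_size_per_arm(0.10, 0.005)  # detect +0.5 points
large_mde = sample_size_per_arm(0.10, 0.02)   # detect +2.0 points
print(f"+0.5 points needs ~{small_mde:,} users per arm")
print(f"+2.0 points needs ~{large_mde:,} users per arm")
```

Because the required sample grows with the inverse square of the MDE, halving the effect you want to detect roughly quadruples the traffic you need. That is usually the number that forces business and data to agree before launch.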

PM Mantra: Don’t start with a test. Start with a decision. Most “failed” A/B tests fail before they even start because the MDE was a fantasy or the decision was never defined.

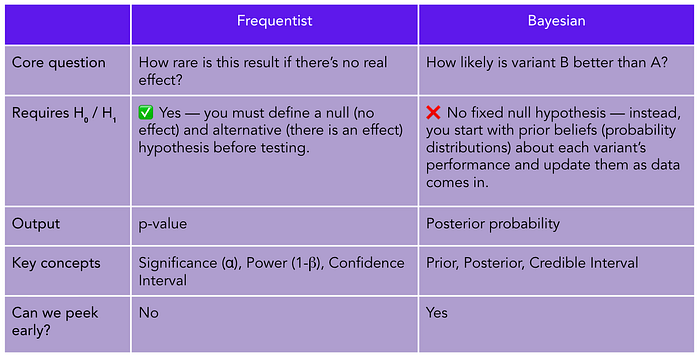

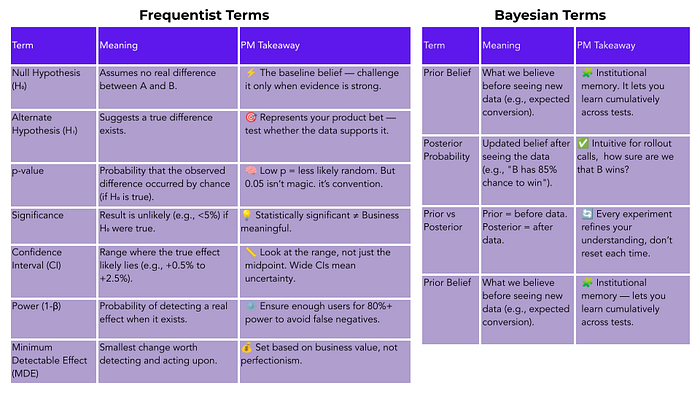

🧮 2. Speaking the Language: Frequentist vs. Bayesian

You don’t need a Ph.D. in statistics, but you must know what your testing tool is actually telling you. You’re likely using one of two “languages” to make decisions under uncertainty.

A Frequentist test gives you a p-value. This is the most misunderstood metric in all of product management. A p-value of 0.05 does not mean there is a 95% chance your variant is a winner. It means: “If the null hypothesis (that there is no difference) were true, we would see a result at least this extreme only 5% of the time due to random chance.” It’s… weird. And backward-looking.
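If it helps to see where that number comes from, here is a rough sketch of a pooled two-proportion z-test, one common way a Frequentist tool might compute a p-value for conversion rates. The function name and the visitor counts are hypothetical, and your tool may use a different test.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Under the null hypothesis both arms share one conversion rate.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Probability of a |z| at least this large if there were truly no difference.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: 10.0% vs 10.8% conversion on 10,000 users each.
z, p = two_proportion_p_value(1000, 10_000, 1080, 10_000)
print(f"z = {z:.2f}, p-value = {p:.3f}")
```

Note what the calculation conditions on: it assumes no difference and asks how surprising the data would be. Nothing in it is a probability that B is better.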

A Bayesian test gives you a posterior probability. This is what you think you’re getting. It answers the direct question: “Given the data we’ve seen, what is the probability that B is better than A?”
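As an illustration, the sketch below estimates that posterior probability with a Beta-Binomial model and simple Monte Carlo sampling. The uniform Beta(1, 1) prior, the function name, and the counts are my assumptions. Commercial Bayesian engines typically use their own priors and decision rules.

```python
import random


def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=20_000, seed=0):
    """Estimate P(rate_B > rate_A | data) under independent Beta(1, 1) priors."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    wins = 0
    for _ in range(draws):
        # Posterior for each arm is Beta(1 + conversions, 1 + non-conversions).
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a
    return wins / draws


# Hypothetical counts: 10.0% vs 10.8% conversion on 10,000 users each.
probability = prob_b_beats_a(1000, 10_000, 1080, 10_000)
print(f"P(B is better than A) ~ {probability:.1%}")
```

The output reads the way stakeholders already talk ("B is probably better"), which is why the two languages get confused so often.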

The PM Takeaway: Know your tool. Peeking at a Frequentist test and stopping it before it has reached its pre-calculated sample size inflates the false-positive rate and invalidates your results. Bayesian methods are designed to be updated as data comes in. Different languages, same goal: making better decisions.

⚖️ 3. How to Interpret Results Like a Decision Maker

The goal of a test is not to “be significant.” The goal is to be confident in your next decision. This is what separates junior PMs from senior product leaders.

Junior PMs say:

It’s significant at 95%! We won! Ship it!

Good PMs say:

We’re 95% confident that variant B increased conversion by somewhere between 1.2% and 3.4%. The uncertainty interval is fully above zero, and the low end of 1.2% still justifies the rollout. Let’s ship it for high-traffic segments first and monitor.

A “win” is not a single number. It’s a range. That range is your Uncertainty Interval (or Confidence Interval). This is your best friend.

- p-value asks: “Could random chance alone explain this effect?”

- Uncertainty Interval asks: “If it is real, how big could it be?”

That range tells you your risk. If your 95% confidence interval is -0.5% to 4.0%, you might have a win, but you also might be hurting conversion. That’s not a clear-cut decision.
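Here is a minimal sketch of reading a result as a range. It computes a normal-approximation (Wald) confidence interval for the absolute difference in conversion rates and maps it to a decision. The helper names, the counts, and the "minimum worthwhile lift" threshold are hypothetical, and I am treating lifts as absolute percentage points.

```python
from math import sqrt
from statistics import NormalDist


def lift_confidence_interval(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Wald interval for (rate_B - rate_A), returned as (low, high)."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    std_err = sqrt(rate_a * (1 - rate_a) / n_a + rate_b * (1 - rate_b) / n_b)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    diff = rate_b - rate_a
    return diff - z * std_err, diff + z * std_err


def read_interval(low, high, min_worthwhile_lift):
    """Turn an interval into a decision instead of a 'significant or not' label."""
    if low >= min_worthwhile_lift:
        return "ship"          # even the pessimistic end pays for itself
    if high <= 0:
        return "do not ship"   # the whole range is flat or negative
    return "inconclusive"      # the range still includes outcomes we would regret


# Hypothetical counts: 10.0% vs 10.8% conversion on 10,000 users each.
low, high = lift_confidence_interval(1000, 10_000, 1080, 10_000)
print(f"95% CI for lift: {low:+.2%} to {high:+.2%}")
print(read_interval(low, high, min_worthwhile_lift=0.005))
```

In this made-up example the interval straddles zero, so the honest answer is "inconclusive", even though the observed lift looks attractive on a dashboard.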

🧩 4. The Three Great Traps That Create Fake Wins

I’ve seen all of these burn teams, leading to “wins” that mysteriously disappear when measured at the macro level.

Trap 1: The Multiple-Test Contamination. When you run multiple experiments on the same users at the same time, the results contaminate each other. Worse, your false positives multiply.

The Fix: Use mutually exclusive cohorts, exclusion groups, or statistical corrections (like Bonferroni or FDR). And always, always measure against a global holdout group to see if your ten “micro-wins” actually add up to one macro impact. (Hint: they often don’t.) Large companies (like Booking or Netflix) handle this with centralized platforms that manage randomization and guardrails. Don’t rely on luck to avoid overlap; engineer around it.
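For the statistical-correction option, the sketch below applies Bonferroni and the Benjamini-Hochberg FDR procedure to a set of p-values. The function names and the five p-values are made up for illustration, and Benjamini-Hochberg in this form assumes the tests are independent or positively dependent.

```python
def bonferroni(p_values, alpha=0.05):
    """Reject only p-values below alpha divided by the number of tests."""
    threshold = alpha / len(p_values)
    return [p <= threshold for p in p_values]


def benjamini_hochberg(p_values, alpha=0.05):
    """Step-up FDR control: reject the k smallest p-values, where k is the
    largest rank whose p-value is at most (rank / m) * alpha."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    largest_passing_rank = 0
    for rank, index in enumerate(order, start=1):
        if p_values[index] <= rank / m * alpha:
            largest_passing_rank = rank
    rejected = [False] * m
    for rank, index in enumerate(order, start=1):
        if rank <= largest_passing_rank:
            rejected[index] = True
    return rejected


# Hypothetical p-values from five experiments run in the same period.
p_values = [0.001, 0.012, 0.025, 0.045, 0.20]
print("Uncorrected wins:", [p < 0.05 for p in p_values])
print("Bonferroni wins: ", bonferroni(p_values))
print("BH (FDR) wins:   ", benjamini_hochberg(p_values))
```

Bonferroni is the stricter of the two. FDR control tolerates a known share of false wins in exchange for missing fewer real ones, which is often the better trade for a team running many experiments.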

Trap 2: The Segmentation Rabbit Hole. The test comes back flat. “No effect.” Someone on the team says: “I bet it worked for new users. Or mobile users. Or users in Germany! Let’s slice the data.” This is called “p-hacking,” and it’s how you invent patterns from noise.

The Fix: Segment only when you hypothesised it up front, for example when there is a clear behavioural reason the effect might differ (e.g., mobile vs. desktop UX). Make sure the segment is large enough to have adequate statistical power on its own.
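A quick back-of-the-envelope calculation shows why unplanned slicing is dangerous. The sketch assumes the segments are independent and that the change truly has no effect anywhere. The function name is mine.

```python
def chance_of_false_win(num_segments, alpha=0.05):
    """Probability that at least one segment looks 'significant' by chance alone,
    assuming independent segments and no real effect in any of them."""
    return 1 - (1 - alpha) ** num_segments


for segments in (1, 5, 10, 20):
    print(f"{segments:>2} segments -> {chance_of_false_win(segments):.0%} chance of a fake win")
```

Slice a flat test ten ways and the odds of finding at least one "winning" segment are roughly four in ten, before anything real has happened.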

Trap 3: The “Early and Often” Peek. You’re excited. You check the test results every hour. On day two, it’s at 94% significance! The next day, 80%. The day after, 96%! You declare victory. You just invalidated your test. This is a key pitfall of Frequentist testing. By repeatedly checking, you dramatically increase your chance of seeing a false positive. You must calculate your sample size (based on MDE and Power) and wait until the test is complete.

The Fix: Don’t peek. If you can’t stop your team from peeking, use a Bayesian engine or a sequential testing method, which are built for it.
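To see the cost of peeking, this sketch simulates A/A tests (both arms identical, so every "win" is false) and compares two habits: declaring victory at the first significant look versus checking once at the planned sample size. All parameters (1,000 simulated tests, 1,000 users per arm, 10 looks, 10% conversion) and the function names are assumptions chosen to keep the simulation small.

```python
import random
from math import sqrt
from statistics import NormalDist


def is_significant(conv_a, conv_b, n, alpha=0.05):
    """Two-sided pooled z-test with n users in each arm."""
    pooled = (conv_a + conv_b) / (2 * n)
    std_err = sqrt(pooled * (1 - pooled) * 2 / n)
    if std_err == 0:
        return False  # no conversions (or all conversions) so far: nothing to test
    z = (conv_b - conv_a) / n / std_err
    return 2 * (1 - NormalDist().cdf(abs(z))) < alpha


def simulate_aa_tests(n_tests=1000, users_per_arm=1000, looks=10, rate=0.10, seed=0):
    """Return (false-positive rate with peeking, false-positive rate at final look)."""
    rng = random.Random(seed)
    step = users_per_arm // looks
    peeking_wins = final_wins = 0
    for _test in range(n_tests):
        conv_a = conv_b = 0
        ever_significant = False
        for look in range(1, looks + 1):
            for _user in range(step):
                conv_a += rng.random() < rate  # both arms share the same true rate
                conv_b += rng.random() < rate
            significant = is_significant(conv_a, conv_b, look * step)
            ever_significant = ever_significant or significant
            if look == looks and significant:
                final_wins += 1
        peeking_wins += ever_significant  # a peeker would have stopped and shipped
    return peeking_wins / n_tests, final_wins / n_tests


peeking_rate, final_rate = simulate_aa_tests()
print(f"False wins when peeking at every look: {peeking_rate:.1%}")
print(f"False wins with one final look:        {final_rate:.1%}")
```

The single final look should land near the nominal 5%, while stopping at the first significant peek pushes the false-win rate well above it. Sequential testing methods exist precisely to spend that 5% budget across multiple looks.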

🚦 5. A/B Testing Is a Tool, Not a Religion

You don’t always need a test. Sometimes, you just need a decision you can trust.

✅ A/B test when:

- Large reach / high impact

- Expensive to roll back

- Politically or financially sensitive

- You truly don’t know what will happen

- You need to quantify impact (e.g., “How much revenue uplift did this change create?”)

🚢 Ship & measure when:

- Small scope or reversible

- Obvious improvement (performance, latency)

- You’re iterating on a proven pattern

If you skip testing:

- Use canary rollouts (1% → 5% → 10% → 50%)

- Define guardrail metrics (conversion, errors, latency)

- Keep a 5–10% holdout to estimate lift retroactively

PM Mantra: Use an A/B test whenever you need a number: a measurable, causal estimate of impact rather than just a directional signal. A/B testing is a tool, not a religion. Use it when you need precision, not permission.

🧠 The Big Lesson: Statistics Don’t Make Decisions

It took me years (and a few painful rollbacks) to learn this: Statistics don’t make decisions. People do. The magic of A/B testing isn’t just in the math; it’s in the mindset of disciplined experimentation. It’s the humility to accept that your “great idea” might be wrong. It’s the curiosity to ask why a result occurred. And it’s the discipline to trust the framework over your gut. A/B testing isn’t about proving you’re right. It’s about learning what’s true.


