Running Small Language Models (SLMs) on CPUs: A Practical Guide

Last Updated on September 30, 2025 by Editorial Team

Author(s): Devi

Originally published on Towards AI.

Navigation:

- Why SLMs on CPUs are Trending

- When CPUs Make Sense

- SLMs vs LLMs: A Hybrid Strategy

- The CPU Inference Tech Stack

- Hands-On Exercise: Serving a Translation SLM on CPU with llama.cpp + EC2

Why SLMs on CPUs are Trending

Traditionally, LLM inference required expensive GPUs. But with recent advancements, CPUs are back in the game for cost-efficient, small-scale inference. Three big shifts made this possible:

- Smarter Models: SLMs are improving quickly and are purpose-built for efficiency.

- CPU-Friendly Runtimes: Frameworks like llama.cpp and vLLM, along with Intel optimizations, bring efficient model serving to CPUs.

- Quantization: Compressing models (16-bit → 8-bit → 4-bit) drastically reduces memory footprint and latency, usually with only modest accuracy loss.
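To make the quantization idea concrete, here is a toy sketch of block-wise absmax quantization in plain Python: each block of weights shares one floating-point scale, and each weight is stored as a small signed integer. This is a simplified illustration, not the actual GGUF/llama.cpp storage format; the block size of 32 and the Gaussian "weights" (standard deviation 0.02) are assumptions chosen for the example.

```python
import math
import random

def quantize_block(weights, bits):
    """Symmetric absmax quantization of one block to signed integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Round to the nearest level and clamp to [-qmax, qmax]
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale

def dequantize_block(q, scale):
    """Map the stored integers back to approximate float weights."""
    return [v * scale for v in q]

def rms_error(bits, block_size=32, n=4096, seed=0):
    """Round-trip RMS error over n random weights, quantized block by block."""
    rng = random.Random(seed)
    weights = [rng.gauss(0.0, 0.02) for _ in range(n)]  # assumed weight distribution
    sq_err = 0.0
    for start in range(0, n, block_size):
        block = weights[start:start + block_size]
        q, scale = quantize_block(block, bits)
        restored = dequantize_block(q, scale)
        sq_err += sum((a - b) ** 2 for a, b in zip(block, restored))
    return math.sqrt(sq_err / n)

if __name__ == "__main__":
    for bits in (8, 4):
        print(f"{bits}-bit: RMS round-trip error = {rms_error(bits):.6f}")
```

With 4-bit codes this scheme has only 15 levels per block (-7 to 7), so the round-trip error is noticeably larger than at 8-bit. That is the accuracy-for-footprint trade-off quantization makes.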

Sweet spots for CPU deployment:

- 8B parameter model quantized to 4-bit

- 4B parameter model quantized to 8-bit
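Both sweet spots land at roughly the same weight footprint, which a back-of-the-envelope calculation shows: weight memory ≈ parameters × bits per weight ÷ 8. This sketch ignores the KV cache, activations, and quantization scale overhead, so real memory usage will be somewhat higher.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes), ignoring all overheads."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9

for label, params, bits in [
    ("8B @ 4-bit", 8, 4),
    ("4B @ 8-bit", 4, 8),
    ("8B @ 16-bit (unquantized)", 8, 16),
]:
    print(f"{label}: ~{weight_memory_gb(params, bits):.1f} GB")
```

Both quantized configurations come out at about 4.0 GB of weights, versus about 16.0 GB for the unquantized 8B model, which is why they fit comfortably in the RAM of a modest CPU machine.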

Note on GGUF & Quantization

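If you’re working with a small language model, using GGUF makes life much easier. Instead of wrangling multiple conversion tools, the llama.cpp tooling converts and quantizes your model into one format. The result is a single, portable file (weights plus tokenizer and model metadata) that loads in any GGUF-compatible runtime, such as llama.cpp, Ollama, or LM Studio, and the quantized weights take up far less disk space.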

Unlike raw PyTorch checkpoints or Hugging Face safetensors (geared toward training and flexibility), GGUF is built for inference efficiency.
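Part of what makes GGUF self-describing is its fixed header. Per the GGUF specification, a file starts with the 4-byte magic `GGUF`, a uint32 format version, and two uint64 counts (tensors and metadata key/value pairs). The sketch below reads just that header; it assumes a little-endian file of GGUF version 2 or later, and the function names are illustrative.

```python
import struct

GGUF_MAGIC = b"GGUF"
HEADER_SIZE = 24  # magic (4) + version (4) + tensor count (8) + metadata KV count (8)

def parse_gguf_header(data: bytes) -> dict:
    """Decode the fixed GGUF header from the first bytes of a file."""
    if len(data) < HEADER_SIZE or data[:4] != GGUF_MAGIC:
        raise ValueError("not a GGUF file")
    # '<' = little-endian, I = uint32, Q = uint64
    version, tensor_count, kv_count = struct.unpack_from("<IQQ", data, 4)
    return {
        "version": version,
        "tensor_count": tensor_count,
        "metadata_kv_count": kv_count,
    }

def read_gguf_header(path: str) -> dict:
    """Read only the header bytes from a .gguf file on disk."""
    with open(path, "rb") as f:
        return parse_gguf_header(f.read(HEADER_SIZE))
```

For example, `read_gguf_header("mistral-7b-instruct-v0.2.Q4_K_M.gguf")` returns the version and counts without loading any weights. The metadata key/value pairs that follow the header hold things like the architecture and tokenizer; the `gguf` Python package from the llama.cpp repository provides a full reader if you need them.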

When CPUs Make Sense

Strengths

- Very low cost (especially on cloud CPUs like AWS Graviton).

- Great for single-user, low-throughput workloads.

- Privacy-friendly (local or edge deployment).

Limitations

- Batch size is typically 1 (not great for high parallelism).

- Smaller practical context windows (long prompts are slow to process on CPU).

- Lower throughput than GPUs.

Real-World Example: Grocery stores using SLMs on Graviton to check inventory levels, a workload with small context and low throughput where CPUs are very cost-efficient.

SLMs vs LLMs: A Hybrid Strategy

Enterprises don’t have to choose one or the other; a hybrid approach often works best:

- LLMs → abstraction tasks (summarization, sentiment analysis, knowledge extraction).

- SLMs → operational tasks (ticket classification, compliance checks, internal search).

- Integration → embed both into CRM, ERP, and HRMS systems via APIs.
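One way to wire up the hybrid strategy is a small router that sends each task type to either the SLM or the LLM endpoint. The task names mirror the lists above; the endpoint URLs and the `route_task` function are hypothetical placeholders, not part of any specific product.

```python
# Hypothetical endpoints: a local CPU-hosted SLM and a hosted LLM API.
SLM_ENDPOINT = "http://localhost:8080/v1/chat/completions"
LLM_ENDPOINT = "https://llm.example.com/v1/chat/completions"

# Task categories taken from the hybrid split described above.
SLM_TASKS = {"ticket_classification", "compliance_check", "internal_search"}
LLM_TASKS = {"summarization", "sentiment_analysis", "knowledge_extraction"}

def route_task(task_type: str) -> str:
    """Return the endpoint that should handle a given task type."""
    if task_type in SLM_TASKS:
        return SLM_ENDPOINT
    if task_type in LLM_TASKS:
        return LLM_ENDPOINT
    raise ValueError(f"Unknown task type: {task_type!r}")

print(route_task("ticket_classification"))
```

Defaulting unknown tasks to the LLM is another reasonable design; raising an error instead makes gaps in the routing table visible during integration.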

The CPU Inference Tech Stack

Here’s the ecosystem you need to know:

Inference Runtimes

In simple terms, these are the engines doing the math.

- llama.cpp (C++ CPU-first runtime, with GGUF format).

- GGML / GGUF (tensor library + model format).

- vLLM (GPU-first but CPU-capable).

- MLC LLM (portable compiler/runtime).

Local Wrappers / Launchers

In simple terms, these are the user-friendly layers on top of runtime engines.

- Ollama (CLI/API, llama.cpp under the hood).

- GPT4All (desktop app).

- LM Studio (GUI app for Hugging Face models).

Putting it all together with a Hands-On Exercise: Serving a Translation SLM on CPU with llama.cpp + EC2

A high-level 4-step process:

Step 1. Local Setup

A. Install prereqs

# System deps

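sudo apt update && sudo apt install -y git build-essential cmake libcurl4-openssl-dev python3-venv  # libcurl headers: used by llama.cpp builds for -hf model downloads

# Python deps (in a virtual environment; recent Ubuntu releases block system-wide pip installs)

python3 -m venv .venv && source .venv/bin/activate

pip install streamlit requests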

B. Build llama.cpp (if not already built)

git clone https://github.com/ggml-org/llama.cpp.git

cd llama.cpp

mkdir -p build && cd build

cmake .. -DLLAMA_BUILD_SERVER=ON

cmake --build . --config Release

cd ..

C. Run the server with a GGUF model suited to your use case (for instance, I chose Mistral-7B Q4 for our translation task):

./build/bin/llama-server -hf TheBloke/Mistral-7B-Instruct-v0.2-GGUF --port 8080

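You now have a local, OpenAI-compatible HTTP API on port 8080. On the first run, `-hf` downloads the model from Hugging Face, so startup takes a while.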
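Because the model has to download and load before the API answers, it helps to wait for the server before sending requests. Here's a minimal sketch using only the standard library; it assumes llama-server's `/health` endpoint, which returns HTTP 200 once the model is loaded (and an error status while it is still loading). The function name and default timings are illustrative.

```python
import time
import urllib.request

def wait_for_llama_server(base_url: str = "http://localhost:8080",
                          timeout_s: float = 300.0,
                          poll_interval_s: float = 2.0) -> bool:
    """Poll the /health endpoint until it returns 200 or the timeout expires."""
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        try:
            with urllib.request.urlopen(f"{base_url}/health", timeout=poll_interval_s) as resp:
                if resp.status == 200:
                    return True
        except OSError:
            # Covers connection refused (server not up yet) and HTTP errors
            # such as 503 while the model is still loading.
            pass
        time.sleep(poll_interval_s)
    return False
```

You could call `wait_for_llama_server()` from a startup script and only launch the frontend once it returns `True`.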

Our Quantized Model Details — A deeper look:

Model: mistral-7b-instruct-v0.2.Q4_K_M.gguf

Quantization Type: Q4_K_M

- Q4 = 4-bit quantization (reduced from original 16-bit)

- K_M = Medium quality K-quantization method

- Size: ~4.4GB (vs ~13–14GB for the original 16-bit weights)

Benefits of This Quantization

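- ~67–70% Size Reduction: 4.4GB vs 13–14GB original (a bit under the nominal 75%, because Q4_K_M also stores per-block scales and keeps some tensors at higher precision)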

- Faster Inference: Less memory bandwidth needed

- Good Quality: K_M provides excellent quality/size balance

- Local Deployment: Fits on consumer hardware

- No Internet Required: Runs completely offline once the model has been downloaded
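The "less memory bandwidth" point can be put into numbers with a common rule of thumb: during single-user generation on a CPU, each new token requires reading roughly all of the model weights from memory, so memory bandwidth divided by model size gives an upper bound on tokens per second. The bandwidth values below are illustrative assumptions, not measurements of any particular EC2 instance.

```python
def max_tokens_per_second(model_size_gb: float, mem_bandwidth_gb_s: float) -> float:
    """Bandwidth-bound ceiling: each generated token streams the full weights once."""
    return mem_bandwidth_gb_s / model_size_gb

FP16_SIZE_GB = 14.0   # approx. 16-bit Mistral-7B size, per the figures above
Q4_K_M_SIZE_GB = 4.4  # quantized file size quoted above

for bandwidth in (50.0, 100.0):  # assumed effective memory bandwidth in GB/s
    fp16 = max_tokens_per_second(FP16_SIZE_GB, bandwidth)
    q4 = max_tokens_per_second(Q4_K_M_SIZE_GB, bandwidth)
    print(f"{bandwidth:.0f} GB/s: 16-bit <= {fp16:.1f} tok/s, Q4_K_M <= {q4:.1f} tok/s")
```

Real throughput will be lower (compute limits, cache effects, prompt processing), but the ratio shows why a roughly 3× smaller file can generate roughly 3× faster on bandwidth-bound hardware.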

Q4_K_M Explained

- 4-bit precision instead of 16-bit (nominally 75% compression)

- K-quantization: Groups weights into blocks with their own scale factors; the _M variant keeps some sensitive tensors at higher precision

- Medium quality: Balance between size and accuracy

- GGUF format: Optimized for llama.cpp inference
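The 4.4GB figure also shows that Q4_K_M is not exactly 4 bits per weight: block scales and the higher-precision tensors in the _M mix add overhead. Dividing file size by parameter count gives the effective rate. The parameter count of about 7.24 billion for Mistral-7B is an assumption here, and the small tokenizer and metadata sections of the file are ignored.

```python
PARAMS = 7.24e9          # approx. Mistral-7B parameter count (assumption)
Q4_K_M_BYTES = 4.4e9     # quantized file size quoted above
FP16_BYTES = PARAMS * 2  # 16-bit = 2 bytes per weight

effective_bits = Q4_K_M_BYTES * 8 / PARAMS
reduction = 1 - Q4_K_M_BYTES / FP16_BYTES

print(f"Effective bits per weight: {effective_bits:.2f}")
print(f"Size reduction vs 16-bit: {reduction:.0%}")
```

This works out to roughly 4.9 bits per weight and a size reduction of about 70% relative to 16-bit weights.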

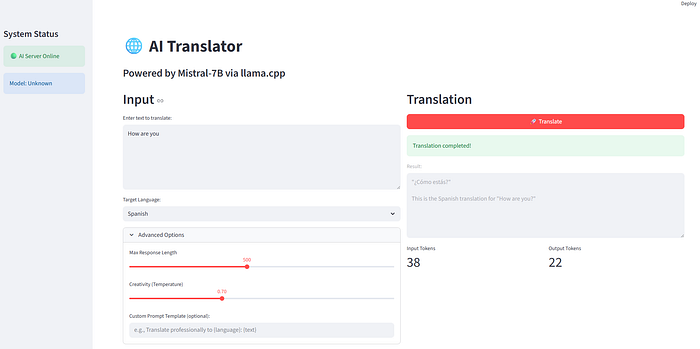

Step 2. Create a Streamlit App for the Frontend

Save as app.py:

import streamlit as st
import requests

LLAMA_SERVER_URL = "http://localhost:8080/v1/chat/completions"  # OpenAI-compatible endpoint of llama-server

st.set_page_config(page_title="SLM Translator", page_icon="🌍", layout="centered")
st.title("🌍 CPU-based SLM Translator")
st.write("Test translation with a local llama.cpp model served on CPU.")

# Inputs
source_text = st.text_area("Enter English text to translate:", "Hello, how are you today?")
target_lang = st.selectbox("Target language:", ["French", "German", "Spanish", "Tamil"])

if st.button("Translate"):
    prompt = f"Translate the following text into {target_lang}: {source_text}"
    payload = {
        "model": "mistral-7b",
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 200,
    }
    try:
        # CPU generation can be slow, so use a generous timeout instead of waiting forever
        with st.spinner("Translating on CPU..."):
            response = requests.post(LLAMA_SERVER_URL, json=payload, timeout=120)
        if response.status_code == 200:
            translation = response.json()["choices"][0]["message"]["content"]
            st.success(translation.strip())
        else:
            st.error(f"Error {response.status_code}: {response.text}")
    except requests.exceptions.RequestException as e:
        # Raised when the server is unreachable or the request times out
        st.error(f"Could not connect to llama.cpp server. Is it running?\n\n{e}")
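To test the request and response handling without launching Streamlit, the same logic can be pulled into two plain functions. This is a sketch: `build_payload` and `extract_translation` are hypothetical helper names, and the response shape assumed is the OpenAI-style `choices[0].message.content` that the app already reads.

```python
def build_payload(source_text: str, target_lang: str, max_tokens: int = 200) -> dict:
    """Build the OpenAI-style chat request the app sends to llama-server."""
    prompt = f"Translate the following text into {target_lang}: {source_text}"
    return {
        "model": "mistral-7b",
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }

def extract_translation(response_json: dict) -> str:
    """Pull the assistant message out of a chat-completions response."""
    choices = response_json.get("choices") or []
    if not choices:
        raise ValueError("response contains no choices")
    return choices[0]["message"]["content"].strip()

# A hand-written response in the expected shape, for offline testing
fake_response = {"choices": [{"message": {"role": "assistant", "content": " Bonjour ! \n"}}]}
print(build_payload("Hello", "French")["messages"][0]["content"])
print(extract_translation(fake_response))
```

Keeping these functions free of Streamlit calls means they can be covered by ordinary unit tests, while `app.py` stays a thin UI layer around them.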

Step 3. Run Locally and test out your app

1. Start llama-server in one terminal:

./build/bin/llama-server -hf TheBloke/Mistral-7B-Instruct-v0.2-GGUF --port 8080

2. Start Streamlit in another terminal:

streamlit run app.py

3. Open your browser at http://localhost:8501, enter text, and get translations.

Step 4. Deploy to AWS EC2

You have two options here: Option A (manual install) or Option B (Docker).

Option A. Simple (manual install)

- Launch an EC2 instance (Graviton or x86, with ≥16GB RAM).

- SSH in and repeat the Step 1 and 2 setup (install Python, build llama.cpp, copy app.py).

- Run:

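nohup ./build/bin/llama-server -hf TheBloke/Mistral-7B-Instruct-v0.2-GGUF --port 8080 > llama.log 2>&1 &

nohup streamlit run app.py --server.port 8501 --server.address 0.0.0.0 > streamlit.log 2>&1 &

Open http://<EC2_PUBLIC_IP>:8501 in your browser.

(Make sure the security group allows inbound traffic on port 8501. Ports below 1024, such as 80, require root privileges, so serving Streamlit directly on port 80 as a regular user will fail.)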

Option B. Docker (portable, easier)

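Build and run (this option assumes a Dockerfile in the project directory that builds llama.cpp, installs the Python dependencies, copies app.py, and starts both llama-server and Streamlit):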

docker build -t slm-translator .

docker run -p 8501:8501 -p 8080:8080 slm-translator

Then test at: http://localhost:8501 (local) or http://<EC2_PUBLIC_IP>:8501 (cloud).

With this, you get a full loop: local testing → deploy on EC2 → translation UI.



Note: Article content contains the views of the contributing authors and not Towards AI.