Recurrent Neural Networks Explained: How Neural Networks Remember What Happened Before

Last Updated on December 4, 2025 by Editorial Team

Author(s): Sai Viswanth

Originally published on Towards AI.

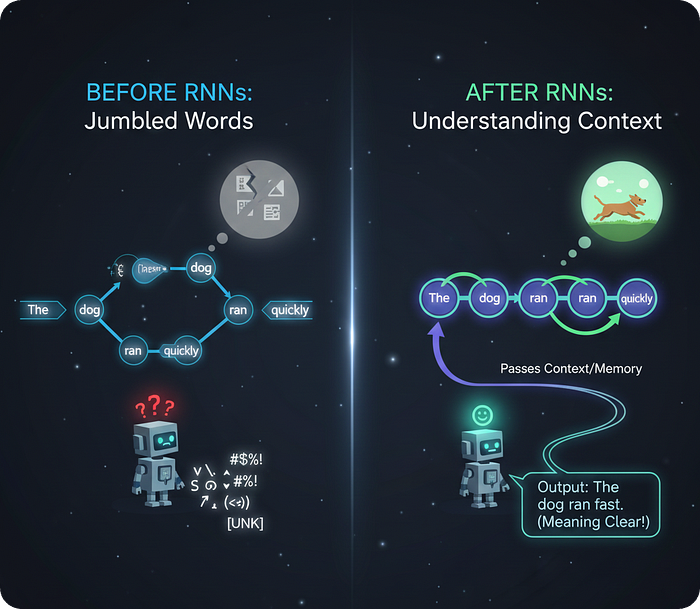

Computers began to work with the meaning of language in text rather than just its characters, and a big part of that shift was the development of Recurrent Neural Networks (RNNs) in machine learning. I am not exaggerating when I say that RNNs paved the way for today's Large Language Models and, in turn, ChatGPT-like applications.

Why

Prior to RNNs, Artificial Neural Networks (ANNs) were the first networks introduced in the deep learning space that were capable of handling huge datasets and learning complex patterns. Next in line were Convolutional Neural Networks (CNNs), which are exceptional at recognising patterns in image data while using fewer trainable parameters than ANNs.

Next, as you probably guessed, came RNNs. They became so powerful because they handle two things the earlier networks could not:

1. Sequence of Data

We say data is sequential if the present input depends on past inputs. For example, in time series applications such as the stock market, the price of a stock on the 10th day is influenced by the previous 9 days.

ANNs and CNNs cannot handle this kind of dependency, because their architecture has no built-in way to carry past data forward.

2. Dynamic Size of Input Data

The previous neural network architectures are designed in a way that they cannot handle different input sizes within a training dataset. If you have worked with a CNN, you will have stated the input size of your images, such as (244,244,3), when defining the model, and that size stays fixed throughout.

Now suppose your data consists of tweets and you want to classify each one as positive or negative. The number of words in each tweet will definitely not be constant.
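To make the variable-length point concrete, here is a minimal sketch in plain Python. The tweets, the `fold_sequence` helper and the `toy_step` function are all made up for illustration; `toy_step` is only a stand-in for a real RNN cell. The idea it shows is that one step function, applied once per word, works for any number of words.

```python
# Two hypothetical tweets with different word counts.
tweets = ["great movie", "I did not enjoy this film at all"]

def fold_sequence(tokens, step, state):
    """Apply the same step function once per token, carrying the state forward."""
    for token in tokens:
        state = step(state, token)
    return state

def toy_step(state, token):
    # Stand-in for a real RNN cell: mixes the old state with the token's length.
    return 0.5 * state + len(token)

lengths = [len(t.split()) for t in tweets]
final_states = [fold_sequence(t.split(), toy_step, 0.0) for t in tweets]

print(lengths)       # the inputs have different sizes
print(final_states)  # yet each one ends in a single final state
```

Because nothing in the loop depends on the length of the sequence, no input size has to be declared up front.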

How

Let us see how RNNs achieve the above 2 capabilities and how they work, with simple visuals and examples.

By definition, recurrent means “occurring repeatedly”. In technical terms, there is something in our neural network that repeats itself from one time step to the next.

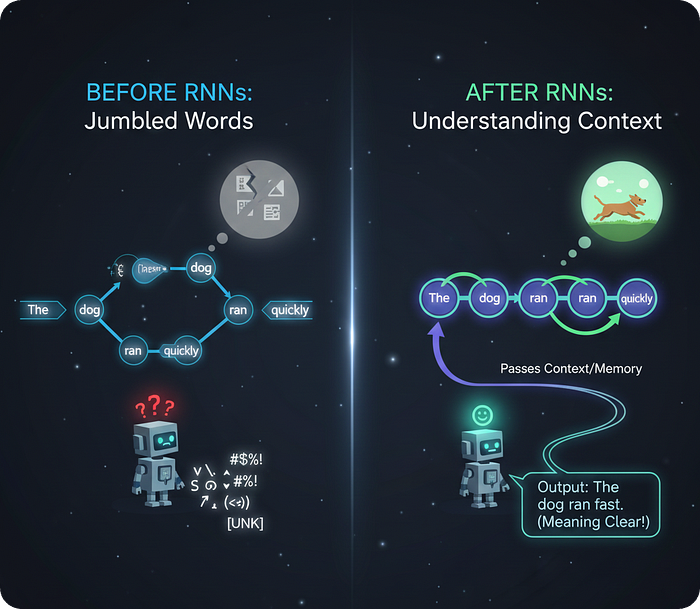

With this in mind, let us look at the basic unit of an RNN to understand it better.

The 3 terms you see in the above image are:

- X :- Input

- Y :- Prediction

- h :- Knowledge State / Hidden State

The circular loop you see in the above picture is the recurrent mechanism we just talked about. It updates the knowledge state h repeatedly, so that information is carried forward from the beginning of the sequence.

What is Knowledge State

Initially, I know that I have a maths test on Friday. Later, my professor announces that the test has been moved up to Thursday. My initial knowledge was that the test would be held on Friday, but I had to update it with the new date, otherwise I would have missed the test.

In order to update my knowledge I need 2 details :-

- Professor’s Announcement as Input

- My existing knowledge that the test is on Friday

Similarly, the knowledge state of an RNN model is like a memory. This memory changes depending on the current input and the knowledge the model carried over from the previous steps.

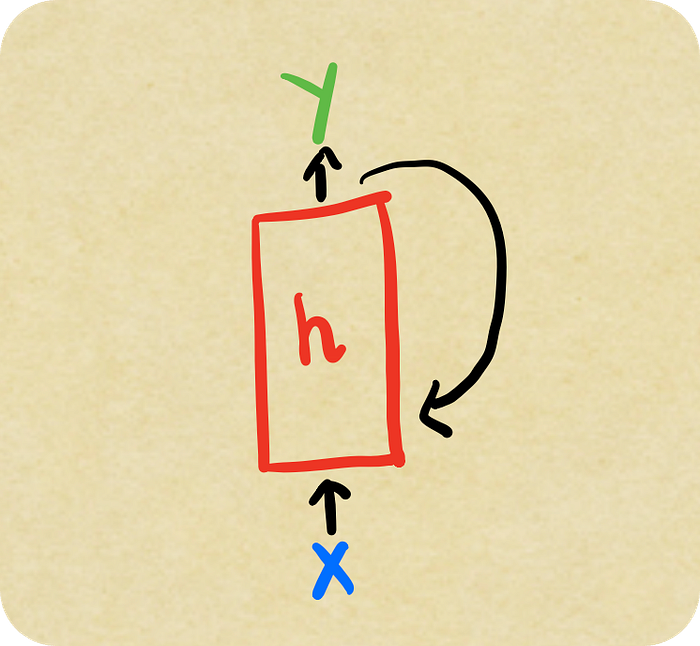

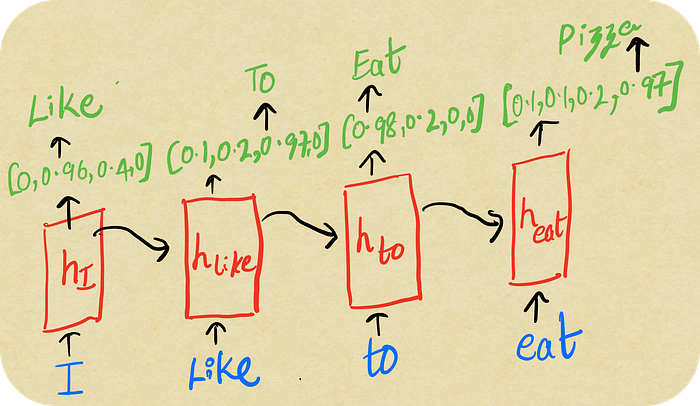

It's all good in theory, but how does the model do it? To see it in action, we will plug a simple sentence into an RNN model and go through the high-level formulae with a visual explanation.

Our end goal is to predict the word that comes after eat and, mainly, to see how that prediction depends on the words I, like, to and eat.

Sentence :- I like to eat __

Unrolling of the Network

The RNN unit takes in the input “I” and, using that input and the knowledge state h, computes the output Y. We are going to have separate weights, initialized for the inputs and for the knowledge state.

Basic Formulae

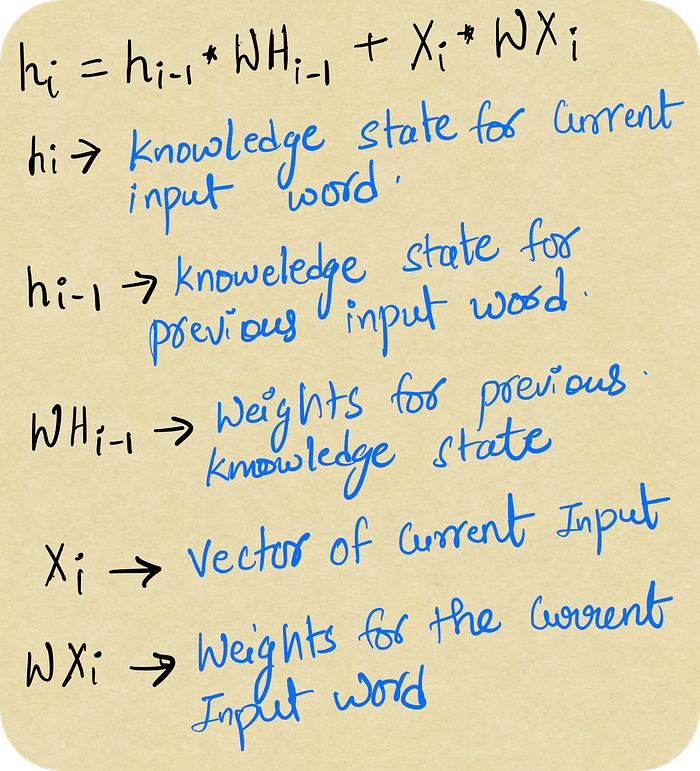

- h[i] = h[i-1] * W[h[i-1]] + X[i] * W[X[i]]

- Y = Activation_Function(h[i])

First Formula

The weighted computation updates the knowledge state.

Second Formula

The activation function outputs the word that has the highest probability out of all the words in the vocabulary.
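The two formulae can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the 2-dimensional vectors, the weight values and the helper names (`matvec`, `rnn_step`, `softmax`) are made up, and softmax is used as the activation function. It sticks to the simplified formulae of this article; standard RNN cells usually also pass the sum through a non-linearity such as tanh and reuse the same weight matrices at every step.

```python
import math

def matvec(vec, mat):
    """Row vector times matrix, returned as a plain list."""
    return [sum(v * row[j] for v, row in zip(vec, mat)) for j in range(len(mat[0]))]

def rnn_step(h_prev, x, W_h, W_x):
    """First formula: h[i] = h[i-1] * W_h + X[i] * W_x."""
    return [a + b for a, b in zip(matvec(h_prev, W_h), matvec(x, W_x))]

def softmax(values):
    """Second formula's activation: turn raw scores into probabilities."""
    exps = [math.exp(v - max(values)) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical 2-dimensional state and input with made-up weights.
W_h = [[0.5, 0.0], [0.0, 0.5]]
W_x = [[1.0, 0.0], [0.0, 1.0]]
h_prev = [0.0, 0.0]   # no prior knowledge yet
x = [1.0, 2.0]        # numeric stand-in for one word

h = rnn_step(h_prev, x, W_h, W_x)
y = softmax(h)
print(h, y)
```

With a zero previous state, the new knowledge state comes entirely from the input term, which is the situation the model is in at the first word.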

The first word “I” will be represented as numbers, since the model cannot understand raw text. Since this is the starting point, the model does not have any knowledge yet.

Because of this, the previous knowledge h[i-1] is set to zero. The current knowledge state gets updated with the word I, and the output is calculated from this current knowledge state h[I].

A softmax function is applied to the knowledge state h[I] to get the highest-probability word from a list of words. The weights for the input and the knowledge state are fine-tuned as the model trains, and with the finalised weights the word likely to be predicted will be “like”.
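As a rough illustration of "words as numbers" and of picking the highest-probability word, here is a small sketch. The five-word vocabulary, the one-hot encoding and the scores standing in for h[I] are all assumptions made up for this example; a trained model would produce its own scores and would typically use learned word vectors.

```python
import math

vocab = ["I", "like", "to", "eat", "pizza"]   # hypothetical tiny vocabulary

def one_hot(word):
    """Represent a word as a vector with a single 1 at its vocabulary index."""
    return [1.0 if w == word else 0.0 for w in vocab]

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    exps = [math.exp(s - max(scores)) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

x_I = one_hot("I")                    # numeric form of the first word

# Made-up scores standing in for the knowledge state h[I], one per vocabulary word.
scores = [0.1, 2.0, 0.3, 0.2, 0.4]
probs = softmax(scores)
predicted = vocab[probs.index(max(probs))]

print(x_I)
print(predicted)
```

The word with the largest score also gets the largest probability, so the prediction is simply the vocabulary entry at that position.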

Magic Begins

The next word in our sentence is “like”. Similar to I, it will be transformed into a bunch of numbers and used to calculate the knowledge state h[like]. The process that takes place here is where the magic happens.

We give the input “like” and the previous knowledge state h[I] to update the knowledge state. From the formula below, the knowledge state used to calculate the output for the input “like” is indirectly influenced by the previous input “I” via h[I].

Basically, the model might have learnt that the word I is generic and that many different words can come after it, so this acquired information is used to update the knowledge state for like.

h[like] = h[I] * hW[I] + X[like] * XW[like]

This simple idea gave birth to sequence networks.

Using the above formula, the knowledge state is updated and a softmax function is applied for the prediction.

We repeat this until the last word “eat”, and pause there to look at what the knowledge state for eat looks like.

Equations, Working Backwards

- h[eat] = h[to] * hW[to] + X[eat] * XW[eat]

- h[to] = h[like] * hW[like] + X[to] * XW[to]

- h[like] = h[I] * hW[I] + X[like] * XW[like]

- h[I] = X[I] * XW[I]

When we go backwards through the equations, the prediction for the input eat has the term h[to] in the first equation. In turn, h[to] was calculated using the input “to” and the knowledge state h[like], and h[like] was computed from the input “like” and h[I]. So h[eat] depends on all of the words I, like, to and eat, which is really important for the prediction.

There is a high chance that the word “like” influences the word that comes after eat. Personally, for me it would be pizza, as it is my favourite food at any time, but if burger follows eat more often in the data fed to the network, then the prediction can be burger.
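The chain of dependencies can be checked with a toy unrolling. Everything numeric here is an assumption for illustration: each word gets a made-up scalar code, the knowledge state is a single number, and the two weights `W_H` and `W_X` are shared across all steps, as in standard RNNs. Swapping “like” for another word changes the final state for eat, even though the last two inputs stay the same.

```python
# Hypothetical numeric codes for each word; a real model would use learned vectors.
codes = {"I": 1.0, "like": 2.0, "hate": -2.0, "to": 3.0, "eat": 4.0}
W_H, W_X = 0.5, 1.0   # made-up scalar weights, shared across all steps

def unroll(words):
    """Return the knowledge state after each word, starting from h = 0."""
    h, states = 0.0, []
    for word in words:
        h = h * W_H + codes[word] * W_X
        states.append(h)
    return states

states_like = unroll(["I", "like", "to", "eat"])
states_hate = unroll(["I", "hate", "to", "eat"])

print(states_like)  # [1.0, 2.5, 4.25, 6.125]
print(states_hate)  # [1.0, -1.5, 2.25, 5.125]
```

The first state is identical in both runs, and every state after the changed word differs, which is the backward dependency the equations describe.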

What you have Learnt

- Applications involving sequential data led to the rise in adoption of Recurrent Neural Networks.

- The key architectural point of RNNs is that the knowledge gained from past inputs can be passed on to the present input for predictions.

- High-level formulae show how RNNs pass information through the network over time.

Main Sources I Used

- Andrej Karpathy explained the power of RNNs :- https://karpathy.github.io/2015/05/21/rnn-effectiveness/

- Basics of RNN by StatQuest :- https://www.youtube.com/watch?v=AsNTP8Kwu8
