More than Chat: Fixing Multilingual Search Queries with LoRA finetuning on Apple Silicon

Last Updated on December 2, 2025 by Editorial Team

Author(s): Sreejith Sreejayan

Originally published on Towards AI.

I recently read a fantastic engineering article from Zepto (one of India’s fastest-growing quick-commerce apps) about a difficult search problem.

In India, people don’t just search in English. They search in a mix of languages, often typing Hindi or Tamil words using English letters. A user might type “dahi” (curd) or “kothimbir” (coriander). To make things harder, they often misspell these words.

Zepto solved this by using Llama 3 (8B), a large language model, combined with RAG (Retrieval Augmented Generation). In simple terms, they look up relevant products first, then ask the AI to pick the right one.

It works well, but it comes with costs:

- Latency: RAG requires searching a database before the AI even starts thinking.

- Compute: Running an 8-billion parameter model in the cloud is expensive.

I was truly inspired by their post. It showed a real-world use case for LLMs beyond just “chatbots.” But it got me thinking: Can we solve this problem faster, cheaper, and entirely on a laptop?

I decided to fine-tune a smaller model, Gemma 3 (4B), specifically for this task on an Apple MacBook Air M4. The results were surprising: token generation ran roughly 3x faster than the typical figures for 7B or 8B models on consumer hardware, with no RAG system in the loop.

Here is how I built it.

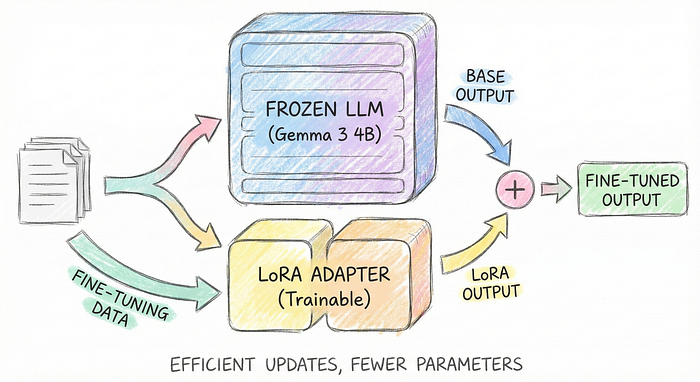

The Strategy: Small Language Models (SLMs) & LoRA

Instead of using a general-purpose giant model that “looks up” answers (Zepto’s approach), I wanted to train a smaller model to “memorize” the translation patterns.

To do this, I chose two specific tools:

- Gemma 3 (4B): This is Google’s latest open model. It is half the size of Llama 3 (8B), which makes it much faster. Crucially, Gemma is excellent at multilingual tasks, making it perfect for understanding “Manglish” (Malayalam + English) or “Tanglish” (Tamil + English).

- MLX & QLoRA:

  - MLX is a framework designed by Apple to run AI efficiently on Mac chips.

  - QLoRA (Quantized Low-Rank Adaptation) is a training technique. Instead of retraining the whole “brain” of the AI (which takes massive supercomputers), we freeze the brain, keep it in a compressed 4-bit form, and train only small low-rank “adapter” matrices attached to its layers.

This combination allows us to fine-tune a powerful AI on a standard MacBook Air.
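To make the adapter idea concrete, here is a minimal pure-Python sketch of a LoRA-style layer. The matrix sizes, the `scale` factor, and the helper names are illustrative assumptions, not values taken from Gemma or MLX, and quantization of the frozen weights is left out.

```python
import random

def matvec(M, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, x)) for row in M]

def lora_forward(W, A, B, x, scale=1.0):
    """Compute y = W x + scale * B (A x). W stays frozen; only A and B train."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    return [b + scale * u for b, u in zip(base, update)]

# Hypothetical toy sizes, chosen only for illustration
d_in, d_out, rank = 8, 8, 2
rng = random.Random(0)
W = [[rng.gauss(0, 1) for _ in range(d_in)] for _ in range(d_out)]    # frozen
A = [[rng.gauss(0, 0.01) for _ in range(d_in)] for _ in range(rank)]  # trainable
B = [[0.0] * rank for _ in range(d_out)]                              # trainable, starts at zero

x = [1.0] * d_in
print("frozen weights:   ", d_in * d_out)
print("trainable weights:", rank * (d_in + d_out))
print("output unchanged before training:", lora_forward(W, A, B, x) == matvec(W, x))
```

Because `B` starts at zero, the adapter contributes nothing until training moves it, so the fine-tuned model begins from exactly the base model's behavior.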

Step 1: Creating the “Bad” Data

Since I don’t have access to Zepto’s real user search logs, I had to simulate them. I used Gemini 3 Pro to generate synthetic training data.

Note: Synthetic data is never as good as real production data. If you have actual search logs where users corrected their own typos (e.g., searched “banan”, then searched “banana”), that data is gold. But for this experiment, synthetic data works well enough to prove the concept.
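If you do have such logs, one simple way to mine them is to pair consecutive queries from the same user that arrive close together and look alike. The sketch below assumes a hypothetical log schema of `(user_id, timestamp_seconds, query)` tuples; the 30-second window and 0.8 similarity threshold are arbitrary starting points, not tuned values.

```python
from difflib import SequenceMatcher

def mine_correction_pairs(events, max_gap_s=30, min_similarity=0.8):
    """Return (typo, fix) pairs from consecutive queries by the same user.

    `events` must be sorted by user and then by time. The schema is hypothetical.
    """
    pairs = []
    for prev, curr in zip(events, events[1:]):
        same_user = prev[0] == curr[0]
        quick_retry = 0 <= curr[1] - prev[1] <= max_gap_s
        similarity = SequenceMatcher(None, prev[2], curr[2]).ratio()
        if same_user and quick_retry and prev[2] != curr[2] and similarity >= min_similarity:
            pairs.append((prev[2], curr[2]))
    return pairs

log = [
    ("u1", 0, "banan"),
    ("u1", 5, "banana"),   # quick retry with a near-identical query: likely a fix
    ("u1", 300, "milk"),   # much later and unrelated: ignored
    ("u2", 10, "dahi"),    # different user: ignored
]
print(mine_correction_pairs(log))
```

String similarity only catches spelling fixes. Cross-language pairs such as “dahi” and “curd” share no characters, so they would need a different signal, for example which product the user finally clicked.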

I needed the model to learn a specific behavior: Take a messy input and return a clean JSON output.

I created a script to generate pairs like this:

- User Input: “nandni dahi packet” (Brand + Hindi word for curd)

- Target Output: {"corrected": "Nandini Curd"}

Here is the Python function I used to format the data exactly how Gemma expects it:

    import json

    def create_chat_message(typo, correct_name):
        """Build one training example in Gemma's chat-turn format."""
        # We force the model to output JSON so it can be parsed by code later
        target_json = json.dumps({"corrected": correct_name}, ensure_ascii=False)

        # We format this exactly how Gemma expects conversation data
        full_prompt_text = (
            "<start_of_turn>user\n"
            "You are a specialized Multilingual Query Corrector. "
            "Map the query to the correct Standard Product Name in JSON format.\n\n"
            f"User Query: {typo}<end_of_turn>\n"
            "<start_of_turn>model\n"
            f"{target_json}<end_of_turn>"
        )
        return {"text": full_prompt_text}

I generated hundreds of these pairs, split them into training and validation sets, and saved them as `.jsonl` files.
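The split-and-save step can be sketched as follows. The file names `train.jsonl` and `valid.jsonl`, the 10% validation share, and the fixed seed are assumptions for illustration; check which names your training script looks for. The rows here are placeholders standing in for the dictionaries returned by `create_chat_message`.

```python
import json
import random
import tempfile
from pathlib import Path

def write_splits(examples, out_dir, valid_fraction=0.1, seed=0):
    """Shuffle examples, split them, and write one JSON object per line."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_valid = max(1, int(len(shuffled) * valid_fraction))
    splits = {"valid": shuffled[:n_valid], "train": shuffled[n_valid:]}

    out_path = Path(out_dir)
    out_path.mkdir(parents=True, exist_ok=True)
    for name, rows in splits.items():
        with open(out_path / f"{name}.jsonl", "w", encoding="utf-8") as f:
            for row in rows:
                # ensure_ascii=False keeps non-English text readable in the file
                f.write(json.dumps(row, ensure_ascii=False) + "\n")
    return {name: len(rows) for name, rows in splits.items()}

# Placeholder rows; real ones would come from create_chat_message(...)
examples = [{"text": f"example {i}"} for i in range(20)]
out_dir = tempfile.mkdtemp()
counts = write_splits(examples, out_dir)
print(counts, "written to", out_dir)
```

Shuffling before the split matters when the generator emits pairs grouped by product or language; otherwise the validation set could end up covering only one category.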

Step 2: The Fine-Tuning Process

To run this on a Mac, I used the MLX library.

The best place to find models for this is the HuggingFace MLX Community. They have pre-converted versions of almost every popular open-source model. If you can’t find the model you want, you can convert it yourself using the script `python scripts/convert.py --hf-path <model_path> -q` from the MLX repo.

I selected the 4-bit quantized version of Gemma. “Quantized” means the model’s weights are stored at lower numeric precision (4 bits each here), which saves memory and makes the model run much faster, usually with only a small loss in quality.
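As a rough illustration of what 4-bit storage means, the sketch below maps a small group of weights onto 16 integer levels and back. Real 4-bit schemes differ in detail (group sizes, how scales are stored, quantization-aware training), so treat this as the general idea under simplified assumptions rather than what MLX does internally. The weight values are made up.

```python
def quantize_4bit(weights):
    """Affine-quantize a group of floats to integer codes in 0..15."""
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / 15 or 1.0  # 16 levels; fall back to 1.0 if all weights are equal
    codes = [round((w - lo) / scale) for w in weights]
    return codes, scale, lo

def dequantize_4bit(codes, scale, lo):
    """Recover approximate floats from the 4-bit codes."""
    return [lo + c * scale for c in codes]

group = [0.12, -0.40, 0.33, 0.05, -0.18, 0.27, -0.02, 0.41]  # made-up weights
codes, scale, lo = quantize_4bit(group)
restored = dequantize_4bit(codes, scale, lo)
max_error = max(abs(a - b) for a, b in zip(group, restored))

print("codes:", codes)
print(f"max rounding error: {max_error:.4f} (step size {scale:.4f})")
```

Each weight now needs 4 bits plus a shared scale and offset per group, and the rounding error is bounded by half a step.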

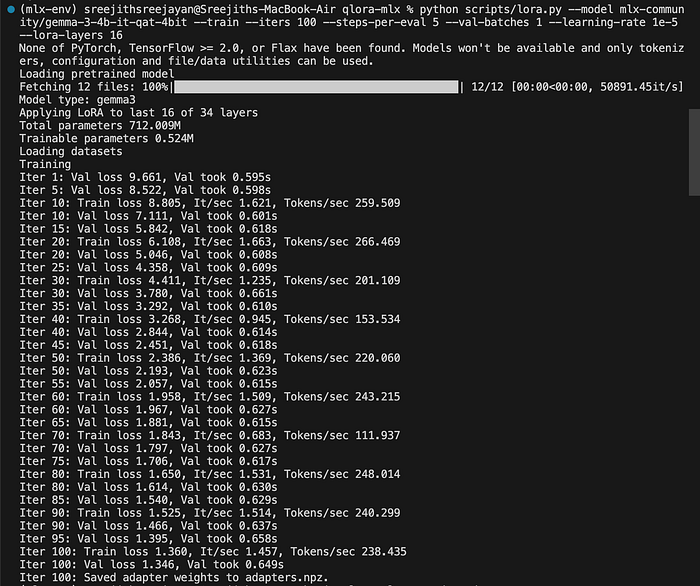

Here is the command I ran in my terminal:

    python scripts/lora.py \
      --model mlx-community/gemma-3-4b-it-qat-4bit \
      --train \
      --iters 300 \
      --steps-per-eval 5 \
      --learning-rate 1e-5 \
      --lora-layers 16

What is happening here?

- `--iters 300`: Training runs for 300 iterations, each one an update on a batch of examples.

- `--lora-layers 16`: LoRA adapters are attached only to the last 16 layers of the model, so only those layers are adapted.

- Hyperparameters: You can also tweak `--rank`. The rank determines the size of the “adapter” we are training. A higher rank (e.g., 16 or 32) allows the model to learn more complex nuances but requires more memory. For simple spelling correction, the default rank is usually sufficient.
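The memory cost of a higher rank is easy to estimate: a rank-r adapter on a weight matrix of shape d_out x d_in adds r x (d_in + d_out) trainable values. The sketch below uses a hypothetical layer width of 2048, not Gemma's real dimensions, purely to show how the count scales.

```python
def lora_trainable_params(d_in, d_out, rank):
    """A rank-r adapter adds an (r x d_in) matrix and a (d_out x r) matrix."""
    return rank * (d_in + d_out)

d_model = 2048  # hypothetical layer width, not Gemma's actual size
frozen = d_model * d_model

for rank in (4, 8, 16, 32):
    added = lora_trainable_params(d_model, d_model, rank)
    print(f"rank={rank:>2}: {added:>7,} trainable vs {frozen:,} frozen ({added / frozen:.2%})")
```

The trainable count grows linearly with rank, so doubling the rank doubles adapter memory while the frozen base stays the same size.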

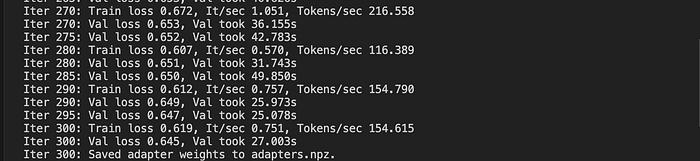

The Training Run

Watching the logs on my M4 Air was satisfying.

- Start: The model was confused. The “Loss” (a measure of how wrong the model’s predictions are; lower is better) was high, around 8.5.

- Middle: By iteration 100, the loss dropped to 1.3. It was starting to understand the task.

- End: By iteration 300, the loss was down to 0.64. The model had effectively learned the mapping.
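One way to read these numbers is to convert them to perplexity, which is roughly the number of equally likely tokens the model is choosing between at each step. This assumes the logged loss is the mean per-token cross-entropy in nats, which is common for language-model training but worth confirming for your script.

```python
import math

def perplexity(loss):
    """Convert mean per-token cross-entropy (in nats) to perplexity."""
    return math.exp(loss)

for step, loss in [("start", 8.5), ("iter 100", 1.3), ("iter 300", 0.64)]:
    print(f"{step:>8}: loss={loss:<4} perplexity={perplexity(loss):,.2f}")
```

Under that assumption, the model went from guessing among thousands of candidate tokens to hesitating between about two, which fits a narrow task with a fixed output format.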

The entire process took just under an hour and consumed very little power.

Step 3: The Results

The most impressive part wasn’t just that it worked; it was how fast it worked.

When running inference (asking the model to correct a word), typical 7B or 8B models on consumer hardware might give you 60 to 80 tokens per second.

Because Gemma 3 is smaller (4B) and running on the optimized MLX framework, I was seeing speeds of 200 to 240 tokens per second. That is effectively instant for a user waiting for search results.
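To see what those speeds mean for a single search, here is a back-of-the-envelope calculation. The 12-token answer length is an assumption for a short JSON reply; measure it with the real tokenizer. The figure covers generation only and ignores the time spent reading the prompt.

```python
def generation_latency_ms(output_tokens, tokens_per_second):
    """Time to generate the answer, ignoring prompt processing."""
    return 1000 * output_tokens / tokens_per_second

output_tokens = 12  # assumed length of a short JSON answer

for tps in (60, 80, 200, 240):
    ms = generation_latency_ms(output_tokens, tps)
    print(f"{tps:>3} tokens/s -> {ms:.0f} ms to generate {output_tokens} tokens")
```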

Here are actual outputs from the fine-tuned model:

Test 1:

User Query: “nandni dahi packet”

Model Output: {"corrected": "Nandini Curd"}

Test 2:

User Query: “kothimbir bunch”

Model Output: {"corrected": "Coriander"}

It successfully translated the Marathi word “kothimbir” to the English catalog item “Coriander” and formatted it as valid JSON.
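In a search service, the raw model text still has to be turned into a product name safely. The sketch below shows one possible wrapper: it builds the inference prompt with the same template used for training, then parses the reply and falls back to the user's original query if the JSON is missing or malformed. The function names and the fallback policy are assumptions, not part of the original experiment.

```python
import json

INSTRUCTION = (
    "You are a specialized Multilingual Query Corrector. "
    "Map the query to the correct Standard Product Name in JSON format.\n\n"
)

def build_inference_prompt(query):
    """Same template as training, but stop right after opening the model turn."""
    return (
        "<start_of_turn>user\n"
        f"{INSTRUCTION}User Query: {query}<end_of_turn>\n"
        "<start_of_turn>model\n"
    )

def parse_correction(raw_output, fallback):
    """Return the corrected name, or the fallback if the output is unusable."""
    text = raw_output.split("<end_of_turn>")[0].strip()
    try:
        value = json.loads(text).get("corrected")
    except (json.JSONDecodeError, AttributeError):
        return fallback  # not JSON, or JSON that is not an object
    return value if isinstance(value, str) and value else fallback

print(parse_correction('{"corrected": "Coriander"}<end_of_turn>', "kothimbir bunch"))
print(parse_correction("Sure! I think you mean coriander.", "kothimbir bunch"))
```

Falling back to the original query means a bad generation degrades to ordinary search instead of returning an error.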

Why This Matters

On platforms like quick commerce or food delivery, a slight difference in search quality can make a night-and-day difference to conversion rates. If a user can’t find “curd” because they typed “dahi,” you lose a sale.

Zepto’s approach with RAG is powerful because it can handle a catalog that changes every minute. However, my experiment suggests a different lesson for engineering teams:

- Speed Wins: Removing the RAG lookup takes a database round trip out of the request path, and the smaller model generates tokens about 3x faster than the typical 7B or 8B figures above.

- Edge AI is Real: This model runs efficiently on a MacBook Air. This implies it could likely run directly on a high-end phone or a local edge server, removing cloud GPU costs entirely.

- Specialization > Generalization: You don’t always need a massive “brain” like GPT-4 or Llama 3. A smaller brain, trained specifically on your data (like grocery items), can outperform a genius generalist.

Conclusion

This was a fun experiment to see how far we can push local hardware with the latest Small Language Models. While this isn’t a production-ready system yet (it needs real data and more rigorous testing), it shows that the barrier to entry for high-performance AI is lower than ever.

I’m still learning and optimizing this workflow. If you have suggestions on how to improve the LoRA parameters or data pipeline, I’d love to hear them!

You can find the code and the dataset generation scripts in my GitHub repo here: [Link to GitHub Repo]

