LEANN: Making Vector Search Work on Small Devices

Last Updated on September 9, 2025 by Editorial Team

Author(s): Edgar Bermudez

Originally published on Towards AI.

Introduction: Why does vector search need to go local?

As AI tools continue to evolve, many of the applications we use daily, like recommendation engines, image search, and chat-based assistants, rely on vector search. This technique lets machines find “similar” things quickly, whether that’s related documents, nearby images, or contextually relevant responses. But here’s the catch: most of this happens in the cloud, because storing and querying high-dimensional vector data is computationally expensive and storage-heavy.

This poses two major problems.

First, privacy: cloud-based AI search often requires sending personal data to remote servers. Second, accessibility: people with limited connectivity or working on edge devices can’t take full advantage of these powerful tools. Wouldn’t it be useful if your phone or laptop could do this locally, without sending data elsewhere?

That’s the challenge tackled in a new paper titled “LEANN: A Low-Storage Vector Index” by Wang et al. (2025). The authors present a method that makes fast, accurate, and memory-efficient vector search possible on small, resource-constrained devices without relying on cloud infrastructure.

The Basics: What is vector search, and what is HNSW?

To understand the contribution of LEANN, we need to unpack two core ideas: vector search and HNSW indexing.

In vector search, data items (like text, images, or audio) are turned into vectors, essentially long lists of numbers that capture the meaning or features of each item. Finding similar items becomes a matter of measuring distances between these vectors. But when you’re dealing with millions of them, brute-force comparison is too slow. That’s where approximate nearest neighbor (ANN) algorithms come in.
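
To make the baseline concrete, here is a minimal sketch of the brute-force approach that ANN algorithms try to avoid. The three-dimensional vectors and the helper name `brute_force_search` are made up for illustration; real embeddings typically have hundreds of dimensions.

```python
import math

def brute_force_search(query, vectors, k=2):
    """Return indices of the k vectors closest to query (Euclidean distance)."""
    # One distance computation per stored vector: cost grows linearly with the dataset.
    scored = [(math.dist(query, vec), idx) for idx, vec in enumerate(vectors)]
    scored.sort()
    return [idx for _, idx in scored[:k]]

# Hypothetical 3-dimensional "embeddings" for four items
vectors = [
    [0.9, 0.1, 0.0],  # item 0
    [0.8, 0.2, 0.1],  # item 1
    [0.0, 1.0, 0.0],  # item 2
    [0.1, 0.0, 0.9],  # item 3
]
query = [1.0, 0.0, 0.0]
print(brute_force_search(query, vectors, k=2))  # [0, 1]
```

Every query touches every vector, which is fine for four items and far too slow for millions.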

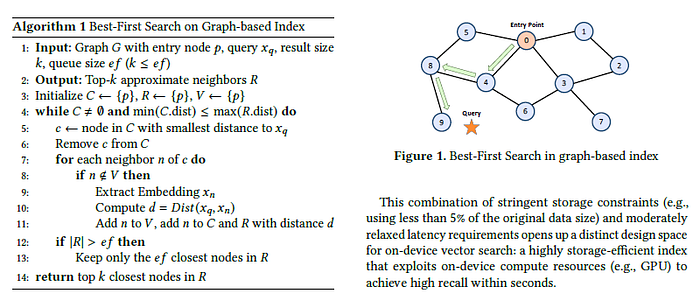

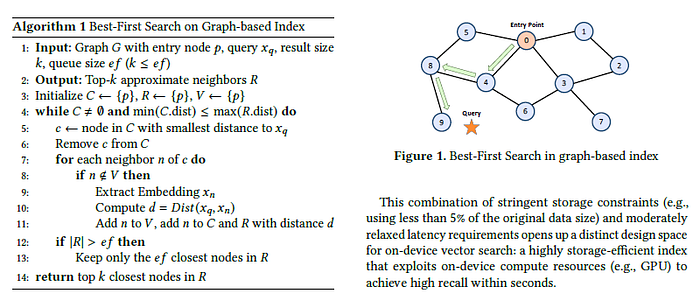

One of the most popular ANN algorithms is HNSW, or Hierarchical Navigable Small World. You can think of it like a friendship network: each data point (or “node”) has links to some others. To find a close match, you start at an entry point and “walk” through its neighbors, hopping across the network until no neighbor is closer to your query. HNSW is fast and accurate, but it’s also storage-intensive: it requires you to store both the index graph (all those connections) and the original data vectors, which adds up quickly.

This makes HNSW impractical for mobile or embedded devices where memory is limited.
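
The “walk through the neighbors” idea can be sketched in a few lines. This is a simplified, single-layer greedy walk on a hand-made toy graph, not the full HNSW algorithm, which adds a hierarchy of layers and keeps a list of candidates instead of a single current node. The function name `greedy_walk` and the data are assumptions for illustration.

```python
import math

def greedy_walk(query, vectors, neighbors, entry):
    """Hop to the neighbor closest to the query until no neighbor improves."""
    current = entry
    current_dist = math.dist(query, vectors[current])
    while True:
        best, best_dist = current, current_dist
        for nb in neighbors[current]:
            d = math.dist(query, vectors[nb])
            if d < best_dist:
                best, best_dist = nb, d
        if best == current:
            # Local minimum: no linked node is closer to the query.
            return current, current_dist
        current, current_dist = best, best_dist

# Five points on a line, each linked to its immediate neighbors (toy graph)
vectors = [[0.0], [1.0], [2.0], [3.0], [4.0]]
neighbors = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3]}

node, dist = greedy_walk([3.2], vectors, neighbors, entry=0)
print(node, round(dist, 2))  # 3 0.2
```

Note that the walk needs two things at every hop: the list of links for the current node and the vectors of its neighbors. Those are exactly the two pieces of storage that make the index heavy.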

LEANN’s Core Idea: Prune, don’t store

LEANN addresses this challenge with two key innovations:

- Graph Pruning: Instead of storing the full HNSW graph, LEANN trims it down to a much smaller version that still preserves the ability to navigate effectively. It does this using pruning algorithms that reduce unnecessary connections while keeping enough structure to maintain search accuracy.

- On-the-Fly Vector Reconstruction: LEANN doesn’t store all the original data vectors. Instead, it stores a small seed set and reconstructs the needed vectors at query time using a lightweight model. This dramatically reduces memory usage, because the full embedding matrix no longer needs to live in memory.
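
As a rough illustration of the graph pruning idea in the first bullet, the sketch below caps each node’s link count by keeping only its closest neighbors. This is a deliberately naive rule chosen for brevity, not the pruning algorithm from the LEANN paper; the names `prune_graph` and `count_links` and the toy data are hypothetical.

```python
import math

def prune_graph(vectors, neighbors, max_degree):
    """Keep only each node's max_degree closest links (naive degree cap)."""
    pruned = {}
    for node, nbs in neighbors.items():
        ranked = sorted(nbs, key=lambda nb: math.dist(vectors[node], vectors[nb]))
        pruned[node] = ranked[:max_degree]
    return pruned

def count_links(neighbors):
    """Total number of directed links stored in the graph."""
    return sum(len(nbs) for nbs in neighbors.values())

vectors = [[0.0, 0.0], [1.0, 0.0], [0.0, 2.0], [3.0, 3.0]]
# Start from a fully connected toy graph: every node links to every other node.
neighbors = {i: [j for j in range(4) if j != i] for i in range(4)}

pruned = prune_graph(vectors, neighbors, max_degree=2)
print(count_links(neighbors), "->", count_links(pruned))  # 12 -> 8
```

A real pruning scheme also has to check that the graph stays navigable after links are dropped, which this sketch does not attempt.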

Together, these strategies reduce storage by up to 45 times compared to a standard HNSW implementation without significant loss in accuracy or speed. That’s a game-changer for local AI.

The authors demonstrate LEANN on several real-world datasets and show that it performs comparably to full HNSW in both latency and recall, while using only a fraction of the storage.
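
For readers unfamiliar with the metric, recall here compares the results of an approximate search with the exact nearest neighbors. A minimal sketch of recall@k, using made-up result lists and a hypothetical helper name, looks like this:

```python
def recall_at_k(approx_ids, exact_ids, k):
    """Fraction of the true top-k neighbors that the approximate search returned."""
    return len(set(approx_ids[:k]) & set(exact_ids[:k])) / k

exact = [7, 2, 9, 4, 1]   # ground truth from a brute-force search
approx = [7, 9, 3, 2, 8]  # what an approximate index returned

print(recall_at_k(approx, exact, k=5))  # 0.6, since 3 of the 5 true neighbors were found
```

Order within the top k does not matter for this metric, only membership.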

Why this matters: Making AI more private, accessible, and personal

To me, this paper is interesting because it offers a practical way to bring powerful AI capabilities to smaller, offline environments. Think of a few concrete examples:

- On-device document search: Imagine being able to ask your phone to “find that PDF I read last week about neural networks” and get a meaningful result, even if you’re on a plane or in a remote location.

- Private photo retrieval: Instead of uploading your photos to the cloud to search by visual similarity, your device could handle that locally.

- Assistive tools for healthcare or education: In regions with limited internet access, lightweight vector search could power diagnostic tools or personalized learning without needing external servers.

This kind of local compute model aligns with a broader shift in AI away from centralized systems and toward more distributed, privacy-preserving architectures.

Try It Yourself: A Toy Demo of Low-Storage Vector Search

Although I did not find an open source implementation of LEANN that could be used to demonstrate it for this post, here’s a simple example using hnswlib to build a vector index, simulate reduced storage using a smaller seed set, and estimate memory savings.

```python
# Install hnswlib first if needed, e.g. in a notebook: !pip install hnswlib -q
import os
import random
import tempfile

import hnswlib
import numpy as np


def to_mb(num_bytes):
    """Convert a byte count to megabytes."""
    return num_bytes / (1024 * 1024)


def index_size_mb(index):
    """Estimate the index size by saving it to a temporary file.

    sys.getsizeof would only measure the thin Python wrapper object,
    not the native memory that hnswlib allocates. The saved file
    contains both the graph links and the stored vectors.
    """
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "index.bin")
        index.save_index(path)
        return to_mb(os.path.getsize(path))


# 1. Generate synthetic data
dim = 128  # Vector dimension
num_elements = 10000  # Number of vectors
data = np.random.randn(num_elements, dim).astype(np.float32)

# 2. Build full HNSW index
p = hnswlib.Index(space='l2', dim=dim)
p.init_index(max_elements=num_elements, ef_construction=200, M=16)
p.add_items(data)
p.set_ef(50)
print(f"Full index size (graph + vectors): {index_size_mb(p):.2f} MB")
print(f"Full vector storage size: {to_mb(data.nbytes):.2f} MB")

# 3. Simulate storing only a small seed set (e.g. 5% of vectors)
seed_ratio = 0.05
seed_indices = random.sample(range(num_elements), int(seed_ratio * num_elements))
seed_vectors = data[seed_indices]


# Simulated vector reconstruction (dummy here: just return nearest seed vector)
def reconstruct_vector(query_vec, seed_vectors):
    dists = np.linalg.norm(seed_vectors - query_vec, axis=1)
    return seed_vectors[np.argmin(dists)]


# 4. Search using reconstructed vectors
query = np.random.randn(1, dim).astype(np.float32)
reconstructed_query = reconstruct_vector(query, seed_vectors)
labels, distances = p.knn_query(reconstructed_query, k=5)
print(f"Search result using reconstructed vector: {labels}")

# 5. Print simulated memory usage
print(f"Simulated reduced vector storage size: {to_mb(seed_vectors.nbytes):.2f} MB")
```

What this shows

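- A full embedding matrix for 10,000 vectors takes about 4.9 MB as float32 at 128 dimensions; the figure scales with dtype and dimension.

- Storing only 5% of the vectors cuts that to about 0.24 MB. In this toy, though, the “reconstruction” simply snaps the query to its nearest seed vector, and the hnswlib index still holds every vector internally, so the saving is simulated rather than realized.

- The HNSW graph adds its own link storage on top of the vectors, and pruning it (not shown here) could yield further savings.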

In the real LEANN system, vector reconstruction is done using a learned model, and the pruning step is optimized to keep accuracy high. This toy example just helps visualize the core trade-offs.
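
The sizes discussed above follow from simple arithmetic, sketched below. The vector figures assume 4-byte float32 values. The link estimate is a rough assumption of my own: up to 2 × M neighbor IDs of 4 bytes each per node on the bottom layer, ignoring upper layers and per-node overhead.

```python
def vector_bytes(num_vectors, dim, bytes_per_value=4):
    """Raw storage for an embedding matrix (float32 by default)."""
    return num_vectors * dim * bytes_per_value

def link_bytes(num_vectors, M, bytes_per_id=4):
    """Rough upper bound for bottom-layer links: up to 2*M neighbor IDs per node."""
    return num_vectors * 2 * M * bytes_per_id

def mb(num_bytes):
    return num_bytes / (1024 * 1024)

full = vector_bytes(10_000, 128)
seed = vector_bytes(500, 128)  # 5% of the vectors
links = link_bytes(10_000, M=16)

print(f"full vectors: {mb(full):.2f} MB")       # full vectors: 4.88 MB
print(f"seed vectors: {mb(seed):.2f} MB")       # seed vectors: 0.24 MB
print(f"graph links (est.): {mb(links):.2f} MB")  # graph links (est.): 1.22 MB
print(f"vector reduction: {full / seed:.0f}x")  # vector reduction: 20x
```

Under these assumptions the vectors dominate the footprint at this dimension, which is why avoiding stored embeddings matters more than trimming links, although both contribute.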

Final Thoughts: What does the future of AI search look like?

LEANN shows that you don’t need to choose between efficiency and performance when doing vector search. With smart algorithmic design, it’s possible to build AI systems that are both capable and accessible, running directly on the devices we use every day.

This leads to an open question:

How will lightweight, local vector search change the design of future AI applications? Will more systems shift to offline-first models, or will cloud infrastructure remain dominant?

References

Wang et al. (2025), “LEANN: A Low-Storage Vector Index”: https://arxiv.org/pdf/2506.08276

GitHub – nmslib/hnswlib: Header-only C++/python library for fast approximate nearest neighbors (github.com)

HNSWLib | 🦜️🔗 Langchain: HNSWLib is an in-memory vector store that can be saved to a file (js.langchain.com)
