How to Increase the Context Length of an LLM?

Last Updated on February 3, 2026 by Editorial Team

Author(s): Bibek Poudel

Originally published on Towards AI.


What is Positional Encoding?

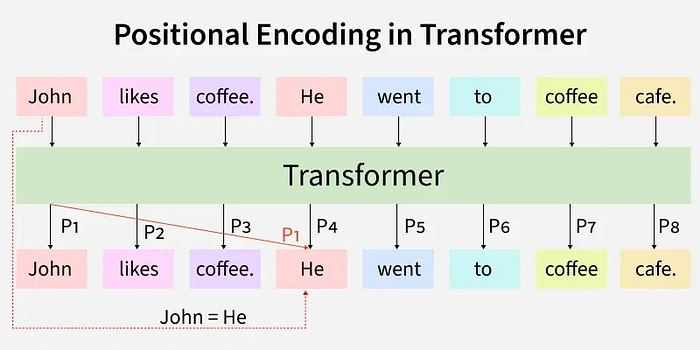

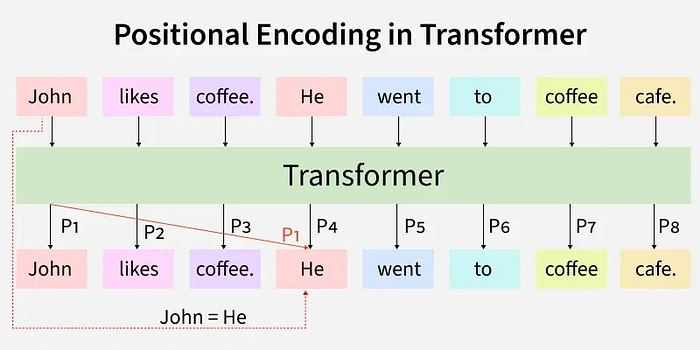

At its core, positional encoding answers a deceptively simple question: How does a transformer know that “bank” in “river bank” appears at position 1, while “bank” in “bank account” appears at position 0?

Transformers process tokens in parallel, unlike recurrent neural networks that read left-to-right sequentially. This parallelism is their superpower for training speed and for modeling long-range dependencies, but it creates a blind spot: without explicit position information, the model sees a bag of words with no concept of order. “The cat sat on the mat” becomes indistinguishable from “mat the on sat cat the.”
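A minimal sketch of this blind spot, using made-up 2-D embeddings and a hypothetical `attend` helper (neither comes from a real model): plain softmax attention with no positional signal returns the same output for a token regardless of how its context is ordered.

```python
import math

def attend(query, keys, values):
    """Single-query softmax attention over lists of vectors (no positions)."""
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]
    peak = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    weights = [w / total for w in weights]
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]

# Toy embeddings (illustrative numbers only).
emb = {"the": [1.0, 0.0], "cat": [0.8, 0.2], "sat": [0.3, 0.9]}

original = [emb[t] for t in ["the", "cat", "sat"]]
shuffled = [emb[t] for t in ["sat", "the", "cat"]]

# Output for the token "cat" when it attends over each ordering.
out_original = attend(emb["cat"], original, original)
out_shuffled = attend(emb["cat"], shuffled, shuffled)
print(out_original, out_shuffled)
```

Up to floating-point rounding, the two outputs match, which is exactly why some form of position signal has to be injected.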

Positional encoding injects positional information into the high-dimensional word vectors before they enter the self-attention mechanism. Think of it as attaching GPS coordinates to every word, allowing the model to calculate not just what words mean, but where they are in relation to each other.

Absolute Positional Encoding

The original Transformer architecture used absolute positional encoding. Each position in the sequence receives a fixed vector generated by sinusoidal functions, creating predetermined coordinates. This technique represented exact locations on a mathematical map rather than relational distances.

If the word “cat” at position 0 has embedding [0.5, -0.2, 0.8] and position 0's encoding is [0.0, 1.0, 0.0], the model receives [0.5, 0.8, 0.8]. The position vector is added element-wise to the word vector, creating an absolute coordinate.
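The addition above can be sketched with the sinusoidal formula from the original Transformer (sine on even indices, cosine on odd indices). The function names and the 3-dimensional toy size are assumptions made for illustration.

```python
import math

def sinusoidal_encoding(position, dim, base=10000.0):
    """Fixed position vector: sin on even indices, cos on odd indices."""
    vec = []
    for i in range(0, dim, 2):
        angle = position / (base ** (i / dim))
        vec.append(math.sin(angle))
        if i + 1 < dim:
            vec.append(math.cos(angle))
    return vec

def add_position(embedding, position):
    """Add the position vector element-wise to the word embedding."""
    pos = sinusoidal_encoding(position, len(embedding))
    return [e + p for e, p in zip(embedding, pos)]

cat = [0.5, -0.2, 0.8]          # toy embedding from the example above
pos0 = sinusoidal_encoding(0, 3)
encoded = add_position(cat, position=0)
print(pos0, encoded)
```

At position 0 every sine is 0 and every cosine is 1, which is where the [0.0, 1.0, 0.0] encoding in the example comes from.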

The limitation?

Absolute encoding identifies your exact location but not your distance from others. When the model learns that positions 5 and 6 indicate a verb-object pattern, it cannot directly apply this knowledge to positions 105 and 106. It must learn every specific coordinate pair separately, failing to recognize that adjacent tokens behave similarly regardless of where they appear in the document.

Relative Positional Encoding

Relative positional encoding emerged as the solution. Instead of adding a position vector to the word vector, it alters the attention mechanism itself to incorporate relative positional information. The model no longer asks “Where is word A?” but rather “How far is word B from word A?”

This shift is crucial: it allows the model to learn that a word at relative distance -1 is typically a subject, while distance +2 is often an object, regardless of whether these words appear at the beginning or end of a 100,000-token document.

However, early relative encoding methods were computationally expensive, requiring separate embedding matrices for every possible relative distance. They worked, but they were unwieldy.
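As a rough illustration of the idea (a simplified scalar-bias variant, not the exact formulation of any particular paper), a relative scheme looks up a learned value by the offset between two tokens. The table values and the clipping distance below are made up.

```python
def relative_offset(query_pos, key_pos, max_distance=4):
    """Signed distance from query to key, clipped to [-max_distance, max_distance]."""
    offset = key_pos - query_pos
    return max(-max_distance, min(max_distance, offset))

# Hypothetical learned table: one attention bias per clipped offset.
bias_table = {offset: 0.0 for offset in range(-4, 5)}
bias_table[-1] = 0.7  # made-up value: "the token just before me matters"

def attention_bias(query_pos, key_pos):
    """Bias added to the attention score for this query/key pair."""
    return bias_table[relative_offset(query_pos, key_pos)]

near_start = attention_bias(query_pos=6, key_pos=5)
far_along = attention_bias(query_pos=106, key_pos=105)
print(near_start, far_along)
```

Both lookups hit the same table entry because only the offset matters. The cost is the extra table (or, in richer variants, a full embedding per offset) that has to be stored and indexed for every query-key pair.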

RoPE: The Rotational Breakthrough

How RoPE Works

Rotary Position Embedding (RoPE), introduced by Su et al., solved the efficiency problem of relative encoding through a mathematical insight so elegant it feels like discovering that addition and rotation are secretly the same operation.

Instead of adding a position vector to a word vector, RoPE rotates the word vector itself in high-dimensional space. The rotation angle is directly proportional to the word’s position in the sentence.

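Consider a word “cat” represented by a 128-dimensional vector: [0.1, 0.2, 0.3, …, 0.9, 0.10]. In RoPE, we don’t add anything to these values. Instead, we rotate the vector by an angle θ. In practice, the dimensions are grouped into pairs, and each pair is rotated in its own 2D plane at its own speed; the single-angle picture below is the simplest case.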

- Position 0: No rotation (angle 0)

- Position 1: Rotate by θ

- Position 2: Rotate by 2θ

- Position 3: Rotate by 3θ

- Position n: Rotate by nθ

RoPE injects this positional information before calculating attention between Queries (Q) and Keys (K). When computing the dot product Q·K, both vectors have already been rotated by their respective position angles. The resulting value depends on the two positions only through their relative distance.

A larger angular difference indicates distant tokens, while a smaller difference suggests nearby tokens. For example, the model learns that tokens with minimal angular separation like “cat” and “sat” in “the cat sat” often form subject-verb relationships.
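For the 2D case, the relative-distance property follows from a short derivation. The notation here is assumed for this sketch: R(α) is the rotation matrix, q and k are the unrotated query and key, and m and n are their positions.

```latex
R(\alpha) =
\begin{pmatrix}
\cos\alpha & -\sin\alpha \\
\sin\alpha & \cos\alpha
\end{pmatrix},
\qquad
R(\alpha)^{\top} R(\beta) = R(\beta - \alpha)

\langle R(m\theta)\,q,\; R(n\theta)\,k \rangle
= q^{\top} R(m\theta)^{\top} R(n\theta)\,k
= q^{\top} R\big((n - m)\theta\big)\,k
```

The absolute positions m and n cancel, and only the offset n - m survives in the attention score.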

Numerical Simulation of RoPE

Let’s walk through a concrete example with the sentence: “The cat sat.”

Assume:

- Base frequency (rotation angle per position) θ = 1.0 radian

- “The” at position 0: vector [1.0, 0.0]

- “cat” at position 1: vector [0.8, 0.2]

- “sat” at position 2: vector [0.3, 0.9]

RoPE Application:

- “The” (position 0): Rotated by 0θ = 0°

Result: [1.0, 0.0] (unchanged)

- “cat” (position 1): Rotated by 1θ = 1.0 radian (~57°)

Using the 2D rotation matrix: [x', y'] = [x·cos(θ) - y·sin(θ), x·sin(θ) + y·cos(θ)]

x' = 0.8·cos(1) - 0.2·sin(1) ≈ 0.8·0.54 - 0.2·0.84 ≈ 0.43 - 0.17 = 0.26

y' = 0.8·sin(1) + 0.2·cos(1) ≈ 0.8·0.84 + 0.2·0.54 ≈ 0.67 + 0.11 = 0.78

Result: [0.26, 0.78]

- “sat” (position 2): Rotated by 2θ = 2.0 radians (~115°)

The same calculation yields approximately [-0.94, -0.10], a vector pointing roughly west.
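The walkthrough can be reproduced in a few lines. This is a sketch of the simplified single-angle, 2D case used in this example, with a hypothetical `rotate` helper; it is not a full multi-dimensional RoPE implementation.

```python
import math

def rotate(vec, angle):
    """Rotate a 2D vector counter-clockwise by `angle` radians."""
    x, y = vec
    return [x * math.cos(angle) - y * math.sin(angle),
            x * math.sin(angle) + y * math.cos(angle)]

theta = 1.0  # rotation per position, as assumed in the example
tokens = [("The", [1.0, 0.0]), ("cat", [0.8, 0.2]), ("sat", [0.3, 0.9])]

# Position p rotates the token's vector by p * theta.
rotated = {word: rotate(vec, pos * theta) for pos, (word, vec) in enumerate(tokens)}

for word, vec in rotated.items():
    print(word, [round(v, 2) for v in vec])
```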

Attention Calculation

When calculating attention between “sat” (position 2) and “cat” (position 1), the dot product incorporates the angular difference between their rotated vectors. This difference (2θ - 1θ = θ) depends only on their relative distance (1), not their absolute positions.

Thus, the model learns: “Whatever is at relative distance -1 from me tends to be the subject, regardless of whether I’m at position 100 or position 10,000.”
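A quick numerical check of this property, again in the simplified 2D setting with θ = 1.0. The `rope_score` helper is a name invented for this sketch: it rotates the query and key by their positions and takes the dot product.

```python
import math

def rotate(vec, angle):
    """Rotate a 2D vector counter-clockwise by `angle` radians."""
    x, y = vec
    return [x * math.cos(angle) - y * math.sin(angle),
            x * math.sin(angle) + y * math.cos(angle)]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def rope_score(query, key, query_pos, key_pos, theta=1.0):
    """Attention score (before softmax) between position-rotated vectors."""
    return dot(rotate(query, query_pos * theta), rotate(key, key_pos * theta))

sat, cat = [0.3, 0.9], [0.8, 0.2]

# Same relative distance (-1 from the query), very different absolute positions.
score_early = rope_score(sat, cat, query_pos=2, key_pos=1)
score_late = rope_score(sat, cat, query_pos=10_000, key_pos=9_999)
print(score_early, score_late)
```

The two scores agree up to floating-point rounding, even though the absolute positions differ by thousands of tokens.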

RoPE Shortcoming: The Wrap-Around Problem

RoPE was revolutionary, but it carried a hidden limitation tied to that base frequency parameter. Each dimension pair rotates at its own speed, and the angle per position shrinks as the base grows: the larger the base, the more slowly the slowest pairs turn. In early implementations (and GPT-style models), this base was set to 10,000.

With base frequency = 10,000, the angle per step is small but non-zero, even in the slowest-rotating dimensions. As you process longer sequences, the rotation accumulates. By the time you reach token 10,000, the faster dimensions have rotated through full circles many times. By token 50,000, even the slowest dimensions are approaching a full revolution, so position 0 and position 50,000 might have nearly identical angular coordinates.

This is geometric aliasing. The model literally cannot distinguish between “the word at the beginning” and “the word 50,000 tokens later” because their positional embeddings point in the same direction.
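To put rough numbers on this, the sketch below uses the standard RoPE frequency schedule, in which dimension pair i rotates by base^(-2i/d) radians per position, and computes how many positions each pair needs for one full turn. The head dimension of 128 is an assumption chosen to match the 128-dimensional example above.

```python
import math

def rope_wavelengths(head_dim, base):
    """Positions needed for each dimension pair to complete one full turn."""
    return [2 * math.pi * base ** (2 * i / head_dim) for i in range(head_dim // 2)]

waves = rope_wavelengths(head_dim=128, base=10_000.0)
fastest, slowest = waves[0], waves[-1]

print(f"fastest pair wraps every {fastest:.1f} positions")
print(f"slowest pair wraps every {slowest:,.0f} positions")
```

Under these assumptions, the fastest pair wraps roughly every 6 positions and the slowest pair wraps after a few tens of thousands, so beyond that range no single pair provides an unambiguous angle.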

Additionally, RoPE suffers from angle decay in long-range relationships. As relative distances grow, the effective angular differences blur together, causing the model to “forget” connections between distant tokens.

What is ABF?

Adjusted Base Frequency (ABF) is the technique that breaks through the geometric ceiling by fundamentally re-tuning the rotational velocity of positional embeddings.

In Qwen3, ABF was employed to increase the RoPE base frequency from 10,000 to 1,000,000, a 100× increase.

The Dimensional Asymmetry: Why ABF Preserves Local Precision

In our 128-dimensional “cat” vector [0.1, 0.2, 0.3, …, 0.9, 0.10]:

- The first dimensions (0.1, 0.2) rotate at the highest frequencies (local structure)

- The last dimensions (0.9, 0.10) rotate at the lowest frequencies (global structure)

When ABF increases the base frequency, it affects higher dimensions more while lower dimensions remain relatively stable. This is crucial because:

- Lower dimensions: Preserve fine-grained local precision. The model still understands that “cat” immediately follows “the” through subtle angular shifts in these coordinates.

- Higher dimensions: Carry the burden of long-range discrimination. By rotating more slowly in these dimensions, ABF ensures that token #0 and token #128,000 maintain distinct angular structures.
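The asymmetry can be made concrete by comparing the angle per step of each dimension pair under both bases. This sketch assumes the standard RoPE schedule (pair i rotates by base^(-2i/d) radians per position) and a head dimension of 128; `angle_per_step` is a hypothetical helper name.

```python
def angle_per_step(head_dim, base):
    """Rotation angle (radians per position) for each dimension pair."""
    return [base ** (-2 * i / head_dim) for i in range(head_dim // 2)]

old = angle_per_step(head_dim=128, base=10_000.0)
new = angle_per_step(head_dim=128, base=1_000_000.0)

# How many times slower each pair rotates after raising the base.
slowdown = [o / n for o, n in zip(old, new)]

print(f"first pair slowdown: {slowdown[0]:.2f}x")
print(f"last pair slowdown:  {slowdown[-1]:.2f}x")
```

Under these assumptions the first pair is untouched (1.00x) while the last pair rotates roughly 93 times more slowly, which is the sense in which local precision is preserved and long-range resolution is extended.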

Preventing Geometric Repetition

By reducing the “angle per step,” ABF prevents the rotation from wrapping around too quickly when long sequences are processed. With base frequency 1M, the slowest dimensions rotate so slowly that by token #128,000, they haven’t completed a full geometric revolution.

This ensures every token from #0 to #128,000 maintains a unique angular structure, allowing the model to track relative positions across the entire context window without the clockwork confusion of overlapping angles.
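A small check of the wrap-around claim, assuming the standard RoPE schedule and a head dimension of 128 (both are assumptions of this sketch). It counts how many dimension pairs have not yet completed one full turn within a 128,000-token window.

```python
import math

def rope_wavelengths(head_dim, base):
    """Positions needed for each dimension pair to complete one full turn."""
    return [2 * math.pi * base ** (2 * i / head_dim) for i in range(head_dim // 2)]

def pairs_without_wrap(head_dim, base, context_len):
    """Count dimension pairs whose first full turn lies beyond context_len."""
    return sum(1 for w in rope_wavelengths(head_dim, base) if w > context_len)

context_len = 128_000
unwrapped_10k = pairs_without_wrap(128, 10_000.0, context_len)
unwrapped_1m = pairs_without_wrap(128, 1_000_000.0, context_len)
slowest_1m = rope_wavelengths(128, 1_000_000.0)[-1]

print(unwrapped_10k, unwrapped_1m, f"{slowest_1m:,.0f}")
```

With base 10,000 every pair has wrapped at least once inside the window, whereas with base 1M a group of the slowest pairs has not, so those pairs still give each position in the window a distinct angle.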

Final Thoughts

Adjusted Base Frequency represents more than a hyperparameter tweak. It is a geometric intervention that re-calibrates how AI models perceive sequence in language. By slowing the rotational clockwork from 10k to 1M, ABF solves the aliasing problem that constrained early transformers to short-context myopia, ensuring every token from #0 to #128,000 maintains a unique angular signature.

The dimensional asymmetry reveals sophisticated insight: lower dimensions preserve fine-grained local grammar, while higher dimensions carry broad, distinct coordinates for global structure. We need both the precision to parse immediate syntax and the range to track narrative arcs across thousands of tokens. Sometimes the key to remembering more isn’t building a bigger memory palace; it’s simply slowing the clock enough to give every position its own unique geometric place in the sequence.


Published via Towards AI


Note: Article content contains the views of the contributing authors and not Towards AI.