How I Built a Chatbot Without APIs, GPUs, or Money (Part 2)

Last Updated on January 2, 2026 by Editorial Team

Author(s): Asif Khan

Originally published on Towards AI.

In Part 1, we built a complete backend for a document-based chatbot using local tools.

Before jumping into the frontend, it’s important to verify that the backend behaves correctly.

Instead of guessing, we’ll first test everything using Swagger UI.

This helps us separate backend logic from frontend issues.

Starting the Backend Server

Before testing anything, we need to start the backend.

Navigate to the backend directory and run the following command:

(My_Env) PS C:\ai_chatbot_projects\backend> python -m uvicorn app.main:app --reload --port 8000

What this does:

- Starts the FastAPI application

- Enables auto-reload for development

- Runs the server on port 8000

If everything is set up correctly, you should see logs indicating that the server is running.

Verifying the Server Is Running

Open your browser and visit:

http://127.0.0.1:8000

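If the server is active, you’ll see either the response from your root route or, if no root route is defined, FastAPI’s default {"detail":"Not Found"} JSON. Either one shows that the server is answering requests.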

This confirms that:

- The backend is running

- Dependencies are installed correctly

- The application entry point is working

Once this is verified, we’re ready to test the APIs using Swagger UI.

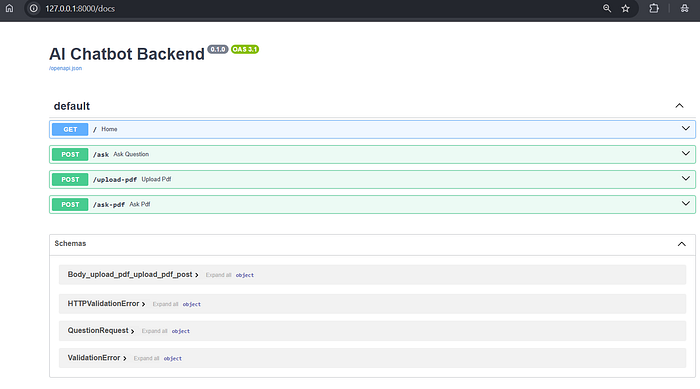
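The same check can be scripted. The sketch below is a minimal probe that uses only the standard library. The function name `server_is_up` and the two-second timeout are my own choices for illustration, not something from Part 1. It treats any HTTP reply, even a 404, as proof that the server is listening.

```python
import urllib.error
import urllib.request


def server_is_up(url: str, timeout: float = 2.0) -> bool:
    """Return True if anything answers HTTP at `url`, False if the connection fails."""
    try:
        with urllib.request.urlopen(url, timeout=timeout):
            return True
    except urllib.error.HTTPError:
        # The server replied with an error status (for example 404 on "/"),
        # which still proves the process is listening.
        return True
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, DNS failure, and so on.
        return False
```

Once uvicorn is running, calling `server_is_up("http://127.0.0.1:8000/docs")` should return `True`.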

Testing the Backend Using Swagger UI

FastAPI automatically provides a built-in interface to test APIs.

Once the backend is running, open your browser and visit:

http://127.0.0.1:8000/docs

This opens Swagger UI.

Here, we can interact with the backend directly, without writing any frontend code.
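Swagger UI is rendered from the OpenAPI schema that FastAPI serves at /openapi.json by default. As a quick sanity check, the sketch below lists the method and path of every route in such a schema. `list_routes` and `fetch_schema` are hypothetical helper names, and the sample schema is hand-written to mirror the two endpoints the frontend calls later in this article (`/upload-pdf` and `/ask-pdf`). A real schema carries far more detail.

```python
import json
import urllib.request

# Keys of an OpenAPI path item that are HTTP operations (others, such as
# "parameters" or "summary", are skipped).
HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}


def list_routes(schema: dict) -> list[str]:
    """Return sorted "METHOD /path" strings from an OpenAPI schema dict."""
    routes = []
    for path, path_item in schema.get("paths", {}).items():
        for key in path_item:
            if key.lower() in HTTP_METHODS:
                routes.append(f"{key.upper()} {path}")
    return sorted(routes)


def fetch_schema(base_url: str = "http://127.0.0.1:8000") -> dict:
    """Download the schema from a running FastAPI server."""
    with urllib.request.urlopen(f"{base_url}/openapi.json", timeout=5) as resp:
        return json.load(resp)


# Hand-written sample, so the helper can be tried without a server.
sample_schema = {
    "paths": {
        "/upload-pdf": {"post": {}},
        "/ask-pdf": {"post": {}},
    }
}
print(list_routes(sample_schema))  # ['POST /ask-pdf', 'POST /upload-pdf']
```

With the backend running, `list_routes(fetch_schema())` should show the same endpoints you see in Swagger UI.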

Step 1: Uploading a PDF

Start by testing the PDF upload endpoint.

This step confirms that:

- The backend can accept files

- PDFs are stored correctly

- Documents are ingested into the vector database

- Embeddings are created successfully

Upload a small PDF and execute the request.

If this works, it means the document ingestion pipeline is ready.
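Swagger UI shows the raw JSON response, so it helps to know what a healthy one looks like. The sketch below assumes the upload endpoint returns `pages` and `chunks` counts, because those are the fields the Streamlit code reads later in this article. The helper name `check_upload_response` and the example payloads are made up for illustration.

```python
def check_upload_response(data: dict) -> list[str]:
    """Return a list of problems found in an upload response; empty means OK."""
    problems = []
    for field in ("pages", "chunks"):
        value = data.get(field)
        # bool is a subclass of int in Python, so exclude it explicitly.
        if not isinstance(value, int) or isinstance(value, bool):
            problems.append(f"'{field}' is missing or not an integer")
        elif value <= 0:
            problems.append(f"'{field}' is {value}; expected a positive count")
    return problems


# Example payloads (invented, not real backend output).
print(check_upload_response({"pages": 3, "chunks": 12}))  # []
print(check_upload_response({"pages": 3, "chunks": 0}))
```

A response with pages but zero chunks usually means the file was stored but nothing reached the vector database, which is worth catching before you start asking questions.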

Step 2: Asking Questions from the Document

Next, test the question-answer endpoint.

First, ask a question whose answer is clearly present in the uploaded PDF.

Expected behavior:

- The chatbot returns an answer

- The answer is grounded in the document

- Source pages are listed

Now ask a question whose answer is not in the document.

Expected behavior:

- The chatbot should say “I don’t know”

- No sources should be displayed

If both cases behave as described, retrieval, grounding, and the refusal path are all working as intended.
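The two expectations above can be written down as a small check. This is a sketch, assuming the question-answer endpoint returns JSON with `answer` and `sources` fields (the fields the frontend reads later). The function names and the sample payloads are hypothetical.

```python
def is_refusal(answer: str) -> bool:
    """True if the answer starts with "I don't know" (straight or curly apostrophe)."""
    normalized = answer.strip().lower().replace("\u2019", "'")
    return normalized.startswith("i don't know")


def check_rag_response(data: dict, expect_answer: bool) -> bool:
    """Check one question-answer response against the expected behaviour."""
    answer = data.get("answer", "")
    sources = data.get("sources", [])
    if expect_answer:
        # In-document question: a real answer plus at least one source.
        return bool(answer.strip()) and not is_refusal(answer) and bool(sources)
    # Out-of-document question: the model should decline.
    return is_refusal(answer)


# Invented payloads for the two cases.
in_doc = {"answer": "Refunds are accepted within 30 days.", "sources": ["sample.pdf (page 2)"]}
out_of_doc = {"answer": "I don't know.", "sources": []}
print(check_rag_response(in_doc, expect_answer=True))
print(check_rag_response(out_of_doc, expect_answer=False))
```

Paste the JSON that Swagger UI returns into a dict and run it through `check_rag_response` to confirm each case.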

Moving to the Frontend

With a verified backend, we can start building the frontend.

For this project, I chose Streamlit because:

- It’s simple

- It’s Python-based

- It’s fast to iterate

- It’s well suited to internal tools and prototypes

The goal of the frontend is not to be flashy, but to be clear and usable.

Designing the Chat Experience

The chat interface follows a simple layout:

- Left panel for document uploads

- Main panel for chat

- Input box at the bottom

- Clear separation between user and assistant messages

Each assistant response may include:

- The answer

- Source references (only when applicable)

This keeps the interaction transparent and trustworthy.
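The "sources only when applicable" rule fits in a few lines. The sketch below is one possible way to express it, not the exact code used in this project: it folds the answer and its sources into a single message, and `format_assistant_message` is a name I made up for the example.

```python
def format_assistant_message(answer: str, sources: list[str]) -> str:
    """Return the answer, followed by a source list only when it applies."""
    refused = answer.strip().lower().replace("\u2019", "'").startswith("i don't know")
    if not sources or refused:
        # Nothing to cite, or the model declined to answer.
        return answer
    source_lines = "\n".join(f"- {s}" for s in sources)
    return f"{answer}\n\n📌 **Sources:**\n{source_lines}"


# Invented examples.
print(format_assistant_message("Refunds are accepted within 30 days.", ["sample.pdf (page 2)"]))
print(format_assistant_message("I don't know.", ["sample.pdf (page 7)"]))
```

The second call prints only the refusal, even though the backend returned a source, so the user never sees citations for an answer that was not given.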

Streamlit Frontend (Chat UI):

import streamlit as st
import requests

API_BASE = "http://127.0.0.1:8000"


def build_chat_history(messages, max_turns=6):
    """Format the most recent user/assistant exchanges as plain text."""
    history = []
    for msg in messages[-max_turns * 2:]:
        role = "User" if msg["role"] == "user" else "Assistant"
        history.append(f"{role}: {msg['content']}")
    return "\n".join(history)


# -------------------------------
# Page Config
# -------------------------------
st.set_page_config(
    page_title="RAG Chatbot",
    page_icon="📄",
    layout="wide"
)

# -------------------------------
# Session State Init
# -------------------------------
if "messages" not in st.session_state:
    st.session_state.messages = []
if "documents" not in st.session_state:
    st.session_state.documents = []
if "chat_id" not in st.session_state:
    st.session_state.chat_id = 1

# -------------------------------
# Sidebar (Documents & Controls)
# -------------------------------
with st.sidebar:
    st.title("📄 RAG Chatbot")
    st.caption("Local Phi • Ollama • Chroma")
    st.divider()
    st.subheader("📂 Upload Documents")
    uploaded_files = st.file_uploader(
        "Upload one or more PDFs",
        type=["pdf"],
        accept_multiple_files=True
    )

    if uploaded_files and st.button("📥 Ingest PDFs"):
        failed = 0
        with st.spinner("Ingesting documents..."):
            for file in uploaded_files:
                # getvalue() sends the full bytes even if the file was read before.
                files = {
                    "file": (file.name, file.getvalue(), "application/pdf")
                }
                try:
                    response = requests.post(
                        f"{API_BASE}/upload-pdf",
                        files=files
                    )
                    ok = response.status_code == 200
                except requests.RequestException:
                    ok = False  # backend unreachable or connection dropped

                if ok:
                    data = response.json()
                    st.session_state.documents.append({
                        "name": file.name,
                        "pages": data.get("pages", 0),
                        "chunks": data.get("chunks", 0)
                    })
                else:
                    failed += 1
                    st.error(f"Failed to ingest {file.name}")

        # Report success only when every file was ingested.
        if failed:
            st.warning(f"{failed} file(s) could not be ingested")
        else:
            st.success("Documents ingested successfully")

    # -------------------------------
    # Document Summary
    # -------------------------------
    if st.session_state.documents:
        st.divider()
        st.subheader("📊 Document Overview")
        for doc in st.session_state.documents:
            st.markdown(
                f"**{doc['name']}**\n\n"
                f"- Pages: `{doc['pages']}`\n"
                f"- Chunks indexed: `{doc['chunks']}`"
            )

    st.divider()

    # -------------------------------
    # New Chat
    # -------------------------------
    if st.button("🧹 New Chat"):
        st.session_state.messages = []
        st.session_state.chat_id += 1
        st.rerun()

# -------------------------------
# Main Chat Area
# -------------------------------
st.header("💬 Ask Questions from Your Documents")

if not st.session_state.documents:
    st.info("Upload and ingest PDFs from the sidebar to begin.")
else:
    # Replay the conversation so far.
    for msg in st.session_state.messages:
        with st.chat_message(msg["role"]):
            st.write(msg["content"])

    question = st.chat_input("Ask something about the uploaded documents...")

    if question:
        with st.spinner("Searching documents..."):
            # History is built before the new question is appended.
            chat_history = build_chat_history(st.session_state.messages)
            st.session_state.messages.append(
                {"role": "user", "content": question}
            )
            try:
                response = requests.post(
                    f"{API_BASE}/ask-pdf",
                    json={"question": question, "chat_history": chat_history}
                )
                response.raise_for_status()
                data = response.json()
                answer = data.get("answer", "")
                sources = data.get("sources", [])
            except (requests.RequestException, ValueError):
                answer = "Error retrieving answer."
                sources = []

        st.session_state.messages.append(
            {"role": "assistant", "content": answer}
        )

        # Show sources only for real answers; accept straight or curly apostrophes.
        refused = answer.strip().lower().replace("’", "'").startswith("i don't know")
        if sources and not refused:
            st.session_state.messages.append(
                {
                    "role": "assistant",
                    "content": "📌 **Sources:**\n" + "\n".join(f"- {s}" for s in sources)
                }
            )

        st.rerun()

Bringing Backend and Frontend Together

Once connected:

- The frontend sends user questions

- The backend retrieves relevant document chunks

- The model generates a grounded response

- The UI displays the answer and sources

The frontend makes no retrieval or generation decisions; it only formats what the backend returns.

All intelligence lives in the backend.
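To make that contract concrete, here is a sketch of the request body the frontend sends. The `question` and `chat_history` field names come from the Streamlit code above. `build_ask_payload` is a hypothetical wrapper added for this example, and the guard for `max_turns <= 0` is my addition, since a slice of `[-0:]` would otherwise return the whole list.

```python
def build_chat_history(messages: list[dict], max_turns: int = 6) -> str:
    """Format the last `max_turns` user/assistant exchanges as plain text."""
    if max_turns <= 0:
        return ""
    history = []
    for msg in messages[-max_turns * 2:]:
        role = "User" if msg["role"] == "user" else "Assistant"
        history.append(f"{role}: {msg['content']}")
    return "\n".join(history)


def build_ask_payload(question: str, messages: list[dict], max_turns: int = 6) -> dict:
    """Build the JSON body for the question-answer endpoint."""
    return {
        "question": question,
        "chat_history": build_chat_history(messages, max_turns),
    }


# Invented conversation: two earlier exchanges.
messages = [
    {"role": "user", "content": "What is the refund window?"},
    {"role": "assistant", "content": "30 days."},
    {"role": "user", "content": "Does it cover shipping?"},
    {"role": "assistant", "content": "I don't know."},
]
payload = build_ask_payload("And exchanges?", messages, max_turns=1)
print(payload["chat_history"])
# User: Does it cover shipping?
# Assistant: I don't know.
```

Trimming the history keeps the prompt short for a small local model, while still giving the backend enough context to resolve follow-up questions such as "And exchanges?".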

Final Thoughts

Building a chatbot is not about adding more features.

It’s about understanding where answers come from and when not to answer.

Once the fundamentals are clear, switching models or scaling later becomes much easier.
