How I Built a Chatbot Without APIs, GPUs, or Money

Last Updated on January 2, 2026 by Editorial Team

Author(s): Asif Khan

Originally published on Towards AI.

I wanted to create a chatbot, but without spending a single penny.

No paid API keys.

No GPU dependency.

No cloud bills.

The goal was simple:

👉 Understand the logic, concepts, and terms behind a working chatbot, not just make something that “works”.

During my research, I found that Microsoft has a small open-source model called Phi.

It’s lightweight and good enough for learning.

Then I discovered Ollama, which lets you run models locally on your system.

No API keys. No internet dependency. Perfect for experimentation.

In this blog, I’ll walk you through each step I followed to build a working RAG-based chatbot backend, keeping things simple and practical.

I’ll avoid long paragraphs and focus on clarity.

This is Part 1, where we build the backend.

What We Are Building

- A local chatbot backend

- Runs completely offline

- Uses PDFs as knowledge

- Answers questions only from documents

- Can say “I don’t know” when the answer is not present

Prerequisites

1. Operating System

- Windows

2. Python

- Python 3.10 or above

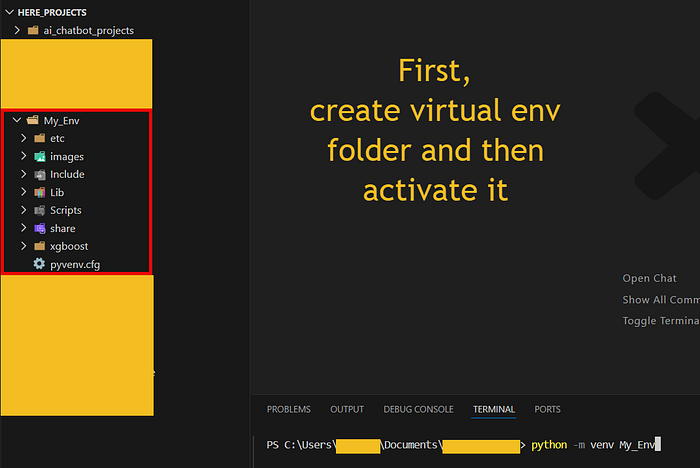

3. Virtual Environment (PowerShell)

Create a virtual environment by running python -m venv My_Env, then activate it:

To activate, run My_Env\Scripts\Activate.ps1 in PowerShell.

You should see (My_Env) in your terminal.
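
If you want to confirm the activation from Python itself, here is a minimal sketch. It relies on the standard-library behaviour that sys.prefix differs from sys.base_prefix inside a venv. The function name in_virtualenv is only illustrative.

```python
import sys


def in_virtualenv() -> bool:
    """Return True when the interpreter is running inside a venv."""
    # Inside a venv, sys.prefix points at the environment folder,
    # while sys.base_prefix still points at the base Python install.
    return sys.prefix != sys.base_prefix


print("Virtual environment active:", in_virtualenv())
print("Interpreter prefix:", sys.prefix)
```

If the first line prints False, the environment is not active in that terminal.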

Next, create this folder structure.

ai_chatbot_project/
│
├── backend/
│   ├── app/
│   │   ├── __init__.py
│   │   ├── main.py
│   │   ├── llm.py
│   │   ├── rag.py
│   │   ├── schemas.py
│   │
│   ├── data/
│   │   └── uploads/
│   │
│   ├── vectorstore/
│   │
│   ├── .env
│   ├── requirements.txt
│
├── frontend/
│   ├── streamlit_app.py
│
└── README.md
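
If you would rather not create these folders by hand, a small script can do it. This is only a sketch: the scaffold function and the FOLDERS and FILES lists are my own names, and the paths are copied from the tree above.

```python
from pathlib import Path

# Folders and (initially empty) files taken from the tree above.
FOLDERS = [
    "backend/app",
    "backend/data/uploads",
    "backend/vectorstore",
    "frontend",
]
FILES = [
    "backend/app/__init__.py",
    "backend/app/main.py",
    "backend/app/llm.py",
    "backend/app/rag.py",
    "backend/app/schemas.py",
    "backend/.env",
    "backend/requirements.txt",
    "frontend/streamlit_app.py",
    "README.md",
]


def scaffold(root: Path) -> None:
    """Create the project skeleton under root without overwriting existing files."""
    for folder in FOLDERS:
        (root / folder).mkdir(parents=True, exist_ok=True)
    for name in FILES:
        # touch() creates the file if missing and leaves existing content alone.
        (root / name).touch(exist_ok=True)
```

Calling scaffold(Path("ai_chatbot_project")) from the folder where you want the project creates the whole structure. Running it twice is harmless.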

requirements.txt (dependency management)

This file lists:

- All Python packages needed to run the backend

Why it’s important:

- Reproducible setup

- Easy onboarding for others

- Clean environment management

Anyone can recreate the backend with a single command.

fastapi
uvicorn

# LangChain core + ecosystem
langchain
langchain-core
langchain-community
langchain-text-splitters
langchain-chroma
langchain-ollama

# Vector database
chromadb

# PDF loading
pypdf

# Environment & utilities
python-dotenv
pydantic
requests

# Frontend
streamlit

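Install everything as follows.

Make sure you are inside the ai_chatbot_project folder (use: cd ai_chatbot_project).

In the folder structure above, requirements.txt lives in backend/, so point pip at that path:

pip install -r backend/requirements.txt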
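
After the install finishes, you can quickly check that the packages are visible to your interpreter. The sketch below uses only the standard library. The list holds import names, which differ slightly from the pip names (underscores instead of dashes, and python-dotenv imports as dotenv). The helper name missing_modules is my own.

```python
from importlib.util import find_spec

# Import names (not pip names) for the packages in requirements.txt.
REQUIRED_MODULES = [
    "fastapi",
    "uvicorn",
    "langchain",
    "langchain_core",
    "langchain_community",
    "langchain_text_splitters",
    "langchain_chroma",
    "langchain_ollama",
    "chromadb",
    "pypdf",
    "dotenv",
    "pydantic",
    "requests",
    "streamlit",
]


def missing_modules(names):
    """Return the module names that cannot be found in this environment."""
    return [name for name in names if find_spec(name) is None]


print("Missing:", missing_modules(REQUIRED_MODULES) or "nothing")
```

Anything listed as missing usually means pip ran outside the virtual environment.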

Installing Ollama and Phi

Step 1: Download Ollama from:

👉 https://ollama.com

Download and install Ollama for Windows.

After installation:

- Ollama runs as a background service

- You do NOT need to open it manually

Verify Ollama Is Installed:

- Open a new terminal and run

ollama --version

Step 2: Pull Microsoft Phi Model Locally

ollama pull phi

This downloads the Microsoft Phi model to your machine.

Size:

- ~2–3 GB

- One-time download

Wait until it completes.

Step 3: Test Phi Locally (Very Important)

Run:

ollama run phi

Ask something simple:

What is FastAPI?

If you get a response → model works locally.

Exit with: Ctrl + D

Step 4: For embeddings (important for RAG):

Run:

ollama pull nomic-embed-text

Now your system is ready to run models locally.
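
You can also confirm from Python that both models are present. This sketch assumes Ollama is listening on its default local address (http://localhost:11434) and uses its /api/tags endpoint, which lists local models. The function names are illustrative, and nothing here contacts the server until you call fetch_tags yourself.

```python
import json
from urllib.request import urlopen

# Default address of the local Ollama server (an assumption; change it if yours differs).
OLLAMA_TAGS_URL = "http://localhost:11434/api/tags"


def installed_models(tags_response: dict) -> set:
    """Extract base model names (without the ':tag' suffix) from an /api/tags response."""
    names = set()
    for entry in tags_response.get("models", []):
        names.add(entry["name"].split(":")[0])
    return names


def fetch_tags(url: str = OLLAMA_TAGS_URL) -> dict:
    """Ask the running Ollama service which models are available locally."""
    with urlopen(url, timeout=5) as response:
        return json.load(response)
```

With the service running, {"phi", "nomic-embed-text"} <= installed_models(fetch_tags()) should evaluate to True once both pulls have finished.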

Why We Need RAG

LLMs will answer almost any question, even when the answer is not in your document. In that case they tend to make something up.

That’s dangerous.

So we use RAG (Retrieval-Augmented Generation):

- Load documents

- Convert text to embeddings

- Store embeddings in a vector database

- Retrieve relevant chunks

- Answer only from retrieved content
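
To make these five steps concrete, here is a toy version in plain Python. It is not what the backend uses: the "embedding" is just a bag-of-words count and the "vector database" is a list, both stand-ins I chose so the example runs without any model. The flow is the same though: embed, store, retrieve, and refuse when nothing relevant comes back.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector (a real system uses a neural model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, store: list, k: int = 2, min_score: float = 0.1) -> list:
    """Return up to k chunks whose similarity to the question is at least min_score."""
    query = embed(question)
    scored = sorted(((cosine(query, vec), chunk) for chunk, vec in store), reverse=True)
    return [chunk for score, chunk in scored[:k] if score >= min_score]


# Steps 1-3: "load" documents, embed them, store the vectors.
chunks = [
    "FastAPI is a Python web framework for building APIs.",
    "Chroma stores embeddings on disk so they survive restarts.",
]
store = [(chunk, embed(chunk)) for chunk in chunks]

# Steps 4-5: retrieve relevant chunks and answer only if something was found.
for question in ["What is FastAPI?", "Who won the football match?"]:
    hits = retrieve(question, store)
    print(question, "->", hits[0] if hits else "I don't know")
```

The first question shares words with the first chunk, so that chunk is retrieved. The second shares none, so the sketch falls back to "I don't know".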

Backend Files Explained

1) main.py – Application Entry Point

This is the starting point of the backend.

Responsibilities:

- Initializes the FastAPI application

- Exposes API endpoints

- Acts as the bridge between frontend and backend logic

Key things it handles:

- PDF upload requests

- Question-answer requests

- Request validation and response formatting

Think of main.py as the traffic controller.

It doesn’t do heavy processing; it just routes requests to the right place.

from fastapi import FastAPI, HTTPException, UploadFile, File
from app.llm import llm, rag_answer
from app.schemas import QuestionRequest
from app.rag import ingest_pdf
from pathlib import Path
import shutil

app = FastAPI(title="AI Chatbot Backend")

# Base directory → backend/
BASE_DIR = Path(__file__).resolve().parents[1]
UPLOAD_DIR = BASE_DIR / "data" / "uploads"
UPLOAD_DIR.mkdir(parents=True, exist_ok=True)

@app.get("/")
def home():
    return {"status": "Backend is running"}

@app.post("/ask")
def ask_question(payload: QuestionRequest):
    try:
        response = llm.invoke(payload.question)
        return {"answer": response}
    except Exception as e:
        raise HTTPException(status_code=500, detail=str(e))

@app.post("/upload-pdf")
def upload_pdf(file: UploadFile = File(...)):
    file_path = UPLOAD_DIR / file.filename
    with open(file_path, "wb") as buffer:
        shutil.copyfileobj(file.file, buffer)
    chunks = ingest_pdf(str(file_path))
    return {"message": "PDF ingested successfully", "chunks": chunks}

@app.post("/ask-pdf")
def ask_pdf(payload: QuestionRequest):
    return rag_answer(
        payload.question,
        payload.chat_history
    )
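
One thing worth hardening later: the upload handler joins the client-supplied filename to UPLOAD_DIR as-is, so a name containing folder parts could end up outside the uploads folder. A minimal sketch of a guard is below. The helper name safe_pdf_name and the fallback name upload.pdf are my own, not part of the original code.

```python
from pathlib import PureWindowsPath


def safe_pdf_name(raw_name: str, fallback: str = "upload.pdf") -> str:
    """Keep only the final path component of a client-supplied filename."""
    # PureWindowsPath treats both "/" and "\" as separators, so directory
    # parts are stripped regardless of which style the client sent.
    name = PureWindowsPath(raw_name).name
    # Reject empty names and the special entries "." and "..".
    return fallback if name in {"", ".", ".."} else name


print(safe_pdf_name("../../notes.pdf"))
```

In upload_pdf, you would then build the path as UPLOAD_DIR / safe_pdf_name(file.filename).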

2) rag.py – Document Ingestion and Retrieval Logic

This file handles everything related to documents.

Responsibilities:

- Loading PDF files

- Splitting large text into smaller chunks

- Creating embeddings from text

- Storing and retrieving vectors from the vector database

Important design choices here:

- Uses a dedicated embedding model, not the LLM

- Uses a persistent vector store, so data survives restarts

- Uses an explicit collection name to avoid data loss

In simple terms, rag.py is the memory of the chatbot.

from langchain_community.document_loaders import PyPDFLoader
from langchain_chroma import Chroma
from langchain_text_splitters import RecursiveCharacterTextSplitter
from langchain_ollama import OllamaEmbeddings
from pathlib import Path

# Base directory → backend/
BASE_DIR = Path(__file__).resolve().parents[1]
UPLOAD_DIR = BASE_DIR / "data" / "uploads"
VECTOR_DIR = BASE_DIR / "vectorstore"
COLLECTION_NAME = "pdf_documents"

UPLOAD_DIR.mkdir(parents=True, exist_ok=True)
VECTOR_DIR.mkdir(parents=True, exist_ok=True)

embeddings = OllamaEmbeddings(model="nomic-embed-text")

def ingest_pdf(file_path: str):
    loader = PyPDFLoader(file_path)
    documents = loader.load()

    splitter = RecursiveCharacterTextSplitter(
        chunk_size=800,
        chunk_overlap=150
    )
    chunks = splitter.split_documents(documents)

    vectordb = Chroma(
        collection_name=COLLECTION_NAME,
        persist_directory=str(VECTOR_DIR),
        embedding_function=embeddings
    )
    vectordb.add_documents(chunks)

    return {
        "pages": len({doc.metadata.get("page") for doc in documents}),
        "chunks": len(chunks)
    }

def get_retriever_with_sources():
    vectordb = Chroma(
        collection_name=COLLECTION_NAME,
        persist_directory=str(VECTOR_DIR),
        embedding_function=embeddings
    )
    return vectordb.as_retriever(search_kwargs={"k": 4})
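
To get a feel for what chunk_size=800 and chunk_overlap=150 mean, here is a simplified sliding-window splitter. It is deliberately not RecursiveCharacterTextSplitter, which prefers to break on separators such as paragraphs and sentences. This version cuts at fixed character offsets so the overlap is easy to see. The function name sliding_chunks is my own.

```python
def sliding_chunks(text: str, chunk_size: int = 800, chunk_overlap: int = 150) -> list:
    """Split text into fixed-size windows where neighbours share chunk_overlap characters."""
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - chunk_overlap  # how far each new window advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the text
    return chunks


sample = "x" * 2000
print([len(piece) for piece in sliding_chunks(sample)])  # [800, 800, 700]
```

Each window starts 650 characters after the previous one, so the last 150 characters of one chunk reappear at the start of the next. The overlap keeps a sentence that straddles a boundary intact in at least one chunk.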

3) llm.py – Intelligence and Guardrails

This is the most critical file in the backend.

Responsibilities:

- Calling the language model

- Building prompts with context

- Enforcing strict rules on when to answer

- Deciding when to say “I don’t know”

Key ideas implemented here:

- The chatbot answers only when the document supports it

- Weak retrieval results are rejected

- Sources are shown only if an answer exists

This file ensures the chatbot is honest and trustworthy, not just clever.

import os
from dotenv import load_dotenv
from langchain_ollama import OllamaLLM
from langchain_core.runnables import RunnableWithMessageHistory
from langchain_core.chat_history import InMemoryChatMessageHistory
from langchain_core.prompts import PromptTemplate
from langchain_core.runnables import RunnablePassthrough
from app.rag import get_retriever_with_sources

# for local version
llm = OllamaLLM(model="phi", temperature=0.2)
retriever = get_retriever_with_sources()

# Prompt (from langchain-core)
prompt = PromptTemplate(
    input_variables=["chat_history", "question"],
    template="""
You are a helpful assistant.

Conversation so far:
{chat_history}

User question:
{question}

Answer clearly and concisely:
"""
)

# The template uses {chat_history}, so it is listed as an input variable too
rag_prompt = PromptTemplate(
    input_variables=["chat_history", "context", "question"],
    template="""
Answer the question ONLY using the context below.
If the answer is not present, say "I don't know".

Conversation so far:
{chat_history}

Context:
{context}

Question:
{question}

Answer:
"""
)

def rag_answer(question: str, chat_history: str):
    docs = retriever.invoke(question)

    # 1. Fewer than two chunks retrieved → no answer
    #    (this is a count check; the retriever does not filter by similarity score)
    if not docs or len(docs) < 2:
        return {
            "answer": "I don't know. This is not mentioned in the document.",
            "sources": []
        }

    context = "\n\n".join(d.page_content for d in docs)

    answer = llm.invoke(
        rag_prompt.format(
            context=context,
            question=question,
            chat_history=chat_history
        )
    )

    answer_text = answer.strip() if isinstance(answer, str) else str(answer).strip()
    normalized = answer_text.lower()

    # 2. If answer is empty or generic → reject
    #    (normalized is lowercase, so every phrase below must be lowercase too)
    if (
        not answer_text
        or "i don't know" in normalized
        or "not mentioned" in normalized
        or "cannot find" in normalized
        or "i'm sorry" in normalized
    ):
        return {
            "answer": "I don't know. This is not mentioned in the document.",
            "sources": []
        }

    # 3. VALID answer → attach sources
    sources = sorted({
        f"{doc.metadata.get('source')} (page {doc.metadata.get('page')})"
        for doc in docs
        if doc.metadata.get("source") is not None
    })

    return {
        "answer": answer_text,
        "sources": sources
    }

rag_chain = (
    {
        "context": retriever | (lambda docs: "\n\n".join(d.page_content for d in docs)),
        "question": RunnablePassthrough(),
        # rag_prompt also expects chat_history; this standalone chain has no memory
        "chat_history": lambda _: ""
    }
    | rag_prompt
    | llm
)

# Base chain (RunnableSequence)
base_chain = prompt | llm

# Simple in-memory message store
_store = {}

def get_session_history(session_id: str):
    if session_id not in _store:
        _store[session_id] = InMemoryChatMessageHistory()
    return _store[session_id]

# Chain with memory
chat_chain = RunnableWithMessageHistory(
    base_chain,
    get_session_history,
    input_messages_key="question",
    history_messages_key="chat_history",
)
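
The guardrail at the end of rag_answer is easier to reason about, and to test, when pulled into small pure functions. The sketch below mirrors that logic without calling any model. The names REFUSAL_MARKERS, looks_like_refusal, and finalize are my own. Because the answer is lowercased before matching, every marker has to be lowercase as well.

```python
REFUSAL_MARKERS = ("i don't know", "not mentioned", "cannot find", "i'm sorry")
FALLBACK = "I don't know. This is not mentioned in the document."


def looks_like_refusal(answer: str) -> bool:
    """True when the answer is empty or contains one of the refusal phrases."""
    # Lowercase for matching and normalise curly apostrophes to straight ones.
    normalized = answer.strip().lower().replace("\u2019", "'")
    return not normalized or any(marker in normalized for marker in REFUSAL_MARKERS)


def finalize(answer: str, sources: list) -> dict:
    """Attach sources only when the model actually answered."""
    if looks_like_refusal(answer):
        return {"answer": FALLBACK, "sources": []}
    return {"answer": answer.strip(), "sources": sorted(set(sources))}


print(finalize("I'm sorry, I cannot find that.", ["doc.pdf (page 3)"]))
print(finalize("FastAPI is a web framework.", ["doc.pdf (page 3)"]))
```

The first call returns the fallback answer with no sources. The second keeps the answer and its source. Phrase matching like this is a blunt tool: an honest answer that happens to contain "not mentioned" would also be rejected.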

4) schemas.py – Request and Response Validation

This file defines the shape of data flowing into the backend.

Responsibilities:

- Validating incoming requests

- Enforcing required fields

- Preventing malformed inputs

Why this matters:

- Avoids silent bugs

- Makes APIs predictable

- Helps future scaling and debugging

Think of schemas.py as a contract between frontend and backend.

from pydantic import BaseModel

class QuestionRequest(BaseModel):
    question: str
    chat_history: str
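
To show what this contract enforces, here is a rough standard-library stand-in. It is not Pydantic and not how FastAPI validates internally. It only imitates the two checks the model implies: both fields must be present, and both must be strings. The function name validate_question_request is hypothetical.

```python
def validate_question_request(payload: dict) -> dict:
    """Check that both fields exist and are strings, as QuestionRequest requires."""
    errors = []
    for field in ("question", "chat_history"):
        if field not in payload:
            errors.append(f"{field}: field required")
        elif not isinstance(payload[field], str):
            errors.append(f"{field}: must be a string")
    if errors:
        raise ValueError("; ".join(errors))
    return {"question": payload["question"], "chat_history": payload["chat_history"]}


print(validate_question_request({"question": "What is RAG?", "chat_history": ""}))
```

Note that chat_history has no default in the schema, so the same model used by /ask also requires it there. Sending an empty string is enough.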

5) data/uploads/ – Uploaded Files

This folder stores:

- User-uploaded PDF documents

Why it exists:

- Keeps raw documents separate from processed data

- Makes it easier to manage or delete files later

This folder is temporary storage, not intelligence.

6) vectorstore/ – Embedded Knowledge

This folder contains:

- Vector database files created by ChromaDB

Why this matters:

- This is where meaning is stored, not text

- Enables semantic search

- Persists document understanding across restarts

Deleting this folder resets the chatbot’s knowledge.
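
If you want to reset the knowledge base from a script instead of deleting the folder by hand, a minimal sketch could look like this. The function name reset_vectorstore is my own. I would stop the backend first, since the running process may still hold the database files open.

```python
import shutil
from pathlib import Path


def reset_vectorstore(vector_dir: Path) -> None:
    """Delete everything in the vector store folder and leave an empty folder behind."""
    if vector_dir.exists():
        shutil.rmtree(vector_dir)
    # Recreate the empty folder so the backend finds it on the next start.
    vector_dir.mkdir(parents=True, exist_ok=True)
```

For this project the call would be reset_vectorstore(Path("backend/vectorstore")), run from the ai_chatbot_project folder. Uploaded PDFs in data/uploads/ are untouched, so they can be ingested again.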

7) .env – Environment Configuration

Used to store:

- Configuration values

- Environment-specific settings

Even though we avoided paid APIs initially, keeping this file prepares the project for:

- Future API keys

- Deployment environments

- Secure configuration handling
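
As an illustration of how .env values could feed the backend, here is a sketch that reads settings from environment variables with the defaults used in this post. The variable names LLM_MODEL, EMBEDDING_MODEL, and RETRIEVER_K are hypothetical, as is the Settings class. The original code hard-codes these values.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    llm_model: str
    embedding_model: str
    retriever_k: int


def load_settings(env=os.environ) -> Settings:
    """Read settings from environment variables, falling back to this post's defaults."""
    return Settings(
        llm_model=env.get("LLM_MODEL", "phi"),
        embedding_model=env.get("EMBEDDING_MODEL", "nomic-embed-text"),
        retriever_k=int(env.get("RETRIEVER_K", "4")),
    )


print(load_settings({"LLM_MODEL": "phi", "RETRIEVER_K": "2"}))
```

Calling load_dotenv() from python-dotenv before load_settings() copies the entries of .env into the process environment, so the same function works for local files and deployment variables alike.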

At this point, we have a fully working backend that runs locally and understands documents.

In Part 2, we’ll first validate this backend by testing it in Swagger UI, and then move on to building a clean, chat-based frontend with Streamlit.


Note: Article content contains the views of the contributing authors and not Towards AI.