Generative AI — RAG Applications Embedding Libraries

Last Updated on January 6, 2026 by Editorial Team

Author(s): shalabh jain

Originally published on Towards AI.

Generative AI has been the talk of the town in recent years, and we are all expected to understand it. This article is part of a series that provides a comprehensive overview of the major components used in a Generative AI RAG application.

Let me set the context on what a RAG application is.

RAG stands for Retrieval-Augmented Generation. A RAG application is a type of AI system that combines information retrieval with large language models (LLMs) to produce more accurate, up-to-date, and trustworthy responses.

It addresses a fundamental problem with LLMs: they are trained on static data, they do not have access to private or proprietary documents, and they may hallucinate answers. A RAG application addresses these problems more cost-effectively than fine-tuning or retraining the LLM.

How a RAG application works

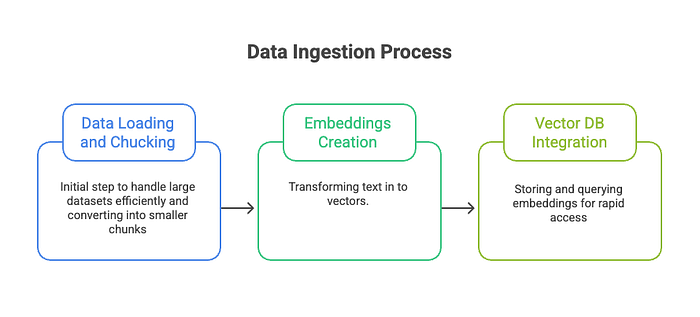

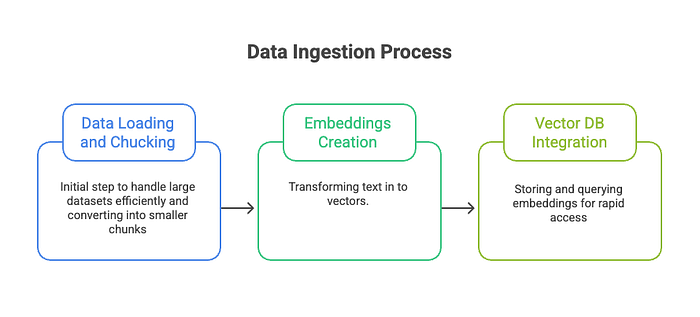

A RAG application consists of two separate pipelines:

- Data Ingestion

- Retrieval and Augmented Generation
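The two pipelines can be sketched in a few lines of Python. This is a minimal illustration, not a production design: `toy_embed` is a hypothetical stand-in that counts words instead of calling a real embedding model, the "vector store" is a plain list, and the final prompt would be sent to an LLM in a real application.

```python
import math
from collections import Counter

def toy_embed(text: str) -> Counter:
    """Hypothetical stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Pipeline 1: data ingestion (embed each chunk and store it with its vector).
def ingest(chunks: list[str]) -> list[tuple[str, Counter]]:
    return [(chunk, toy_embed(chunk)) for chunk in chunks]

# Pipeline 2: retrieval, then augmentation of the prompt with retrieved context.
def retrieve(index: list[tuple[str, Counter]], query: str, k: int = 1) -> list[str]:
    q = toy_embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]

def build_prompt(query: str, context: list[str]) -> str:
    joined = "\n".join(context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"

index = ingest([
    "refunds are processed within five business days",
    "the office is closed on public holidays",
])
question = "how long do refunds take"
prompt = build_prompt(question, retrieve(index, question))
print(prompt)
```

Swapping `toy_embed` for a real embedding model changes the quality of retrieval, but the shape of the two pipelines stays the same.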

In this article we will focus on embeddings and the related tooling, along with different scenarios for using them.

Let me first cover some definitions —

- Embedding model: a machine learning model that converts data (such as text, images, audio, or code) into numerical vectors (called embeddings) in a high-dimensional space, where semantic meaning is preserved.

- Embeddings: lists of numbers that represent the meaning of a piece of data. Inputs with similar meaning map to vectors that are close together, and inputs with different meaning map to vectors that are far apart.

- Dimensionality in an embedding model: the number of numerical values (features) in the vector that represents a piece of data. For example, a 1536-dimensional embedding means each input is represented by 1536 numbers. More dimensions give the vector more capacity to capture meaning, but higher dimensionality generally increases storage and compute cost.

- Sparse vectors vs. dense vectors: sparse vectors have mostly zero values, so only the non-zero features need to be stored, which makes them efficient for keyword matching (as in lexical search). Dense vectors have mostly non-zero values and capture richer semantic meaning, but require more computation.
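To make these definitions concrete, here is a small sketch. The 4-dimensional vectors are invented for illustration (real models produce hundreds or thousands of dimensions), and the sparse vector is stored as a hypothetical `{index: weight}` dictionary over an assumed 10,000-term vocabulary.

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: 1.0 means same direction, 0.0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Made-up dense embeddings: every position carries a (mostly non-zero) value.
cat = [0.9, 0.8, 0.1, 0.0]
kitten = [0.85, 0.75, 0.2, 0.05]
invoice = [0.0, 0.1, 0.9, 0.8]

sim_close = cosine(cat, kitten)   # similar meaning, vectors close together
sim_far = cosine(cat, invoice)    # different meaning, vectors far apart
print(f"cat vs kitten:  {sim_close:.2f}")
print(f"cat vs invoice: {sim_far:.2f}")

# A sparse vector: only the non-zero entries are stored.
vocab_size = 10_000
sparse_doc = {17: 1.0, 4021: 2.0, 9333: 1.0}
nonzero_fraction = len(sparse_doc) / vocab_size
print(f"non-zero entries: {nonzero_fraction:.2%} of the vocabulary")
```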

Embedding Use cases —

- Search (where results are ranked by relevance to a query string)

- Clustering (where text strings are grouped by similarity)

- Recommendations (where items with related text strings are recommended)

- Anomaly detection (where outliers with little relatedness are identified)

- Diversity measurement (where similarity distributions are analyzed)

- Classification (where text strings are classified by their most similar label)
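As one example from this list, classification by most similar label can be sketched as a nearest-label lookup. The label names and 3-dimensional vectors below are invented for illustration; in practice both the labels and the input text would be embedded with the same model.

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical embeddings of the label descriptions.
label_vectors = {
    "billing": [0.9, 0.1, 0.0],
    "technical": [0.1, 0.9, 0.1],
    "shipping": [0.0, 0.2, 0.9],
}

def classify(text_vector: list[float], labels: dict[str, list[float]]) -> str:
    """Return the label whose embedding is most similar to the text embedding."""
    return max(labels, key=lambda name: cosine(text_vector, labels[name]))

# Hypothetical embedding of an incoming support ticket.
ticket_vector = [0.8, 0.3, 0.1]
predicted = classify(ticket_vector, label_vectors)
print(predicted)
```

The other use cases follow the same pattern: search ranks documents by similarity to a query, and anomaly detection flags items whose similarity to everything else is low.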

Popular embedding models —

1. OpenAI models

- text-embedding-3-small: newer model, 1536 default dimensions; the dimensions can be reduced to lower cost when the highest performance is not required.

- text-embedding-3-large: newer model, 3072 default dimensions, which can also be reduced; higher semantic accuracy, but a higher cost per token.

- text-embedding-ada-002: older model with a fixed 1536 dimensions, superseded by the text-embedding-3 models.

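All of these models are API-based and billed per token. Cosine similarity is recommended. Because OpenAI embeddings are normalized to length 1, cosine similarity can be computed slightly faster using just a dot product, and cosine similarity and Euclidean distance produce identical rankings.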
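The relationship between these similarity measures can be checked on vectors normalized to length 1, which is how OpenAI documents its embeddings. The sketch below uses made-up 3-dimensional vectors rather than real API output. For unit vectors, cosine similarity equals the dot product, and squared Euclidean distance equals 2 minus twice the dot product, so all three measures rank documents the same way.

```python
import math

def normalize(v: list[float]) -> list[float]:
    """Scale a vector to unit length."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))

def cosine(a: list[float], b: list[float]) -> float:
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))

def euclidean(a: list[float], b: list[float]) -> float:
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

# Made-up vectors, normalized the way the API output is assumed to be.
query = normalize([0.2, 0.9, 0.4])
docs = {
    "a": normalize([0.1, 0.8, 0.5]),
    "b": normalize([0.9, 0.1, 0.2]),
    "c": normalize([0.3, 0.7, 0.1]),
}

# Higher is better for cosine and dot product; lower is better for distance.
by_cosine = sorted(docs, key=lambda k: cosine(query, docs[k]), reverse=True)
by_dot = sorted(docs, key=lambda k: dot(query, docs[k]), reverse=True)
by_euclid = sorted(docs, key=lambda k: euclidean(query, docs[k]))
print(by_cosine, by_dot, by_euclid)
```

This equivalence holds only for normalized vectors. With unnormalized embeddings, the dot product also rewards vector length and the rankings can differ.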

2. Google Cloud models

- gemini-embedding-001: newer model that combines the capabilities of the preceding models; supports dense vectors with up to 3072 output dimensions and a maximum sequence length of 2048 tokens.

- text-embedding-005: specialized in English and code tasks; supports up to 768 output dimensions and up to 2048 tokens.

- text-multilingual-embedding-002: specialized in multilingual tasks; supports up to 768 output dimensions and up to 2048 tokens.

Using the output_dimensionality parameter, users can control the size of the output embedding vector. Selecting a smaller output dimensionality can save storage space and increase computational efficiency for downstream applications, while sacrificing little in terms of quality. Google models are also API-based.
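The storage side of that trade-off is simple arithmetic. The sketch below assumes each value is stored as a 4-byte float32 and counts raw vector data only, ignoring any index overhead that a vector database adds. The corpus size of one million vectors is an arbitrary example.

```python
def index_size_bytes(num_vectors: int, dimensions: int, bytes_per_value: int = 4) -> int:
    """Raw storage for the vectors alone (assumes float32 by default)."""
    return num_vectors * dimensions * bytes_per_value

full = index_size_bytes(1_000_000, 3072)     # full-size output
reduced = index_size_bytes(1_000_000, 768)   # reduced output dimensionality

print(f"3072 dimensions: {full / 1e9:.2f} GB")
print(f" 768 dimensions: {reduced / 1e9:.2f} GB")
print(f"reduction factor: {full // reduced}x")
```

Under these assumptions, going from 3072 to 768 dimensions cuts raw vector storage by a factor of four, and similarity computations shrink proportionally.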

3. Amazon Titan models

- amazon.titan-embed-text-v2:0 has 1024 default dimensions and also supports 512 and 256. The maximum input is 8,192 tokens.

- Titan Multimodal Embeddings G1 has 1024 default dimensions and also supports 384 and 256. The maximum text input is 256 tokens. (The text-only Titan Embeddings G1 model instead outputs a fixed 1,536 dimensions.)

4. Sentence-Transformers (a.k.a. SBERT) models

- all-MiniLM-L6-v2: all-round model tuned for many use cases. 384 dimensions, 256 max tokens.

- all-mpnet-base-v2: all-round model tuned for many use cases. 768 dimensions, 384 max tokens.
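The max-token limits listed for each model matter during ingestion: input beyond the limit is typically truncated, so long documents need to be split into chunks first. A minimal sketch of overlapping chunking follows. It approximates tokens by whitespace-separated words, which is an assumption: a real tokenizer usually produces more tokens than words, so in practice you would count with the model's own tokenizer or leave headroom. The function name and the overlap of 32 are illustrative choices.

```python
def chunk_words(text: str, max_tokens: int = 256, overlap: int = 32) -> list[str]:
    """Split text into overlapping windows of at most max_tokens words."""
    if overlap >= max_tokens:
        raise ValueError("overlap must be smaller than max_tokens")
    words = text.split()
    step = max_tokens - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_tokens]))
        # Stop once the current window has reached the end of the text.
        if start + max_tokens >= len(words):
            break
    return chunks

# A synthetic 600-word document for demonstration.
document = " ".join(f"word{i}" for i in range(600))
chunks = chunk_words(document)
print(len(chunks), [len(c.split()) for c in chunks])
```

The overlap keeps sentences that straddle a boundary retrievable from at least one chunk, at the cost of embedding some text twice.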

Usage scenarios —

For organisations building enterprise-grade RAG applications where accuracy and semantic depth are critical, models such as OpenAI’s text-embedding-3-large or Google’s gemini-embedding-001 are strong choices. These models provide high-dimensional embeddings and excellent semantic fidelity, making them well suited for question answering, knowledge assistants, and compliance or policy-driven use cases. However, their higher cost means they are best used when retrieval quality directly impacts business outcomes.

In scenarios where cost efficiency and scalability are more important than maximum semantic precision — such as indexing millions of documents — models like OpenAI’s text-embedding-3-small or Amazon Titan embeddings offer a practical compromise. These models provide adjustable dimensionality, allowing teams to reduce storage and computation costs while still maintaining good retrieval performance. They are particularly effective for large-scale document search and recommendation systems.

For teams already invested in a specific cloud ecosystem, it is generally advisable to use the native embedding models provided by that platform. On Google Cloud, Gemini and text-embedding models integrate seamlessly with Vertex AI Vector Search. On AWS, Titan embeddings work well with Bedrock and OpenSearch. This alignment simplifies deployment, monitoring, and scaling while minimising operational overhead.

In regulated environments, offline deployments, or cases where full control over data and infrastructure is required, open-source Sentence-Transformer models such as all-mpnet-base-v2 or all-MiniLM-L6-v2 are excellent options. While these models may not always match the top-tier semantic performance of proprietary APIs, they provide strong results at zero per-token cost and are ideal for experimentation, internal tools, and privacy-sensitive workloads.

Overall, the choice of an embedding model should be driven by retrieval quality requirements, cost constraints, cloud alignment, and operational control, rather than dimensionality alone. Selecting the right embedding model is a foundational decision that directly determines the effectiveness, reliability, and scalability of a RAG application.

