From Questions to Insights: Data Analysis with LangChain’s Built-In Tools

Last Updated on February 6, 2026 by Editorial Team

Author(s): Vahe Sahakyan

Originally published on Towards AI.

In the first two articles of this series, we established why tools are essential for agentic systems and how those tools are constructed and orchestrated inside agents.

What we deliberately avoided until now is the environment where those ideas are tested most brutally: real data.

Data analysis exposes the hard limits of language models more clearly than almost any other task. Numbers must be exact. Filters must be precise. Joins, aggregations, and transformations must be correct — not plausible. No amount of prompting can make an LLM reliably “reason” its way through a DataFrame.

This is where tools stop being optional and start becoming foundational.

In this final article, we shift from building tools to using mature ones. We explore how LangChain’s built-in tools — especially create_pandas_dataframe_agent — allow large language models to interact directly with real tabular data, execute deterministic computations, and translate results back into natural language insights.

The focus is not on pandas syntax or visualization tricks. It is on how agentic systems bridge the gap between language and data, and why built-in tools are often the most reliable way to do so.

Why Built-In Tools Matter When Agents Work with Data

In the previous articles, we constructed custom tools and integrated them into agents to understand how tool interfaces work and why careful design matters. That exercise is essential for learning. However, in most real-world systems — especially those that operate on data — agents do not need to reinvent every capability from scratch.

LangChain provides a rich ecosystem of built-in tools and toolkits, each designed for a specific class of tasks such as search, code execution, data analysis, web interaction, productivity, and financial queries. These ready-made tools allow agents to interact with external systems, perform calculations, analyze data, retrieve information, and automate workflows without requiring custom integration code for each capability.

Built-in tools are particularly important in data-centric scenarios. They enable hybrid workflows in which an agent can:

- retrieve information (search),

- analyze it (Pandas or Python REPL),

- take action (Requests or Office365),

- and explain the results using LLM-based reasoning.

By combining custom tools with LangChain’s built-ins, agents can bridge reasoning, deterministic computation, and real-world interaction in a way that is both practical and reliable. To keep the focus on core concepts, this article highlights only a small subset of commonly used built-in tools, starting with LangChain’s Pandas-based data analysis agents.

LLMs and Data Analysis: From Language to Computation

Large language models are often described as reasoning engines or natural-language interfaces, but one of their most practical roles is enabling people to analyze data through conversation. Instead of writing SQL queries, crafting pandas expressions, or navigating BI dashboards, users can ask questions in plain language and let the LLM translate intent into structured operations.

This shift is not merely a convenience feature. It reflects a convergence between two traditionally separate worlds:

- Language, where intent is expressed loosely and contextually

- Data, where answers require precise queries, filters, aggregations, and transformations

LLMs sit at this intersection. They map human questions into operational steps, supervise or generate computations, interpret results, and return insights in a form that feels natural.

Traditional data analysis requires several layers of skill: identifying meaningful questions, translating those questions into code, executing transformations, debugging errors, and interpreting results. LLMs reduce friction in this process in two ways. First, they understand questions expressed in natural language, even when phrasing is ambiguous or incomplete. Second, they generate structured operations to filter, aggregate, and manipulate data — often producing the same logical steps a human analyst would write manually.

However, this capability only becomes reliable when LLMs are paired with execution tools. On their own, language models cannot perform numeric computation deterministically; they approximate and may hallucinate. When combined with tools such as pandas, a Python REPL, or SQL engines, LLMs move beyond static text generation and become data assistants capable of running real computations, verifying results, and narrating insights.

Under the surface, this process follows a consistent loop whenever an LLM receives a question about data. The model first interprets intent, determining whether the user is asking for a statistic, a comparison, a trend, a subset of data, or a visualization. It then plans operations, implicitly constructing a sequence of steps such as filtering rows, grouping records, computing aggregates, or generating plots. Execution happens inside tools, where pandas expressions, Python code, or SQL queries are run deterministically. Finally, the model interprets and presents results, translating raw outputs into summaries, observations, patterns, and explanations.

The result is a recurring cycle: question → computation → insight → follow-up questions. This loop mirrors agentic reasoning patterns, but applied specifically to data workflows, where correctness depends on precise execution rather than plausible reasoning.
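The loop described above can be sketched without any LLM at all. In the minimal example below, `plan`, `execute`, and `narrate` are hypothetical stand-ins for the steps a real agent would delegate to the model and its tools, and the DataFrame values are made up for illustration.

```python
import pandas as pd

# Tiny stand-in for a real table (values are invented for illustration).
df = pd.DataFrame({
    "day": ["Sat", "Sat", "Sun", "Sun"],
    "total_bill": [20.0, 30.0, 40.0, 50.0],
})

def plan(question: str) -> str:
    """Hypothetical planner: a real agent would ask the LLM to write this code."""
    if "highest average" in question:
        return "df.groupby('day')['total_bill'].mean().idxmax()"
    raise ValueError("question not covered by this sketch")

def execute(code: str, frame: pd.DataFrame):
    """Deterministic step: evaluate the planned expression against the data."""
    # eval is used only to mimic a REPL tool; never run untrusted code this way.
    return eval(code, {"df": frame})

def narrate(question: str, result) -> str:
    """Hypothetical narrator: a real agent would let the LLM phrase the answer."""
    return f"Q: {question} A: {result}"

question = "Which day has the highest average total bill?"
answer = narrate(question, execute(plan(question), df))
print(answer)
```

The point of the split is that only `execute` touches the numbers; the other two steps handle language.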

Capabilities and Limits of LLM-Powered Data Analysis

When paired with execution tools, large language models can support a wide range of data-analysis workflows. Their strength does not come from performing computation themselves, but from coordinating intent interpretation, operation planning, and deterministic execution through external tools.

At a high level, LLM-powered data agents are particularly effective in four areas.

Exploratory analysis.

Agents can inspect datasets, describe columns and data types, compute basic statistics, identify missing values, and surface potential anomalies. These tasks are well suited to natural-language interaction because they involve many small, contextual questions rather than a single complex query.

Data transformation.

Agents can generate structured operations to filter, sort, group, aggregate, and join data. Instead of manually writing pandas or SQL expressions, users can describe transformations in plain language while execution happens deterministically inside tools.

Analytical reasoning.

Within the boundaries of tool-backed execution, agents can perform comparisons, correlations, and simple statistical analyses. The key constraint is that all numeric computation and data manipulation must be delegated to execution tools rather than inferred by the model.

Insight narration.

Once results are computed, LLMs excel at translating raw outputs into explanations. They can summarize trends, compare groups, interpret distributions, and communicate findings clearly in natural language.

Together, these capabilities make LLM-powered agents effective assistants for exploratory and analytical work — provided that computation and execution remain grounded in reliable tools rather than probabilistic reasoning alone. When tools are removed from the loop, correctness degrades quickly, especially as queries become more complex.
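To make the first three capability areas concrete, here is a small sketch of the kind of pandas operations an agent would typically generate for them. The DataFrame is a made-up stand-in, not the dataset used later in the article.

```python
import pandas as pd

# Invented sample data with one missing value.
df = pd.DataFrame({
    "total_bill": [10.0, 20.0, None, 40.0],
    "tip": [1.0, 3.0, 2.0, 6.0],
    "time": ["Lunch", "Dinner", "Dinner", "Dinner"],
})

# Exploratory analysis: column types and missing values.
dtypes = df.dtypes.astype(str).to_dict()
missing = df.isna().sum().to_dict()

# Data transformation: filter rows, then group and aggregate.
dinner_avg_tip = df[df["time"] == "Dinner"].groupby("time")["tip"].mean()

# Analytical reasoning: a computed statistic rather than a guessed one.
# Rows with a missing total_bill produce NaN and are skipped by mean().
avg_tip_share = (df["tip"] / df["total_bill"]).mean()

print(missing)
print(dinner_avg_tip)
print(round(avg_tip_share, 4))
```

Insight narration is then a matter of handing these computed values back to the model to describe.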

LangChain’s Pandas Agent: Architecture and Setup

LangChain provides a practical interface to connect LLM reasoning with real execution environments. In this section, the star is create_pandas_dataframe_agent.

This function creates an agent capable of inspecting a DataFrame, running pandas operations, generating explanations, and building multi-step transformations. It removes the need to write code by hand while still running real computations. Internally, the agent uses the LLM for reasoning and a Python REPL tool for code execution. Because that REPL runs model-generated code in the current Python process, the function requires an explicit opt-in (allow_dangerous_code=True). This makes it best suited to lightweight analytics or notebook-based exploration in a trusted or sandboxed environment.

Setup and Dependencies

To run the examples in this article, install the libraries below. They cover: DataFrame operations (pandas), a built-in dataset (seaborn), LangChain core + integrations, and environment variable loading (python-dotenv).

pip install pandas seaborn langchain langchain-openai langchain-experimental langchain-community python-dotenv

Initialize the Model (gpt-4.1-nano)

In the examples, we will use OpenAI’s gpt-4.1-nano model. The API key is loaded from a .env file.

from langchain_openai import ChatOpenAI

from dotenv import load_dotenv

import os

load_dotenv()

model = ChatOpenAI(

model="gpt-4.1-nano",

api_key=os.getenv("OPENAI_API_KEY"),

)

Load the Dataset

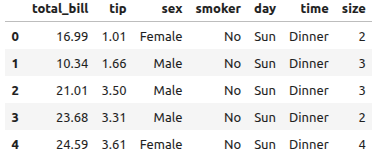

To keep the examples simple and reproducible, we use the built-in tips dataset from Seaborn. Each row represents a restaurant bill, including the total bill amount, the tip, the party size, and contextual fields such as day and time.

import seaborn as sns

from langchain_experimental.agents import create_pandas_dataframe_agent

df = sns.load_dataset("tips")

df.head()

The tips DataFrame includes:

- total_bill: amount of the bill

- tip: gratuity given by the customer

- sex, smoker, day, time, size: contextual details about each group

We will use this dataset for single-DataFrame analysis before moving to multi-DataFrame workflows.

Single-DataFrame Analysis with create_pandas_dataframe_agent

With the model initialized and the dataset loaded, we can now create a Pandas agent. The create_pandas_dataframe_agent function builds an agent that answers natural-language questions by generating Python code and executing it through a controlled Python REPL.

Create a Basic Pandas Agent

We start with a minimal configuration:

agent = create_pandas_dataframe_agent(

model,

df,

agent_type="tool-calling",

allow_dangerous_code=True, # Allow execution of Python code (use with caution)

verbose=True, # Enable verbose logging for debugging

)

Simple Queries

The following examples show how the agent can answer typical exploratory data-analysis questions without writing any Pandas code manually:

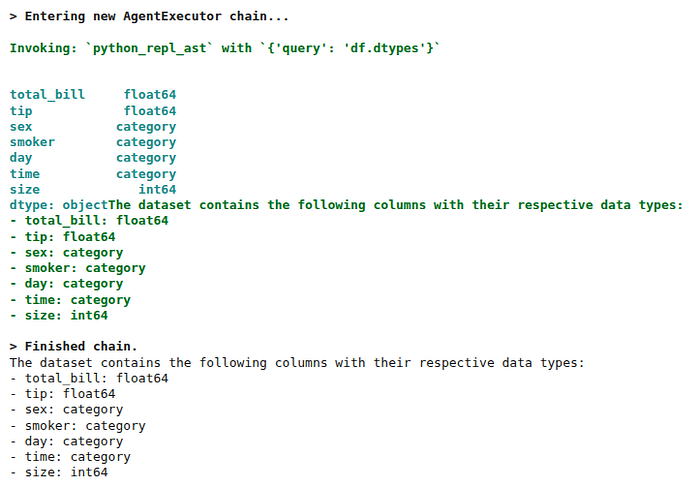

response = agent.invoke("What are the columns in the dataset and their data types?")

print(response["output"])

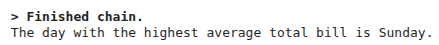

response = agent.invoke("Which day has the highest average total bill?")

print(response["output"])

Conditional Queries and Group Comparisons

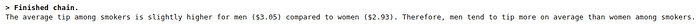

Beyond descriptive questions, the agent can perform conditional analysis — such as filtering subsets of the DataFrame and comparing groups. In the next example, we focus only on smokers and compare the average tip between male and female customers:

response = agent.invoke(

"Among smokers, compare the average tip for men and women. "

"Explain which group tips more on average."

)

print(response["output"])
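For reference, the filter-then-group logic behind this question is short in pandas. The sketch below uses a small invented table with the same column names, so the numbers are not those of the tips dataset.

```python
import pandas as pd

# Invented rows that mirror the relevant tips columns.
df = pd.DataFrame({
    "sex": ["Male", "Female", "Male", "Female", "Male"],
    "smoker": ["Yes", "Yes", "No", "Yes", "Yes"],
    "tip": [3.0, 2.0, 5.0, 4.0, 1.0],
})

# Step 1: keep only smokers.
smokers = df[df["smoker"] == "Yes"]

# Step 2: average tip per group.
avg_tip_by_sex = smokers.groupby("sex")["tip"].mean()

# Step 3: which group tips more on average.
higher = avg_tip_by_sex.idxmax()

print(avg_tip_by_sex)
print(higher)
```

With `verbose=True`, the agent's trace should show code of roughly this shape, which makes it easy to check that the filter and the grouping match the question. In the real dataset these columns are categorical, so pandas may warn about the `observed` argument of `groupby`.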

Limiting Execution Time and Iterations

Sometimes an agent may generate complex Python code or enter a reasoning loop that takes too long. To prevent this, you can control:

- max_execution_time — the maximum number of seconds the agent is allowed to run

- max_iterations — the maximum number of internal reasoning/tool-calling steps

These safeguards help maintain predictable resource usage, especially when running agents inside notebooks or production environments.

agent = create_pandas_dataframe_agent(

model,

df,

agent_type="tool-calling",

max_execution_time=10, # Limit total execution time to 10 seconds

max_iterations=5, # Limit internal reasoning/tool steps

allow_dangerous_code=True,

verbose=True,

)
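The idea behind these two limits can be illustrated with a plain loop. This is a simplified sketch, not LangChain's implementation; `run_with_limits` and its return shape are hypothetical, and only the parameter names are borrowed from the agent.

```python
import time

def run_with_limits(step, max_iterations=5, max_execution_time=10.0):
    """Call step(i) until it returns an answer or one of the budgets runs out."""
    start = time.monotonic()
    for i in range(1, max_iterations + 1):
        # Check the wall-clock budget before starting another step.
        if time.monotonic() - start > max_execution_time:
            return {"output": None, "stopped": "time limit", "iterations": i - 1}
        answer = step(i)
        if answer is not None:
            return {"output": answer, "stopped": None, "iterations": i}
    return {"output": None, "stopped": "iteration limit", "iterations": max_iterations}

# A step that never produces an answer stands in for an agent stuck in a loop.
stuck = run_with_limits(lambda i: None, max_iterations=3)

# A step that succeeds on its second attempt.
ok = run_with_limits(lambda i: "done" if i == 2 else None)

print(stuck)
print(ok)
```

Note that a check like this only fires between steps, so a single long-running step can still overshoot the time budget.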

Visualization

Since the Pandas agent uses a Python REPL under the hood, it can generate simple visualizations using Matplotlib or Seaborn. This makes it possible to request plots directly in natural language.

Note: Depending on your environment, plots may appear inline or may require enabling %matplotlib inline.

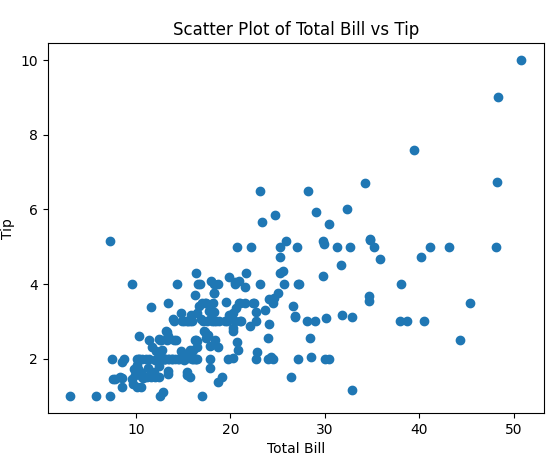

response = agent.invoke(

"Create a scatter plot of total_bill vs tip and describe any pattern you see."

)

print(response["output"])

> Finished chain.

Based on the typical pattern observed in scatter plots of total bill versus tip, there is generally a positive correlation. This means that as the total bill increases, the tip tends to increase as well. However, there is also variability, with some smaller bills receiving relatively higher tips and some larger bills receiving lower tips.

Customizing Agent Behavior with Prompts

You can influence the agent’s reasoning style using:

- prefix — system-level instructions describing the agent’s analytical role

- suffix — instructions shaping how the final answer should be formatted

Below we rebuild the agent with customized prompting to encourage concise, structured responses:

reasoning_agent = create_pandas_dataframe_agent(

model,

df,

agent_type="tool-calling",

prefix="You are a helpful data analyst. Think step-by-step and justify analytical choices.",

suffix="Present the final answer clearly and concisely.",

allow_dangerous_code=True,

verbose=True,

)

response = reasoning_agent.invoke("Give a short summary of the dataset structure and key statistics.")

print(response["output"])

> Finished chain.

The dataset contains 244 entries and includes 7 columns with the following structure:

- `total_bill`: Continuous numerical data (float), representing the total bill amount. The mean is approximately 19.79, with a minimum of 3.07 and a maximum of 50.81.

- `tip`: Continuous numerical data (float), representing the tip amount. The mean is approximately 3.00, with a minimum of 1.00 and a maximum of 10.00.

- `sex`: Categorical data with two categories, "Male" and "Female". "Male" is the most frequent, appearing 157 times.

- `smoker`: Categorical data with two categories, "Yes" and "No". "No" is the most frequent, with 151 occurrences.

- `day`: Categorical data with four categories ("Sat", "Sun", etc.), with "Sat" being the most common.

- `time`: Categorical data with two categories ("Dinner" and "Lunch"). "Dinner" is more frequent.

- `size`: Integer data indicating the number of people, ranging from 1 to 6, with an average size of approximately 2.57.

The dataset is primarily composed of categorical variables, with some continuous numerical data, and is suitable for analyses involving descriptive statistics and categorical comparisons.

Extending Pandas Agents with Custom Tools

While create_pandas_dataframe_agent already provides strong built-in capabilities through the Python REPL, its functionality can be extended by supplying custom tools. This is useful in cases where certain operations should be handled by a dedicated, deterministic function rather than dynamically generated code.

A custom tool is appropriate when:

- a specific operation is not easily expressed in a single natural-language instruction,

- consistent and deterministic behavior is required (for example, a fixed formula or calculation),

- or the functionality is simpler to implement directly in Python than through generated pandas code.

Below is an example of a custom tool that computes the Pearson correlation between two numeric columns in the active DataFrame. By defining this logic explicitly, the agent can invoke a dedicated function instead of generating its own code for the task.

from langchain_core.tools import tool

@tool
def column_correlation(column_a: str, column_b: str) -> str:
    """
    Compute the Pearson correlation between two numeric columns
    in the DataFrame `df` loaded earlier (read from module scope).
    Returns a natural-language explanation.
    """
    # Guard against column names the model may have misspelled
    if column_a not in df.columns or column_b not in df.columns:
        return f"Columns not found: {column_a}, {column_b}"
    # Series.corr uses the Pearson method by default
    corr = df[column_a].corr(df[column_b])
    return f"The correlation between {column_a} and {column_b} is {corr:.3f}."

Once the tool is defined, it can be injected into the Pandas agent using the extra_tools parameter:

agent_with_tool = create_pandas_dataframe_agent(

model,

df,

agent_type="tool-calling",

allow_dangerous_code=True, # Allows Python REPL execution

extra_tools=[column_correlation], # Inject custom tool

verbose=True,

)

The agent can then decide when to invoke this tool as part of its reasoning process:

response = agent_with_tool.invoke(

"Use the correlation tool to compute the correlation between total_bill and tip."

)

print(response["output"])

This pattern illustrates how built-in data analysis agents can be extended with custom, purpose-specific tools, combining flexible LLM reasoning with deterministic Python logic where precision matters.
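One possible refinement, sketched below under stated assumptions, is to bind the DataFrame explicitly instead of reading a module-level `df`, and to reject non-numeric columns before computing anything. The factory name `make_correlation_fn` is hypothetical; the returned function is plain Python and would still need to be wrapped as a tool before being passed to `extra_tools`.

```python
import pandas as pd
from pandas.api.types import is_numeric_dtype

def make_correlation_fn(frame: pd.DataFrame):
    """Return a correlation function bound to one specific DataFrame."""
    def column_correlation(column_a: str, column_b: str) -> str:
        requested = (column_a, column_b)
        # Report exactly which columns are missing.
        missing = [c for c in requested if c not in frame.columns]
        if missing:
            return f"Columns not found: {', '.join(missing)}"
        # Pearson correlation is only meaningful for numeric columns.
        non_numeric = [c for c in requested if not is_numeric_dtype(frame[c])]
        if non_numeric:
            return f"Columns are not numeric: {', '.join(non_numeric)}"
        corr = frame[column_a].corr(frame[column_b])
        return f"The correlation between {column_a} and {column_b} is {corr:.3f}."
    return column_correlation

# Invented data: y is exactly 2 * x, so the correlation is 1.
demo = pd.DataFrame({"x": [1, 2, 3, 4], "y": [2, 4, 6, 8], "label": list("abcd")})
correlate = make_correlation_fn(demo)

print(correlate("x", "y"))
print(correlate("x", "label"))
print(correlate("x", "z"))
```

Returning short, specific error strings gives the model something it can act on, such as retrying with a corrected column name.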

Reasoning Across Multiple DataFrames

So far, we passed a single DataFrame to create_pandas_dataframe_agent. In many real-world scenarios, however, analysis spans multiple related tables. In this example, we will:

- use the built-in tips dataset from Seaborn (restaurant bills and tips),

- derive a second DataFrame with daily revenue,

- define a third DataFrame encoding a hypothetical discount policy by day,

- and build a pandas agent that can reason across all three tables to answer business questions such as:

“If we introduce different discounts by weekday, what happens to total revenue?”

Create the Three DataFrames

import pandas as pd  # used for the discount policy table below

# 1) Base table: built-in "tips" dataset
df_tips = sns.load_dataset("tips")

# 2) Derived table: daily revenue summary
df_daily_revenue = (
    df_tips
    .groupby("day", as_index=False)[["total_bill", "tip"]]
    .sum()
    .rename(columns={"total_bill": "total_revenue", "tip": "total_tip"})
)

df_daily_revenue gives an overview per weekday:

- total_revenue: sum of all total_bill values for that day

- total_tip: sum of all tip values for that day

# 3) Discount policy: hypothetical weekday discounts

df_discount_policy = pd.DataFrame(

{

"day": ["Thur", "Fri", "Sat", "Sun"],

"discount_pct": [0.00, 0.05, 0.10, 0.15], # e.g. 10% off on Sat, 15% on Sun

}

)

df_discount_policy encodes a simple promotion plan:

- no discount on Thursday,

- 5% discount on Friday,

- 10% discount on Saturday,

- 15% discount on Sunday.

We will ask the agent to combine df_tips, df_daily_revenue, and df_discount_policy to estimate how these promotions would affect revenue.

Building a Multi-DataFrame Agent

create_pandas_dataframe_agent also accepts a list of DataFrames instead of a single one. Inside the REPL they are exposed as df1, df2, df3, and so on, in list order. We also use prefix and suffix to make the agent:

- think step-by-step,

- explain which tables it uses,

- and return a clear final answer.

# Reuse the same LLM `model` defined earlier

multi_df_agent = create_pandas_dataframe_agent(

model,

[df_tips, df_daily_revenue, df_discount_policy],

agent_type="tool-calling",

allow_dangerous_code=True,

verbose=True,

prefix=(

"You are a data analyst working for a restaurant chain.\n"

"In Python, you have access to these DataFrames:\n"

"- df1: tips transactions (original tips dataset)\n"

"- df2: daily revenue summary (columns: day, total_revenue, total_tip)\n"

"- df3: discount policy (columns: day, discount_pct)\n"

"Always use df1/df2/df3 exactly as named above."

),

suffix="Finish with a short business-oriented conclusion.",

)

Example 1 — Estimating Revenue After Discounts

In this example, we ask the agent to join the daily revenue with the discount policy, compute discounted revenue, compare original vs discounted totals, and identify the day with the largest revenue loss.

query = """

Using the available DataFrames:

1. Join the daily revenue with the discount policy on 'day'.

2. For each day, compute a new column 'discounted_revenue'

that applies the discount percentage to 'total_revenue'.

3. Summarize original vs discounted revenue per day,

and identify which day loses the most revenue in absolute terms.

Explain the steps briefly before showing the result.

"""

response = multi_df_agent.invoke(query)

print(response["output"])

> Finished chain.

The analysis shows that on Sunday, the total revenue was $1627.16, which dropped to approximately $1624.72 after applying the discount policy. This results in a revenue loss of about $2.44, making Sunday the day with the highest absolute revenue loss due to discounts. This insight can help the restaurant chain evaluate the impact of discounts on revenue, especially on weekends.
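Because the agent's narrative is only as good as the code it ran, it is worth reproducing the join by hand as a cross-check. The sketch below uses invented revenue figures with the article's discount percentages; the variable names are illustrative.

```python
import pandas as pd

# Hypothetical daily revenue (not the real tips totals).
daily = pd.DataFrame({
    "day": ["Thur", "Fri", "Sat", "Sun"],
    "total_revenue": [1000.0, 300.0, 1800.0, 1600.0],
})

# Discount policy from the article, stored as fractions (0.15 means 15%).
policy = pd.DataFrame({
    "day": ["Thur", "Fri", "Sat", "Sun"],
    "discount_pct": [0.00, 0.05, 0.10, 0.15],
})

# Step 1: join on 'day'.
merged = daily.merge(policy, on="day", how="left")

# Step 2: apply the fractional discount to each day's revenue.
merged["discounted_revenue"] = merged["total_revenue"] * (1 - merged["discount_pct"])
merged["revenue_lost"] = merged["total_revenue"] - merged["discounted_revenue"]

# Step 3: day with the largest absolute loss.
worst_day = merged.loc[merged["revenue_lost"].idxmax(), "day"]

print(merged)
print(worst_day)
```

Applied to the Sunday total of $1627.16 quoted above, a 15% discount is 0.15 × 1627.16 ≈ $244.07, roughly a hundred times the $2.44 in the agent's answer. One plausible explanation is that the generated code divided discount_pct by 100 even though the values are already fractions; the verbose trace would confirm this. Stating the unit of discount_pct in the prefix is a simple way to reduce that ambiguity.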

Example 2 — Focusing on a Subset of Days

Now we reuse the same agent for a more targeted “what-if” question:

If we keep discounts only on Saturday and remove all other discounts, how does total revenue compare to the full promotion plan?

query = """

Assume the current discount policy applies to all days in the discount policy table (df3).

Now imagine a new policy where:

- Only Saturday keeps its discount.

- Thursday, Friday and Sunday have 0% discount.

Compare total revenue under the original policy vs this new policy.

Explain which policy is better for total revenue and by how much.

"""

response = multi_df_agent.invoke(query)

print(response["output"])

> Finished chain.

The total revenue under the original policy is approximately $4,827.77. When the discount policy is changed so that only Saturday retains its discount, while Thursday, Friday, and Sunday have 0% discounts, the total revenue slightly decreases to approximately $4,827.11.

This indicates that the original policy, which applies discounts on all days, is marginally more beneficial for total revenue by roughly $0.66. Therefore, maintaining the original discount policy across all days appears to be better for maximizing revenue.
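The same what-if comparison can be checked directly. The sketch below again uses invented revenue figures and assumes demand does not change with the discount; `total_after_discount` is a hypothetical helper.

```python
import pandas as pd

# Hypothetical daily revenue, indexed by day.
revenue = pd.Series({"Thur": 1000.0, "Fri": 300.0, "Sat": 1800.0, "Sun": 1600.0})

# Full promotion plan from the article, as fractions.
full_plan = pd.Series({"Thur": 0.00, "Fri": 0.05, "Sat": 0.10, "Sun": 0.15})

# Saturday-only variant: keep Saturday's discount, zero out the rest.
sat_only = full_plan.where(full_plan.index == "Sat", 0.0)

def total_after_discount(rev: pd.Series, discounts: pd.Series) -> float:
    """Sum of revenue after applying each day's fractional discount."""
    # Series arithmetic aligns on the day labels.
    return float((rev * (1 - discounts)).sum())

total_full = total_after_discount(revenue, full_plan)
total_sat_only = total_after_discount(revenue, sat_only)
difference = total_sat_only - total_full

print(total_full, total_sat_only, difference)
```

With demand held fixed, removing discounts can only raise revenue, so the Saturday-only policy should always come out ahead of the full plan. The agent's conclusion above points the other way, which suggests the generated code is worth re-checking in the verbose trace before the answer is trusted.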

Conclusion

LangChain provides many other built-in tools for search, code execution, web interaction, document processing, and workflow automation. While this article focused on data analysis as a concrete example, the same agentic patterns apply across these domains.

In this article, we focused on how built-in LangChain tools can be used to perform real data analysis with large language models. Using create_pandas_dataframe_agent as a concrete example, we showed how an agent can inspect DataFrames, generate and execute pandas operations, reason across multiple steps, and translate results into clear natural-language explanations. We also demonstrated how this approach extends from simple exploratory queries to conditional analysis, visualization, custom tool integration, and reasoning across multiple related tables.

The key takeaway is that effective data analysis with LLMs depends on the combination of natural-language reasoning and deterministic execution through tools. Rather than relying on prompting alone, built-in tools provide a structured and reliable way to ground analysis in real computations.

Across the three articles in this series, we moved step by step from principles to practice. The first article explained why tools are essential for agentic systems and why prompting alone is not enough. The second article showed how tools are created, exposed, and orchestrated inside agents using LangChain and LangGraph. This final article demonstrated how those ideas apply in a data-centric setting, where precision and correctness are critical.

Taken together, the series highlights a consistent theme: agents emerge from the interaction between reasoning models, well-designed tools, and clear execution boundaries. Built-in tools play a central role in making that interaction practical, scalable, and reliable in real-world applications.


Published via Towards AI
