Evolution of Vision Language Models and Multi-Modal Learning

Last Updated on January 5, 2026 by Editorial Team

Author(s): Bibek Poudel

Originally published on Towards AI.

References

- Exploring the Frontier of Vision-Language Models: A Survey of Current Methodologies and Future Directions

- Visual Instruction Tuning

- Qwen2-VL Technical Report

Vision Language Models

The advent of large language models (LLMs) has profoundly changed the AI landscape. However, these generative models have a notable limitation: they focus mainly on generating text. To address this, researchers have worked to add multi-modal capabilities to LLMs, resulting in the development of Vision-Language Models (VLMs). These models handle tasks ranging from image captioning and visual question answering to text extraction and multi-modal generation.

Types of Vision-Language Models

Vision-Language Models fall into three broad categories: models dedicated to vision-language understanding, like CLIP and SigLIP; models that process multi-modal inputs to generate uni-modal (text) outputs, like Moondream2 and SmolVLM; and models that both accept and produce multi-modal data, like GPT-4o and Gemini 2.5 Pro.

Motivation for VLM Development

Human intelligence has an innate ability to seamlessly unify diverse sensory inputs to navigate the complexities of the real world. In a similar fashion, generative AI needs to embrace multi-modal data processing to emulate human-like cognitive ability. Multi-modal data gives generative models contextual awareness and adaptability in real-world scenarios.

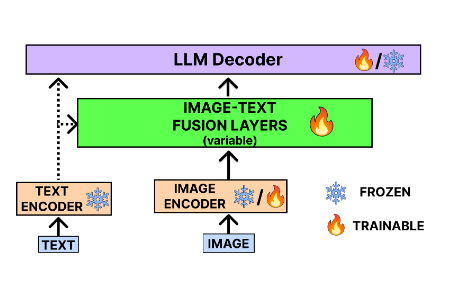

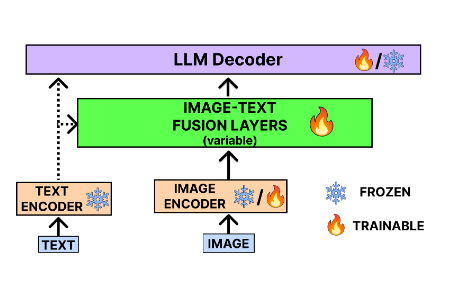

Basic VLM Architecture

A VLM's architecture mainly consists of a dedicated image encoder and text encoder that generate embeddings. The embeddings from the different modalities are then fused using a multi-modal projector. Finally, the fused representation is passed through a language model to generate the final, visually aware response.
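The pipeline described above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not any particular model's implementation: the encoders are replaced by random vectors, the projector is a single linear layer (as in LLaVA-style designs), and fusion is a simple concatenation of projected visual tokens with text token embeddings. All names (`linear`, `init_linear`, `build_llm_input`) and dimensions are hypothetical.

```python
import random

def linear(x, weight, bias):
    """Apply y = W x + b to one vector (weight is a list of output rows)."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weight, bias)]

def init_linear(in_dim, out_dim, rng):
    """Create a small random projection; stands in for a trained projector."""
    weight = [[rng.uniform(-0.1, 0.1) for _ in range(in_dim)] for _ in range(out_dim)]
    bias = [0.0] * out_dim
    return weight, bias

def build_llm_input(patch_embeddings, text_embeddings, projector):
    """Project image patches into the text embedding space, then prepend them."""
    weight, bias = projector
    visual_tokens = [linear(p, weight, bias) for p in patch_embeddings]
    return visual_tokens + text_embeddings

rng = random.Random(0)
VISION_DIM, TEXT_DIM = 8, 4  # assumed toy sizes

# Placeholders for encoder outputs: 6 image patches and 3 text tokens.
patches = [[rng.gauss(0, 1) for _ in range(VISION_DIM)] for _ in range(6)]
text = [[rng.gauss(0, 1) for _ in range(TEXT_DIM)] for _ in range(3)]

projector = init_linear(VISION_DIM, TEXT_DIM, rng)
sequence = build_llm_input(patches, text, projector)
print(len(sequence), len(sequence[0]))  # 9 tokens, each of size TEXT_DIM
```

The language model then consumes `sequence` like any other token sequence, which is why the projector's output size has to match the language model's embedding size.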


Pioneer to Vision Language Understanding, CLIP

CLIP stands for Contrastive Language-Image Pre-training, a pioneering VLM developed by OpenAI in 2021. It consists of a neural network that learns visual concepts through natural language supervision. It also has the zero-shot ability to identify visual categories on diverse benchmarks, an ability that resulted from scaling a basic contrastive pre-training task. For example, zero-shot CLIP matched the ImageNet accuracy of the original supervised ResNet-50 without training on any of that dataset's labeled examples.
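To make the contrastive pre-training task concrete, here is a minimal sketch of a symmetric image-text contrastive loss and of zero-shot classification by similarity. It assumes embeddings are already computed and uses plain Python lists; the function names are hypothetical, and the temperature of 0.07 is the initial value reported for CLIP (where it is a learned parameter rather than a constant).

```python
import math

def l2_normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def similarity_matrix(image_embs, text_embs, temperature):
    """Cosine similarity of every image with every text, scaled by temperature."""
    images = [l2_normalize(v) for v in image_embs]
    texts = [l2_normalize(v) for v in text_embs]
    return [[sum(a * b for a, b in zip(i, t)) / temperature for t in texts]
            for i in images]

def cross_entropy(logits_row, target):
    """Numerically stable -log softmax(logits_row)[target]."""
    m = max(logits_row)
    log_sum = m + math.log(sum(math.exp(x - m) for x in logits_row))
    return log_sum - logits_row[target]

def clip_loss(image_embs, text_embs, temperature=0.07):
    """Average of image-to-text and text-to-image losses; pair i matches pair i."""
    logits = similarity_matrix(image_embs, text_embs, temperature)
    n = len(logits)
    image_to_text = sum(cross_entropy(logits[i], i) for i in range(n)) / n
    columns = [[logits[i][j] for i in range(n)] for j in range(n)]
    text_to_image = sum(cross_entropy(columns[j], j) for j in range(n)) / n
    return (image_to_text + text_to_image) / 2

def zero_shot_classify(image_emb, prompt_embs):
    """Return the index of the class prompt most similar to the image."""
    scores = similarity_matrix([image_emb], prompt_embs, 1.0)[0]
    return max(range(len(scores)), key=scores.__getitem__)

# Toy check: matched pairs give a lower loss than mismatched pairs.
images = [[1.0, 0.0], [0.0, 1.0]]
matched_texts = [[1.0, 0.0], [0.0, 1.0]]
swapped_texts = [[0.0, 1.0], [1.0, 0.0]]
print(clip_loss(images, matched_texts) < clip_loss(images, swapped_texts))  # True
```

Zero-shot classification reuses the same similarity: each class name is embedded as a text prompt, and the image is assigned to the closest prompt.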

Object Detection as Vision-Language Task, GLIP

GLIP stands for Grounded Language-Image Pre-training. It was introduced by Microsoft researchers in late 2021 and emphasizes object-level alignment via language grounding. The model reframed object detection as a vision-language task (phrase grounding). GLIP’s pre-training on semantically rich data enabled it to generate bounding boxes automatically and to transfer well in zero-shot and few-shot settings, reportedly outperforming baselines like CLIP on image captioning and competing with the fully supervised Dynamic Head on downstream object detection tasks.

Contrastive video-text understanding, VideoCLIP

VideoCLIP takes a contrastive approach to pre-training a unified model for zero-shot video and text comprehension, without using any labels on downstream tasks. It teaches a transformer to link video with text by treating clips that overlap in time as positive pairs and deliberately contrasting them with similar-looking non-matches retrieved as hard negatives. The model showed state-of-the-art performance on video-language benchmarks and datasets such as YouCook2.
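The idea of temporally overlapping positives can be illustrated with a rough sketch. It assumes a text segment is given by start and end timestamps, and that a video clip is grown around a random point inside that segment so the two always overlap. The function names, the one-second minimum duration, and the other values are assumptions for illustration, not VideoCLIP's actual code.

```python
import random

def sample_overlapping_clip(text_start, text_end, max_duration, video_length, rng):
    """Pick a video clip centred on a random time inside the text segment."""
    center = rng.uniform(text_start, text_end)
    duration = rng.uniform(1.0, max_duration)  # assumed minimum of 1 second
    clip_start = max(0.0, center - duration / 2)
    clip_end = min(video_length, center + duration / 2)
    return clip_start, clip_end

def overlaps(a_start, a_end, b_start, b_end):
    """True when the two time intervals share some duration."""
    return a_start < b_end and b_start < a_end

rng = random.Random(0)
# Hypothetical narration spanning seconds 10 to 15 of a 60-second video.
clip = sample_overlapping_clip(10.0, 15.0, 32.0, 60.0, rng)
print(overlaps(clip[0], clip[1], 10.0, 15.0))  # True
```

Because the clip does not have to share exact boundaries with the text, the positive pairs tolerate the loose alignment between narration and visuals that is common in instructional videos.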

Open-Source Integration of CLIP and LLM, LLaVA

LLaVA stands for Large Language and Vision Assistant. It was one of the earliest open-source multi-modal models designed to extend language models to understand both text and images. It connects a CLIP vision encoder to an LLM, and the instruction-following dataset used to train it was synthesized with the help of GPT-4.

Dealing with Noisy Training Data, BLIP

BLIP stands for Bootstrapping Language-Image Pre-training. It stands out as a Vision-Language framework that tackled the hurdles associated with biased and noisy training data. Its Multi-modal Mixture of Encoder-Decoder architecture combines Image-Text Contrastive (ITC), Image-Text Matching (ITM), and Language Modelling (LM) objectives during pre-training. Captioning and Filtering (CapFilt) was used to improve data quality: a captioner generates synthetic captions and a filter removes noisy ones, which improves downstream task performance. BLIP was implemented in PyTorch and pre-trained on a heterogeneous 14 million image dataset. With techniques like nucleus sampling for caption generation and effective parameter sharing, BLIP surpassed existing models on benchmarks.
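Nucleus (top-p) sampling, mentioned above, is easy to show in isolation. The sketch below is a generic, minimal version operating on a list of token probabilities; it is not BLIP's code, and the function names and example distribution are made up for illustration.

```python
import random

def nucleus_filter(probs, top_p):
    """Keep the most likely tokens until their total probability reaches top_p,
    then renormalise the kept probabilities so they sum to 1."""
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for idx in ranked:
        kept.append(idx)
        cumulative += probs[idx]
        if cumulative >= top_p:
            break
    total = sum(probs[i] for i in kept)
    return {i: probs[i] / total for i in kept}

def nucleus_sample(probs, top_p, rng):
    """Draw one token index from the truncated, renormalised distribution."""
    nucleus = nucleus_filter(probs, top_p)
    tokens = list(nucleus)
    weights = [nucleus[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

# Hypothetical next-token distribution over a four-word vocabulary.
probs = [0.5, 0.3, 0.15, 0.05]
rng = random.Random(0)
print(sorted(nucleus_filter(probs, 0.9)))  # [0, 1, 2]: the 0.05 tail is dropped
print(nucleus_sample(probs, 0.9, rng) in (0, 1, 2))  # True
```

Compared with always picking the most likely token, sampling from the nucleus produces more varied captions while still excluding the low-probability tail.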

Multilingual Vision Language Model, Qwen-VL

The Chinese AI developer community and organizations have been actively reshaping the generative AI landscape in recent years. From the first shock wave of DeepSeek’s eye-catching debut to today’s advanced models like Qwen, Kimi, DeepSeek-Janus and others, Chinese labs have carved out a reputation as pacesetters of open-weight and open-source AI. Among these efforts, the Qwen-VL model stands out in the vision-language space.

Alibaba’s Qwen team unveiled Qwen-VL and its conversational twin Qwen-VL-Chat in 2023; both displayed strong performance across tasks including image captioning, question answering, visual localization, and multi-turn dialogue. Qwen-VL was trained on multilingual multi-modal datasets, with a significant portion of the corpora in Chinese and English. The Qwen2-VL series followed in 2024, and the lab has since released Qwen2.5-VL and Qwen3-VL. These models excel at tasks ranging from image captioning to object localization and generate contextualized, grounded responses to user queries, making them landmark open-source contributions to applied multi-modal AI.

General Usage Small VLM, Moondream

Moondream is a 1.6 billion parameter vision-language model developed by vikhyatk. Combining SigLIP as the vision encoder with Phi 1.5 as the language model yields a small multi-modal model that can be deployed on embedded devices like the Jetson Nano and Raspberry Pi, as well as on computers with limited VRAM. However, the developer has restricted commercial use of this model; the work is open for academic exploration and research.

Hugging Face’s SmolVLM

SmolVLM is Hugging Face’s example of what an efficient, deployable vision-language model should look like for resource-constrained environments such as edge devices. SmolVLM integrates a frozen SigLIP vision encoder with SmolLM as the language model in a compact form. The model employs aggressive token compression to reduce visual tokens by up to 90% through hierarchical pooling strategies, which enables inference on devices with as little as 4 GB of VRAM. These aspects allow SmolVLM to excel at tasks including on-device image captioning, document understanding, and visual question answering without relying on large GPU configurations or cloud infrastructure.
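As a rough illustration of how pooling shrinks the number of visual tokens, the sketch below averages non-overlapping blocks of a patch-embedding grid. Plain average pooling is used here only as a stand-in; the released model's exact compression operator may differ, and the function name and grid sizes are assumptions.

```python
def pool_visual_tokens(grid, factor):
    """Average each factor x factor block of patch embeddings into one token.

    grid is a 2D list (rows x cols) of embedding vectors."""
    rows, cols = len(grid), len(grid[0])
    dim = len(grid[0][0])
    pooled = []
    for r in range(0, rows, factor):
        for c in range(0, cols, factor):
            block = [grid[i][j]
                     for i in range(r, min(r + factor, rows))
                     for j in range(c, min(c + factor, cols))]
            pooled.append([sum(tok[d] for tok in block) / len(block)
                           for d in range(dim)])
    return pooled

# A toy 6 x 6 grid of 2-dimensional patch embeddings (36 visual tokens).
grid = [[[float(r), float(c)] for c in range(6)] for r in range(6)]
pooled = pool_visual_tokens(grid, 3)
reduction = 1 - len(pooled) / 36
print(len(pooled), round(reduction, 3))  # 4 tokens remain, about 0.889 reduction
```

A 3 x 3 pooling window already removes roughly 89% of the tokens, which shows how reductions near the 90% figure quoted above can arise; fewer visual tokens mean a shorter sequence for the language model and lower memory use.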

Multi-Modal Input, Multi-Modal Output; CoDi

CoDi stands for Composable Diffusion. This model takes a multi-modal approach, using Latent Diffusion Models (LDMs) for text, image, video, and audio. At its core are Variational Autoencoders (VAEs). Text processing uses a VAE with BERT and GPT-2. Visual inputs are handled by an LDM with a VAE. Audio inputs are processed by an LDM with a VAE encoder-decoder over mel-spectrogram representations. CoDi builds a shared multi-modal latent space through cross-modal fusion with Joint Multi-modal Generation and cross-attention modules. Its successor, CoDi-2, was later released with further enhancements.

Google’s Effort to Omni-Model, Gemini

Google published the ground-breaking work on the transformer architecture in 2017. Although Google pioneered the research and theoretical work, OpenAI, Anthropic, and Mistral AI developed frontier models for real-world applications, such as GPT, ChatGPT, Claude, and Mixtral. Google did release Bard as its frontier AI model, but it was more of an experimental project than a well-developed system for general usage.

Google then announced Gemini, its most powerful model at the time, in December 2023. The model featured a transformer-based architecture with deep fusion capabilities, and Google reported that it outscored GPT-4 on the majority of benchmarks. It was trained on a multi-modal and multilingual dataset and refined via Reinforcement Learning from Human Feedback (RLHF) to ensure quality and safety. Newer generations of the series now compete at the front of text generation with Gemini 3 Pro, and of image understanding and generation with models like Nano Banana. To sum up, Google may have missed the first product wave, but with Gemini it has leapfrogged back to the front of the frontier-model pack.

Molmo, a Grounding-Based Open-Source VLM

The Allen Institute for AI (AI2) has published significant research on multi-modal learning and vision-language models. One such work is Molmo, a multi-modal model with visual grounding capabilities and transparent open-source development. Molmo takes a distinctive approach to input processing: the model explicitly learns to point to and reference specific regions and spatial relationships within images. According to the Molmo release, a CLIP ViT vision encoder is paired with an open language model such as OLMo or Qwen2 through a connector that pools and projects the visual tokens. The dataset was collected in a creative way, with captions gathered via speech. Instead of writing captions with generative AI, the research team asked human annotators to describe each image aloud and then transcribed the speech using Automatic Speech Recognition (ASR).

This meticulously curated dataset allowed the model to achieve state-of-the-art performance on referring expression understanding and visual grounding benchmarks, besting the likes of GPT-4V and Gemini frontier models. The model also emphasizes interpretability, providing attention visualizations that show which image regions influenced each token. This effort addressed the black-box criticism leveled at many VLMs and opens the way for further work in mechanistic interpretability. However, the model is not suited to general usage in resource-constrained environments due to its compute-heavy nature and lack of instruction tuning.

MolmoAct, an Action Reasoning Model

MolmoAct extends the Molmo framework into the temporal and action-oriented domain, specializing in understanding and reasoning about human actions, object affordances, and task-oriented procedures from video inputs. This model introduces a novel temporal-grounding mechanism that processes video clips as sequences of salient frames paired with action transcripts, learning to associate visual states with discrete action verbs and their arguments. Its architecture incorporates a slow-fast vision encoder that captures both fine-grained spatial details and long-range temporal dynamics, while the language decoder is augmented with a structured action vocabulary derived from large-scale instructional video corpora. MolmoAct demonstrates remarkable few-shot capabilities in predicting action sequences, identifying failure modes in procedural tasks, and generating step-by-step instructions from visual demonstrations.

Limitations of Vision Language Models

- Models remain susceptible to image hijacks and prompt injection attacks, where maliciously crafted pixels can override safety instructions and cause harmful outputs at runtime.

- VLMs frequently generate plausible but factually incorrect details, hallucinating objects, attributes, or relationships not present in input images, a problem persisting across text, image, video, and audio modalities.

- Many state-of-the-art models demand prohibitive training resources and inference costs, limiting accessibility and creating environmental concerns.

- Models trained on general web data often underperform in specialized domains like medical imaging or scientific diagrams without extensive fine-tuning.

- Most VLMs struggle with long-form multimodal inputs, unable to maintain coherence across extended image sequences or hour-long videos.

Future Directions in Research and Improvement

- Replacing black-box pre-training with composable, interpretable modules that separate perception, reasoning, and generation, enabling targeted improvements without full retraining.

- Teaching VLMs causal inference and “what-if” reasoning about visual scenes, moving beyond correlation-based predictions.

- Training VLMs to reason across languages and cultural contexts, with efforts like PALO targeting languages spoken by about 5 billion people.

- Creating domain-specific VLMs for medicine, education, and scientific research that leverage curated expert knowledge while maintaining general capabilities.
