Benchmarking Zero‑Shot Object Detection: A Practical Comparison of SOTA Models

Last Updated on January 2, 2026 by Editorial Team

Author(s): Mohsin Khan

Originally published on Towards AI.

1. Introduction

In the first blog of this series — “Practical Guide to Zero‑Shot Object Detection: Detect Unseen Objects Without Retraining” — we explored how Zero‑Shot Object Detection (ZSOD) works and why it’s becoming increasingly practical for real‑world use.

Now that we understand how ZSOD works, it’s time to examine the next big question:

👉 Which ZSOD models actually perform the best in practice?

To answer this, I benchmarked 8 state‑of‑the‑art zero‑shot models, evaluating them on the same dataset, with identical prompts and identical evaluation settings. The results reveal clear leaders — and some surprisingly competitive alternatives.

This blog provides an overview of the dataset, evaluation metrics, model‑specific insights, the ranking based on performance, visual examples, final recommendations and each model’s Hugging Face card.

Let’s dive in.

2. Dataset Used for Benchmarking

The original dataset on Kaggle contains multiple object categories, but for this benchmark, I extracted a 20‑image subset containing only toy cars.

Each model was given the same task:

👉 Detect “toy car” using only a text prompt — without any retraining.

This ensures a clean test of pure zero‑shot generalization.
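The article does not include the inference code, so the following is only a minimal sketch of what a single prompt-only detection call could look like. It assumes the Hugging Face `transformers` zero-shot object detection pipeline and uses one of the OWL‑ViT v2 checkpoints from Section 9 as the default. The function names `detect` and `to_boxes` and the `min_score` parameter are hypothetical, and the Grounding‑DINO and LLMDet checkpoints may need their own processor and model classes rather than this pipeline.

```python
from typing import Dict, List, Tuple

PROMPT = "toy car"  # the single text prompt given to every model


def detect(image_path: str, model_id: str = "google/owlv2-base-patch16-ensemble") -> List[Dict]:
    """Run prompt-only detection on one image (assumed setup, not the author's script)."""
    # Imported lazily so to_boxes() below works without these packages installed.
    from PIL import Image
    from transformers import pipeline

    detector = pipeline("zero-shot-object-detection", model=model_id)
    image = Image.open(image_path).convert("RGB")
    # Each result is a dict: {"score": float, "label": str,
    #                         "box": {"xmin", "ymin", "xmax", "ymax"}}
    return detector(image, candidate_labels=[PROMPT])


def to_boxes(
    detections: List[Dict], min_score: float = 0.0
) -> List[Tuple[float, Tuple[int, int, int, int]]]:
    """Flatten pipeline output into (score, (xmin, ymin, xmax, ymax)), best score first."""
    boxes = [
        (d["score"], (d["box"]["xmin"], d["box"]["ymin"], d["box"]["xmax"], d["box"]["ymax"]))
        for d in detections
        if d["score"] >= min_score
    ]
    return sorted(boxes, key=lambda pair: pair[0], reverse=True)
```

No fine-tuning step appears anywhere in this flow: the only task-specific input is the prompt string.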

3. Evaluation Metrics

Each model was evaluated using standard object‑detection metrics:

1️⃣ mAP@0.5 (Mean Average Precision at IoU 0.5)

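The primary measure of detection quality. Average precision is the area under the precision‑recall curve, where a detection counts as correct only if its box overlaps a ground‑truth box with an IoU of at least 0.5. Because this benchmark has a single class (toy car), mAP equals that class's average precision.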
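As a rough illustration of how this number is obtained, here is a minimal sketch that computes average precision from detections already marked as hits or misses at IoU 0.5. It assumes all-point interpolation of the precision-recall curve; the article does not say which interpolation its evaluation used, and the function name and input format are hypothetical.

```python
from typing import List, Tuple


def average_precision(scored_hits: List[Tuple[float, bool]], num_ground_truth: int) -> float:
    """AP as the area under the precision-recall curve (all-point interpolation).

    scored_hits: (confidence, is_true_positive) for every detection in the dataset.
    num_ground_truth: total number of annotated objects.
    """
    if num_ground_truth == 0 or not scored_hits:
        return 0.0

    # Walk detections from most to least confident, tracking precision and recall.
    ranked = sorted(scored_hits, key=lambda pair: pair[0], reverse=True)
    precisions, recalls = [], []
    tp = fp = 0
    for _, is_hit in ranked:
        if is_hit:
            tp += 1
        else:
            fp += 1
        precisions.append(tp / (tp + fp))
        recalls.append(tp / num_ground_truth)

    # Replace each precision with the best precision at any higher recall.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])

    # Sum the rectangles under the smoothed curve.
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap
```

With one class, as in this benchmark, this per-class value is the mAP.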

2️⃣ Precision@0.5

Of all the model’s detections, how many were correct?

Higher precision → fewer false positives.

3️⃣ Recall@0.5

Of all toy cars present, how many were successfully detected?

Higher recall → fewer missed detections.
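Both definitions depend on deciding which detections count as correct. A minimal sketch follows, assuming boxes in `(xmin, ymin, xmax, ymax)` format and a greedy one-to-one matching in descending confidence order; the article does not describe its matching procedure, so treat this as one common convention rather than the exact method used.

```python
from typing import Sequence, Tuple

Box = Tuple[float, float, float, float]  # (xmin, ymin, xmax, ymax)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def precision_recall(
    detections: Sequence[Tuple[float, Box]],
    ground_truth: Sequence[Box],
    iou_threshold: float = 0.5,
) -> Tuple[float, float]:
    """Precision and recall for one image at a fixed IoU threshold."""
    matched = set()  # indices of ground-truth boxes already claimed
    tp = 0
    for _, box in sorted(detections, key=lambda d: d[0], reverse=True):
        best_iou, best_idx = 0.0, -1
        for idx, gt in enumerate(ground_truth):
            if idx in matched:
                continue
            overlap = iou(box, gt)
            if overlap > best_iou:
                best_iou, best_idx = overlap, idx
        if best_idx >= 0 and best_iou >= iou_threshold:
            matched.add(best_idx)
            tp += 1
    fp = len(detections) - tp  # detections with no matching toy car
    fn = len(ground_truth) - tp  # toy cars that were missed
    precision = tp / (tp + fp) if detections else 0.0
    recall = tp / (tp + fn) if ground_truth else 0.0
    return precision, recall
```

Each ground-truth box can be matched only once, so a second detection on the same toy car counts as a false positive.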

Together, these metrics reveal:

- Best overall detector (highest mAP@0.5)

- Most accurate detector (highest precision)

- Most reliable detector (highest recall)

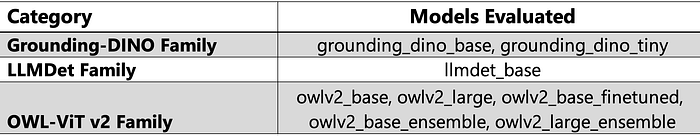

4. Models Compared

A total of 8 models were evaluated:

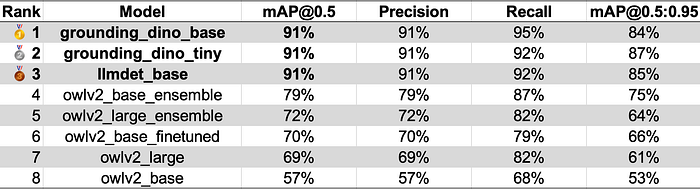

5. Final Ranking

Below is the performance‑based ranking using mAP@0.5, along with precision, recall, and mAP@0.5:0.95.
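mAP@0.5:0.95 is not defined in Section 3. Under the COCO convention, which is assumed here, it is the average of AP over ten IoU thresholds from 0.50 to 0.95 in steps of 0.05, so it rewards tighter boxes than mAP@0.5 alone. A minimal sketch, where `ap_at` is a hypothetical callback that returns AP at a given IoU threshold:

```python
from typing import Callable, List


def coco_iou_thresholds() -> List[float]:
    """The ten IoU thresholds 0.50, 0.55, ..., 0.95."""
    return [round(0.5 + 0.05 * i, 2) for i in range(10)]


def map_50_95(ap_at: Callable[[float], float]) -> float:
    """Average AP over the COCO IoU thresholds."""
    thresholds = coco_iou_thresholds()
    return sum(ap_at(t) for t in thresholds) / len(thresholds)
```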

📊 Final Leaderboard

6. Key Insights From the Results

🥇 1. Grounding‑DINO Dominated the Benchmark

Both variants — grounding_dino_base and grounding_dino_tiny — delivered exceptional performance:

- mAP@0.5 > 0.91

- Strong bounding‑box consistency

- Excellent object‑text grounding

They clearly set the benchmark for ZSOD accuracy and robustness.

🥈 2. LLMDet‑Base Performed Surprisingly Well

llmdet_base was a standout performer:

- mAP@0.5 ≈ 0.91

- High recall

- Efficient text–image alignment

Given its compact architecture, this model is especially suited for:

- Lightweight pipelines

- Lower‑latency applications

- Scenarios requiring efficient inference

🥉 3. OWL‑ViT v2 Models Delivered Stable but Moderate Performance

Ensemble variants performed best within the OWL family.

Strengths:

- Strong recall

- Consistent detection across scales

Limitations:

- Lower precision → more false positives

- Weaker bounding‑box refinement than Grounding‑DINO

Overall, OWL‑ViT v2 models are reliable generalists but not top performers in zero‑shot accuracy.

7. Visualising the Detections

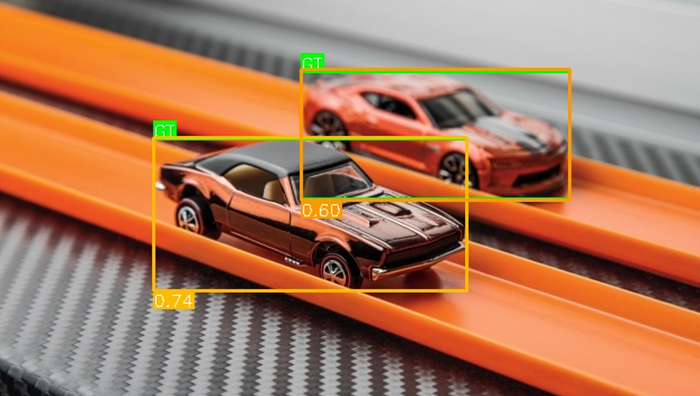

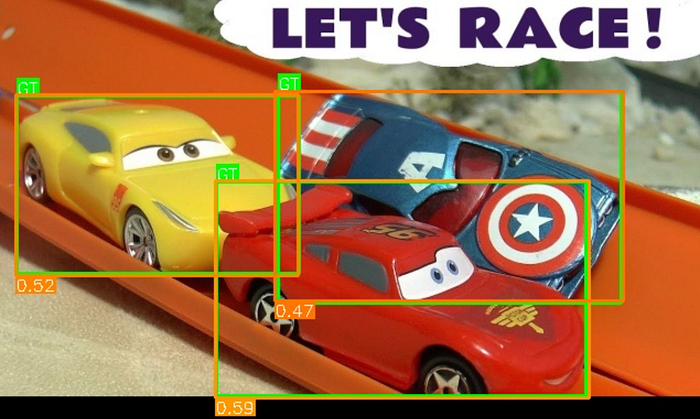

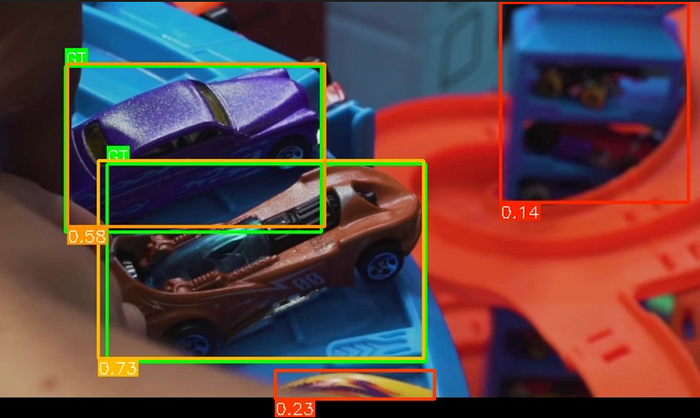

Grounding‑DINO Base Detections

OWL‑ViT v2 Ensemble Detections

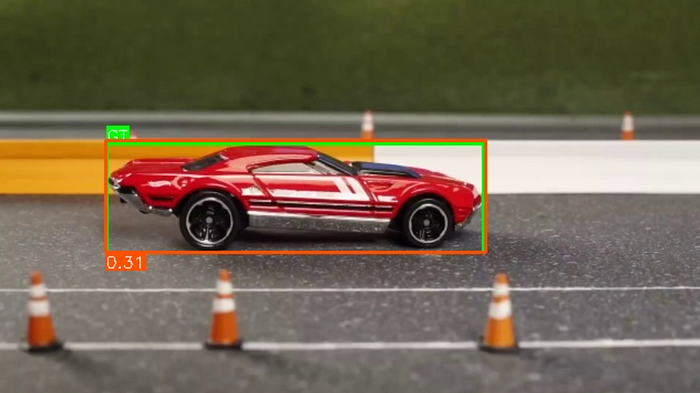

LLMDet‑Base Detections

8. Summary of Findings

✔ Grounding‑DINO is the most accurate and consistent ZSOD model

Strong bounding boxes, excellent precision, and clean detections.

✔ LLMDet‑Base is a lightweight but highly competitive alternative

Almost matches Grounding‑DINO with lower compute requirements.

✔ OWL‑ViT v2 is reliable but not top‑tier

Good recall but higher false‑positive rates.

✔ Zero‑shot performance varies significantly by architecture

Despite identical prompts and dataset, model behaviors differ widely.

9. Model Cards (Hugging Face IDs)

For reproducibility, here are the exact model IDs used in this benchmark:

Grounding‑DINO Models

- IDEA-Research/grounding-dino-tiny

- IDEA-Research/grounding-dino-base

LLMDet Model

iSEE-Laboratory/llmdet_base

OWL‑ViT v2 Models

- google/owlv2-base-patch16-ensemble

- google/owlv2-base-patch16

- google/owlv2-base-patch16-finetuned

- google/owlv2-large-patch14-ensemble

- google/owlv2-large-patch14
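For scripting a comparison like this one, the IDs above can be collected into a single structure and iterated over. This is only a sketch; the dictionary keys and the helper name are hypothetical labels, not identifiers taken from the benchmark code.

```python
from typing import Dict, List

# Hugging Face model IDs listed in this section, grouped by family.
MODEL_IDS: Dict[str, List[str]] = {
    "grounding_dino": [
        "IDEA-Research/grounding-dino-tiny",
        "IDEA-Research/grounding-dino-base",
    ],
    "llmdet": [
        "iSEE-Laboratory/llmdet_base",
    ],
    "owlv2": [
        "google/owlv2-base-patch16-ensemble",
        "google/owlv2-base-patch16",
        "google/owlv2-base-patch16-finetuned",
        "google/owlv2-large-patch14-ensemble",
        "google/owlv2-large-patch14",
    ],
}


def all_model_ids() -> List[str]:
    """Flatten the registry into one list, preserving the order above."""
    return [model_id for family in MODEL_IDS.values() for model_id in family]
```

Looping over `all_model_ids()` with the same prompt and the same images is what keeps the comparison like for like.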

10. Closing Thoughts

This benchmark makes one thing clear:

👉 Zero‑shot object detection is powerful and practical — but model choice matters.

- Grounding‑DINO gives the best accuracy

- LLMDet‑Base offers a strong performance‑to‑efficiency ratio

- OWL‑ViT v2 is reliable for broad generalization

In the next blog, we may explore:

- How to build a pipeline that combines zero‑shot object detection with other models, including a multimodal large language model.

