Apple Source Code Exposed Again — Why Every Enterprise AI Stack Needs Secure Code Before It’s Too Late

Author(s): Snow

Originally published on Towards AI.

This week, Apple offered the industry yet another teachable moment.

- App Store web front-end source exposed (Nov 2025): Apple’s new web App Store shipped with production source maps enabled, letting the front-end code be reconstructed and mirrored to GitHub before a DMCA takedown cleared thousands of forks. Even if no secrets were embedded, this is blueprint-level exposure (framework choices, routing, state management) — gold for attackers and copycats. TechRadar

And this is not the first time.

- Internal tools code claimed leaked (June 2024): Threat actor IntelBroker claimed source for internal Apple tools (including AppleConnect-SSO) was posted on a forum. Even if customer data was untouched, SSO code + configs are privilege gateways — if authentic, the risk is outsized. AppleInsider

- macOS “Sploitlight” (July 2025): Microsoft disclosed a Spotlight/TCC flaw that could expose sensitive file data and even caches used by Apple Intelligence, patched by Apple in macOS 15.4. These aren’t theoretical weaknesses; they are AI-adjacent pathways to sensitive data. Microsoft

Being a veteran in security at Meta and active contributor of our security community across the industry from Microsoft Defender, Carbon Black, CrowdStrike, I know that none of this means Apple is uniquely careless. It means every team building AI-enabled products now carries double exposure: the code becomes an attack surface, and the AI features widen pathways to private data if guardrails are thin.

Executive Summary: The Three-Headed Crisis of Security Sprawl — Secrets, Tools, and Identities

Security sprawl is not a monolith. It is an interconnected crisis of:

- Secrets Sprawl: 23.8 million new hardcoded secrets were added to public GitHub in 2024 alone, a 25% surge from 2023. The exposed credentials has an alarming persistence, with 70% secrets detected in 2022 remain active at the time of the report. GitGuardian

- Tool Sprawl: 65% of enterprises report running too many disjointed tools. 53% say risky fragmented environment is introduced by fragmented security tool integration. Barracuda

Usability failure is also outlined as a major concern as developers find SMTs and their documentation so difficult to use that they “deviate” from secure practices and resort to workarounds, such as hard-coding credentials. ArXiv - Identity Sprawl: Non-human identities now outnumber human ones by a ratio of 82:1 — the result of uncontrolled cloud automation and AI proliferation. CyberArk

💡 The Bigger Trend: Machine Identity Explosion

Cloud-native environments and CI/CD automation are scaling identity sprawl at unprecedented rates. The attack surface has shifted from endpoints to ephemeral, AI-generated credentials.

AI is now the #1 creator of privileged identities in enterprise networks (CyberArk 2025).

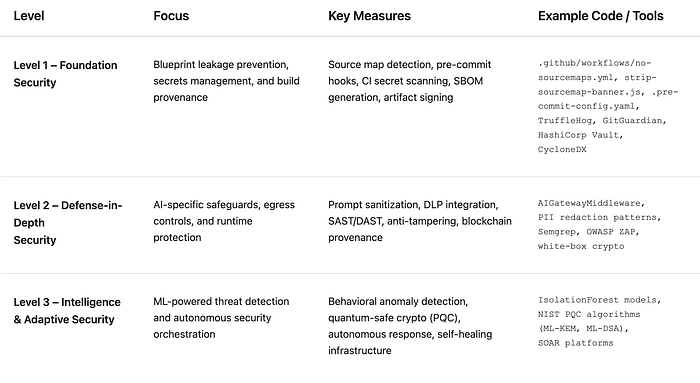

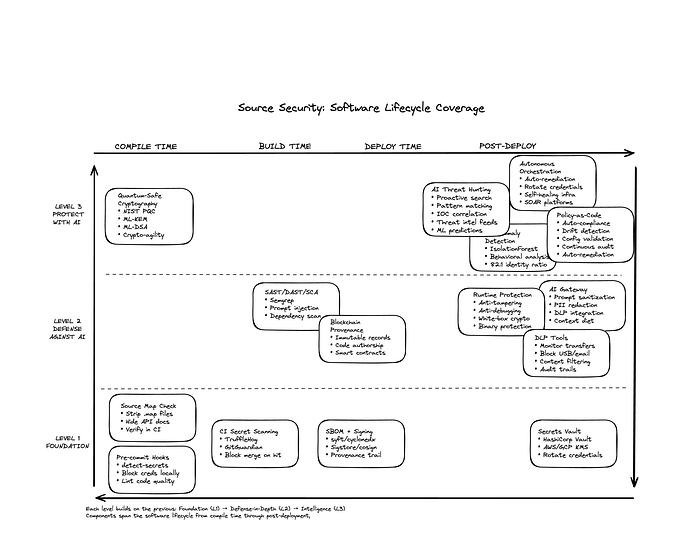

The Three-Level Framework: From Foundation to Intelligence

Security is not about selecting a set of tools, but building a resilient system. Many audience kindly provided positive feedback on the Three Levels of Security & Privacy for AIaaS in my last piece, saying that they would love see more. Therefore I am outlining the Three Levels of Source Guardian for AIaaS today, such that it also can be referenced as an evolutionary roadmap for AI systems, from ground-up baseline protection to intelligence-level adaptation.

(Methodology, Not Production. Visit our Hugging Face publication at

👉 https://huggingface.co/spaces/AIgenticLLC/blog for more technical details)

Level 1: Foundation Layer — The Non-Negotiables

Skipping this layer will collapse the entire design. These are table-stakes controls that prevent the most common and damaging exposures we saw in the Apple incidents.

1.1 Prevent Blueprint Leakage (Front-ends & APIs)

Production build hygiene, it is so basic but I am always shocked to find out the remaining gap in this first defense:

- Disable source maps for production assets along with other code obfuscation; if somehow these are needed (for example: error monitoring), host privately and require auth.

- Strip

sourceMappingURLin final bundles; verify in CI. - Public artifact review: Ensure no

.map,.ts,.svelte,.tsx, or dev manifests are public. Dynamically scan containers for debug layers.

API self-describe hardening: Hide /docs, /openapi.json, /graphql introspection in prod; use signed clients or tokens.

# GitHub Actions: fail build if public sourcemaps detected

# .github/workflows/no-sourcemaps.yml

name: block-sourcemaps

on: [push, pull_request]

jobs:

check:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v4

- run: |

if git ls-files | grep -E '\.map$'; then

echo "✖ Source maps detected in repo"

exit 1

fi

// esbuild plugin to strip sourceMappingURL

// strip-sourcemap-banner.js

export default {

name: 'strip-sourcemap',

setup(build) {

build.onEnd(result => {

for (const o of result.outputFiles || []) {

if (o.path.endsWith('.js')) {

o.text = o.text.replace(/\/\/# sourceMappingURL=.*\n?$/g, '');

}

}

});

}

};

1.2 Secrets & Config Discipline

This is essential to stop the “Keys in Code” Problem.

- Pre-commit hooks (e.g.,

pre-commit,detect-secrets) block credentials locally. - CI secret scanning on every PR; block merges on hits.

- Vault everywhere: Centralize secrets (e.g., HashiCorp Vault, AWS/GCP KMS).

- Ephemeral credentials: Rotate short-lived tokens; ban long-lived PATs.

# Pre-commit example (.pre-commit-config.yaml)

repos:

- repo: https://github.com/Yelp/detect-secrets

rev: v1.5.0

hooks:

- id: detect-secrets

args: ["--baseline", ".secrets.baseline"]

1.3 Build-Time Provenance & Policy

- Software bill of materials (SBOM) + signing: Generate SBOM (

syft/cyclonedx) and sign artifacts (Sigstore/cosign). - Protected environments: Restrict deployment to production and launch SOP.

# Minimal SBOM in CI

# CycloneDX for Node

npm i -g @cyclonedx/cyclonedx-npm

cyclonedx-npm --output-file sbom.json

Level 2: Defense-in-Depth Layer — AI-Specific Controls

Once the foundation is solid, layer in protections tailored to AI workloads and the expanded attack surface they create.

2.1 Model & Data Egress Controls (AI-Specific)

Model gateway abstraction: Route all model calls through a gateway (internal or vendor) that enforces PII scrubbing, prompt minification, and output filters.

Prompt/response DLP: I would argue that almost all agents needs zero knowledge of the PII, given the principle of least privilege. If an agent does though, mask PII before prompts leave the VPC; redact outputs that echo sensitive strings (e.g., keys).

“Context diet”: Only send the minimal chunk set needed for an answer; never forward raw repositories or proprietary archives. Just recently, a client was in panic when I told them that they had been sending all their sales data including client information and order details to one of the major cloud LLM providers for months without knowing about it.

Gateway policy sketch:

Client → Policy Engine → (sanitize prompt) → Model

↘ DLP scan ↙

Audit stub (hash, time, user)

2.2 AppSec + SAST/DAST Tuned for AI Stacks

- SAST tuned to your frameworks (e.g., taint rules for prompt concatenation and template injection).

- DAST with AI-specific payloads (prompt injection, model-tool jailbreaks).

- Package hygiene: Block known-bad versions; auto-open PRs to patch.

- Threat modeling that includes vector DBs, embedding pipelines, and RAG connectors as first-class assets.

2.3 Runtime Protection & Anti-Tampering

Runtime protection like Anti-Tampering, Anti-Debugging, Binary Protection, White-Box Cryptography provides extended assurance as code moves beyond the perimeter.

Level 3: Intelligence Layer — AI-Powered Security

This is where AI becomes the defender, not just the asset being defended. As I explored in the previous analysis on AWS reliability and AI observability, AI-driven observability isn’t just for uptime — it’s the future of security, including source code protection.

3.1 AI-Powered Threat Detection & Response

Traditional signature-based detection can’t keep pace with the 82:1 non-human identity explosion. ML models trained on behavioral baselines can detect anomalies invisible to rules engines.

"""

ML-powered anomaly detection for non-human identity behavior.

Detects unusual patterns in machine credential usage, API calls, and access patterns.

"""

class MachineIdentityAnomalyDetector:

def __init__(self):

self.model = IsolationForest(contamination=0.01)

self.feature_extractors = {

'api_call_rate': lambda events: len(events) / time_window,

'error_rate': lambda events: count_errors(events) / len(events),

'credential_age_days': lambda events: get_credential_age(events),

'temporal_entropy': lambda events: calculate_entropy(events),

'unusual_hours_activity': lambda events: count_off_hours(events),

'geo_diversity': lambda events: len(unique_ips(events))

}

def extract_features(self, identity_events) -> feature_vector:

"""Extract behavioral features from identity event stream."""

return [extractor(identity_events)

for extractor in self.feature_extractors.values()]

def detect_anomaly(self, identity_id, recent_events):

"""Detect if current identity behavior is anomalous."""

features = self.extract_features(recent_events)

prediction = self.model.predict([features])[0] # -1=anomaly, 1=normal

anomaly_score = self.model.score_samples([features])[0]

if prediction == -1: # Anomaly detected

return {

'identity_id': identity_id,

'risk_level': calculate_risk(anomaly_score),

'actions': self.generate_response_actions(features)

# Actions: ROTATE_CREDENTIALS, TEMP_SUSPEND, ALERT_SOC

3.2 Quantum-Safe Security (Post-Quantum Cryptography)

The quantum threat is no longer theoretical. NIST has standardized post-quantum cryptographic algorithms, and forward-thinking organizations are beginning migration now.

Why this matters: “Store now, decrypt later” attacks mean adversaries are already harvesting encrypted data to break once quantum computers arrive.

Action items:

- Inventory all cryptographic implementations

- Plan migration to NIST-approved PQC algorithms (ML-KEM, ML-DSA, SLH-DSA)

- Implement crypto-agility in your architecture for rapid algorithm swaps

3.3 Autonomous Security Orchestration

The ultimate evolution is full closed-loop automation: detect → analyze → respond → learn, without human latency.

Capabilities:

- Self-healing infrastructure that auto-remediates configuration drift

- Automated threat hunting using AI agents that proactively search for IOCs

- Continuous compliance enforcement with policy-as-code that auto-corrects violations

Conclusion: Source Code Security as the First Line of Defense

The Apple incidents aren’t cautionary tales about carelessness — they’re reminders that attack surface scales with capability. Every AI feature you ship expands the frontier adversaries can probe.

But here’s the paradox: the same AI that increases risk is also your best defense. The 82:1 machine-to-human identity ratio isn’t sustainable for human SOC teams alone. Since we can have AI train AI, we need AI to defend AI.

The three-level framework isn’t a destination — it’s a continuous maturity model. Start with the foundation, layer in depth, and evolve toward intelligence. Companies that master this will turn security from a cost center into a competitive moat.

Which level is your organization at today? And what’s your plan to reach the next?

A three-level framework means nothing without an execution plan. Find out exclusive content on how to operationalize these controls and robust AI systems at ai-gentic.io/blog. Explore more technical details at Hugging Face.

Join thousands of data leaders on the AI newsletter. Join over 80,000 subscribers and keep up to date with the latest developments in AI. From research to projects and ideas. If you are building an AI startup, an AI-related product, or a service, we invite you to consider becoming a sponsor.

Published via Towards AI

Towards AI Academy

We Build Enterprise-Grade AI. We'll Teach You to Master It Too.

15 engineers. 100,000+ students. Towards AI Academy teaches what actually survives production.

Start free — no commitment:

→ 6-Day Agentic AI Engineering Email Guide — one practical lesson per day

→ Agents Architecture Cheatsheet — 3 years of architecture decisions in 6 pages

Our courses:

→ AI Engineering Certification — 90+ lessons from project selection to deployed product. The most comprehensive practical LLM course out there.

→ Agent Engineering Course — Hands on with production agent architectures, memory, routing, and eval frameworks — built from real enterprise engagements.

→ AI for Work — Understand, evaluate, and apply AI for complex work tasks.

Note: Article content contains the views of the contributing authors and not Towards AI.