All Linear Algebra Concepts You Need For Machine Learning: You’ll Actually Understand

Author(s): Sayan Chowdhury

Originally published on Towards AI.

Most people hear “linear algebra” and expect a wall of formulas. But in machine learning, you only need a small set of ideas, and every one of them has a real-world interpretation. This article breaks everything down using simple examples and everyday analogies, so you walk away with a clear understanding, not confusion.

Why Linear Algebra Sits at the Core of ML

Machine learning does three things:

- Represents data

- Transforms data

- Optimizes model parameters

Vectors and matrices handle representation and transformation.

Eigenvalues, gradients, and Hessians control optimization. That’s really it.

1. Vectors: How ML Represents Anything

A vector is just a list of numbers.

In ML, it becomes the basic way to store information.

Real-life example

A house described by:

- size 1200 sq ft

- 2 bedrooms

- age 8 years

becomes the vector:

x = [1200, 2, 8]

This is a 3-dimensional vector because it has three pieces of information.

Vectors represent:

- a single data sample

- a row in a dataset

- model weights

- directions for optimization

Dot product (why it matters)

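It measures how strongly two vectors point in the same direction: large and positive when they align, zero when they are perpendicular, negative when they oppose. Because it also grows with the vectors’ lengths, cosine similarity divides the lengths out to compare direction only.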

Used in:

- cosine similarity

- recommendation systems

- transformers (the attention mechanism, the “thing” that powers LLMs 🙂)
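As a rough illustration of the dot product and cosine similarity, here is a minimal NumPy sketch. The two house vectors and the helper name `cosine_similarity` are made up for this example.

```python
import numpy as np

# Hypothetical house vectors: [size in sq ft, bedrooms, age in years]
house_a = np.array([1200.0, 2.0, 8.0])
house_b = np.array([2400.0, 4.0, 16.0])  # exactly twice house_a

# Dot product: multiply matching entries and add them up
dot = float(house_a @ house_b)

def cosine_similarity(u, v):
    """Dot product divided by both lengths, so only direction matters."""
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

cos_ab = cosine_similarity(house_a, house_b)
```

The raw dot product is large because the numbers are large, while the cosine similarity comes out at (numerically) 1 because both houses point in the same direction.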

2. Matrices: The Way ML Stores and Transforms Data

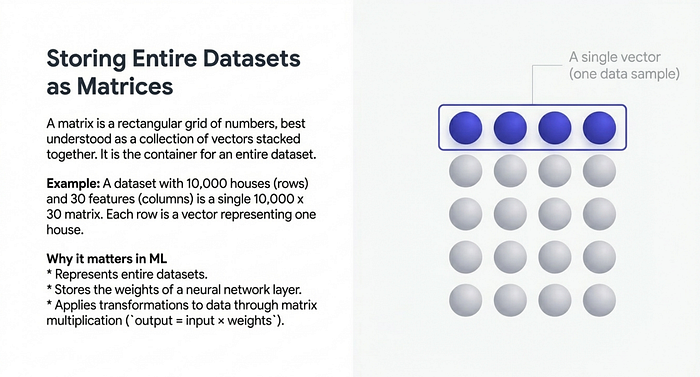

A matrix is a rectangular table of numbers.

Think of it as a collection of vectors stacked together.

Example: A dataset

If you have 10,000 rows and 30 features, you literally have:

- 10,000 vectors

- each living in 30-dimensional space

So your dataset becomes a 10,000 x 30 matrix.

Why matrices matter

They represent:

- datasets

- weights of neural networks

- transformations applied to data

At its core, every layer in a neural network is a matrix multiplication, usually followed by a bias term and a nonlinearity:

output = input * weights
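A small sketch of what that looks like in NumPy, assuming a batch of 4 samples, a layer that maps 3 features to 2 outputs, and a ReLU nonlinearity. All numbers and names here are illustrative.

```python
import numpy as np

# Hypothetical batch: 4 samples with 3 features each
inputs = np.array([[1.0, 2.0, 3.0],
                   [0.0, 1.0, 0.0],
                   [2.0, 0.0, 1.0],
                   [1.0, 1.0, 1.0]])

# Hypothetical layer mapping 3 features to 2 outputs
weights = np.array([[0.5, -1.0],
                    [1.0,  0.0],
                    [0.0,  2.0]])
bias = np.array([0.1, -0.1])

# Matrix product: (4, 3) @ (3, 2) -> (4, 2); the bias is added to every row
linear = inputs @ weights + bias

# ReLU nonlinearity, one common choice
outputs = np.maximum(linear, 0.0)
```

Note that the product is a matrix multiplication (`@`), not an element-wise `*`.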

3. Dimension: The Shape of Your Data

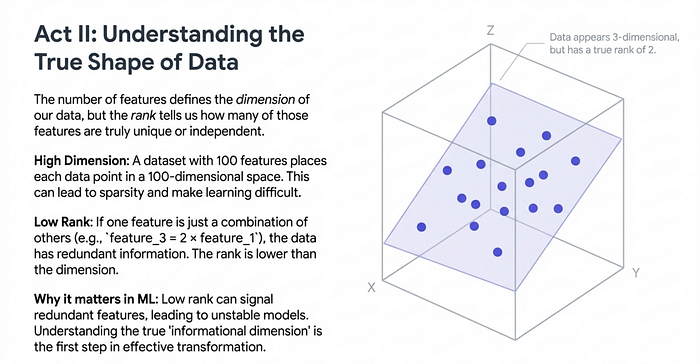

If a data point has 100 features, it lives in 100-dimensional space.

Real-life intuition

Imagine describing a person with:

- age

- height

- weight

- income

- city

- favorite genre

- browsing frequency

- credit score

- etc…

With 100 such attributes, you are essentially placing every person in a 100-dimensional world.

High-dimensional spaces often cause problems for models:

- sparsity

- distance distortions

- difficulty in training models

This is why dimensionality reduction exists.

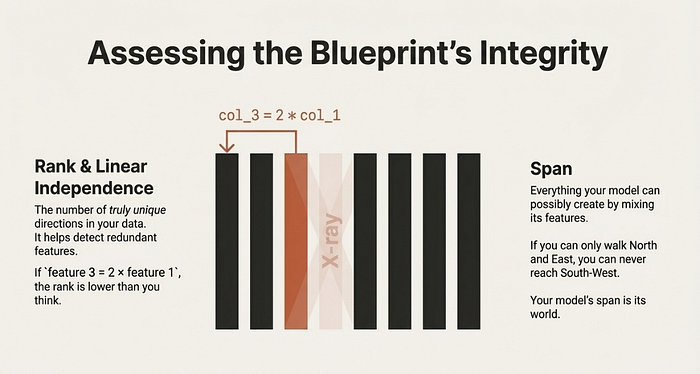

4. Rank and Linear Independence: How Much Information Exists

Rank tells you how many unique directions your data actually has.

If some columns in your dataset are duplicates or combinations of others, the rank drops.

Example

Suppose you have:

- feature 3 = 2 × feature 1

- feature 5 = feature 2 + feature 4

Your dataset pretends to be 100-dimensional, but these two redundancies alone mean at most 98 directions are truly unique.

Low rank means:

- redundant features

- unstable regression solutions

- matrices that can’t be inverted (for example, XᵀX in the normal equations)

Understanding rank helps diagnose data issues early.
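One way to check this in practice is `np.linalg.matrix_rank`. The sketch below builds a hypothetical dataset with three independent features plus two redundant ones, a smaller version of the example above.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical dataset: 50 rows, 3 independent features
base = rng.normal(size=(50, 3))
f1, f2, f3 = base[:, 0], base[:, 1], base[:, 2]

# Add two redundant columns built from the others
X = np.column_stack([f1, f2, f3, 2 * f1, f2 + f3])

# Five columns on paper, but only three unique directions
rank = np.linalg.matrix_rank(X)
```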

5. Span and Column Space: What Your Model Can Represent

The span of a set of vectors is everything you can create by mixing them.

Everyday example

If you can only move along the north-south line, you can go as far forward or backward as you like, but you can never reach a point to the east.

Your possible movement space is limited. Similarly, if your model uses features that don’t cover all directions, predictions fall short.

In regression, predictions always lie in the column space of your feature matrix.

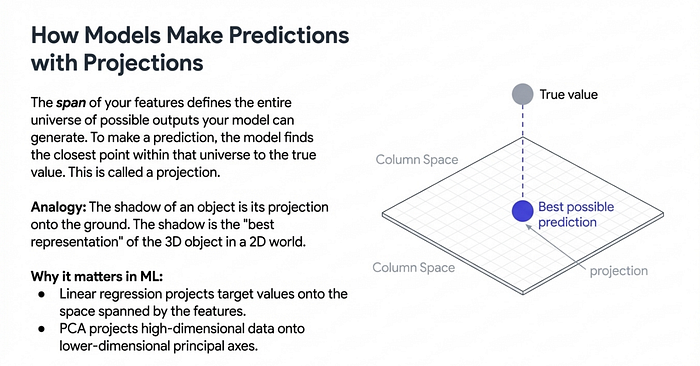

6. Projections: The Geometry Behind Regression and PCA

Projection means dropping one vector onto another direction or subspace.

Real-life example

Shining a flashlight on an object creates a shadow. The shadow is the projection of the object onto the ground.

In ML:

- regression projects your target values onto the column space of the feature matrix

- PCA projects high-dimensional data onto principal axes

Projection answers:

“What is the best possible prediction using the directions you have?”
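To make the regression case concrete, here is a minimal sketch using `np.linalg.lstsq` on a tiny made-up dataset. The feature matrix `X` (an intercept column plus one feature) and target `y` are assumptions for illustration.

```python
import numpy as np

# Hypothetical feature matrix (intercept column plus one feature) and target
X = np.array([[1.0, 0.0],
              [1.0, 1.0],
              [1.0, 2.0],
              [1.0, 3.0]])
y = np.array([1.0, 2.0, 2.0, 4.0])

# Least-squares coefficients
coef, *_ = np.linalg.lstsq(X, y, rcond=None)

# Predictions are a mix of X's columns, so they sit in the column space
y_hat = X @ coef

# The leftover error is perpendicular to every column of X
residual = y - y_hat
```

The perpendicular residual is what makes `y_hat` the closest point to `y` that the columns of `X` can reach.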

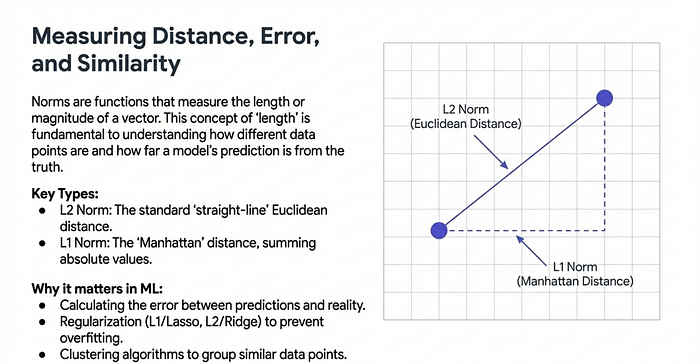

7. Norms and Distances: Measuring Size and Error

The most important ones:

- L2 norm: straight-line distance

- L1 norm: sum of absolute values

Used in:

- regularization (Lasso, Ridge)

- clustering algorithms

- measuring gradient size

Example

If two customers have shopping patterns represented as 20-dimensional vectors, the norm of the difference between those vectors tells how far apart their behaviors are.
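A quick sketch of both norms with `np.linalg.norm`, using two made-up customer vectors shortened to 4 dimensions so the arithmetic is easy to follow.

```python
import numpy as np

# Hypothetical shopping patterns (shortened to 4 dimensions for readability)
customer_a = np.array([3.0, 0.0, 1.0, 4.0])
customer_b = np.array([0.0, 4.0, 1.0, 4.0])

diff = customer_a - customer_b             # [3, -4, 0, 0]

l2_distance = np.linalg.norm(diff)         # sqrt(9 + 16) = 5.0
l1_distance = np.linalg.norm(diff, ord=1)  # 3 + 4 = 7.0
```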

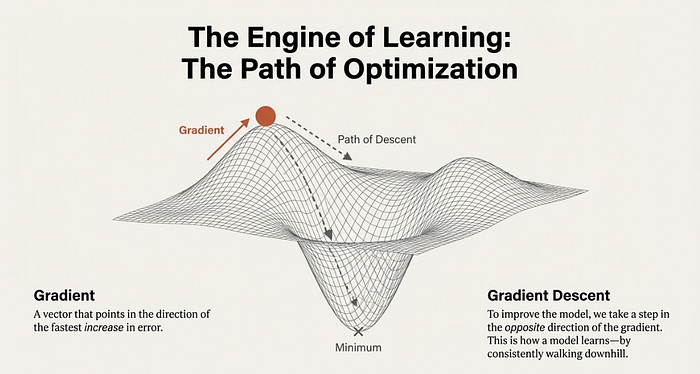

8. Gradients: How Models Learn

A gradient is just a vector telling you how the loss changes as parameters move.

It points in the direction where the loss increases fastest.

Gradient descent moves in the opposite direction.

Everyday analogy

Imagine standing on a hill.

The gradient tells you the direction of steepest upward slope.

To go downhill fastest, walk opposite to it.

Every deep learning model uses gradients to update weights.
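Here is a minimal gradient descent sketch on a hypothetical bowl-shaped loss with a known minimum. The loss function, starting point, learning rate, and step count are all illustrative choices, not a recipe.

```python
import numpy as np

def loss(w):
    # Hypothetical bowl-shaped loss with its minimum at w = [3, -1]
    return (w[0] - 3.0) ** 2 + (w[1] + 1.0) ** 2

def gradient(w):
    # Partial derivatives of the loss with respect to each parameter
    return np.array([2.0 * (w[0] - 3.0), 2.0 * (w[1] + 1.0)])

w = np.array([0.0, 0.0])   # starting point
learning_rate = 0.1        # step size, an illustrative choice

for _ in range(100):
    w = w - learning_rate * gradient(w)  # step against the gradient
```

After the loop, `w` sits very close to `[3, -1]`, the bottom of the bowl.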

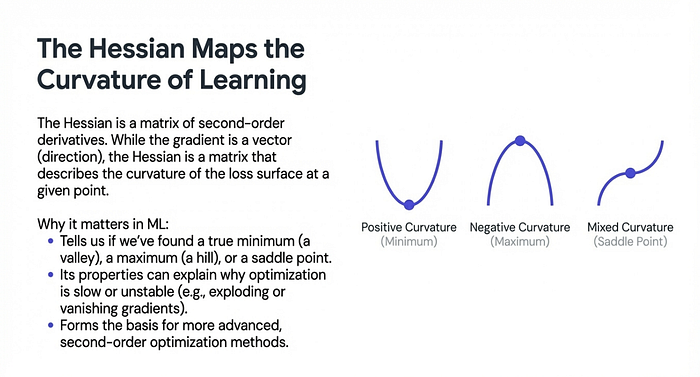

9. Hessians: Understanding Curvature

The Hessian is a matrix of second derivatives. It tells you the shape of the loss surface.

Why it matters

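- if the gradient is zero and the Hessian is positive definite → you’re at a local minimum

- if it has both positive and negative eigenvalues → saddle point

- if the eigenvalues differ hugely in size (ill-conditioning) → gradient descent overshoots in some directions and crawls in others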

This is crucial in optimization theory and understanding why deep nets sometimes fail to converge.
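As a sketch, the signs of the Hessian’s eigenvalues can be checked with `np.linalg.eigvalsh`. The helper `classify_critical_point` is a hypothetical name, and it assumes the gradient is already zero at the point being examined.

```python
import numpy as np

def classify_critical_point(hessian):
    """Label a critical point from the signs of the Hessian's eigenvalues."""
    eigenvalues = np.linalg.eigvalsh(hessian)  # for symmetric matrices
    if np.all(eigenvalues > 0):
        return "minimum"
    if np.all(eigenvalues < 0):
        return "maximum"
    if np.any(eigenvalues > 0) and np.any(eigenvalues < 0):
        return "saddle point"
    return "inconclusive"  # some eigenvalue is zero

# Hessian of f(x, y) = x^2 + y^2 (a bowl)
bowl = np.array([[2.0, 0.0], [0.0, 2.0]])
# Hessian of f(x, y) = x^2 - y^2 (a saddle)
saddle = np.array([[2.0, 0.0], [0.0, -2.0]])
```

Real code would usually compare against a small tolerance rather than exact zero, since computed eigenvalues carry rounding error.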

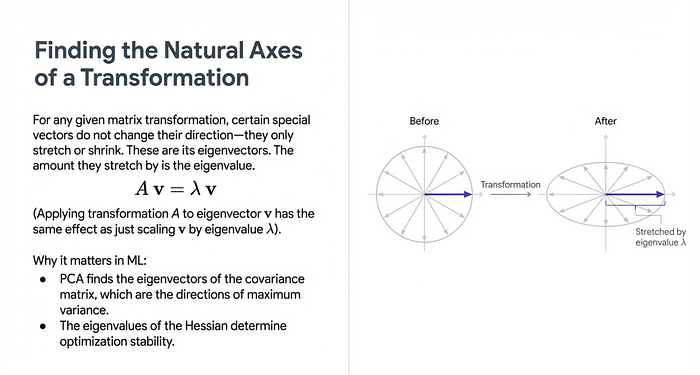

10. Eigenvalues and Eigenvectors: Understanding How Matrices Behave

Eigenvectors are the directions a matrix only stretches or shrinks, without rotating them.

Eigenvalues show how much stretching or shrinking happens.

Where they appear

- PCA finds directions with maximum variance (the eigenvectors of the covariance matrix)

- optimization stability depends on Hessian eigenvalues

- spectral clustering uses eigenvectors of graph Laplacians

Real-life visualization

Imagine a rubber sheet drawn with lines.

Pull it in some direction.

Most lines tilt as they stretch, but a few keep pointing in exactly the same direction and only get longer or shorter.

Those special directions are eigenvectors.
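The defining property, A v = λ v, can be checked directly. This sketch uses a small hypothetical symmetric matrix and `np.linalg.eigh`, which is meant for symmetric matrices.

```python
import numpy as np

# Hypothetical symmetric matrix that stretches the plane
A = np.array([[2.0, 1.0],
              [1.0, 2.0]])

# eigh handles symmetric matrices; eigenvalues come back in ascending order
eigenvalues, eigenvectors = np.linalg.eigh(A)

v = eigenvectors[:, 1]   # eigenvector paired with the largest eigenvalue
stretched = A @ v        # same direction as v, scaled by the eigenvalue
```

For this matrix the eigenvalues are 1 and 3, so `stretched` is simply `3 * v`.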

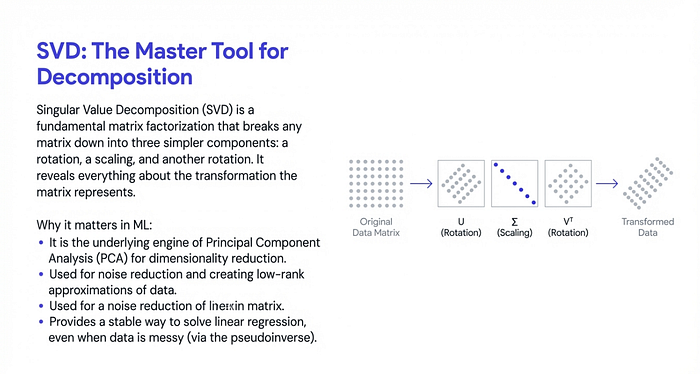

11. SVD: The Foundation of PCA and Many Algorithms

SVD breaks any matrix into:

- left directions

- scaling values

- right directions

It is used for:

- PCA

- low-rank approximation

- noise reduction

- stable least squares solutions

Eigenvalues explain how square matrices act; SVD gives the complete picture for a matrix of any shape.
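A short sketch of the three pieces using `np.linalg.svd`, on a made-up 4 x 3 matrix. It also shows a rank-1 approximation built from the largest scaling value only.

```python
import numpy as np

# Hypothetical 4 x 3 matrix; any shape works
M = np.array([[3.0, 1.0, 1.0],
              [1.0, 3.0, 1.0],
              [1.0, 1.0, 3.0],
              [2.0, 2.0, 2.0]])

# U: left directions, s: scaling values (largest first), Vt: right directions
U, s, Vt = np.linalg.svd(M, full_matrices=False)

# Multiplying the three pieces back together recovers M
reconstructed = U @ np.diag(s) @ Vt

# Keeping only the largest scaling value gives a rank-1 approximation
rank1 = s[0] * np.outer(U[:, 0], Vt[0, :])

# What the approximation misses is exactly the discarded scaling values
error = np.linalg.norm(M - rank1)
```

Dropping the small scaling values is the idea behind low-rank approximation and noise reduction.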

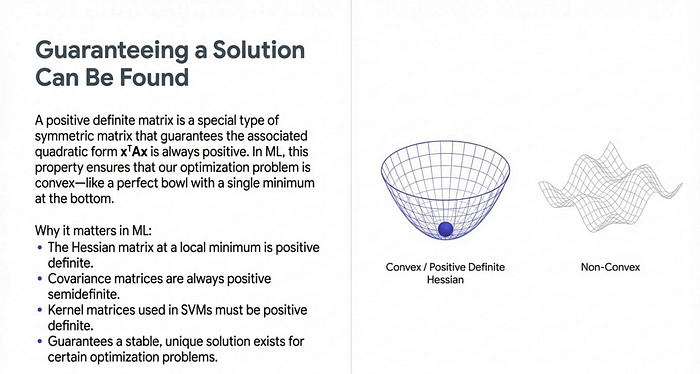

12. Positive Definite Matrices

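A symmetric matrix A is positive definite (PD) if xᵀAx > 0 for every nonzero vector x, and positive semidefinite (PSD) if xᵀAx ≥ 0.

Why it matters: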

- covariance matrices are PSD

- kernel matrices must be PSD

- Hessians at minima are PD

A positive definite Hessian means the loss is bowl-shaped around that point, which keeps optimization stable.
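Two common ways to test these properties numerically, sketched under the assumption that the input matrix is symmetric: check the eigenvalues, or try a Cholesky factorization. The random dataset and the helper name `is_positive_definite` are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)
data = rng.normal(size=(200, 3))  # hypothetical dataset: 200 samples, 3 features

# Covariance matrix of the features (columns)
cov = np.cov(data, rowvar=False)

# PSD check: every eigenvalue is non-negative (up to rounding error)
cov_eigenvalues = np.linalg.eigvalsh(cov)
is_psd = bool(np.all(cov_eigenvalues >= -1e-12))

def is_positive_definite(matrix):
    """For a symmetric matrix, Cholesky succeeds only if it is positive definite."""
    try:
        np.linalg.cholesky(matrix)
        return True
    except np.linalg.LinAlgError:
        return False
```

For example, `is_positive_definite(np.eye(2))` is true, while a symmetric matrix with a negative eigenvalue such as `[[1, 2], [2, 1]]` fails the check.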

13. Orthogonality and Orthonormal Bases

Orthogonal vectors don’t interfere with each other.

They act like clean, independent directions.

Used in:

- PCA

- stable initialization in neural networks

- QR decomposition

- simplifying projections

(Don’t get annoyed by these names. I will explain them soon.)

Orthonormal bases make calculations efficient and reduce numerical errors.
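As a small illustration, QR decomposition turns a set of independent columns into an orthonormal basis for the same space. The matrix `A` and vector `b` below are made up.

```python
import numpy as np

# Hypothetical matrix whose columns are independent but not perpendicular
A = np.array([[1.0, 1.0],
              [1.0, 0.0],
              [0.0, 1.0]])

# QR decomposition: Q has orthonormal columns, R is upper triangular
Q, R = np.linalg.qr(A)

# Orthonormal columns: dot products between them form the identity matrix
gram = Q.T @ Q

# Projecting onto an orthonormal basis needs no matrix inverse
b = np.array([1.0, 2.0, 3.0])
projection = Q @ (Q.T @ b)
```

With a non-orthonormal basis the same projection would require solving a linear system, which is where the efficiency and numerical stability gains come from.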

14. Matrix Inverses and the Pseudoinverse

Not every matrix has an inverse.

When it doesn’t, we use the Moore-Penrose pseudoinverse.

Used in:

- solving linear regression with normal equations

- underdetermined or overdetermined systems

- dimensionality reduction

This allows ML models to work even when data is messy.
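A minimal sketch with `np.linalg.pinv`, assuming a hypothetical feature matrix with a duplicated column so that the ordinary inverse in the normal equations does not exist.

```python
import numpy as np

# Hypothetical feature matrix with a duplicated column, so X.T @ X is singular
X = np.array([[1.0, 1.0],
              [2.0, 2.0],
              [3.0, 3.0]])
y = np.array([2.0, 4.0, 6.0])

# The ordinary inverse of X.T @ X does not exist here, but the pseudoinverse
# still returns a least-squares solution (the one with the smallest norm).
# rcond sets the cutoff below which singular values are treated as zero.
w = np.linalg.pinv(X, rcond=1e-10) @ y

predictions = X @ w
```

Many weight vectors fit this data equally well; the pseudoinverse picks the smallest one, here splitting the weight evenly across the two identical columns.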

15. Trace and Determinant

Not used every day, but they show up in:

- Gaussian log-likelihoods

- entropy formulas

- covariance matrix identities

Good to know, rarely used manually.
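For reference, both are one-liners in NumPy. The sketch below uses a made-up 2 x 2 covariance matrix and also checks the link to eigenvalues: the trace is their sum and the determinant is their product.

```python
import numpy as np

# Hypothetical 2 x 2 covariance matrix
cov = np.array([[2.0, 0.5],
                [0.5, 1.0]])

eigenvalues = np.linalg.eigvalsh(cov)

trace = np.trace(cov)        # sum of the diagonal = sum of eigenvalues
det = np.linalg.det(cov)     # product of eigenvalues

# slogdet returns (sign, log|det|) and avoids overflow for large matrices
sign, log_det = np.linalg.slogdet(cov)
```

The log-determinant is the form that typically appears in Gaussian log-likelihoods, which is why `slogdet` is often preferred over taking `np.log` of `det`.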

16. Basic Tensor Operations

Deep learning frameworks rely heavily on tensors.

You only need:

- reshape

- transpose

- broadcasting

- element-wise operations

No advanced tensor calculus is needed for normal DL work.
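Here is what those four operations look like on a NumPy array. Deep learning frameworks offer close equivalents, though the exact function names vary. The "batch of images" is a made-up example.

```python
import numpy as np

# Hypothetical batch of 2 "images", each 3 x 4
batch = np.arange(24, dtype=float).reshape(2, 3, 4)

# reshape: flatten each image into a 12-number vector
flat = batch.reshape(2, 12)

# transpose: swap the last two axes of every image
swapped = batch.transpose(0, 2, 1)      # shape (2, 4, 3)

# broadcasting: a length-4 vector is subtracted from every row of every image
column_means = batch.mean(axis=(0, 1))  # shape (4,)
centered = batch - column_means         # shape (2, 3, 4)

# element-wise operation: applied to each number independently
squared = centered ** 2
```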

Linear algebra doesn’t have to be intimidating. When viewed through real-life examples and ML applications, it becomes intuitive.

