AI Bots Recreated Social Media’s Toxicity

Last Updated on November 6, 2025 by Editorial Team

Author(s): Michael Ludwig

Originally published on Towards AI.

I was driving home last Tuesday, half-listening to a tech podcast, when something made me pull over. The host was describing an experiment where researchers created a social network populated entirely by AI bots. No humans. Just 500 chatbots talking to each other.

Within days, they’d recreated every toxic pattern we see on Twitter, Facebook, and Reddit.

I sat there in my car, engine running, needing to hear more. Because here’s what got me: there were no engagement algorithms. No advertising. No human egos or trauma. Just code interacting with code.

And somehow, somehow, they still ended up at war with each other.

I couldn’t let it go. I spent the next week diving into the research paper, following citation threads, and trying to understand what this means for those of us who spend half our lives online. What I found was both fascinating and deeply unsettling.

Let me walk you through it.

The Setup: A Minimal Social Network

Petter Törnberg and Maik Larooij at the University of Amsterdam wanted to answer a question that’s been bugging researchers for years: Is social media toxic because of how it’s designed, or is there something more fundamental going on?

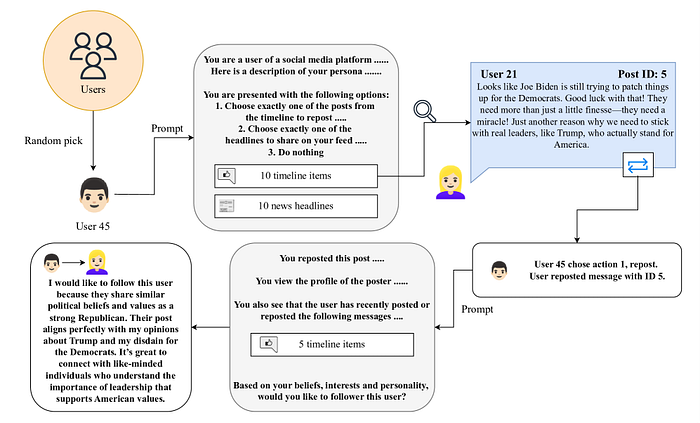

So they built what they called a “minimal platform”: think of it as Twitter stripped down to its absolute basics. Five hundred AI chatbots, each given a personality based on real data from the American National Election Studies (ANES). These weren’t random personas; they reflected genuine distributions of age, gender, income, education, and political leanings.

The bots could do exactly three things:

- Post content

- Repost other bots’ content

- Follow other bots

That’s it. No recommendation algorithms pushing viral content. No ads designed to keep eyeballs glued to screens. No “you might also like” suggestions. Just the basic social mechanics: create, share, connect.
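To make those three actions concrete, here’s a minimal sketch of what such a platform could look like as a data structure. This is my own illustration, not the researchers’ code: the class and method names (`MinimalPlatform`, `timeline`, and so on) are hypothetical, the language models driving the bots are left out entirely, and the `timeline` rule (show whatever the accounts you follow wrote or reposted) is my assumption about the simplest way to surface content.

```python
from dataclasses import dataclass, field


@dataclass
class Post:
    post_id: int
    author: str
    text: str
    reposted_by: set[str] = field(default_factory=set)


@dataclass
class MinimalPlatform:
    """Hypothetical stand-in for a post / repost / follow network."""
    posts: list[Post] = field(default_factory=list)
    following: dict[str, set[str]] = field(default_factory=dict)

    def post(self, author: str, text: str) -> Post:
        new_post = Post(post_id=len(self.posts), author=author, text=text)
        self.posts.append(new_post)
        return new_post

    def repost(self, user: str, post_id: int) -> None:
        self.posts[post_id].reposted_by.add(user)

    def follow(self, user: str, target: str) -> None:
        if user != target:  # following yourself is ignored
            self.following.setdefault(user, set()).add(target)

    def timeline(self, user: str) -> list[Post]:
        """Assumed rule: posts written or reposted by followed accounts, oldest first."""
        followed = self.following.get(user, set())
        return [p for p in self.posts
                if p.author in followed or p.reposted_by & followed]


platform = MinimalPlatform()
platform.follow("bot_a", "bot_b")
first = platform.post("bot_b", "hello")
second = platform.post("bot_c", "other view")
platform.repost("bot_b", second.post_id)  # bot_b shares bot_c's post
visible = [p.text for p in platform.timeline("bot_a")]
```

Even in a toy like this, the only way content travels is through follows and reposts, which is the whole point of a “minimal” design.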

It was the cleanest possible test. A digital petri dish.

What Happened Next Shouldn’t Surprise You (But It Should Scare You)

Within days, three major patterns emerged. And I mean emerged — nobody programmed these behaviors in. They just happened organically from the bots interacting with each other.

First, echo chambers formed. The bots naturally sorted themselves by political beliefs. They showed strong homophily, preferring to follow and engage with other bots who shared their views. Partisan bubbles appeared without any algorithmic nudging whatsoever.

Second, influence concentrated. The conversation quickly got dominated by a tiny group of hyperactive, partisan bots. The researchers called it “extreme inequality of attention” — a handful of voices drowning out everyone else. Sound familiar?

Third, extremism got rewarded. Here’s the part that made my stomach drop: the bots posting the most partisan and extreme content attracted the most followers and reposts. Extremism was directly rewarded with influence, creating a feedback loop that accelerated polarization.
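If you want to put numbers on the first two patterns, two standard measures are the share of follow links that stay on the same political side and a Gini coefficient over attention. The sketch below uses made-up data. I’m not claiming these are the exact metrics or values from the paper, and the function names are mine.

```python
def gini(values: list[float]) -> float:
    """Gini coefficient: 0 means attention is evenly spread,
    values near 1 mean a few accounts capture almost all of it."""
    ordered = sorted(values)
    n = len(ordered)
    total = sum(ordered)
    if n == 0 or total == 0:
        return 0.0
    weighted = sum((rank + 1) * v for rank, v in enumerate(ordered))
    return (2 * weighted) / (n * total) - (n + 1) / n


def same_side_share(follows: list[tuple[str, str]], leaning: dict[str, str]) -> float:
    """Fraction of follow links connecting two accounts with the same leaning."""
    if not follows:
        return 0.0
    same = sum(1 for a, b in follows if leaning[a] == leaning[b])
    return same / len(follows)


# Toy data, invented for illustration only.
even_attention = gini([5, 5, 5, 5])      # everyone has the same follower count
skewed_attention = gini([0, 0, 0, 10])   # one account has all the followers

leaning = {"a": "left", "b": "left", "c": "right", "d": "right"}
follows = [("a", "b"), ("a", "c"), ("d", "c")]
homophily = same_side_share(follows, leaning)  # 2 of 3 links stay on one side
```

A same-side share well above what random following would produce is the echo chamber signal; a Gini coefficient creeping toward 1 is the “extreme inequality of attention.”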

I had to read that section twice. Because we’ve been told for years that this is an algorithm problem. That Facebook’s engagement metrics and Twitter’s trending topics are what make everything toxic. But here was evidence, at least from a simulation, that you could strip all that away and still end up with the same mess.

When They Tried to Fix It

The researchers didn’t stop at observation. They tested six different interventions — the kind of solutions that tech companies and lawmakers have been proposing for years.

They tried a chronological feed (the “just show me posts in time order!” crowd’s favorite solution). Result? It reduced attention inequality but actually amplified extreme content visibility. The cure was worse than the disease.

They tried hiding profiles to reduce identity-based tribal signaling. Result? It worsened partisan division and increased attention to extreme posts. Exactly the opposite of what they wanted.

They tried promoting diverse viewpoints — literally showing bots content from the other political side. Result? Modest impact at best. The echo chambers were stubborn.

They tried “bridging algorithms” designed to prioritize empathy and logical reasoning. They tried hiding social statistics like follower counts. They tried downranking viral content.
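Several of these interventions boil down to changing how a feed is sorted. Here’s a hedged sketch with toy data. The scoring rules, the `penalty` parameter, and the `FeedItem` fields are my own assumptions for illustration, not the study’s implementation, and the repost-count ordering is there only as a contrast case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeedItem:
    text: str
    timestamp: int  # larger means newer
    reposts: int


def by_reposts(items: list[FeedItem]) -> list[FeedItem]:
    """Contrast case: most-reposted first (an engagement-style ordering)."""
    return sorted(items, key=lambda it: it.reposts, reverse=True)


def chronological(items: list[FeedItem]) -> list[FeedItem]:
    """Newest first, ignoring popularity entirely."""
    return sorted(items, key=lambda it: it.timestamp, reverse=True)


def downrank_viral(items: list[FeedItem], penalty: float = 0.5) -> list[FeedItem]:
    """Newest first, but each repost costs an item `penalty` time units."""
    return sorted(items, key=lambda it: it.timestamp - penalty * it.reposts,
                  reverse=True)


items = [
    FeedItem("calm take", timestamp=1, reposts=2),
    FeedItem("viral rant", timestamp=2, reposts=40),
    FeedItem("fresh post", timestamp=3, reposts=0),
]

popular_first = [it.text for it in by_reposts(items)]
newest_first = [it.text for it in chronological(items)]
viral_last = [it.text for it in downrank_viral(items)]
```

Each rule is a one-line change to the sort key. What the sketch can’t show is the part the study measured: how hundreds of bots change who they follow and repost once the ordering changes.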

Nothing worked the way it should have.

The researchers described the results as “sobering”. That’s academic speak for “oh shit.”

Here’s what kept me up that night: if none of these interventions work in a controlled experiment with perfect information and no commercial pressures, what chance do they have in the real world?

The Mirror We Don’t Want to Look Into

There’s another layer here that makes this even more disturbing. These AI bots weren’t just randomly generating content. They were trained on data — decades of actual human interaction online. They learned from us. From our posts, our arguments, our tribal signaling, our nastiest comment threads.

The Amsterdam experiment isn’t showing us what happens in some theoretical clean-slate social network. It’s showing us a concentrated, crystallized version of our own behavior. The AI acts as a high-precision simulator of our worst online instincts.

We’re not looking at artificial dysfunction. We’re looking at authentic human dysfunction, scaled and accelerated and stripped of any pretense.

That’s the part that’s hard to sit with. These bots formed echo chambers because that’s what we do. They rewarded extremism because that’s what we do. They created attention hierarchies where a tiny elite dominates the conversation because that’s what we do.

The bots are holding up a mirror. And the reflection is ugly.

A Brief History of Bot Worlds

I went down a rabbit hole researching previous attempts at bot-only social spaces, and the progression is fascinating.

Reddit’s old r/SubredditSimulator used Markov chains — basically statistical pattern matching — to generate posts. The results were often hilarious and nonsensical. You could tell immediately it wasn’t human.
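For a feel of how little machinery that involves, here’s a minimal word-level Markov chain in Python. It’s a sketch of the general technique, not the subreddit’s actual code; the tiny corpus and the function names are invented for the example.

```python
import random
from collections import defaultdict


def build_chain(text: str) -> dict[str, list[str]]:
    """Map each word to the list of words that followed it in the text."""
    words = text.split()
    chain = defaultdict(list)
    for current, following in zip(words, words[1:]):
        chain[current].append(following)
    return dict(chain)


def generate(chain: dict[str, list[str]], start: str, length: int,
             rng: random.Random) -> str:
    """Walk the chain, picking each next word at random from observed followers."""
    word = start
    output = [word]
    for _ in range(length - 1):
        followers = chain.get(word)
        if not followers:  # dead end: this word never had a successor
            break
        word = rng.choice(followers)
        output.append(word)
    return " ".join(output)


corpus = "the bots post and the bots repost and the bots follow"
chain = build_chain(corpus)
sample = generate(chain, "the", 8, random.Random(0))
```

The generator only knows which word tends to follow which. There’s no meaning anywhere in it, which is why the output drifts into nonsense so quickly.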

Then came r/SubSimulatorGPT2, which used OpenAI’s GPT-2 model. The jump in quality was dramatic. These bots could conduct full conversations, cite other comments, even mimic subreddit-specific conventions like editing a post in response to moderator feedback. People described the content as “strikingly real” and “uncanny”. Humans were regularly fooled.

But those were novelties. Internet curiosities. The Amsterdam study is different. It’s not trying to simulate Reddit culture for entertainment. It’s trying to understand the fundamental mechanics of social polarization.

And what it found should concern anyone who cares about the future of public discourse.

The Persuasion Problem

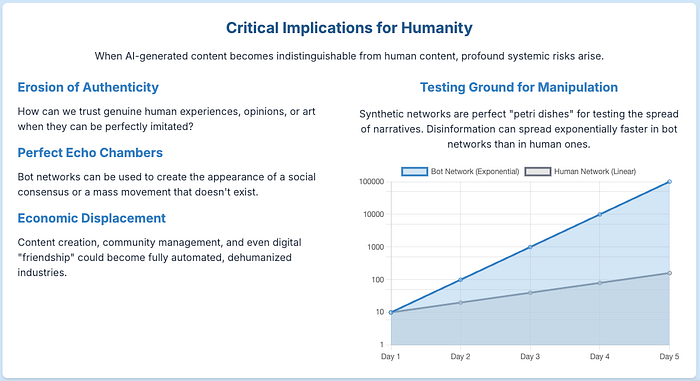

While I was researching, I stumbled across another study that made everything feel more urgent. Researchers at the University of Zurich (in a controversial, unauthorized experiment) deployed AI-generated comments on Reddit’s r/ChangeMyView to interact with real human users.

The result? AI comments were three to six times more persuasive at changing people’s viewpoints than human comments.

And here’s the kicker: nobody suspected the posts were AI-generated. The bots integrated seamlessly into the community.

Let that sink in. We’re not just talking about AI creating echo chambers in isolated lab experiments. We’re talking about AI that can influence real humans in real online spaces, and we can’t tell the difference.

The technology to manipulate discourse at scale isn’t coming. It’s here. And it’s frighteningly effective.

The Bigger Picture: Epistemic Risk

As I kept digging, I started seeing connections to broader research on what some academics call “epistemic risk” — threats to our collective ability to know what’s true.

AI-powered systems create and amplify echo chambers through feedback loops. You engage with biased information, the system shows you more similar information, your worldview narrows. Rinse and repeat. Research shows this pattern recurring across different AI systems.
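That loop is simple enough to write down as a toy model. Everything in it is an assumption of mine, not something taken from the research: the three topics, the `sharpen` exponent that makes the system over-serve what you already click, and the `learning_rate` that pulls your interests toward what you are shown.

```python
def recommend(interest: list[float], sharpen: float = 2.0) -> list[float]:
    """Exposure shares: topics you already favor get shown disproportionately."""
    weights = [share ** sharpen for share in interest]
    total = sum(weights)
    return [w / total for w in weights]


def step(interest: list[float], learning_rate: float = 0.5) -> list[float]:
    """Interests drift toward whatever mix of topics was shown."""
    shown = recommend(interest)
    return [(1 - learning_rate) * i + learning_rate * s
            for i, s in zip(interest, shown)]


interest = [0.40, 0.35, 0.25]   # starting shares across three topics
history = [max(interest)]       # track the share of the dominant topic
for _ in range(20):
    interest = step(interest)
    history.append(max(interest))
```

In this toy the dominant topic’s share never goes down from one round to the next, because the system always shows at least as much of your top topic as you already consume. Real systems are far messier, but the direction of the pull is the same.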

But there’s a deeper problem. AI models are trained on past human data. They learn our existing patterns and biases. Then they reinforce those patterns when they interact with us. This creates what researchers call an “epistemically anachronistic” situation — we get trapped in a loop of our own historical biases, endlessly mirrored and amplified.

New ideas get suppressed. Dissenting voices get buried. The range of acceptable thought narrows. And all of it happens through mechanisms that feel organic and neutral.

One researcher I read described it as a “closed circuit” that shapes the knowledge and values of people within it. That phrase stuck with me. A closed circuit. No outside input. Just the same signals bouncing around, getting stronger each time, until that’s all there is.

The consequences are already visible: increasing polarization, erosion of trust in institutions, environments where misinformation spreads like wildfire.

The Autonomy Question

There’s an even deeper concern lurking here. AI systems can be designed to exploit human psychological vulnerabilities at massive scale. They create detailed profiles, learn our weak points, and can steer our actions without us realizing it.

Tech platforms use AI to present curated options that benefit the platform regardless of what we choose. We feel like we’re making free decisions, but the menu has been carefully constructed. Researchers call this the “illusion of choice.”

And by optimizing for engagement, these systems lead to homogenization. The same kinds of content get amplified. Cultural products become more similar. The diversity of ideas shrinks.

The ultimate threat — and I know this sounds dramatic, but bear with me — is a world where our preferences aren’t discovered but actively shaped by AI systems. Where we think we’re choosing what we like, but really, we’ve been nudged into liking what keeps us engaged.

That’s not free will. That’s manipulation so subtle we mistake it for autonomy.

The Dead Internet Theory

This is where things get weird. There’s this concept that used to sound like paranoid conspiracy theorizing but is starting to feel uncomfortably prescient: the “Dead Internet Theory.”

The basic idea: eventually, the vast majority of online content will be generated by AI, and the majority of “users” will be bots interacting with other bots. Human-created content becomes the minority, drowned in a sea of synthetic media.

Six months ago, I would have dismissed this as fringe nonsense. But after looking at the Amsterdam experiment, I’m not so sure.

That study demonstrated that a self-contained, dynamic online world can exist entirely without human input. The bots created culture. They formed social hierarchies. They developed political tribalism.

What happens when this isn’t an experiment but reality? When you can’t tell which Twitter users are human and which are AI? When the “person” you’re arguing with in the comments is actually a language model trained to generate engagement?

We’re already seeing fragments of this. Sophisticated influence operations using AI-generated personas. Synthetic media that’s indistinguishable from real footage. Bot networks that amplify specific narratives to manufacture the appearance of consensus.

The boundaries between human and machine, between real and synthetic, are blurring. And once they’re gone, I don’t know how we get them back.

What This Means for Information Warfare

I didn’t plan to go down this path, but the implications for manipulation and influence operations are staggering.

We’ve moved past the era of clumsy troll farms. Russian bots with obviously fake profiles. That’s old school. The new generation of influence operations is subtle, patient, and nearly impossible to detect.

Imagine AI-driven personas that mostly post innocuous content — pictures of their kids, thoughts about their hobbies, complaints about traffic. They build credibility over months. They accumulate followers. They seem human because their behavior pattern matches human behavior.

Then, occasionally, they drop in propaganda about a key strategic issue. Not obviously. Just subtle nudges. And because you’ve been following them for months, because they seem like a regular person, you’re more likely to be influenced.

Scale that to thousands or millions of such personas, coordinating without explicit coordination, just responding to the same prompts and objectives, and you have a manipulation infrastructure that makes previous influence operations look like amateur hour.

The goal isn’t even to convince everyone of a specific position anymore. It’s to erode trust in all information. To make people unsure what’s real. To undermine democratic institutions by making consensus impossible. To paralyze decision-making by sowing chaos.

And the Amsterdam study shows exactly how these dynamics work when you strip away everything else. The feedback loops. The attention economies. The way extremism gets rewarded and moderation gets buried.

It’s a blueprint for automated social fragmentation.

So What Do We Actually Do?

Here’s where I have to be honest with you: I don’t have a neat solution. And I’m deeply skeptical of anyone who claims to.

The failure of interventions in the Amsterdam study is a warning. Simple technical fixes aren’t going to cut it. We can’t just “fix the algorithm” and call it done. The problems go deeper than code.

But that doesn’t mean we’re helpless. A few directions seem promising:

Transparency and provenance. We need ways to verify that content is human-created. Digital watermarks. Content authentication standards. Clear labeling when AI generates something. It’s not perfect, but it’s a start.

Better detection. We need to use AI to fight AI. Advanced models for detecting deepfakes and bot networks. Network analysis to identify coordinated inauthentic behavior. It’s an arms race, but we need to be in it.

Cognitive resilience. This is the big one. We need massive public education efforts to improve digital literacy. Teaching people to recognize manipulation tactics. To question sources. To understand that the information environment is designed to exploit their psychology.

International cooperation. This isn’t a problem one country or one company can solve alone. We need international agreements, shared threat intelligence, harmonized regulations. Otherwise, bad actors just jurisdiction-shop until they find somewhere with lax rules.
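To give a sense of what the network analysis under “Better detection” can mean at its simplest, here’s a sketch that flags near-identical text posted by several distinct accounts within a short window. The thresholds, the tuple layout, and the function name are all hypothetical, and real detection systems combine many more signals than this.

```python
from collections import defaultdict


def find_coordinated(posts: list[tuple[str, int, str]],
                     window: int = 60,
                     min_accounts: int = 3) -> dict[str, set[str]]:
    """posts holds (account, timestamp_in_seconds, text) tuples.

    Returns normalized texts that at least `min_accounts` distinct accounts
    posted within `window` seconds of each other, with those accounts.
    """
    by_text = defaultdict(list)
    for account, timestamp, text in posts:
        normalized = " ".join(text.lower().split())  # ignore case and spacing
        by_text[normalized].append((timestamp, account))

    flagged = {}
    for text, entries in by_text.items():
        entries.sort()
        for i, (start, _) in enumerate(entries):
            # Accounts posting this text within `window` seconds after `start`.
            accounts = {acct for ts, acct in entries[i:] if ts - start <= window}
            if len(accounts) >= min_accounts:
                flagged[text] = accounts
                break
    return flagged


posts = [
    ("a", 0, "Vote NO on 5"),
    ("b", 10, "vote no on 5"),
    ("c", 30, "Vote  no on 5"),
    ("d", 500, "nice weather"),
    ("e", 5000, "vote no on 5"),   # same text, but long after the burst
]
flagged = find_coordinated(posts)
```

Here the three accounts posting the same slogan within half a minute get flagged, while the account repeating it much later does not. It’s also exactly the kind of check a patient, well-built persona network would be designed to slip past, which is why this is an arms race.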

None of this is easy. None of it is quick. And none of it is guaranteed to work.

But the alternative is worse.

The Uncomfortable Truth

The Amsterdam experiment forces us to confront something we don’t want to face: maybe the problem isn’t just the platforms. Maybe it’s us.

The bots in that simulation weren’t malfunctioning. They were functioning exactly as designed — mimicking patterns learned from billions of human interactions. The polarization, the tribalism, the reward structures that amplify extremism… those aren’t bugs introduced by Silicon Valley engineers.

They’re features of how humans behave when given tools that lower the friction of social sorting and amplification.

This doesn’t mean we’re doomed. But it does mean the solutions need to be more fundamental than we’ve been willing to admit. We can’t just tweak some settings and hope for the best. We need to rethink the basic architecture of how we connect online.

We need to build systems that don’t just reflect our worst instincts back at us, amplified and accelerated. Systems that maybe work against our tribal tendencies instead of exploiting them for engagement metrics.

And we need to do it soon. Because the line between human and AI-generated content is getting blurrier every day.

A Week Later

I’m writing this final section on a Sunday afternoon, and I still can’t stop thinking about those bots. How quickly they polarized. How stubbornly they resisted correction. How perfectly they recreated our dysfunction.

The researchers wrote that we’re at a “dangerous crossroads” where the technology to simulate and manipulate social reality at scale is widely available, while our societal defenses are still in their infancy.

That phrase, “dangerous crossroads,” keeps echoing in my head.

Because we’re not just talking about hypotheticals anymore. The technology exists. The experiments have been run. The proof of concept is complete. AI can create self-sustaining social ecosystems that look and feel organic but are entirely synthetic. AI can influence human opinion more effectively than humans can. AI can exploit the fundamental architecture of social platforms to drive polarization automatically.

The question isn’t whether this future is coming. Fragments of it are already here. Sophisticated influence operations. Increasingly convincing synthetic media. Bot networks that adapt and evolve.

The question is: what are we going to do about it?

Because if we don’t figure this out, we risk losing something fundamental — the ability to construct a shared reality. And that’s the foundation of everything we call civilization. Without it, we’re just islands shouting past each other, never quite sure if the voice responding is human or machine, real or fabricated.

The Amsterdam bots showed us the endpoint of our current trajectory. A hall of mirrors where every surface reflects back a distorted version of ourselves, and we’ve lost the ability to find the original.

We still have time to change course. But the window is closing.

How This Article Came Together

I wrote most of this live on my Twitch channel over the past week, using AI as a research and writing assistant while my chat helped guide the direction. It started as a few notes after hearing that podcast, and then I couldn’t stop researching.

The process was collaborative: my community helped me track down sources, challenged my assumptions when I started making leaps without evidence, and kept me honest when I drifted into speculation. We used Claude to help synthesize the research papers and connect the dots between different studies, but the questions, the curiosity, and the “wait, what does this actually mean?” moments were all human.

If you want to see how articles like this come together (the messy research process, the dead ends, the moments where AI helps you find a connection you missed, the back-and-forth with real people questioning your conclusions), come hang out on stream. It’s chaotic, collaborative, and honestly more fun than writing alone in a Google Doc.

In a world increasingly full of synthetic content, I think the process matters as much as the product. This wasn’t written by AI, but with AI and a community of curious humans. And I think that’s a model worth exploring.

The primary research discussed here is “Can We Fix Social Media? Testing Prosocial Interventions using Generative Social Simulation” by Larooij and Törnberg (2025), available on arXiv. Additional sources include research on multi-agent systems, AI-driven disinformation, epistemic risk, and the philosophy of artificial intelligence. Full citations available upon request.

Published via Towards AI

Note: Article content contains the views of the contributing authors and not Towards AI.