Agents 2.0: From Shallow Loops to Deep Agents

Last Updated on November 25, 2025 by Editorial Team

Author(s): Kushal Banda

Originally published on Towards AI.

For the past year, building an AI agent usually meant one thing: setting up a while loop that takes a user prompt, sends it to an LLM, parses a tool call, executes the tool, sends the result back, and repeats. This is what we call a Shallow Agent or Agent 1.0.

This architecture is fantastically simple and works well for transactional tasks like “What’s the weather in Tokyo and what should I wear?” But when asked to perform a task that requires 50 steps over three days, these agents invariably get distracted, lose context, enter infinite loops, or hallucinate, because the task requires too many steps for a single context window.

We are seeing an architectural shift towards Deep Agents, or Agents 2.0. These systems do not just react in a loop. They combine agentic patterns to plan, manage persistent memory and state, and delegate work to specialized sub-agents to solve complex, multi-step problems.

Agents 1.0: The Limits of the “Shallow” Loop

To understand where we are going, we must understand where we are. Most agents today are “shallow”: they rely entirely on the LLM’s context window (the conversation history) as their state. A typical run looks like this:

- User Prompt: “Find the price of Apple stock and tell me if it’s a good buy.”

- LLM Reason: “I need to use a search tool.”

- Tool Call: search("AAPL stock price")

- Observation: The tool returns data.

- LLM Answer: Generates a response based on the observation or calls another tool.

- Repeat: Loop until done.
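To make the loop concrete, here is a minimal, framework-free sketch. The `scripted_llm` function and the stub `search` tool are hypothetical stand-ins for a real model and a real search API; the point is that the message list `history` is the agent's only state.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class LLMStep:
    """One model turn: either a tool call or a final answer."""
    tool: Optional[str] = None
    arg: str = ""
    answer: Optional[str] = None


def scripted_llm(history: list) -> LLMStep:
    """Hypothetical stand-in for a model: search once, then answer."""
    if not any(msg["role"] == "tool" for msg in history):
        return LLMStep(tool="search", arg="AAPL stock price")
    return LLMStep(answer="Based on the search result: ...")


# Hypothetical stub tool; a real agent would call a search API here.
TOOLS: dict = {"search": lambda query: f"<stub results for {query!r}>"}


def shallow_agent(prompt: str, llm: Callable = scripted_llm, max_steps: int = 15):
    history = [{"role": "user", "content": prompt}]  # the agent's ONLY state
    for _ in range(max_steps):
        step = llm(history)                  # LLM reasons over the whole history
        if step.answer is not None:          # LLM answers: done
            history.append({"role": "assistant", "content": step.answer})
            return step.answer, history
        result = TOOLS[step.tool](step.arg)  # tool call
        history.append({"role": "assistant", "content": f"call {step.tool}({step.arg!r})"})
        history.append({"role": "tool", "content": result})  # observation
    return "Stopped: step limit reached", history  # out of budget


answer, history = shallow_agent("Find the price of Apple stock and tell me if it's a good buy.")
print(answer)
print(len(history), "messages in context")
```

Every observation is appended to `history`, so the context only grows; nothing lives outside it.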

This architecture is stateless and ephemeral: the agent’s entire “brain” lives in the context window. When a task becomes complex, for example “Research 10 competitors, analyze their pricing models, build a comparison spreadsheet, and write a strategic summary”, it will fail due to:

- Context Overflow: The history fills up with tool outputs (HTML, messy data), pushing instructions out of the context window.

- Loss of Goal: Amidst the noise of intermediate steps, the agent forgets the original objective.

- No Recovery Mechanism: If it goes down a rabbit hole, it rarely has the foresight to stop, backtrack, and try a new approach.
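The context-overflow failure is easy to reproduce with a toy model. The sketch below assumes a naive policy that keeps only the newest messages fitting a fixed token budget, and it counts one token per word; real models and frameworks tokenize and truncate differently (many pin the system prompt), but the sketch shows how noisy tool output can crowd out the original goal.

```python
def approx_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())


def fit_to_window(history: list, budget: int) -> list:
    """Keep the newest messages that fit in the token budget, dropping older ones."""
    kept, used = [], 0
    for msg in reversed(history):
        cost = approx_tokens(msg)
        if used + cost > budget:
            break
        kept.append(msg)
        used += cost
    return list(reversed(kept))


goal = "GOAL: research 10 competitors and write a strategic summary"
history = [goal]
for i in range(10):
    # Each tool call dumps about 50 words of noisy HTML into the conversation.
    history.append(f"tool output {i}: " + "<div> " * 50)

window = fit_to_window(history, budget=200)
print(len(window), "messages fit; goal still visible:", goal in window)
```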

Shallow agents are great at tasks that take 5–15 steps. They are terrible at tasks that take 500.

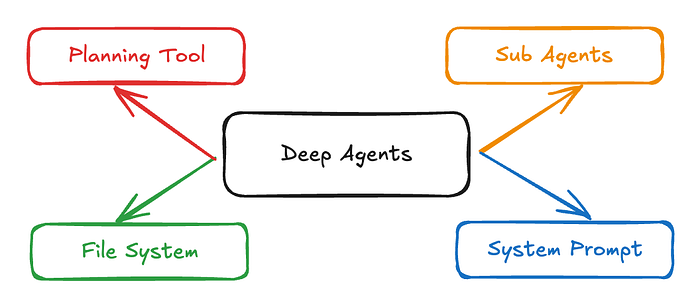

The Architecture of Agents 2.0 (Deep Agents)

Deep Agents decouple planning from execution and manage memory external to the context window. The architecture consists of four pillars.

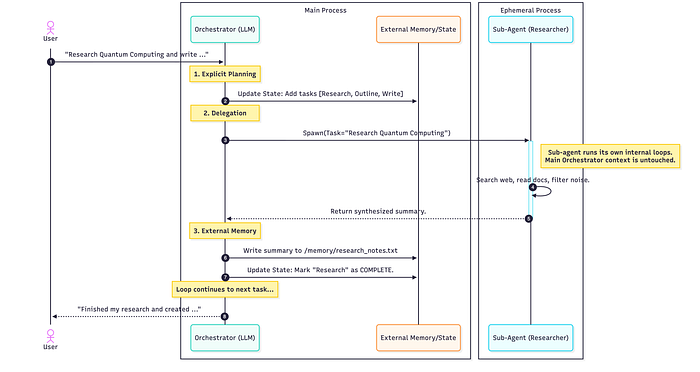

Pillar 1: Explicit Planning

Shallow agents plan implicitly via chain-of-thought (“I should do X, then Y”). Deep agents use tools to create and maintain an explicit plan, which can be as simple as a to-do list in a Markdown document.

Between every step, the agent reviews and updates this plan, marking steps as pending, in_progress, or completed, and adding notes. If a step fails, it doesn’t just retry blindly; it updates the plan to accommodate the failure. This keeps the agent focused on the high-level task.
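A minimal sketch of such a plan, using the three statuses named above plus free-form notes. The `Todo` and `Plan` classes, the `execute` callback, and the fallback rule (insert an alternative step after a failure instead of retrying the same one) are illustrative assumptions, not any framework's API.

```python
from dataclasses import dataclass, field
from typing import Callable, Literal, Optional

Status = Literal["pending", "in_progress", "completed"]


@dataclass
class Todo:
    content: str
    status: Status = "pending"
    notes: list = field(default_factory=list)


@dataclass
class Plan:
    todos: list

    def next_pending(self) -> Optional[Todo]:
        return next((t for t in self.todos if t.status == "pending"), None)

    def render(self) -> str:
        boxes = {"pending": "[ ]", "in_progress": "[~]", "completed": "[x]"}
        return "\n".join(f"- {boxes[t.status]} {t.content}" for t in self.todos)


def run(plan: Plan, execute: Callable, max_steps: int = 20) -> Plan:
    for _ in range(max_steps):
        todo = plan.next_pending()
        if todo is None:
            break                       # every step is completed
        todo.status = "in_progress"     # update the plan before acting
        ok, note = execute(todo.content)
        todo.notes.append(note)
        todo.status = "completed"       # the attempt is over; the note records the outcome
        if not ok:
            # Re-plan instead of retrying blindly: add a different approach right after this step.
            idx = next(i for i, t in enumerate(plan.todos) if t is todo)
            plan.todos.insert(idx + 1, Todo(f"Fallback: {todo.content} via an alternative source"))
    return plan


def fake_execute(step: str):
    # Hypothetical executor: the first approach fails, everything else succeeds.
    if step == "Scrape competitor pricing pages":
        return False, "FAILED: site blocked the scraper"
    return True, "done"


plan = run(Plan([Todo("Scrape competitor pricing pages"), Todo("Build comparison table")]), fake_execute)
print(plan.render())
```

The `max_steps` cap is a deliberate safety net: if a fallback also fails, the plan keeps growing, and the cap stops the loop instead of letting it run forever.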

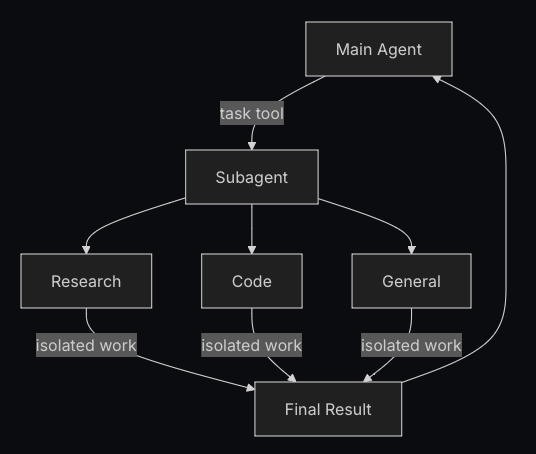

Pillar 2: Hierarchical Delegation (Sub-Agents)

Complex tasks require specialization. A shallow agent tries to be a jack-of-all-trades in one prompt. Deep agents use an Orchestrator → Sub-Agent pattern.

The Orchestrator delegates tasks to sub-agents, each with a clean context. A sub-agent (e.g., a “Researcher,” a “Coder,” a “Writer”) runs its own tool-call loop (searching, erroring, retrying), compiles the final answer, and returns only that synthesized answer to the Orchestrator.
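The context isolation can be sketched without any framework. In this hypothetical example, `SubAgent.run` builds a fresh context for every task, and the `research_work` callback (a stand-in for a real tool loop) fills that context with noise; the Orchestrator's own context only ever receives the summary.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SubAgent:
    name: str
    system_prompt: str
    work: Callable  # hypothetical: runs the noisy tool loop and returns a summary
    last_context: list = field(default_factory=list)

    def run(self, task: str) -> str:
        context = [self.system_prompt, task]  # fresh, isolated context per task
        summary = self.work(task, context)    # searching, erroring, retrying all land here
        self.last_context = context           # kept only so we can inspect it below
        return summary                        # only the synthesized answer leaves


@dataclass
class Orchestrator:
    subagents: dict
    context: list = field(default_factory=list)

    def delegate(self, name: str, task: str) -> str:
        result = self.subagents[name].run(task)
        self.context.append(f"[{name}] {result}")  # the orchestrator sees the summary only
        return result


def research_work(task: str, context: list) -> str:
    for i in range(3):
        context.append(f"search attempt {i}: <raw html ...>")  # noise stays in the sub-agent
    return f"Summary for '{task}': 3 sources reviewed"


researcher = SubAgent("researcher", "You are a great researcher", research_work)
orchestrator = Orchestrator({"researcher": researcher})
orchestrator.delegate("researcher", "quantum error correction")
print(orchestrator.context)
print(len(researcher.last_context), "messages stayed inside the sub-agent")
```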

Pillar 3: Persistent Memory

To prevent context window overflow, Deep Agents use external memory, such as a filesystem or a vector database, as their source of truth. Products like Claude Code and Manus give their agents read/write access to a filesystem. One agent writes intermediate results (code, draft text, raw data), and subsequent agents reference file paths or run queries to retrieve only what is necessary. This shifts the paradigm from "remembering everything" to "knowing where to find information."
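A minimal sketch of the "knowing where to find information" idea, using a temporary directory as the shared filesystem. The `researcher` and `writer` functions are hypothetical agents: the first writes a bulky dump to disk and returns only its path; the second reads the file and keeps just the records it needs, so the dump never has to pass through a context window.

```python
import json
import tempfile
from pathlib import Path

workspace = Path(tempfile.mkdtemp())  # stands in for the agents' shared filesystem


def researcher(topic: str) -> str:
    """Hypothetical agent: writes raw results to disk and returns only the path."""
    raw = [{"source": f"doc-{i}", "text": f"{topic} finding {i}"} for i in range(100)]
    path = workspace / "raw" / f"{topic}.json"
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(raw))
    return str(path)  # a short reference instead of 100 records


def writer(path: str, keyword: str) -> list:
    """Hypothetical agent: retrieves only the records relevant to its step."""
    records = json.loads(Path(path).read_text())
    return [r["text"] for r in records if keyword in r["text"]]


path = researcher("pricing")
print(path.endswith("pricing.json"), writer(path, "finding 42"))
```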

Pillar 4: Extreme Context Engineering

Smarter models do not require less prompting; they require better context. You cannot get Agent 2.0 behavior with a prompt that says “You are a helpful AI.” Deep Agents rely on highly detailed instructions, sometimes thousands of tokens long. These define:

- When to stop and plan before acting.

- Protocols for when to spawn a sub-agent vs. do the work themselves.

- Tool definitions, with examples of how and when to use each tool.

- Standards for file naming and directory structures.

- Strict formats for human-in-the-loop collaboration.
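One way to keep such long instructions maintainable is to assemble them from named sections that mirror the list above. The section titles and wording below are hypothetical placeholders, not a recommended prompt.

```python
# Hypothetical section titles and wording, one per concern in the list above.
SECTIONS = {
    "Planning": "For any task with more than a few steps, write a to-do list before acting and update it after every step.",
    "Delegation": "Spawn a sub-agent for self-contained research or coding work; do short lookups yourself.",
    "Tools": "search(query): use for current facts. Example: search('AAPL stock price').",
    "Files": "Save raw data under /raw/ and drafts under /drafts/, using snake_case file names.",
    "Human review": "Before sending email or deleting files, stop and ask for approval in the format: APPROVAL NEEDED: <action>.",
}


def build_system_prompt(role: str, sections: dict) -> str:
    """Join a role line and titled sections into one system prompt."""
    parts = [role]
    for title, body in sections.items():
        parts.append(f"## {title}\n{body.strip()}")
    return "\n\n".join(parts)


prompt = build_system_prompt("You are a research agent that produces strategic reports.", SECTIONS)
print(prompt.count("## "), "sections,", len(prompt.split()), "words")
```

Keeping each concern in its own section makes it easier to test and revise one protocol without rewriting the whole prompt.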

Deep Agents overview

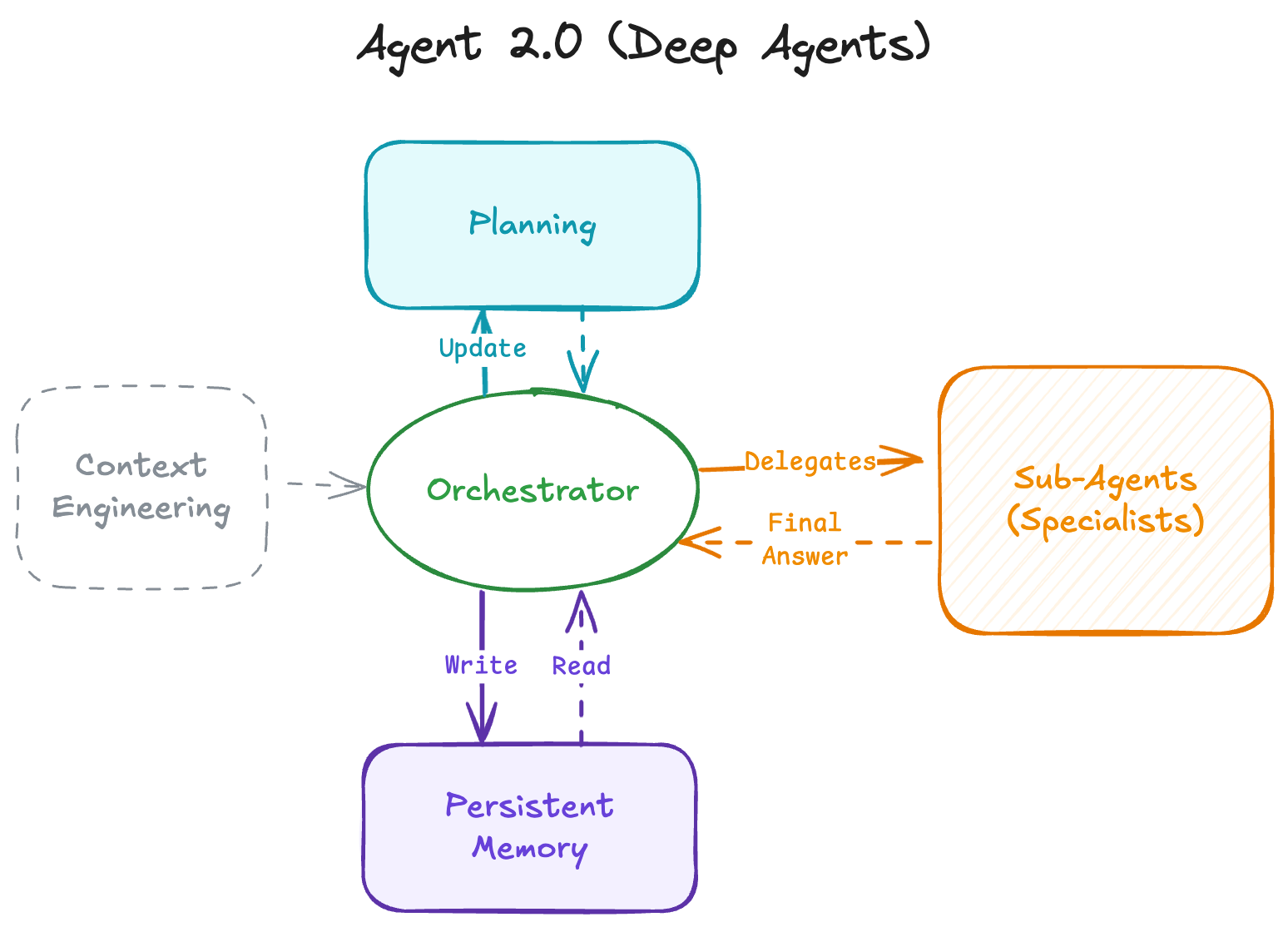

deepagents is a standalone library for building agents that can tackle complex, multi-step tasks. Built on LangGraph and inspired by applications like Claude Code, Deep Research, and Manus, deep agents come with planning capabilities, file systems for context management, and the ability to spawn subagents.

When to use deep agents

Use deep agents when you need agents that can:

- Handle complex, multi-step tasks that require planning and decomposition

- Manage large amounts of context through file system tools

- Delegate work to specialized subagents for context isolation

- Persist memory across conversations and threads

Core Capabilities

Agent harness capabilities

The LangChain team describes deepagents as an “agent harness”: the same core tool-calling loop as other agent frameworks, but with built-in tools and capabilities.

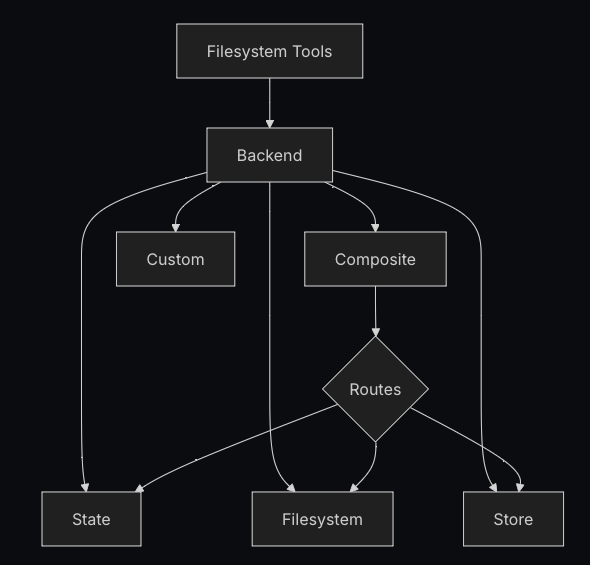

Backends

Choose and configure filesystem backends for deep agents. You can specify routes to different backends, implement virtual filesystems, and enforce policies.

Deep agents expose a filesystem surface to the agent via tools like ls, read_file, write_file, edit_file, glob, and grep. These tools operate through a pluggable backend.
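To illustrate what "pluggable" means here, the sketch below wires tool functions to a tiny in-memory backend through a hypothetical `Backend` protocol. This is not the deepagents backend interface; the method names and the prefix-based `ls` are simplifying assumptions. Swapping `InMemoryBackend` for a disk- or database-backed class would change where files live without changing the tools.

```python
import fnmatch
from typing import Protocol


class Backend(Protocol):
    """Hypothetical minimal storage interface the tools depend on."""
    def ls(self, prefix: str) -> list: ...
    def read(self, path: str) -> str: ...
    def write(self, path: str, content: str) -> None: ...


class InMemoryBackend:
    def __init__(self) -> None:
        self.files: dict = {}

    def ls(self, prefix: str) -> list:
        # Simplification: list every file whose path starts with the prefix.
        return sorted(p for p in self.files if p.startswith(prefix))

    def read(self, path: str) -> str:
        return self.files[path]

    def write(self, path: str, content: str) -> None:
        self.files[path] = content


def make_tools(backend: Backend) -> dict:
    """Build the agent-facing tools; they only talk to the plugged-in backend."""
    def edit_file(path: str, old: str, new: str) -> str:
        text = backend.read(path)
        if old not in text:
            return f"error: {old!r} not found in {path}"
        backend.write(path, text.replace(old, new, 1))
        return f"edited {path}"

    def glob(pattern: str) -> list:
        return [p for p in backend.ls("/") if fnmatch.fnmatch(p, pattern)]

    def grep(needle: str, pattern: str = "*") -> list:
        return [
            f"{path}:{lineno}:{line}"
            for path in glob(pattern)
            for lineno, line in enumerate(backend.read(path).splitlines(), start=1)
            if needle in line
        ]

    return {"ls": backend.ls, "read_file": backend.read, "write_file": backend.write,
            "edit_file": edit_file, "glob": glob, "grep": grep}


tools = make_tools(InMemoryBackend())
tools["write_file"]("/notes/plan.md", "step 1\nstep 2")
tools["write_file"]("/data/raw.txt", "x")
tools["edit_file"]("/notes/plan.md", "step 2", "step 2 (done)")
print(tools["grep"]("done"))
```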

Subagents

Deep agents can create subagents to delegate work. You can specify custom subagents in the subagents parameter. Subagents are useful for context quarantine (keeping the main agent’s context clean) and for providing specialized instructions.

Code Snippet (Subagents)

```python
import os
from typing import Literal

from tavily import TavilyClient
from deepagents import create_deep_agent

tavily_client = TavilyClient(api_key=os.environ["TAVILY_API_KEY"])


# Custom tool the research sub-agent can call
def internet_search(
    query: str,
    max_results: int = 5,
    topic: Literal["general", "news", "finance"] = "general",
    include_raw_content: bool = False,
):
    """Run a web search"""
    return tavily_client.search(
        query,
        max_results=max_results,
        include_raw_content=include_raw_content,
        topic=topic,
    )


# The main agent decides when to delegate based on the sub-agent's description
research_subagent = {
    "name": "research-agent",
    "description": "Used to research more in depth questions",
    "system_prompt": "You are a great researcher",
    "tools": [internet_search],
    "model": "openai:gpt-4o",  # Optional override, defaults to main agent model
}
subagents = [research_subagent]

agent = create_deep_agent(
    model="claude-sonnet-4-5-20250929",
    subagents=subagents,
)
```

Human-in-the-loop

Some tool operations may be sensitive and require human approval before execution. Deep agents support human-in-the-loop workflows through LangGraph’s interrupt capabilities. You can configure which tools require approval using the interrupt_on parameter.

The interrupt_on parameter accepts a dictionary mapping tool names to interrupt configurations. Each tool can be configured with:

- True: Enable interrupts with default behavior (approve, edit, reject allowed)

- False: Disable interrupts for this tool

- {"allowed_decisions": [...]}: Custom configuration with specific allowed decisions

```python
from langchain.tools import tool
from deepagents import create_deep_agent
from langgraph.checkpoint.memory import MemorySaver


# Stub tools for illustration: they return strings and have no side effects
@tool
def delete_file(path: str) -> str:
    """Delete a file from the filesystem."""
    return f"Deleted {path}"


@tool
def read_file(path: str) -> str:
    """Read a file from the filesystem."""
    return f"Contents of {path}"


@tool
def send_email(to: str, subject: str, body: str) -> str:
    """Send an email."""
    return f"Sent email to {to}"


# Checkpointer is REQUIRED for human-in-the-loop
checkpointer = MemorySaver()

agent = create_deep_agent(
    model="claude-sonnet-4-5-20250929",
    tools=[delete_file, read_file, send_email],
    interrupt_on={
        "delete_file": True,  # Default: approve, edit, reject
        "read_file": False,  # No interrupts needed
        "send_email": {"allowed_decisions": ["approve", "reject"]},  # No editing
    },
    checkpointer=checkpointer,  # Required!
)
```
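The three accepted value types can be read as a simple policy table. The sketch below is a hypothetical, framework-free interpretation of those semantics, not deepagents' implementation (which pauses the graph through LangGraph interrupts and the checkpointer): `normalize_interrupt_on` turns the config into the decisions a reviewer may make for each tool, and `gate` validates a decision before a tool would run. Treating a dict without "allowed_decisions" as "all decisions" is an assumption.

```python
ALL_DECISIONS = ("approve", "edit", "reject")


# Hypothetical interpretation of the interrupt_on semantics; not deepagents' code.
def normalize_interrupt_on(config: dict) -> dict:
    """Map each tool name to the decisions a reviewer may make; () means no interrupt."""
    policy = {}
    for tool_name, setting in config.items():
        if setting is True:
            policy[tool_name] = ALL_DECISIONS
        elif setting is False:
            policy[tool_name] = ()
        elif isinstance(setting, dict):
            allowed = tuple(setting.get("allowed_decisions", ALL_DECISIONS))
            unknown = set(allowed) - set(ALL_DECISIONS)
            if unknown:
                raise ValueError(f"{tool_name}: unknown decisions {sorted(unknown)}")
            policy[tool_name] = allowed
        else:
            raise TypeError(f"{tool_name}: expected True, False, or a dict")
    return policy


def gate(tool_name: str, policy: dict, ask_human) -> str:
    """Return 'run' for uninterrupted tools, otherwise the reviewer's validated decision."""
    allowed = policy.get(tool_name, ())
    if not allowed:
        return "run"
    decision = ask_human(tool_name, allowed)
    if decision not in allowed:
        raise ValueError(f"{decision!r} is not allowed for {tool_name}")
    return decision


policy = normalize_interrupt_on({
    "delete_file": True,
    "read_file": False,
    "send_email": {"allowed_decisions": ["approve", "reject"]},
})
print(policy)
print(gate("read_file", policy, ask_human=lambda name, allowed: "approve"))
```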

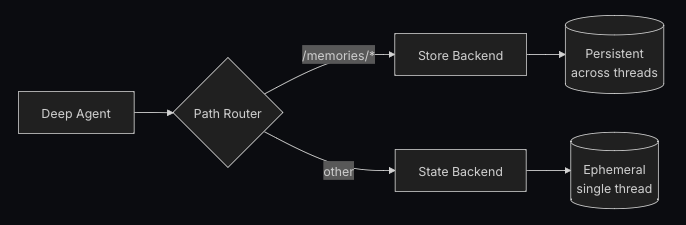

Long-term memory

Deep agents come with a local filesystem to offload memory. By default, this filesystem is stored in agent state and is transient to a single thread: files are lost when the conversation ends.

You can extend deep agents with long-term memory by using a CompositeBackend that routes specific paths to persistent storage. This enables hybrid storage where some files persist across threads while others remain ephemeral.

Setup

Configure long-term memory by using a CompositeBackend that routes the /memories/ path to a StoreBackend:

```python
from deepagents import create_deep_agent
from deepagents.backends import CompositeBackend, StateBackend, StoreBackend
from langgraph.store.memory import InMemoryStore


def make_backend(runtime):
    return CompositeBackend(
        default=StateBackend(runtime),  # Ephemeral storage
        routes={
            "/memories/": StoreBackend(runtime)  # Persistent storage
        },
    )


agent = create_deep_agent(
    store=InMemoryStore(),  # Required for StoreBackend
    backend=make_backend,
)
```
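The routing idea itself is plain prefix matching. The sketch below is a hypothetical, dictionary-based stand-in, not the deepagents `CompositeBackend` class: every thread gets a fresh default store, while paths under `/memories/` go to one store shared by all threads. Matching the longest prefix first is an assumption made so that more specific routes win.

```python
class DictStore:
    """Hypothetical key-value file store."""
    def __init__(self) -> None:
        self.files: dict = {}

    def write(self, path: str, content: str) -> None:
        self.files[path] = content

    def read(self, path: str) -> str:
        return self.files[path]


class CompositeRouter:
    def __init__(self, default: DictStore, routes: dict) -> None:
        self.default = default
        # Check longer prefixes first so more specific routes win.
        self.routes = sorted(routes.items(), key=lambda item: len(item[0]), reverse=True)

    def _pick(self, path: str) -> DictStore:
        for prefix, store in self.routes:
            if path.startswith(prefix):
                return store
        return self.default

    def write(self, path: str, content: str) -> None:
        self._pick(path).write(path, content)

    def read(self, path: str) -> str:
        return self._pick(path).read(path)


persistent = DictStore()  # outlives any single conversation


def new_thread() -> CompositeRouter:
    # Each conversation gets its own ephemeral store plus the shared persistent one.
    return CompositeRouter(default=DictStore(), routes={"/memories/": persistent})


thread_1 = new_thread()
thread_1.write("/memories/user_prefs.md", "Prefers concise answers")
thread_1.write("/scratch/notes.md", "temporary working notes")

thread_2 = new_thread()
print(thread_2.read("/memories/user_prefs.md"))       # visible in a new thread
print("/scratch/notes.md" in thread_2.default.files)  # the ephemeral file is not
```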

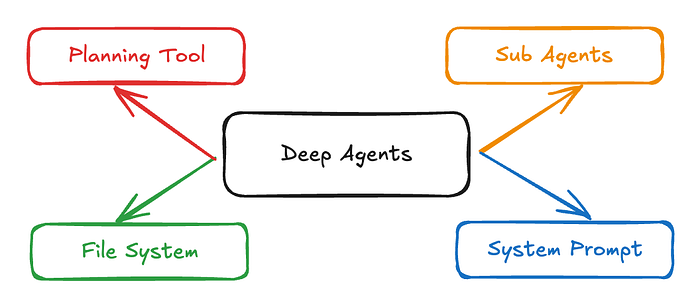

Deep Agents Middleware

Deep agents are built on a modular middleware architecture and have access to:

- A planning tool

- A filesystem for storing context and long-term memories

- The ability to spawn subagents

Each feature is implemented as separate middleware. When you create a deep agent with create_deep_agent, TodoListMiddleware, FilesystemMiddleware, and SubAgentMiddleware are attached to your agent automatically.
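Conceptually, each middleware contributes tools and a piece of system prompt to the agent. The sketch below models only that idea with a hypothetical `Middleware` dataclass; it is not LangChain's middleware API. The delegation tool is assumed to be named `task`; the other tool names come from this article.

```python
from dataclasses import dataclass, field


# Hypothetical model of middleware composition; not LangChain's API.
@dataclass
class Middleware:
    name: str
    tools: dict = field(default_factory=dict)  # tool name -> callable
    prompt: str = ""                            # text appended to the system prompt


def assemble(base_prompt: str, user_tools: dict, middleware: list):
    """Merge user tools and middleware contributions into one prompt and tool set."""
    tools = dict(user_tools)
    prompt_parts = [base_prompt]
    for mw in middleware:
        clash = tools.keys() & mw.tools.keys()
        if clash:
            raise ValueError(f"{mw.name} would overwrite tools: {sorted(clash)}")
        tools.update(mw.tools)
        if mw.prompt:
            prompt_parts.append(mw.prompt)
    return "\n\n".join(prompt_parts), tools


def stub(*args, **kwargs) -> str:
    return "stub"  # placeholder implementation for every tool


todo_mw = Middleware("todo", {"write_todos": stub}, "Use write_todos to plan multi-step work.")
fs_mw = Middleware(
    "filesystem",
    {name: stub for name in ["ls", "read_file", "write_file", "edit_file", "glob", "grep"]},
    "Store large intermediate results in files instead of the conversation.",
)
sub_mw = Middleware("subagents", {"task": stub}, "Delegate isolated work with the task tool.")

prompt, tools = assemble("You are a research agent.", {"internet_search": stub}, [todo_mw, fs_mw, sub_mw])
print(sorted(tools))
```

Raising on a name clash is a design choice in this sketch: it makes a custom tool that shadows a built-in one (for example, a user-defined `read_file`) fail loudly instead of silently replacing it.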

To-do list middleware

Planning is integral to solving complex problems. If you’ve used Claude Code recently, you’ll notice how it writes out a to-do list before tackling complex, multi-part tasks. You’ll also notice how it can adapt and update this to-do list on the fly as more information comes in.

TodoListMiddleware provides your agent with a tool specifically for updating this to-do list. Before and while it executes a multi-part task, the agent is prompted to use the write_todos tool to keep track of what it’s doing and what still needs to be done.

```python
from langchain.agents import create_agent
from langchain.agents.middleware import TodoListMiddleware

# TodoListMiddleware is included by default in create_deep_agent
# You can customize it if building a custom agent
agent = create_agent(
    model="claude-sonnet-4-5-20250929",
    # Custom planning instructions can be added via middleware
    middleware=[
        TodoListMiddleware(
            system_prompt="Use the write_todos tool to...",  # Optional: Custom addition to the system prompt
        ),
    ],
)
```

Visualizing a Deep Agent Flow

How do these pillars come together? Let’s look at a sequence diagram for a Deep Agent handling a complex request: “Research Quantum Computing and write a summary to a file.”
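The same flow can also be traced with a toy simulation that touches the pillars together: an explicit plan, a sub-agent whose noisy work stays in its own scope, and files as the hand-off between them. Every function, path, and message below is hypothetical, and no model is called.

```python
# Toy simulation: every name, path, and message is hypothetical.
def run_flow(request: str):
    log = [("user", "request", request)]
    files: dict = {}
    todos = [
        {"content": "Research quantum computing", "status": "pending"},
        {"content": "Write summary to /summary.md", "status": "pending"},
    ]
    log.append(("orchestrator", "write_todos", [t["content"] for t in todos]))  # 1. plan

    def researcher(task: str) -> str:
        # 2. The sub-agent writes its bulky notes to a file; only a short reply comes back.
        files["/research/notes.md"] = "\n".join(f"note {i} on {task}" for i in range(20))
        log.append(("researcher", "write_file", "/research/notes.md"))
        return "Notes saved to /research/notes.md"

    todos[0]["status"] = "in_progress"
    reply = researcher("quantum computing")
    log.append(("orchestrator", "task result", reply))
    todos[0]["status"] = "completed"

    todos[1]["status"] = "in_progress"
    notes = files["/research/notes.md"].splitlines()  # 3. read the notes from the file
    files["/summary.md"] = f"Summary of quantum computing based on {len(notes)} notes."
    log.append(("orchestrator", "write_file", "/summary.md"))
    todos[1]["status"] = "completed"
    return log, files, todos


log, files, todos = run_flow("Research Quantum Computing and write a summary to a file.")
for actor, action, detail in log:
    print(f"{actor:>12} | {action:<12} | {detail}")
```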

Conclusion

Moving from Shallow Agents to Deep Agents (Agent 1.0 to Agent 2.0) isn’t just about connecting an LLM to more tools. It is a shift from reactive loops to proactive architecture. It is about better engineering around the model.

By implementing explicit planning, hierarchical delegation via sub-agents, and persistent memory, we control the context, and by controlling the context, we control the complexity, unlocking the ability to solve problems that take hours or days, not just seconds.

Acknowledgements

This overview was created with the help of deep and manual research. The term “Deep Agents” was notably popularized by the LangChain team to describe this architectural evolution.

Thanks for reading! If you have any questions or feedback, please let me know on LinkedIn, and follow me on Medium for more insights on AI, data formats, and LLM systems.




Note: Article content contains the views of the contributing authors and not Towards AI.