The Hidden Pitfalls of Elasticsearch Terms Aggregation (and How to Fix Them)

Author(s): Rafay Qayyum

Originally published on Towards AI.

1. Introduction

Elasticsearch’s terms aggregation is commonly used for faceted search, top-N lists, and grouping data by field values, much like GROUP BY in SQL.

It’s powerful and fast, but has several hidden limitations. Under the hood, it uses approximations, shard-level merging, and default limits that can lead to incomplete or inaccurate results, especially with high-cardinality fields.

In this article, we’ll break down the non-obvious caveats of terms aggregation: from why high-cardinality fields can omit globally significant terms unless configured properly, to how shard-level behavior and sorting options introduce subtle but dangerous inaccuracies. We’ll also discuss how to recognize these pitfalls and share strategies to work around them.

2. Basics of Terms Aggregation

At its core, a terms aggregation groups documents by the unique values of a given field and returns the number of documents (doc_count) that fall into each bucket.

Let’s say you have an index named payments with 5 million records and a high-cardinality field product.id containing 650,000 unique products. The index is spread across 5 primary shards.

Now, you want to answer the following questions:

- Which 5 products have the most sales?

- What’s the total revenue for each of them?

- And what are their 2 latest payments?

To do this, you can use a terms aggregation on product.id with two sub-aggregations:

- A sum on the amount field to calculate total revenue.

- A top_hits aggregation to fetch the 2 most recent payments for each product.

Example Query

    GET /payments/_search
    {
      "size": 0,
      "aggs": {
        "top_products": {
          "terms": { "field": "product.id", "size": 5 },
          "aggs": {
            "total_revenue": { "sum": { "field": "amount" } },
            "latest_payments": {
              "top_hits": {
                "size": 2,
                "sort": [
                  { "payment_date": "desc" }
                ],
                "_source": {
                  "includes": ["amount", "payment_date", "user_id"]
                }
              }
            }
          }
        }
      }
    }

Example Response

    # Simplified structure
    {
      "aggregations": {
        "top_products": {
          "buckets": [
            {
              "key": "A123",
              "doc_count": 13400,
              "total_revenue": { "value": 2650000.0 },
              "latest_payments": {
                "hits": {
                  "hits": [
                    { "_source": { "amount": 200, "payment_date": "2024-08-20" }},
                    { "_source": { "amount": 250, "payment_date": "2024-07-29" }}
                  ]
                }
              }
            },
            {
              "key": "B456",
              "doc_count": 9100,
              "total_revenue": { "value": 1750000.0 },
              "latest_payments": { ... }
            }
          ]
        }
      }
    }

3. How Terms Aggregation Actually Works

Before diving into caveats and fixes, it’s important to understand how Elasticsearch actually computes results for a terms aggregation because most surprises stem from this internal execution model.

a) Execution Happens Per Shard

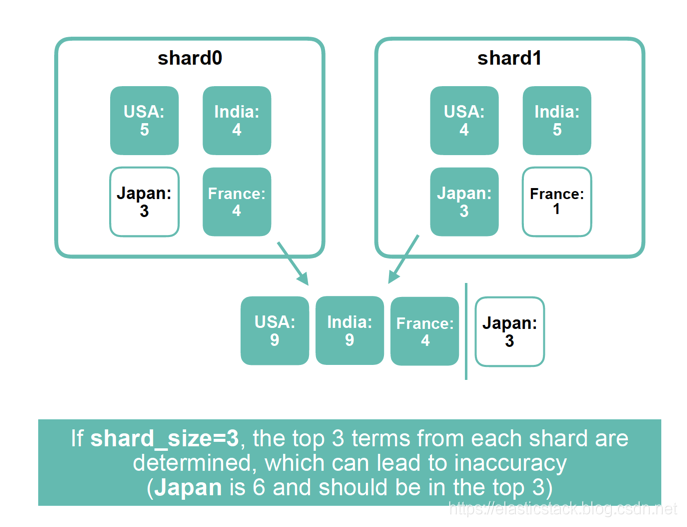

Elasticsearch is a distributed system, so when you run a terms aggregation, Elasticsearch doesn’t scan the entire dataset globally. Instead:

- Each shard (e.g. shard-0 to shard-4) runs the terms aggregation independently on its local data.

- To reduce memory and network overhead, a shard doesn’t send all of its terms. Instead, each shard sends back only its top N terms, where shard_size controls how many.

If you don’t specify shard_size, Elasticsearch uses a heuristic:

shard_size = size * 1.5 + 10

So for size: 5, the formula gives 17.5, and each shard sends roughly its top 18 terms.

b) Results Are Merged by the Coordinating Node

Once each shard returns its top terms and sub-aggregations:

- The coordinating node merges buckets with the same product.id.

- It sums their doc_counts and combines sub-aggregations (like sum).

- It sorts by total doc_count and picks the global top 5 buckets.

This is only based on the terms that were returned by the shards. If a term was missed locally (not in top 18 on any shard), it will be missing entirely from the global view, even if it should be in the top 5.
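The two phases above can be sketched in a few lines of Python. This is a toy simulation that tracks doc counts only, not how Elasticsearch is implemented internally: the function name terms_agg, the shard contents, and the tie-break on key are all assumptions made for illustration.

```python
from collections import Counter


def terms_agg(shards, size, shard_size):
    """Simulate the two-phase terms aggregation on doc counts only."""
    merged = Counter()
    for shard in shards:
        # Phase 1: each shard returns only its local top `shard_size` terms.
        local_top = sorted(shard.items(), key=lambda kv: (-kv[1], kv[0]))[:shard_size]
        for term, count in local_top:
            merged[term] += count
    # Phase 2: the coordinator sorts the merged counts and keeps `size` buckets.
    return sorted(merged.items(), key=lambda kv: (-kv[1], kv[0]))[:size]


# Term "x" is second on every shard, yet it is the most frequent term overall.
shards = [
    Counter({"a": 10, "x": 9}),
    Counter({"b": 10, "x": 9}),
    Counter({"c": 10, "x": 9}),
]

approx = terms_agg(shards, size=1, shard_size=1)
exact = terms_agg(shards, size=1, shard_size=2)
print(approx)  # [('a', 10)]
print(exact)   # [('x', 27)]
```

With shard_size=1 no shard ever reports "x", so the coordinator cannot know it exists. Raising shard_size to 2 is enough to recover it in this toy data set.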

c) Key Fields in the Aggregation Response

The response includes more than just the top terms. Here’s what each field tells you:

- buckets: a list of the terms that made it into the global top-N. Each bucket has a key (the term value) and a doc_count (the number of matching documents for that term, summed across the shards that returned it).

- doc_count_error_upper_bound: estimates the maximum number of documents potentially missed for a term due to shard-level approximations. See Section 6b for a deeper explanation.

- sum_other_doc_count: the total number of documents not included in the returned top buckets. This gives a sense of how much data is being left out.

4. Sub-Aggregations Can Be Misleading or Omitted

Now that the shard-level selection process is clear, it’s easier to see why even globally significant terms can come back with incomplete results, especially when sub-aggregations are involved. The problem isn’t limited to doc_count: associated sub-aggregations like sum, avg, or top_hits can also be computed from only part of the data.

a) The Problem

In a terms aggregation with sub-aggregations (such as sum of a numeric field or top_hits for recent documents), only the terms that make it into the local top shard_size on each shard are included in the final merge phase.

Returning to the earlier example:

The index contains 5 million payment records, distributed across 5 shards, and grouped by product.id (with 650,000 unique product IDs). A terms aggregation is used to find the top 5 highest-selling products. Within each bucket, two sub-aggregations are defined:

- sum(amount) to calculate total revenue per product

- a top_hits aggregation to fetch the two most recent payments

When the aggregation runs, each shard returns up to 18 top product IDs (based on the default shard_size heuristic). If a particular product appears frequently across shards 1–4 but only sparsely on shard 5, and doesn't make it into shard 5's top 18, the coordinating node never receives shard 5's contribution for that product.

As a result:

- The sum(amount) will exclude payments from shard 5, underreporting the total revenue.

- If the newest payments for that product exist only on shard 5, the top_hits sub-aggregation may omit them entirely.

Even more critically, if the product never makes it into the top 18 of any shard, it will be completely missing from the final output regardless of its global importance.
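The underreporting described above can be reproduced with a small simulation. This is a sketch under stated assumptions: terms_with_revenue is a hypothetical helper, each shard is modelled as a dict of term to (doc_count, revenue), and the numbers are invented so that product P falls out of the second shard’s local top 2.

```python
def terms_with_revenue(shards, size, shard_size):
    """Merge per-shard (doc_count, revenue) pairs for the local top terms only."""

    def by_count(item):
        term, (docs, _revenue) = item
        return (-docs, term)

    merged = {}
    for shard in shards:
        # Only the local top `shard_size` terms (by doc_count) leave the shard.
        for term, (docs, revenue) in sorted(shard.items(), key=by_count)[:shard_size]:
            seen_docs, seen_revenue = merged.get(term, (0, 0.0))
            merged[term] = (seen_docs + docs, seen_revenue + revenue)
    return sorted(merged.items(), key=by_count)[:size]


shards = [
    {"P": (60, 6000.0), "Q": (10, 900.0)},
    {"Q": (40, 4000.0), "R": (30, 2000.0), "P": (5, 700.0)},  # P is only third here
]

reported = terms_with_revenue(shards, size=1, shard_size=2)
complete = terms_with_revenue(shards, size=1, shard_size=3)
print(reported)  # [('P', (60, 6000.0))]
print(complete)  # [('P', (65, 6700.0))]
```

P is the right winner in both runs, but with shard_size=2 its revenue is short by the 700.0 that lives on the second shard, and nothing in the bucket itself signals that.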

b) Why This Happens More Often with High Cardinality

High-cardinality fields like product.id increase the likelihood of this problem. With so many unique values competing for a limited number of local slots (shard_size), it becomes statistically less likely for any single term to appear in multiple shards' top N lists.

This leads to:

- Skewed or incomplete results

- Sub-aggregations that reflect only a partial view of the term

- Analytical inaccuracies in dashboards, reporting, or monitoring systems

Some sub-aggregations, such as min or max, are more robust when they are used for ordering in the right direction (see Section 5b), because the global result then depends only on the single smallest or largest value on any shard rather than on complete data from every shard.

c) Clues in the Response

Two fields in the aggregation response hint at these issues:

- sum_other_doc_count: indicates how many documents were associated with terms not included in the final result. A high value suggests potentially important data has been left out.

- doc_count_error_upper_bound: provides an upper estimate of how much any reported count might be off due to missed terms. See Section 6b for details.

However, neither field reveals which specific terms were excluded, only that omissions occurred.

d) Better Aggregation Strategies

There are two main solutions:

- Manually increase shard_size

A higher shard_size means each shard returns more terms, improving the chances that globally significant values make it into the merge phase. For high-cardinality fields, shard_size should be set far above the default to minimize omissions.

- Use a composite aggregation

The composite aggregation paginates through all terms, rather than sampling top values. While slightly more complex to implement, it guarantees complete and deterministic results, which is especially useful for use cases like billing, reporting, and data exports.

While increasing shard_size or using composite aggregations can reduce inaccuracy in terms aggregations, both come with tradeoffs. A higher shard_size improves accuracy but increases memory usage, network overhead, and latency between nodes. Composite aggregations don't support custom sorting on sub-aggregations (like sorting by max(amount)), making them unsuitable for some use cases like ranked leaderboards or top-N queries.
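Paginating with a composite aggregation means sending the request repeatedly and passing the previous response’s after_key back as after. The loop below is a minimal sketch of that pattern. The search argument is an assumption: any callable that takes a request body and returns the parsed JSON response. fake_search is an in-memory stand-in used only to exercise the loop, and product C789 with its revenue is made-up data.

```python
def iter_composite_buckets(search, page_size=1000):
    """Yield every product.id bucket by following `after_key` page by page."""
    after = None
    while True:
        composite = {
            "size": page_size,
            "sources": [{"product": {"terms": {"field": "product.id"}}}],
        }
        if after is not None:
            composite["after"] = after  # resume after the last key of the previous page
        body = {
            "size": 0,
            "aggs": {
                "products": {
                    "composite": composite,
                    "aggs": {"total_revenue": {"sum": {"field": "amount"}}},
                }
            },
        }
        page = search(body)["aggregations"]["products"]
        yield from page["buckets"]
        after = page.get("after_key")
        if after is None or not page["buckets"]:
            break


def fake_search(body):
    """In-memory stand-in for Elasticsearch (illustration only)."""
    revenue = {"A123": 2650000.0, "B456": 1750000.0, "C789": 12.5}
    composite = body["aggs"]["products"]["composite"]
    start = composite.get("after", {}).get("product", "")
    keys = [k for k in sorted(revenue) if k > start][: composite["size"]]
    buckets = [{"key": {"product": k}, "total_revenue": {"value": revenue[k]}} for k in keys]
    result = {"buckets": buckets}
    if buckets:
        result["after_key"] = buckets[-1]["key"]
    return {"aggregations": {"products": result}}


totals = {
    bucket["key"]["product"]: bucket["total_revenue"]["value"]
    for bucket in iter_composite_buckets(fake_search, page_size=2)
}
print(totals)
```

Against a real cluster, search would wrap whatever client call you use for GET /payments/_search. Because every bucket is visited, any ranking (for example top 5 by revenue) has to be done by the caller once all pages have been read.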

5. Why Sorting by Sub-Aggregations Can Mislead

Once it’s clear that terms aggregation results are approximate due to per-shard top-N filtering, it becomes easier to see how sorting the resulting buckets can introduce even more misleading results, especially when dealing with high-cardinality fields like product.id.

In the payments example, aggregations are being run on 5 million documents across 5 shards, grouped by product.id, with sub-aggregations for revenue (sum(amount)) and recent activity (top_hits). Sorting the buckets by different criteria directly affects which products show up and how accurate the results are.

a) Why Missing Data Breaks Sorting by Sub-Aggregation

Suppose you’re grouping 5 million documents (across 5 shards) by product.id, and sorting buckets by sum(amount) to get the top 5 products by revenue.

If product A appears on all shards but isn't in the top shard_size (e.g. 18) on shard 3, its revenue from that shard doesn’t make it to the coordinator node. The coordinator only sees a partial sum and may place it below a less important product or exclude it entirely.

So even if you’re using a valid sorting metric, the sort order is misleading because the inputs to that sort were already compromised.

This affects:

- sum, avg, and other aggregations requiring full participation

- Any high-cardinality grouping, like product.id or user_id

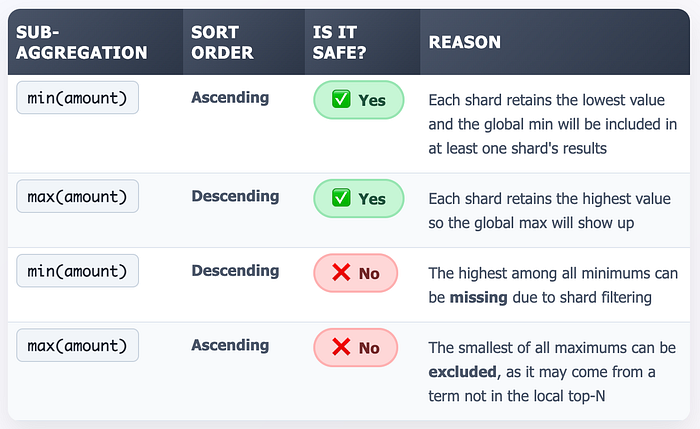

b) Sorting by max ASC or min DESC Is Also Problematic

Even though min and max aggregations are generally considered safer when used for ordering terms buckets, they are only safe when sorted in the right direction. Specifically, you should sort max values in descending order and min values in ascending order.

Why? Because these directions guarantee that the global extreme value (largest max or smallest min) will appear in at least one shard’s local top buckets. For example, if you’re ordering products by max(price) DESC, and Product A has the highest price globally ($120), it will still be the highest locally on at least one shard, so it makes it into the local top N buckets and reaches the coordinator. The coordinator then merges the shard responses and correctly computes the global result.

However, if you sort in the wrong direction, like max(price) ASC, the shard-level cut can hide a product’s high values. A product may be returned by a shard where its local max is low, while the shard holding its higher value drops it from the local top N. The coordinator then understates that product’s max and ranks it too early, and a product that belongs in the result can be pushed out. Your final results will be incomplete or misordered.

In short, max DESC and min ASC give accurate results, while the other directions risk missing the true global values. So even with min or max, sort only in the safe direction. Otherwise you risk a wrong ordering, for example when a term’s global max is not among the local maxima returned in the top buckets of any shard.

Suppose you’re aggregating on product.id with size 2 and ordering by max(price) ASC, with two shards (each number is the product’s local max price on that shard):

- Shard 1: A: 100, B: 110, C: 120

- Shard 2: C: 130, A: 140, B: 160

Now suppose shard_size = 2, meaning each shard returns only its top two buckets (the two lowest local max values, since the order is ascending).

- Shard 1 → A (100), B (110)

- Shard 2 → C (130), A (140)

The coordinator receives:

- A: 100 (S1), 140 (S2) → max = 140

- B: 110 (S1 only)

- C: 130 (S2 only)

It selects the two lowest, in order: B (110) and C (130). The correct answer is C (130) and A (140), because B’s true max is 160 on shard 2, a value the coordinator never saw.
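The worked example can be checked with a short simulation. This is a sketch, not Elasticsearch code: terms_by_max is a hypothetical helper, each shard is a dict of product to its local max(price), and ties are broken by key as an assumption. Running it with shard_size=3 (every bucket returned) gives the exact answer to compare against.

```python
def terms_by_max(shards, size, shard_size, descending):
    """Order terms by max(price), keeping only `shard_size` buckets per shard."""
    sign = -1 if descending else 1

    def order(item):
        term, value = item
        return (sign * value, term)

    merged = {}
    for shard in shards:
        # Each shard sorts by its local max and returns the first `shard_size` terms.
        for term, local_max in sorted(shard.items(), key=order)[:shard_size]:
            merged[term] = max(merged.get(term, local_max), local_max)
    # The coordinator re-sorts on the merged max values.
    return sorted(merged.items(), key=order)[:size]


shards = [
    {"A": 100, "B": 110, "C": 120},  # shard 1
    {"C": 130, "A": 140, "B": 160},  # shard 2
]

asc = terms_by_max(shards, size=2, shard_size=2, descending=False)
asc_exact = terms_by_max(shards, size=2, shard_size=3, descending=False)
desc = terms_by_max(shards, size=2, shard_size=2, descending=True)
desc_exact = terms_by_max(shards, size=2, shard_size=3, descending=True)

print(asc, asc_exact)    # [('B', 110), ('C', 130)] [('C', 130), ('A', 140)]
print(desc, desc_exact)  # [('B', 160), ('A', 140)] [('B', 160), ('A', 140)]
```

The ascending run differs from its exact counterpart, while the descending run matches it even with the same truncated shard_size.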

6. Avoiding Inaccurate Results: Best Practices

Now that we understand the mechanics of how terms aggregation works and the pitfalls around ordering, approximation, and field types, let’s turn that insight into concrete recommendations.

These practices help you get more accurate, reliable, and scalable aggregations, especially as your data volume or field cardinality grows.

a) Set shard_size Wisely

By default, Elasticsearch uses this formula:

shard_size = size * 1.5 + 10

This is a performance-oriented heuristic. It works okay for low-cardinality fields, but often fails for large datasets or many shards.

Why it matters:

- Each shard returns only its top shard_size terms.

- If a term is globally common but not in the top terms of any single shard, it will be missing from the final result.

What to do:

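- Manually increase shard_size, often to 2x or 3x the size, to improve accuracy. For small size values the default heuristic is already larger than that (17.5 for size: 5), so make sure the value you pick is above the default.

- Keep in mind this increases memory and compute cost, so balance accordingly.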
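For reference, shard_size sits next to size inside the terms block. The snippet below is a small sketch that builds the request body as a Python dict and prints it as JSON for GET /payments/_search. The helper name top_products_body and the value 200 are arbitrary choices for illustration, not recommendations.

```python
import json


def top_products_body(size, shard_size):
    """Build a terms aggregation body with an explicit shard_size."""
    if shard_size < size:
        # A shard_size below size makes no sense, so this sketch rejects it up front.
        raise ValueError("shard_size should not be smaller than size")
    return {
        "size": 0,
        "aggs": {
            "top_products": {
                "terms": {
                    "field": "product.id",
                    "size": size,
                    "shard_size": shard_size,
                }
            }
        },
    }


body = top_products_body(size=5, shard_size=200)
print(json.dumps(body, indent=2))
```

A practical way to pick the value is to rerun the same query with increasing shard_size and stop once the returned buckets and counts no longer change.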

b) Understand doc_count_error_upper_bound

A top-level doc_count_error_upper_bound is always part of the terms aggregation response. The per-bucket value appears when show_term_doc_count_error: true is set.

It indicates the maximum number of documents that could have been missed per bucket, due to the shard-level approximation.

Important notes:

- Only meaningful when sorting by _count: "desc".

- When sorting by _key, it is always 0.

- For sub-aggregation sorts or _count: asc, the error cannot be computed and is set to -1 to indicate uncertainty.

- A high error bound = less trustworthy results.

Tip: Always enable show_term_doc_count_error when you care about result accuracy.
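One way to act on this field is to turn the per-bucket bound into a worst-case error ratio and flag buckets above a threshold you choose. The helper below is a sketch: count_error_report is a hypothetical name, and the sample response fragment uses made-up numbers, with only the field names taken from the response format described above.

```python
def count_error_report(terms_result):
    """Return (key, doc_count, worst-case error ratio) for each bucket.

    A missing or negative per-bucket bound means the error is unknown
    (for example -1 when ordering by a sub-aggregation), so the ratio is None.
    """
    report = []
    for bucket in terms_result["buckets"]:
        bound = bucket.get("doc_count_error_upper_bound", -1)
        ratio = None if bound < 0 else bound / bucket["doc_count"]
        report.append((bucket["key"], bucket["doc_count"], ratio))
    return report


# Made-up fragment of response["aggregations"]["top_products"].
sample = {
    "doc_count_error_upper_bound": 120,
    "sum_other_doc_count": 4977500,
    "buckets": [
        {"key": "A123", "doc_count": 13400, "doc_count_error_upper_bound": 0},
        {"key": "B456", "doc_count": 9100, "doc_count_error_upper_bound": 91},
    ],
}

for key, docs, ratio in count_error_report(sample):
    print(key, docs, ratio)
```

In this sample, A123’s count is exact, while B456’s could be off by up to 91 documents, about 1% of its reported count.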

c) Respect search.max_buckets Limit

Elasticsearch enforces a safety limit on how many buckets an aggregation query can generate. The limit can be adjusted via cluster settings:

search.max_buckets = 65,536 (default in recent versions)

This is a global limit across all buckets in a single response, at all levels of nesting.

Why it matters:

- Large size values can trip this limit.

- Deeply nested or wide aggregations (e.g. nested terms → sub-terms → sub-sub-terms) add up quickly.

- If the limit is hit, your query will fail with an error.

Solutions:

- Keep size and shard_size reasonable.

- Avoid unbounded bucket creation.

- Use a composite aggregation if you need to paginate through many buckets.
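To see how quickly nesting adds up, here is a rough worst-case estimate. It assumes, as stated above, that buckets at every nesting level count toward the limit and that every parent bucket is full. worst_case_buckets is a hypothetical helper, and real responses are often smaller because many parents have fewer children than size.

```python
def worst_case_buckets(sizes):
    """Upper bound on buckets returned by nested terms aggregations.

    `sizes` lists the `size` of each nesting level, outermost first.
    Every parent bucket can hold up to `size` child buckets.
    """
    total = 0
    per_level = 1
    for size in sizes:
        per_level *= size   # buckets at this level if every parent is full
        total += per_level  # add them to the running total
    return total


print(worst_case_buckets([100]))            # 100
print(worst_case_buckets([100, 100]))       # 10100
print(worst_case_buckets([100, 100, 100]))  # 1010100
```

Comparing these numbers with your cluster’s search.max_buckets before running a query gives an early warning: three nested levels of size 100 can already reach about a million buckets.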

d) Pre-Filter Data to Reduce Cardinality

In high-cardinality scenarios, accuracy can often be improved by reducing the number of competing terms before aggregation. One effective strategy is to wrap your terms aggregation inside a filter aggregation.

For example, instead of aggregating over all documents in a 5-million record index, you might first filter for a relevant subset such as a time window (last 30 days), a category (product.category = "electronics"), or a user segment (region = "US").

This limited scope reduces the number of unique terms that need to compete for the top shard_size slots, improving the chances that globally significant terms are included in each shard’s response.
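As a sketch of that wrapping, the request body below puts the terms aggregation inside a filter aggregation that keeps only the last 30 days of electronics payments. It is built as a Python dict and printed as JSON for GET /payments/_search. The aggregation name recent_electronics is arbitrary, and the body assumes payment_date is a date field and product.category is a keyword field, which the article does not state.

```python
import json

body = {
    "size": 0,
    "aggs": {
        "recent_electronics": {
            # Only documents matching this filter reach the terms aggregation.
            "filter": {
                "bool": {
                    "filter": [
                        {"range": {"payment_date": {"gte": "now-30d/d"}}},
                        {"term": {"product.category": "electronics"}},
                    ]
                }
            },
            "aggs": {
                "top_products": {
                    "terms": {"field": "product.id", "size": 5},
                    "aggs": {"total_revenue": {"sum": {"field": "amount"}}},
                }
            },
        }
    },
}

print(json.dumps(body, indent=2))
```

In the response, the top_products buckets appear nested under recent_electronics, next to the doc_count of the filtered subset.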

The defaults in Elasticsearch prioritize speed and scalability, not perfect accuracy. If you care about precision, take control of the knobs: shard_size, doc_count_error_upper_bound, field mappings, and bucket limits.

7. Final Thoughts

The terms aggregation is one of the most powerful tools in Elasticsearch, but it’s optimized for speed, not exactness. It works well out of the box for low-cardinality fields and small datasets. In practice, however, this often breaks down as your data scales and shard count grows.

To avoid misleading results:

- Test with a higher shard_size to capture globally frequent terms.

- Enable and monitor doc_count_error_upper_bound to understand the confidence of your results.

- Avoid unsafe sort orders like _count: "asc" or sorting by sub-aggregations such as avg, sum, or min DESC / max ASC. These aggregations may be based on incomplete data due to shard-level filtering, leading to misleading results when used for sorting.

- Use the right aggregation type: composite for pagination, rare_terms for uncommon values, cardinality for distinct counts.

In short: if accuracy matters, don’t rely on defaults. Know the trade-offs, and tune accordingly.


Published via Towards AI


Note: Article content contains the views of the contributing authors and not Towards AI.