Static Embeddings to Contextual AI: Fine-Tuning NLP Models Explained

Author(s): Parth Saxena

Originally published on Towards AI.

1. The Evolution of NLP Models

Natural Language Processing (NLP) has transformed how machines understand and generate human language. From simple text processing to powerful language models capable of complex text generation, the journey of NLP has been remarkable. At the core of this evolution are two types of models: static embedding models and contextual models.

In this blog, I’ll explain how NLP models work, how to fine-tune them, and why some models require more advanced fine-tuning techniques. Let’s start with how large language models (LLMs) work, then move on to embeddings, fine-tuning static models with Gensim, and the transition to transformer-based models like LLaMA and Mistral.

2. How Large Language Models (LLMs) Work

Large Language Models (LLMs) are the backbone of modern NLP. At their core, LLMs are advanced text auto-completion systems. They take input text and predict the text that follows, based on patterns learned from massive text corpora.

Think of an LLM as a super-smart text predictor. It has been trained on millions of documents, and its primary skill is to understand context and predict what comes next.

How LLMs Make Predictions

- LLMs break down text into smaller units called tokens.

- They then use their knowledge of word relationships and context to predict the next token.

- More relevant context generally leads to better predictions, because the model has more information to condition on.
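To make the idea of tokens concrete, here is a toy greedy longest-match subword tokenizer, similar in spirit to the WordPiece scheme used by BERT (where “##” marks a piece that continues a word). The tiny vocabulary is hypothetical; real tokenizers (WordPiece, BPE, SentencePiece) learn vocabularies of tens of thousands of pieces from data, and this sketch is not the tokenizer of any particular model.

```python
def tokenize(word, vocab):
    """Greedy longest-match-first subword tokenization (toy, WordPiece-style)."""
    tokens = []
    start = 0
    while start < len(word):
        end = len(word)
        piece = None
        # Try the longest remaining substring first, then shrink it
        while end > start:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate  # marks a continuation piece
            if candidate in vocab:
                piece = candidate
                break
            end -= 1
        if piece is None:
            return ["[UNK]"]  # no piece matched, so the whole word is unknown
        tokens.append(piece)
        start = end
    return tokens


# Hypothetical toy vocabulary
vocab = {"un", "##believ", "##able", "token", "##ization", "##s"}
print(tokenize("unbelievable", vocab))   # ['un', '##believ', '##able']
print(tokenize("tokenizations", vocab))  # ['token', '##ization', '##s']
```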

Example:

If you give an LLM the text “The stock market is”, it might predict “rising” or “falling”, depending on the surrounding context and the patterns in its training data.
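A real LLM uses a deep neural network conditioned on the whole context, but the core idea of predicting the most likely next token from patterns in training data can be sketched with a toy bigram model that only looks at the last word. The corpus below is made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def predict_next(counts, text, k=2):
    """Return the k most frequent next words after the last word of text."""
    last = text.lower().split()[-1]
    return [word for word, _ in counts[last].most_common(k)]


# Hypothetical training data
corpus = [
    "the stock market is rising",
    "the stock market is rising again",
    "the stock market is falling",
]
model = train_bigrams(corpus)
print(predict_next(model, "The stock market is"))  # ['rising', 'falling']
```

Because this toy model sees only one previous word, it cannot use longer context the way an LLM does; that limitation is what neural language models address.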

3. Understanding Embeddings — The Backbone of NLP

Embeddings are the secret sauce of NLP. At their core, embeddings are numerical representations of text, making it possible for machines to understand and work with language.

What Are Embeddings?

- Embeddings are vectors (arrays of numbers) that represent words, phrases, or even entire documents.

- These vectors capture the meaning of words based on their relationships with other words.

- Similar words have similar vectors, meaning they are close to each other in the vector space.

Visualizing Embeddings:

Imagine a 3D space where words like “king” and “queen” are close together, while “apple” is far away because it has a different meaning.
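“Close together” is usually measured with cosine similarity, the cosine of the angle between two vectors. The sketch below uses hand-picked 3D vectors (hypothetical, not taken from any trained model) to show that “king” and “queen” score higher than “king” and “apple”.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between vectors a and b (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-picked 3D toy vectors (hypothetical, not from a trained model)
embeddings = {
    "king": [0.9, 0.8, 0.1],
    "queen": [0.85, 0.9, 0.15],
    "apple": [0.1, 0.05, 0.95],
}

print(cosine_similarity(embeddings["king"], embeddings["queen"]))  # high: similar direction
print(cosine_similarity(embeddings["king"], embeddings["apple"]))  # low: different direction
```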

Types of Embeddings:

- Static Embeddings: The vector of a word is fixed. (Example models: Word2Vec, GloVe)

- Contextual Embeddings: The vector of a word changes based on context. (Example models: BERT, LLaMA)

4. Static Embeddings with Word2Vec and GloVe

Word2Vec and GloVe are two of the most popular techniques for generating static embeddings. They are known as “static” because each word has a fixed vector representation, regardless of the context.

A Brief History of Static Embeddings

- The concept of static embeddings gained popularity with the introduction of Word2Vec by researchers at Google in 2013.

- Word2Vec revolutionized NLP because it made it practical to learn dense word vectors efficiently from very large corpora, capturing word meanings in a multi-dimensional space.

- Following Word2Vec, GloVe (Global Vectors) was introduced by researchers at Stanford in 2014, focusing on capturing word co-occurrences globally.

Word2Vec: Predicting Words Using Context

- Word2Vec is a neural network-based method for generating word vectors.

- It has two main variants:

  - Continuous Bag of Words (CBOW): Predicts a word given its surrounding context.

    - Example: For the context “I am reading a ___,” the model predicts “book.”

  - Skip-Gram: Predicts the surrounding context given a word.

    - Example: For the word “coffee,” the model predicts context words like “drink,” “morning,” “hot.”

- Word2Vec works by learning from millions of text documents, creating vector representations that capture semantic relationships between words.
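The two Word2Vec variants differ mainly in how training examples are built from a sliding context window. This sketch only generates those examples; the shallow neural network that Word2Vec actually trains on them (with tricks such as negative sampling) is omitted. In Gensim, the same choice is made with the sg parameter (sg=1 for Skip-Gram, sg=0 for CBOW), and the window size with window.

```python
def skipgram_pairs(tokens, window=2):
    """(center, context) pairs that Skip-Gram learns to predict."""
    pairs = []
    for i, center in enumerate(tokens):
        lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i:
                pairs.append((center, tokens[j]))
    return pairs


def cbow_examples(tokens, window=2):
    """(context words, target) examples that CBOW learns from."""
    examples = []
    for i, target in enumerate(tokens):
        lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
        context = [tokens[j] for j in range(lo, hi) if j != i]
        examples.append((context, target))
    return examples


sentence = ["i", "am", "reading", "a", "book"]
print(cbow_examples(sentence)[-1])   # (['reading', 'a'], 'book')
print(skipgram_pairs(sentence)[:2])  # [('i', 'am'), ('i', 'reading')]
```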

GloVe: Global Vectors for Word Representation

- GloVe (Global Vectors) is a count-based model developed by Stanford University.

- Unlike Word2Vec, which focuses on predicting words, GloVe creates word vectors by analyzing how often words appear together in a massive text corpus.

- The main idea is that the meaning of a word can be derived from the company it keeps (its co-occurrence with other words).

- For example, the vector for “king” is close to “queen,” but it also reflects relationships with “man,” “woman,” and “royalty.”
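GloVe starts from a word-word co-occurrence matrix, and the counting step can be sketched as follows. This is a simplification: the original GloVe also down-weights pairs that are far apart within the window, and then fits word vectors so that their dot products approximate the logarithm of these counts; that fitting step is not shown. The two sentences are made up.

```python
from collections import Counter


def cooccurrence_counts(sentences, window=2):
    """Count how often each (word, context word) pair appears within `window` tokens."""
    counts = Counter()
    for tokens in sentences:
        for i, word in enumerate(tokens):
            for j in range(max(0, i - window), min(len(tokens), i + window + 1)):
                if j != i:
                    counts[(word, tokens[j])] += 1
    return counts


# Hypothetical corpus: "king" and "queen" share the same neighbors
corpus = [
    ["the", "king", "wore", "a", "crown"],
    ["the", "queen", "wore", "a", "crown"],
]
counts = cooccurrence_counts(corpus)
print(counts[("king", "wore")], counts[("queen", "wore")])  # 1 1
```

Because “king” and “queen” end up with similar rows of counts, the fitted vectors for them also end up close together.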

Why Static Embeddings are Called Static

- The word vectors generated by Word2Vec or GloVe do not change based on the context of the sentence.

- For example, the word “apple” always has the same vector, whether it is used in the context of “fruit” or “technology.”

5. Introducing Gensim and Why Use It?

Gensim is an open-source Python library specifically designed for topic modeling, document indexing, and natural language processing. It provides a fast and memory-efficient implementation of various NLP algorithms, including Word2Vec, Doc2Vec, and FastText.

How Gensim is Used for Fine-Tuning

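- Gensim allows you to load a pre-trained Word2Vec model and continue training it on new text data. This requires a full model saved with model.save(); vectors loaded only as KeyedVectors (for example, from the original word2vec C format) cannot be trained further.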

- This process is known as fine-tuning and is especially useful when you want to adapt a general model to a specific domain (like finance, healthcare, or legal text).

Why Fine-Tuning is Important

Imagine you have a Word2Vec model trained on general news articles, and now you want to use it to analyze financial documents. Fine-tuning lets you adapt the existing model to financial terminology and relationships without starting from scratch.

Example Use Case:

- Initial Model: A Word2Vec model trained on general text (news articles).

- Fine-Tuned Model: The same model, further trained on financial news articles.

- Result: The model should now capture financial terms such as “bull market,” “hedge fund,” and “leveraged buyout” better.
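Word2Vec learns one vector per token, so multi-word terms such as “hedge fund” are only learned as units if they are merged into single tokens before training. The sketch below uses a hand-written phrase list and a hypothetical helper, join_phrases; in practice, Gensim’s Phrases model can detect such collocations automatically from corpus statistics.

```python
def join_phrases(tokens, phrases):
    """Merge known multi-word terms into single underscore-joined tokens."""
    out, i = [], 0
    while i < len(tokens):
        for phrase in phrases:
            n = len(phrase)
            if tuple(tokens[i:i + n]) == phrase:
                out.append("_".join(phrase))
                i += n
                break
        else:
            # No phrase starts here: keep the single token
            out.append(tokens[i])
            i += 1
    return out


# Hypothetical, hand-written list of financial phrases
finance_phrases = [("leveraged", "buyout"), ("hedge", "fund"), ("bull", "market")]
print(join_phrases(["the", "hedge", "fund", "bet", "on", "a", "bull", "market"], finance_phrases))
# ['the', 'hedge_fund', 'bet', 'on', 'a', 'bull_market']
```

The merged tokens (for example, hedge_fund) can then be passed to build_vocab and train like any other word.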

Code Snippet for Training Word2Vec with Gensim

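```python
from gensim.models import Word2Vec

# Sample corpus: each sentence is a list of tokens
sentences = [
    ['hello', 'world'],
    ['machine', 'learning', 'is', 'amazing'],
    ['word', 'embedding', 'with', 'gensim'],
]

# Train a Word2Vec model (Gensim 4.x parameter names)
# vector_size: number of embedding dimensions
# window: maximum distance between the current and predicted word
# min_count=1: keep every word; sg=1: Skip-Gram (sg=0 would be CBOW)
model = Word2Vec(sentences, vector_size=100, window=5, min_count=1, sg=1)

# Get the 100-dimensional vector for a word
print(model.wv['hello'])

# Find the nearest words (not meaningful on a corpus this tiny)
print(model.wv.most_similar('hello'))
```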

While training a Word2Vec model from scratch is useful, fine-tuning an existing model can save time and improve its performance on specific tasks. Here are the key differences between training and fine-tuning:

- Training a Model from Scratch: This means starting from a randomly initialized model and learning everything from a large text corpus. It is time-consuming and computationally expensive.

- Fine-Tuning an Existing Model: This means taking a pre-trained model and continuing to train it on domain-specific data. It is usually faster and cheaper, and it keeps much of the model’s general knowledge while adding domain expertise.

Code Snippet for Fine-Tuning a Word2Vec Model with Gensim

```python
from gensim.models import Word2Vec

# Load a full Word2Vec model previously saved with model.save()
# (vectors-only KeyedVectors files cannot be trained further)
model = Word2Vec.load("your_pretrained_model.model")

# Define your domain-specific text corpus (example: financial terms)
new_sentences = [
    ['financial', 'market', 'stocks'],
    ['bank', 'loan', 'interest'],
    ['mortgage', 'default', 'risk'],
]

# Add new domain-specific words to the existing vocabulary.
# Words seen fewer times than the model's min_count are still skipped.
model.build_vocab(new_sentences, update=True)

# Fine-tune the model using the new sentences
model.train(
    new_sentences,                      # new domain-specific text data
    total_examples=len(new_sentences),  # number of sentences for training
    epochs=5,                           # number of training epochs (adjust as needed)
)

# Save the fine-tuned model for future use
model.save("fine_tuned_word2vec_gensim.model")

# Test the fine-tuned model (guard against words dropped by min_count)
if 'financial' in model.wv:
    print("Vector for 'financial':", model.wv['financial'])
    print("Most similar words to 'financial':", model.wv.most_similar('financial'))
```

6. The Rise of Contextual Models: Transformers and LLMs

Transformers are the breakthrough technology that powers modern LLMs. They were introduced in the groundbreaking paper “Attention Is All You Need” (Vaswani et al., 2017). Unlike static embedding models like Word2Vec, which create a single vector for each word, transformers generate contextual embeddings, meaning the vector representation of a word can change depending on the sentence it appears in.

How Transformers Work:

- They use a mechanism called “self-attention”, which allows the model to focus on different parts of the input text to understand word relationships.

- Each word’s vector representation is adjusted based on its context in the sentence.

- Transformers can be scaled into massive models, leading to LLMs like BERT, GPT, LLaMA, and Mistral.
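The self-attention step can be sketched as scaled dot-product attention, softmax(QK^T / sqrt(d)) V, from Vaswani et al. To keep it short, this sketch assumes Q, K, and V are simply the input vectors themselves; real transformers use learned projection matrices, multiple attention heads, and many stacked layers. The input vectors are hypothetical. The point is that “apple” enters both sentences with the same static vector but leaves with different contextual vectors.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with Q = K = V = input (no learned weights)."""
    d = len(vectors[0])
    outputs = []
    for q in vectors:
        # How strongly this token attends to every token in the sentence
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in vectors]
        weights = softmax(scores)
        # New vector = attention-weighted average of all value vectors
        outputs.append([sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(d)])
    return outputs


# Hypothetical static input vectors: "apple" has the SAME input vector in both sentences
static = {
    "apple": [1.0, 1.0, 0.0],
    "ate": [2.0, 0.0, 0.0],
    "an": [0.1, 0.1, 0.1],
    "bought": [0.0, 2.0, 0.0],
    "shares": [0.0, 2.0, 1.0],
}
s1 = ["ate", "an", "apple"]        # fruit sense
s2 = ["bought", "apple", "shares"]  # company sense
apple_in_s1 = self_attention([static[w] for w in s1])[2]
apple_in_s2 = self_attention([static[w] for w in s2])[1]
print([round(x, 2) for x in apple_in_s1])  # leans toward the "ate" dimension
print([round(x, 2) for x in apple_in_s2])  # leans toward the "bought/shares" dimension
```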

What Problem Do They Solve?

- Traditional models like Word2Vec have a major limitation: they generate static embeddings. The word “apple” has the same vector whether it means the fruit or the company.

- Transformers solve this by creating context-aware representations, making them much better for understanding the meaning of text in different scenarios.

- They power a wide range of NLP tasks, including text generation, sentiment analysis, question answering, and more.

Popular Transformer-Based Models:

- BERT (Bidirectional Encoder Representations from Transformers): Optimized for understanding text (NLP tasks like classification).

- GPT (Generative Pre-trained Transformer): Optimized for text generation (chatbots, content creation).

- LLaMA (Meta) and Mistral (Mistral AI): Openly released, decoder-only transformer models that deliver strong language understanding and generation with efficient training and inference.

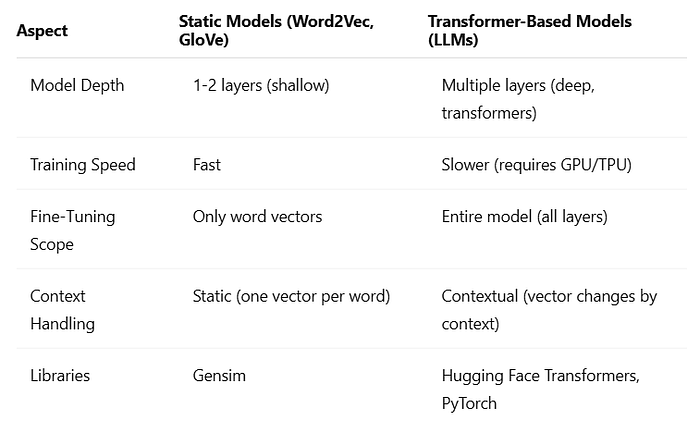

7. Comparing Fine-Tuning Techniques for Static Models and Transformer-Based Models

I hope this gave you a clear understanding of static embeddings and contextual AI models. In a future blog, I plan to dive deeper into Transformers, a technical topic in its own right!


Published via Towards AI


Note: Article content contains the views of the contributing authors and not Towards AI.