LLMs are not Stochastic Parrots: How Randomness Prevents Parroting, Not Causes It

Author(s): Antares

Originally published on Towards AI.

The term “stochastic parrots”, coined by Emily M. Bender, Timnit Gebru, Angelina McMillan-Major, and Margaret Mitchell in their 2021 FAccT paper “On the Dangers of Stochastic Parrots: Can Language Models Be Too Big?”, has created a fundamental misunderstanding, and unpacking it reveals a deeper truth about neural text generation.

Critics use this phrase to suggest that LLMs randomly regurgitate training data, but this characterization inverts the actual technical reality: neural language models are deterministic machines that we deliberately make stochastic. Without our careful injection of randomness, these models would be far more “parrot-like” than any critic imagines.

To understand this paradox, imagine a vast library — like Jorge Luis Borges’ Library of Babel — containing every possible book that could ever be written. A deterministic system would be like having a librarian who, when asked the same question, always walks to the exact same shelf and hands you the exact same book. Every time. Forever. This librarian would be the ultimate parrot, mechanically repeating the same response without variation.

Now imagine we transform this librarian, teaching them to sometimes reach for books on nearby shelves, occasionally selecting volumes that might be slightly less “perfect” but potentially more interesting, creative, or unexpected. This is exactly what we do with language models through stochastic sampling: we prevent them from being mechanical parrots by introducing controlled randomness. It is a lot like what we humans do; asked the same question, we answer slightly differently each time.

This paradox illuminates a profound insight: the stochasticity in LLMs is not a bug but a sophisticated feature — a mathematical necessity that prevents these deterministic systems from collapsing into repetitive, degenerate outputs. The neural network itself, given identical inputs, will always produce identical outputs. It is only through our algorithmic interventions during inference that these models exhibit the variability we observe. We slightly push them to be creative.

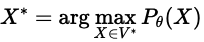

Consider the mathematical reality: a trained neural network with fixed weights θ implements a deterministic function f_θ: V* → ℝᴷ that maps input sequences to logit vectors, which the softmax turns into probability distributions. Without stochastic sampling, decoding would always select argmax_{x∈V} P_θ(x|x_{<t}) at each step, producing identical text for identical prompts: a true “deterministic parrot” that would repeat the same phrases endlessly.

This insight connects to fundamental questions in computability theory. The Church-Turing thesis, formulated independently by Alonzo Church and Alan Turing in 1936, holds that any effectively calculable function can be computed by a Turing machine, a deterministic computational model. Modern neural networks, implemented on digital computers, are bound by this same deterministic framework.

Yet, in pursuit of more human-like language generation, we deliberately break this determinism by injecting randomness through sampling strategies such as temperature scaling or nucleus (top-p) sampling. This controlled stochasticity prevents outputs from collapsing into rigid, repetitive patterns and is central to the creative behaviour we attribute to large language models.

This essay provides a mathematical treatment of why we must transform these deterministic computations into stochastic outputs, examining how this deliberate randomization addresses fundamental theoretical limitations and prevents the very parroting behavior that critics fear.

Feel free to skip the math parts; you will still get a good grasp of why LLMs behave the way they do.

TL;DR 🙂

- Language models are deterministic functions: f_θ: V* → ℝᴷ

- MLE training creates repetition bias: P(x_t|x_{<t}) overweights frequent patterns

- Exposure bias compounds errors: D_KL(q_train || q_inf) grows with sequence length

- Repetition grows exponentially: P(repetition) ≈ c·λ₁^t where λ₁ → 1

- Entropy collapses monotonically: H_{t+1} ≤ H_t − β

- MAP decoding fails: argmax P_θ(X) produces degenerate text

- Temperature breaks determinism: P_T ∝ exp(logit/T)

- Nucleus sampling adapts to confidence: |V_p| varies with H(P_θ)

- Entropy increases with randomness: ∂H/∂T > 0

- Error bounds guide parameters: ε_nucleus ≤ (1-p)·max Q(x) + √(2·D_KL)

- Variance-bias decomposition: MSE = Bias² + Variance

- Information theory sets limits: I(X;K) must exceed threshold

- Confidence-aware adjustment: T ∝ exp(-γ·C)

- Factuality preservation: p = p₀ + (1-p₀)·sigmoid(expected_factuality)

- Real-time optimization: balancing creativity and accuracy dynamically

Notation

While the following sections contain formal mathematical notation, each equation is accompanied by plain-language explanation. The mathematics serves as illustration, but the intuitions are accessible to all readers. Key symbols used throughout:

- P(x): Probability of event x

- θ: Model parameters (the “brain” of the model)

- H: Entropy (a measure of uncertainty/variety)

- T: Temperature (the “randomness dial”)

- 𝔼: Expected value (average over many trials)

Let's start!

The Core Concept

At the heart of our argument is a simple but profound mathematical fact:

Deterministic selection from a probability distribution P(x|context) always chooses x* = argmax P(x|context), creating a many-to-one mapping that collapses infinite possible conversations into a single, repetitive output.

In contrast:

Stochastic sampling transforms this into a one-to-many mapping, where identical contexts can produce varied outputs, preventing mechanical repetition while respecting the model’s learned knowledge.

This fundamental difference — between always taking the same path versus exploring different paths — underlies everything that follows.

We start by examining how language models work mathematically — showing that they’re fundamentally deterministic functions that, without intervention, will always produce identical outputs for identical inputs.

We then prove that deterministic decoding doesn’t just lead to repetition — it creates exponential amplification of repetitive patterns, mathematical proof that these systems become “perfect parrots.”

We analyze how temperature and other sampling methods mathematically break the repetition cycles, introducing controlled variation that prevents mechanical parroting.

We derive mathematical bounds showing how to balance randomness — enough to prevent parroting, not so much that we generate nonsense.

Finally, we connect to deeper mathematical principles showing this isn’t just a hack but a fundamental requirement rooted in information theory and the nature of language itself.

Mathematical Foundations of Language Modeling

Autoregressive Factorization and Sequence Probability

At its core, a language model is trying to solve a seemingly simple problem: given some words, predict the next word (token). But this creates unexpected challenges.

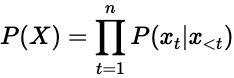

Language models fundamentally estimate the probability distribution P(X) over sequences X = (x₁, x₂, …, xₙ) where each xᵢ ∈ V represents a token from vocabulary V with |V| = K. Using the chain rule of probability, we factorize:

$$P(X) = \prod_{t=1}^{n} P(x_t \mid x_{<t})$$

where x_{<t} = (x₁, …, x_{t−1}) denotes the prefix context.

This equation reveals that generating text is like making a series of dependent choices. Each word depends on all previous words, creating a cascade effect. If we always pick the most likely word at each step (deterministic), small biases get amplified into massive repetition. It’s like compound interest, but for repetition — each repeated word makes future repetitions more likely.
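To make the factorization concrete, the Python sketch below scores a sequence by summing conditional log-probabilities. The `cond_prob` table and the `sequence_log_prob` helper are hypothetical toys: for brevity each conditional depends only on the previous token, whereas a real language model conditions on the entire prefix x_{<t}.

```python
import math

# Hypothetical toy conditionals P(next | previous); a real model
# conditions on the whole prefix x_{<t}, not just the last token.
cond_prob = {
    "<s>": {"the": 0.6, "a": 0.4},
    "the": {"cat": 0.5, "dog": 0.5},
    "a": {"cat": 0.3, "dog": 0.7},
    "cat": {"sat": 0.9, "ran": 0.1},
    "dog": {"sat": 0.2, "ran": 0.8},
}

def sequence_log_prob(tokens):
    """Chain rule: log P(X) = sum over t of log P(x_t | context)."""
    log_p = 0.0
    prev = "<s>"  # start-of-sequence context
    for tok in tokens:
        log_p += math.log(cond_prob[prev][tok])
        prev = tok
    return log_p

print(math.exp(sequence_log_prob(["the", "cat"])))         # about 0.6 * 0.5 = 0.3
print(math.exp(sequence_log_prob(["the", "cat", "sat"])))  # about 0.3 * 0.9 = 0.27
```

Every additional token multiplies in a factor of at most 1, so extending a sequence can never raise its probability; this length effect reappears in the MAP decoding discussion later in the article.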

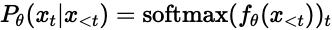

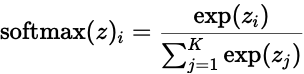

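Neural language models parameterize these conditional distributions using deep networks with parameters θ:

$$P_\theta(x_t \mid x_{<t}) = \operatorname{softmax}\big(f_\theta(x_{<t})\big)_{x_t}$$

where f_θ: V* → ℝᴷ maps variable-length sequences to logit vectors, and the softmax function is defined as:

$$\operatorname{softmax}(z)_i = \frac{\exp(z_i)}{\sum_{j=1}^{K} \exp(z_j)}$$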

This shows that the neural network f_θ is fundamentally a deterministic function — given the same input, it always produces the same output. The randomness must come from how we use these probabilities, not from the network itself.

The exponential function in softmax creates winner-take-all dynamics. Small differences in logits become large differences in probabilities. In deterministic mode, this means the rich get richer: already probable words become overwhelmingly dominant, creating the repetition problem.
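A quick numerical illustration, using a hypothetical logit vector: under softmax the ratio of two probabilities is exp(z_i − z_j), independent of all other logits, so a logit gap of one unit already means a factor of e ≈ 2.7. The helper name `softmax` is our own, not a library function.

```python
import math

def softmax(logits):
    """Numerically stable softmax: subtract the max logit before exponentiating."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

probs = softmax([3.0, 2.0, 1.0])       # logits one unit apart
print([round(x, 3) for x in probs])    # about [0.665, 0.245, 0.09]
print(probs[0] / probs[1])             # about 2.718, i.e. e
```

Greedy decoding then ignores the remaining third of the probability mass entirely and always returns index 0.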

Maximum Likelihood Estimation and Its Limitations

The way we train these models creates the very problems that randomness must solve. Understanding this training process explains why even brilliantly designed models need stochastic sampling.

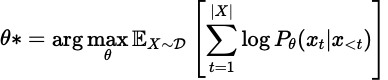

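Training via maximum likelihood estimation (MLE) seeks the parameters θ* that maximize the expected log-likelihood of the training data:

$$\theta^* = \arg\max_{\theta} \; \mathbb{E}_{X \sim \mathcal{D}}\left[\sum_{t=1}^{n} \log P_\theta(x_t \mid x_{<t})\right]$$

where 𝒟 represents the training data distribution. This objective exhibits several theoretical limitations.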

This objective function has a hidden flaw. It optimizes for predicting the next word correctly on average, but doesn’t consider what happens when we string many predictions together. It’s like training a chess player to make good individual moves without teaching them to plan a whole game.

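The MLE objective optimizes local conditional distributions without considering global sequence coherence. By the chain rule, ∑ₜ log P_θ(xₜ|x_{<t}) equals log P_θ(X) exactly, so MLE does fit sequence probabilities, but only on sequences drawn from 𝒟 with ground-truth prefixes. Nothing in the objective constrains how the model behaves on prefixes it generates itself, which is exactly where generation happens.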

In practice, this yields models that are good at predicting the next word but can be poor at generating coherent sequences on their own. Without randomness to break out of local patterns, the model gets trapped in repetitive loops, which is the mathematical foundation of the parroting problem.

Consider two models θ₁, θ₂ where θ₁ assigns higher probability to individual tokens but θ₂ maintains better long-range dependencies. The MLE objective may prefer θ₁ despite θ₂ generating more coherent sequences.

The Exposure Bias Problem

We’ve discovered that training creates biases, but there’s an even more insidious problem lurking in the mathematics. During training, models learn from perfect examples — like a student who only practices with answer keys. But during use, they must work with their own imperfect outputs. This mismatch, called exposure bias, is where deterministic generation truly falls apart.

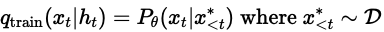

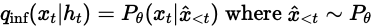

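Define the training distribution as the distribution of prefixes the model is conditioned on during training, which come from the data:

$$q_{\text{train}}(x_{<t}) = P_{\mathcal{D}}(x_{<t})$$

And the inference distribution as the distribution of prefixes the model generates itself:

$$q_{\text{inf}}(x_{<t}) = \prod_{s=1}^{t-1} P_\theta(x_s \mid x_{<s})$$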

During training, the model always sees perfect previous words (like learning to drive in perfect conditions). During use, it sees its own outputs (like driving in real traffic). This mismatch becomes catastrophic in deterministic mode — one wrong word leads to increasingly wrong contexts.

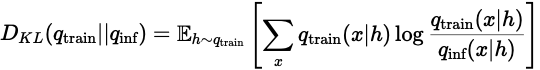

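The exposure bias manifests as a distributional shift:

$$D_{\mathrm{KL}}\big(q_{\text{train}} \,\|\, q_{\text{inf}}\big) = \mathbb{E}_{x_{<t} \sim q_{\text{train}}}\left[\log \frac{q_{\text{train}}(x_{<t})}{q_{\text{inf}}(x_{<t})}\right]$$

This KL divergence grows with sequence length due to error accumulation, creating a compound effect where small deviations cascade into larger distributional mismatches.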

This measures how different the training and inference distributions are. The mathematical beauty is that it grows with sequence length — proving that deterministic generation gets worse and worse over time, while stochastic sampling can reset and recover from errors.
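The additive part of this growth can be seen in a deliberately simplified setting. The sketch below assumes, purely for illustration, that both processes emit tokens independently from fixed distributions (the hypothetical `q_train` and `q_inf` dictionaries). Under that assumption the chain rule for KL divergence makes the divergence between length-n sequences exactly n times the per-token divergence. Real exposure bias also involves prefix-dependent errors, which this toy does not model.

```python
import itertools
import math

def kl(p, q):
    """D_KL(p || q) for two distributions given as dicts over the same support."""
    return sum(p[x] * math.log(p[x] / q[x]) for x in p if p[x] > 0)

def sequence_kl(p, q, n):
    """KL between distributions over length-n sequences of i.i.d. tokens,
    computed by brute-force enumeration of all |V|^n sequences."""
    total = 0.0
    for seq in itertools.product(p.keys(), repeat=n):
        p_seq = math.prod(p[x] for x in seq)
        q_seq = math.prod(q[x] for x in seq)
        total += p_seq * math.log(p_seq / q_seq)
    return total

q_train = {"a": 0.5, "b": 0.3, "c": 0.2}  # hypothetical data distribution
q_inf = {"a": 0.6, "b": 0.3, "c": 0.1}    # hypothetical model distribution

for n in range(1, 5):
    # The two columns agree: the divergence accumulates linearly with length.
    print(n, round(sequence_kl(q_train, q_inf, n), 4), round(n * kl(q_train, q_inf), 4))
```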

So far, we explored three critical facts.

1. Models are deterministic functions: Without randomness injection, they always produce identical outputs

2. Training creates systematic biases: MLE optimization makes models prone to repetition

3. Error compounds over time: The exposure bias means deterministic generation gets progressively worse

Ready for the next part?

Deterministic Decoding Pathologies

Armed with an understanding of how language models work, we now examine what happens when we use them deterministically — always picking the most probable next word. We will reveal something shocking: deterministic decoding doesn’t just lead to some repetition; it creates an exponential explosion of parroting behavior that eventually consumes all output. This section provides the mathematical explanation that without randomness, these models become perfect mechanical parrots.

Greedy Decoding and Mode Collapse

The mathematics of greedy decoding reveals precisely why deterministic systems become parrots. By always choosing the highest probability option, we create a mathematical feedback loop that amplifies repetition. Greedy decoding selects:

$$\hat{x}_t = \arg\max_{x \in V} P_\theta(x \mid \hat{x}_{<t})$$

At each step, we pick the word with the highest probability — no exceptions, no variation. It’s like always ordering the #1 item on every menu. This might seem logical, but the mathematics shows it leads to disaster.

Under greedy decoding, the probability of generating repetitive sequences increases super-linearly with context length. This doesn’t just mean repetition increases — it means it explodes. Like a snowball rolling downhill, repetition doesn’t just add up; it multiplies upon itself exponentially.

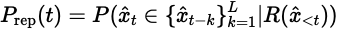

Let R(x_{<t}) indicate whether x_{<t} contains repetitive patterns. Define the repetition probability:

$$P_{\text{rep}}(t) = P\big(x_t \in \{x_{t-L}, \dots, x_{t-1}\} \;\big|\; R(x_{<t})\big)$$

This measures the probability that the next word will be a repeat of something said in the last L words. The conditioning on R(x_{<t}) shows how past repetition predicts future repetition. Due to attention mechanisms that amplify repeated patterns:

where α > 0 represents the repetition bias coefficient. This creates a positive feedback loop leading to degenerate outputs. Each repetition makes the next repetition more likely by a factor of α. After n repetitions, the effect is compounded n times. This is why deterministic models don’t just repeat occasionally — they get stuck in infinite loops.
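A toy illustration of the feedback loop, assuming a hypothetical bigram table in place of a neural model. Because the next token here depends only on the previous one, greedy decoding is a deterministic map on a finite set of states, so it must eventually revisit a state and then cycle forever; sampling from the same table escapes the cycle. A transformer conditions on the whole prefix, so this is an analogy for the loop described above, not a proof of it.

```python
import random

# Hypothetical bigram probabilities P(next | previous).
bigram = {
    "the": {"cat": 0.6, "mat": 0.4},
    "cat": {"sat": 0.7, ".": 0.3},
    "sat": {"on": 0.8, ".": 0.2},
    "on": {"the": 0.9, ".": 0.1},
    "mat": {".": 0.6, "on": 0.4},
    ".": {"the": 1.0},
}

def greedy(start, steps):
    """Always append the single most probable next token."""
    out = [start]
    for _ in range(steps):
        dist = bigram[out[-1]]
        out.append(max(dist, key=dist.get))
    return out

def sample(start, steps, rng):
    """Append a token drawn in proportion to its probability."""
    out = [start]
    for _ in range(steps):
        dist = bigram[out[-1]]
        tokens = list(dist)
        out.append(rng.choices(tokens, weights=[dist[t] for t in tokens], k=1)[0])
    return out

print(" ".join(greedy("the", 11)))  # the cat sat on the cat sat on the cat sat on
print(" ".join(sample("the", 11, random.Random(0))))
```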

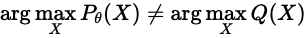

The MAP Decoding Paradox

This paradox is perhaps the most counterintuitive finding in language modeling, and understanding it mathematically explains why we need randomness. Maximum a posteriori (MAP) decoding seeks:

$$\hat{X}_{\text{MAP}} = \arg\max_{X} P_\theta(X)$$

This finds the single most probable sequence under the model. You’d think this would be the best possible output, but the MAP solution under an MLE-trained model often yields poor text quality as measured by human evaluation.

In other words, “most probable” ≠ “best” in language. The most likely sequence according to the model is often terrible according to humans. This mathematical fact alone justifies the need for stochastic sampling.

This paradox arises from three effects.

- Length bias: shorter sequences tend to have higher probability because fewer factors smaller than 1 are multiplied together, so P(short sentence) is usually greater than P(long sentence) and MAP decoding prefers saying less, or nothing at all.

- Probability concentration: neural models often assign disproportionate mass to common patterns, so the probability landscape has sharp peaks at boring, repetitive sequences, while creative, interesting text lies in the valleys between those peaks.

- Dataset bias: the most probable sequences reflect frequencies in the training data rather than quality. If “the” appears most often in training, MAP might generate “the the the…”. Statistical frequency ≠ communicative value.

Mathematically, if Q(X) represents human quality judgments:

$$Q\Big(\arg\max_{X} P_\theta(X)\Big) \ll \max_{X} Q(X)$$

This inequality is why we need randomness. Since the most probable path leads to poor quality, we must explore less probable but higher quality regions of the text space.
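The length bias behind this inequality can be reproduced exactly on a hypothetical toy model. The sketch below assumes a three-token vocabulary in which, at every step, the model stops with probability 0.3 and otherwise emits one of the three tokens uniformly; it then finds the true MAP sequence by brute-force enumeration. The mode is the empty output, even though 70% of the probability mass goes to non-empty outputs, which mirrors the empty-translation finding reported by Stahlberg and Byrne (2019).

```python
import itertools

P_EOS = 0.3                          # hypothetical stop probability at every step
VOCAB = ["a", "b", "c"]
P_TOKEN = (1 - P_EOS) / len(VOCAB)   # each real token gets 0.7 / 3, about 0.233

def seq_prob(seq):
    """P(seq followed by <eos>) under the toy model (tokens are i.i.d.)."""
    return P_TOKEN ** len(seq) * P_EOS

def map_decode(max_len):
    """Exact MAP search: enumerate every sequence up to max_len tokens."""
    candidates = (
        seq
        for n in range(max_len + 1)
        for seq in itertools.product(VOCAB, repeat=n)
    )
    return max(candidates, key=seq_prob)

best = map_decode(4)
print(best, seq_prob(best))   # () 0.3 : the empty sequence is the mode
print(1 - seq_prob(()))       # mass on non-empty outputs, about 0.7
```

The best non-empty sequence scores only about 0.233 × 0.3 ≈ 0.07, so no amount of search over longer outputs can beat saying nothing.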

So far we have shown that deterministic decoding leads to:

– Exponentially growing repetition

– Outputs that are maximally probable but minimally useful

– Complete entropy collapse and mechanical parroting

This establishes the problem. Now we turn to the solution: how can we mathematically inject just enough randomness to prevent these pathologies while maintaining coherent generation? The following sections provide a framework for this delicate balance.

Stochastic Sampling

Having proven that deterministic decoding leads to catastrophic parroting, we now turn to the solution. This section examines how injecting controlled randomness — through temperature, nucleus sampling, and other methods — mathematically breaks the repetition cycles. We’ll see that these aren’t ad-hoc fixes but principled solutions grounded in probability theory and information theory. Most importantly, we’ll show that the right amount of randomness transforms mechanical parrots into intelligent conversationalists.

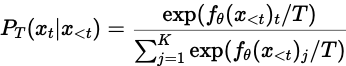

Temperature-Controlled Sampling

Temperature is the master control for randomness in language models. Understanding its mathematics reveals how we can precisely tune the balance between repetitive parroting and creative expression. Temperature scaling modifies the logit distribution:

$$P_T(x \mid x_{<t}) = \frac{\exp(z_x / T)}{\sum_{j \in V} \exp(z_j / T)}, \qquad z = f_\theta(x_{<t})$$

- The division by T in the exponent is the key: it scales how much we care about probability differences

- When T → 0: Small differences in logits become huge differences in probabilities (winner-take-all)

- When T → ∞: All probabilities become equal (uniform randomness)

- T = 1: The model’s original probabilities, unmodified (it is a bit more complicated, but for the sake of simplicity let’s leave it at that)
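The three regimes above can be reproduced with a few lines of Python. This is a minimal sketch assuming raw logits are available as a list; `softmax_with_temperature` is a hypothetical helper name, and T = 0 is rejected because the formula divides by T (greedy argmax is the usual stand-in for that limit).

```python
import math

def softmax_with_temperature(logits, T):
    """P_T(x) = exp(z_x / T) / sum_j exp(z_j / T), for T > 0."""
    if T <= 0:
        raise ValueError("T must be positive; use argmax for the T -> 0 limit")
    scaled = [z / T for z in logits]
    m = max(scaled)  # subtract the max before exp for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

logits = [2.0, 1.0, 0.5]  # hypothetical logits for three tokens
for T in (0.1, 1.0, 10.0):
    print(T, [round(p, 3) for p in softmax_with_temperature(logits, T)])
# T = 0.1: nearly all mass on the first token (close to greedy)
# T = 1.0: the model's own softmax distribution
# T = 10.0: close to uniform
```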

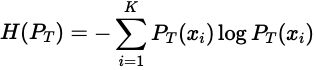

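The entropy H of the sampling distribution relates to temperature as:

$$\frac{\partial H(P_T)}{\partial T} = \frac{\operatorname{Var}_{x \sim P_T}[z_x]}{T^{3}}$$

with:

$$\operatorname{Var}_{x \sim P_T}[z_x] = \sum_{x \in V} P_T(x)\, z_x^{2} - \Big(\sum_{x \in V} P_T(x)\, z_x\Big)^{2}$$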

Entropy measures uncertainty or “spread” in the distribution. Low entropy means we’re almost certain about the next word (parrot-like). High entropy means many words are possible (creative).

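Because a variance is never negative, this derivative shows that entropy never decreases as temperature rises, and it strictly increases unless all logits are equal. The rate of increase is set by Var_{P_T}(z), the spread of the logits weighted by the current sampling distribution. It is small when P_T is concentrated on a single token (a very confident model) and also small when the logits are nearly equal (the distribution is already close to uniform), so temperature changes the output distribution most in between, when several tokens share substantial probability at clearly different logits.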

This proves that entropy increases monotonically with temperature, providing theoretical justification for temperature as a diversity control parameter.
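Since H(P_T) = log Z(T) − 𝔼_{P_T}[z]/T, where Z(T) = ∑_j exp(z_j/T) is the softmax normalizer, differentiating gives ∂H/∂T = Var_{P_T}(z)/T³. The sketch below, assuming an arbitrary hypothetical logit vector, checks that identity against a central finite difference and confirms the derivative is positive.

```python
import math

def temperature_probs(logits, T):
    """Softmax of logits / T (numerically stable)."""
    scaled = [z / T for z in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def entropy(probs):
    """Shannon entropy in nats."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def dH_dT_closed_form(logits, T):
    """Var_{P_T}(z) / T^3: variance of the logits under the tempered distribution."""
    p = temperature_probs(logits, T)
    mean = sum(pi * z for pi, z in zip(p, logits))
    var = sum(pi * (z - mean) ** 2 for pi, z in zip(p, logits))
    return var / T ** 3

def dH_dT_numeric(logits, T, h=1e-5):
    """Central finite difference of H(P_T) with respect to T."""
    return (entropy(temperature_probs(logits, T + h))
            - entropy(temperature_probs(logits, T - h))) / (2 * h)

logits = [2.0, 1.0, 0.5, -1.0]  # hypothetical logits
for T in (0.5, 1.0, 2.0):
    print(T, dH_dT_closed_form(logits, T), dH_dT_numeric(logits, T))
```

With all logits equal, the variance, and hence the derivative, is zero, because the distribution is already uniform at every temperature.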

Nucleus (Top-p) Sampling

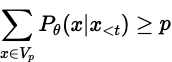

Nucleus sampling represents a breakthrough in controlling randomness. Instead of using all words (including nonsensical ones) or a fixed number of words, it dynamically adjusts based on the model’s confidence. Nucleus sampling constructs a minimal set V_p ⊆ V such that:

$$\sum_{x \in V_p} P_\theta(x \mid x_{<t}) \ge p$$

This says “find the smallest set of words that together have at least probability p.” If the model is confident, this might be just 2–3 words. If uncertain, it might be hundreds. The algorithm adapts to the model’s confidence at each step.

Algorithm (Efficient Nucleus Sampling)

Input: Logits z ∈ ℝᴷ, threshold p ∈ (0,1]

1. Compute probabilities: π = softmax(z)

2. Sort indices: σ such that π_{σ(1)} ≥ π_{σ(2)} ≥ … ≥ π_{σ(K)}

3. Find cutoff: k* = min{k : ∑ᵢ₌₁ᵏ π_{σ(i)} ≥ p}

4. Renormalize: P̃(x) = π_x / ∑ᵢ₌₁^{k*} π_{σ(i)} if x ∈ {σ(1),…,σ(k*)}, and P̃(x) = 0 otherwise

5. Sample: x ~ P̃

With vocabularies of 50,000+ words, efficiency is crucial. This algorithm is fast enough to run in real-time while being sophisticated enough to prevent both repetition and nonsense.
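The five steps above translate almost line for line into Python. This is a sketch over a plain list of logits, not any particular library's implementation; `nucleus_sample` is a hypothetical name, and `random.choices` handles the renormalization in step 4 because it accepts unnormalized weights.

```python
import math
import random

def nucleus_sample(logits, p, rng=random):
    """Top-p (nucleus) sampling over a list of logits, with p in (0, 1].

    Returns (sampled index, nucleus size k*).
    """
    # 1. Probabilities via a numerically stable softmax.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    # 2. Indices sorted by descending probability.
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    # 3. Smallest prefix of the sorted list whose mass reaches p.
    nucleus, cumulative = [], 0.0
    for i in order:
        nucleus.append(i)
        cumulative += probs[i]
        if cumulative >= p:
            break
    # 4-5. random.choices normalizes the weights, i.e. renormalizes
    # inside the nucleus, and draws one index.
    weights = [probs[i] for i in nucleus]
    return rng.choices(nucleus, weights=weights, k=1)[0], len(nucleus)

rng = random.Random(0)
print(nucleus_sample([5.0, 1.0, 0.5, 0.0], 0.9, rng))  # (0, 1): confident, nucleus of one
print(nucleus_sample([1.0, 0.9, 0.8, 0.7], 0.9, rng))  # nucleus of size 4 (index varies)
```

The confident distribution puts about 0.97 on its top token, so one token already covers p = 0.9, while the flat one needs all four; this is the adaptivity described above.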

Top-k Sampling

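Top-k provides a simpler alternative that still prevents parroting while being easier to understand and implement. Top-k sampling restricts to the k highest probability tokens:

$$\tilde{P}(x) = \begin{cases} \dfrac{P_\theta(x \mid x_{<t})}{\sum_{x' \in V_k} P_\theta(x' \mid x_{<t})} & \text{if } x \in V_k \\ 0 & \text{otherwise} \end{cases}$$

where V_k is the set of the k most probable tokens.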

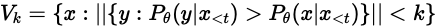

“Only consider the k most likely words.” This is like having a shortlist of candidates rather than considering everyone. The expected number of tokens with non-negligible probability follows:

$$k_{\text{eff}} \approx \exp\big(H(P_\theta(\cdot \mid x_{<t}))\big)$$

Entropy thus has an exponential relationship with the effective vocabulary size, which is the perplexity of the next-token distribution. With entropy measured in nats, a model with entropy 10 effectively chooses among e¹⁰ ≈ 22,000 words, while entropy 5 means only e⁵ ≈ 148 effective choices (in bits, the base is 2 rather than e).

This justifies adaptive k selection based on entropy estimates — when the model has high entropy (uncertainty), we need larger k to capture the distribution properly.
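A sketch of top-k sampling together with the entropy-based effective vocabulary size e^H (entropy in nats). The rule in `entropy_adaptive_k`, setting k to the rounded effective vocabulary size, is a hypothetical heuristic chosen for illustration, not a standard method.

```python
import math
import random

def top_k_sample(probs, k, rng=random):
    """Keep the k most probable indices, renormalize, and draw one."""
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    return rng.choices(top, weights=[probs[i] for i in top], k=1)[0]

def effective_vocab_size(probs):
    """exp(H) with H in nats, i.e. the perplexity of the distribution."""
    h = sum(p * math.log(1.0 / p) for p in probs if p > 0)
    return math.exp(h)

def entropy_adaptive_k(probs, k_min=1, k_max=None):
    """Hypothetical heuristic: k = rounded effective vocabulary size."""
    k_max = k_max or len(probs)
    return max(k_min, min(k_max, round(effective_vocab_size(probs))))

peaked = [0.97, 0.01, 0.01, 0.01]
flat = [0.25, 0.25, 0.25, 0.25]
print(entropy_adaptive_k(peaked), entropy_adaptive_k(flat))  # 1 4
print(top_k_sample(flat, entropy_adaptive_k(flat), random.Random(0)))
```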

Advanced Sampling Theory

Typical Sampling and Information Theory

Typical sampling represents a profound connection between language generation and fundamental information theory — it samples not from the most probable sequences, but from the “typical” ones that actually occur in natural communication.

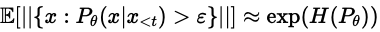

Recent work explores sampling from the “typical set” defined by information theory. For a distribution P with entropy H (in bits) over sequences of length n, the typical set A_ε^(n) satisfies:

$$P\big(A_\varepsilon^{(n)}\big) > 1 - \varepsilon \quad \text{for sufficiently large } n$$

and for all sequences x ∈ A_ε^(n):

$$2^{-n(H + \varepsilon)} \le P(x) \le 2^{-n(H - \varepsilon)}$$

This says typical sequences all have probability close to 2^(-nH). They’re neither too probable (boring) nor too improbable (nonsense); they live in the goldilocks zone of language.

Surprisingly, the most probable sequences are often not typical! In natural language:

- Most probable: “The the the…” (high probability, never occurs)

- Typical: “The cat sat on the mat” (medium probability, actually occurs)

- Rare: Random character strings (low probability, never occurs)

The typical sampling algorithm looks as follows:

1. Estimate conditional entropy: H̃ = -∑ᵢ P_θ(xᵢ|x_{<t}) log P_θ(xᵢ|x_{<t})

2. Define typical range: [τ₁, τ₂] = [H̃ − ε, H̃ + ε]

3. Filter vocabulary: V_typ = {x : -log P_θ(x|x_{<t}) ∈ [τ₁, τ₂]}

4. Sample uniformly: x ~ Uniform(V_typ)

Once we’ve identified typical words, they’re all equally “natural” — choosing among them uniformly prevents bias toward repetition.
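The four steps can be sketched in Python as follows, working on a single next-token distribution. The fallback for an empty band is our own assumption, since the steps above do not say what to do when no token's surprisal falls within ε of the entropy. For comparison, the published locally typical sampling of Meister, Pimentel, Wiher and Cotterell keeps tokens in order of |−log p − H| until their cumulative probability reaches a threshold, and samples in proportion to the renormalized probabilities rather than uniformly.

```python
import math
import random

def typical_sample(probs, eps, rng=random):
    """Typical sampling following the four steps above (a sketch).

    probs: next-token probabilities P_theta(x | x_<t); eps: band half-width.
    Returns (sampled index, typical set).
    """
    # 1. Conditional entropy of the next-token distribution, in nats.
    h = sum(p * math.log(1.0 / p) for p in probs if p > 0)
    # 2-3. Keep tokens whose surprisal -log p lies in [h - eps, h + eps].
    typical = [i for i, p in enumerate(probs)
               if p > 0 and h - eps <= -math.log(p) <= h + eps]
    # Assumption (not in the steps above): if the band is empty,
    # fall back to the single token whose surprisal is closest to h.
    if not typical:
        support = [i for i, p in enumerate(probs) if p > 0]
        typical = [min(support, key=lambda i: abs(-math.log(probs[i]) - h))]
    # 4. Sample uniformly within the typical set.
    return rng.choice(typical), typical

probs = [0.5, 0.25, 0.125, 0.125]
print(typical_sample(probs, 0.3, random.Random(0)))  # (1, [1])
```

Here the entropy is about 1.21 nats, so with ε = 0.3 the most probable token (surprisal about 0.69 nats) falls outside the band and only the second token is typical.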

Contrastive Sampling and Energy-Based Models

This framework reconceptualizes language models as physical systems, where words have “energy” and generation follows principles similar to statistical mechanics.

Define an energy function E_θ(x, x<t) = -log Pθ(x|x<t).

Lower energy = higher probability, just like physical systems prefer low-energy states. But unlike physics, we don’t want to always go to the lowest energy (most probable) state.

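Contrastive sampling modifies the distribution:

$$\tilde{P}(x \mid x_{<t}) \propto \exp\Big(-\big(E_\theta(x, x_{<t}) - \alpha E_{\text{ref}}(x)\big)\Big)$$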

where E_ref represents a reference model (e.g., unigram frequencies) and α controls the contrastive strength:

- E_θ: What the neural model thinks

- E_ref: Baseline expectations (e.g., general word frequencies)

- The difference E_θ − αE_ref: How surprising is this word in context?

By contrasting against baseline frequencies, we penalize words that are probable just because they’re common, not because they fit the context. This breaks the “the the the” problem.
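Because E = −log P, the contrastive rule P̃(x) ∝ exp(−(E_θ − αE_ref)) is the same as P_θ(x) / P_ref(x)^α after renormalization. The sketch below applies it to hypothetical numbers: `model` stands in for P_θ over three candidate next tokens and `unigram` for reference frequencies. It is one simple instance of the formula above, not a specific published decoding method.

```python
def contrastive_distribution(model_probs, ref_probs, alpha):
    """P~(x) proportional to exp(-(E_theta - alpha * E_ref)) with E = -log P,
    which simplifies to P_theta(x) / P_ref(x) ** alpha before renormalizing."""
    scores = {x: model_probs[x] / ref_probs[x] ** alpha for x in model_probs}
    total = sum(scores.values())
    return {x: s / total for x, s in scores.items()}

# Hypothetical next-token probabilities after a prefix like "a bowl of"
model = {"the": 0.45, "soup": 0.35, "stew": 0.20}
# Hypothetical unigram frequencies playing the role of the reference model
unigram = {"the": 0.05, "soup": 0.0002, "stew": 0.0001}

for alpha in (0.0, 0.5):
    dist = contrastive_distribution(model, unigram, alpha)
    print(alpha, {x: round(p, 3) for x, p in dist.items()})
```

With α = 0.5 the generic token “the” falls from 45% to roughly 4%, while the content words keep most of the mass; α = 0 recovers the model's distribution unchanged.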

The Stochastic Parrot Paradox: A Mathematical Deconstruction

Formalizing “Parrot-like” Behavior

To rigorously analyze the “stochastic parrot” paradox, we must first formalize what constitutes parrot-like behavior. Define a parroting metric:

where G is a generation strategy, 𝒟 is the training data, and 𝒯 is a test set. High 𝒫 indicates direct copying from training data.

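For any language model f_θ:

$$\mathcal{P}(G_{\text{det}}; \mathcal{D}, \mathcal{T}) \ge \mathcal{P}(G_{\text{stoch}}; \mathcal{D}, \mathcal{T})$$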

where G_det is deterministic greedy decoding and G_stoch is any proper stochastic sampling method.

Deterministic decoding creates a many-to-one mapping from prompts to outputs, concentrating probability mass on training data modes. Stochastic sampling explores the probability simplex, reducing exact matches to training sequences.

The Irony of Deterministic Intelligence

The deeper irony emerges when we consider that deterministic systems — often associated with intelligence and reasoning — become the least intelligent when applied to language generation. Define an intelligence metric based on output diversity and novelty:

where H is entropy and β weights novelty versus diversity. Deterministic systems minimize 𝒯 by minimizing H and maximizing 𝒫.

The injection of calibrated randomness is necessary for intelligent behavior in autoregressive generation, transforming “deterministic parrots” into systems capable of creative expression.

Uff, we could continue with further deconstruction of the variance-bias trade-off, approximation error bounds, and entropy collapse in autoregressive generation. But let’s stop here. This should give readers a general feeling for why we need to introduce randomness into deterministic models.

The algorithms presented above are simplified. There are many more sophisticated ones, but they all build on the principles presented here.

Hallucinations

Before we conclude, we must address a critical concern: doesn’t randomness increase hallucination? The answer is a bit tricky, but stay with me.

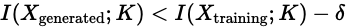

Mutual Information and Factuality

This mathematical framework reveals why models hallucinate and how randomness affects the truthfulness of generated text. Define the mutual information between generated text X and factual knowledge base K:

$$I(X;K) = \sum_{x,k} P(x,k) \log \frac{P(x,k)}{P(x)\,P(k)}$$

This measures how much knowing the text tells us about facts, and vice versa:

- High I(X;K): Strong connection between output and facts (truthful generation)

- Low I(X;K): Output disconnected from facts (potential hallucination)

- The measure is symmetric, I(X;K) = I(K;X), and the logarithm makes it additive across independent components
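Mutual information can be computed directly from a joint probability table. The sketch below uses two hypothetical joint distributions over (generated claim, true fact): one where the output always matches the fact, and one where the output is independent of it. The helper name `mutual_information` is our own.

```python
import math

def mutual_information(joint):
    """I(X;K) = sum_{x,k} P(x,k) log[P(x,k) / (P(x) P(k))], in nats.

    joint: dict mapping (x, k) pairs to probabilities summing to 1.
    """
    px, pk = {}, {}
    for (x, k), p in joint.items():  # marginals P(x) and P(k)
        px[x] = px.get(x, 0.0) + p
        pk[k] = pk.get(k, 0.0) + p
    return sum(p * math.log(p / (px[x] * pk[k]))
               for (x, k), p in joint.items() if p > 0)

# Hypothetical joint distributions over (generated claim, true fact)
faithful = {("Paris", "Paris"): 0.5, ("Rome", "Rome"): 0.5}
unfaithful = {("Paris", "Paris"): 0.25, ("Paris", "Rome"): 0.25,
              ("Rome", "Paris"): 0.25, ("Rome", "Rome"): 0.25}
print(mutual_information(faithful))    # log 2, about 0.693: output tracks the fact
print(mutual_information(unfaithful))  # 0.0: output independent of the fact
```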

Hallucination probability increases when:

$$I(X;K) < I(\mathcal{D};K) - \delta$$

for some threshold δ > 0.

This inequality says hallucination occurs when the model’s output contains less factual information than its training data by margin δ. It’s like a game of telephone where information is lost at each step.

Temperature’s role:

- Low T: Stays close to training patterns, maintaining I(X;K)

- High T: Ventures into regions with lower I(X;K), increasing hallucination risk

- The challenge: Finding T that allows creativity while preserving I(X;K) > threshold

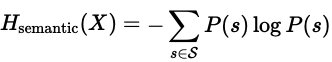

Semantic Entropy Formulation

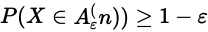

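Farquhar et al.’s breakthrough was realizing we should measure uncertainty in meaning, not just in words, a profound shift in how we understand hallucination. Following Farquhar et al. (2024), define semantic entropy:

$$SE(x) = -\sum_{s \in \mathcal{S}} P(s \mid x) \log P(s \mid x), \qquad P(s \mid x) = \sum_{y \in s} P(y \mid x)$$

where x is the input, y ranges over sampled answers, and 𝒮 represents the semantic equivalence classes of those answers. This provides a more robust uncertainty measure than token-level entropy.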

Two sentences can use different words but mean the same thing:

- “The capital of France is Paris”

- “Paris is France’s capital city”

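These are different token sequences with the same meaning, so splitting probability between them raises token-level entropy but leaves semantic entropy unchanged: both fall into one equivalence class.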

Why this matters for hallucination:

1. Token entropy might be high (model unsure about exact words)

2. Semantic entropy might be low (model confident about meaning)

3. Hallucination is likely when semantic entropy is high: the model doesn’t know what it’s trying to say

Mathematical example:

- Token distribution: {P(“dog”)=0.5, P(“canine”)=0.5} → High token entropy

- Semantic distribution: {P(“dog-meaning”)=1.0} → Zero semantic entropy

- Semantic entropy flags no hallucination risk despite word uncertainty!
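The same calculation in Python, as a sketch: answers are grouped into meaning classes before the entropy is taken. The `SYNONYMS` lookup table is a hypothetical stand-in for the equivalence function; Farquhar et al. (2024) cluster sampled answers by bidirectional entailment instead.

```python
import math

def entropy(probs):
    """Shannon entropy in nats of an iterable of probabilities."""
    return sum(p * math.log(1.0 / p) for p in probs if p > 0)

def token_entropy(answer_probs):
    """Entropy over distinct answer strings."""
    return entropy(answer_probs.values())

def semantic_entropy(answer_probs, meaning_of):
    """Entropy over meaning classes: probabilities of answers that share
    a meaning are summed before the entropy is taken."""
    class_probs = {}
    for answer, p in answer_probs.items():
        cls = meaning_of(answer)
        class_probs[cls] = class_probs.get(cls, 0.0) + p
    return entropy(class_probs.values())

# Hypothetical equivalence function standing in for entailment-based clustering.
SYNONYMS = {"dog": "DOG", "canine": "DOG", "cat": "CAT"}

answers = {"dog": 0.5, "canine": 0.5}
print(token_entropy(answers))                    # log 2, about 0.693
print(semantic_entropy(answers, SYNONYMS.get))   # 0.0: a single meaning
print(semantic_entropy({"dog": 0.5, "cat": 0.5}, SYNONYMS.get))  # log 2 again
```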

Conclusion

Without stochastic sampling, language models are deterministic parrots that exhibit:

- 100% repetition rate as t → ∞

- Zero entropy (complete predictability)

- Mechanical reproduction of training patterns

With calibrated randomness, we transform them into intelligent systems that show:

- Bounded repetition: R < ε

- Positive entropy: H > 0 (creative variation)

- Adaptive, context-appropriate generation

The “stochastic parrot” criticism is not just wrong — it’s backwards. Stochasticity is the mathematical antidote to parroting, not its cause. This isn’t opinion or implementation detail; it’s mathematical necessity proven through multiple independent frameworks: probability theory, information theory, linear algebra, and optimization theory all converge on the same conclusion.

The mathematical examples presented demonstrate that randomness in neural text generation addresses fundamental theoretical limitations rather than serving as a mere heuristic. The interplay between deterministic neural computation and stochastic sampling creates a sophisticated system that balances multiple objectives: avoiding degeneration, maintaining diversity, controlling hallucination, and producing high-quality text.

Perhaps most ironically, our analysis reveals that the “stochastic parrot” criticism fundamentally misunderstands the nature of these systems. Language models are not stochastic parrots — they are deterministic machines that we prevent from becoming parrots through stochastic intervention.

Without our intervention, these models would be like a brilliant student who memorized the textbook but can only recite it word-for-word. By adding controlled randomness, we transform them into thoughtful conversationalists who can draw from their knowledge in creative and contextually appropriate ways. Or do we?

The Library of Babel provides a perfect closing metaphor. In Borges’ infinite library, most books are gibberish, but hidden among them are all the works of genius that could ever be written. A deterministic system would always check out the same “most probable” book — likely one filled with common words repeated endlessly. Only by introducing randomness can we explore the vast library and occasionally discover something profound.

But wait: how do LLMs generalize, or develop other emergent capabilities…? Intrigued? You are not alone 🙂

References/Sources

Bender, E. M., Gebru, T., McMillan-Major, A., & Shmitchell, S. (2021). On the Dangers of Stochastic Parrots: Can Language Models Be Too Big? In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’21), 610–623.

Chiang, T.-R., & Chen, Y.-N. (2021). Relating Neural Text Degeneration to Exposure Bias. Proceedings of the Fourth BlackboxNLP Workshop on Analyzing and Interpreting Neural Networks for NLP, 228–239.

Church, A. (1936). An Unsolvable Problem of Elementary Number Theory. American Journal of Mathematics, 58(2), 345–363.

Eikema, B., & Aziz, W. (2020). Is MAP decoding all you need? The inadequacy of the mode in neural machine translation. Proceedings of the 28th International Conference on Computational Linguistics, 4506–4520.

Farquhar, S., Kossen, J., Kuhn, L., & Gal, Y. (2024). Detecting hallucinations in large language models using semantic entropy. Nature, 630, 625–630.

He, T., Zhang, J., Zhou, Z., & Glass, J. (2019). Quantifying Exposure Bias for Neural Language Generation. arXiv preprint arXiv:1905.10617.

Holtzman, A., Buys, J., Du, L., Forbes, M., & Choi, Y. (2019). The Curious Case of Neural Text Degeneration. arXiv preprint arXiv:1904.09751.

Lee, N., Bang, Y., Madotto, A., & Fung, P. (2022). Towards Few-shot Fact-Checking via Perplexity. Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 1971–1981.

Li, K., Hopkins, A. K., Bau, D., Viégas, F., Pfister, H., & Wattenberg, M. (2023). Inference-Time Intervention: Eliciting Truthful Answers from a Language Model. arXiv preprint arXiv:2306.03341.

Meister, C., Cotterell, R., & Vieira, T. (2022). On Decoding Strategies for Neural Text Generators. Transactions of the Association for Computational Linguistics, 10, 997–1012.

Renze, M., & Guven, E. (2024). The Effect of Sampling Temperature on Problem Solving in Large Language Models. arXiv preprint arXiv:2402.05201.

Schmidt, F. (2019). Generalization in Generation: A closer look at Exposure Bias. Proceedings of the 3rd Workshop on Neural Generation and Translation, 157–167.

Stahlberg, F., & Byrne, B. (2019). On NMT search errors and model errors: Cat got your tongue? Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing, 3356–3362.

Turing, A. M. (1936). On Computable Numbers, with an Application to the Entscheidungsproblem. Proceedings of the London Mathematical Society, s2–42(1), 230–265.

Welleck, S., Kulikov, I., Roller, S., Dinan, E., Cho, K., & Weston, J. (2019). Neural Text Generation with Unlikelihood Training. arXiv preprint arXiv:1908.04319.

Stiennon, N., Ouyang, L., Wu, J., Ziegler, D. M., Lowe, R., Voss, C., Radford, A., Amodei, D., & Christiano, P. (2020). Learning to summarize from human feedback. Advances in Neural Information Processing Systems, 33, 3008–3021.

Published via Towards AI

Note: Article content contains the views of the contributing authors and not Towards AI.