The Essential Guide to Model Evaluation Metrics for Classification

Author(s): Ayo Akinkugbe

Originally published on Towards AI.

Introduction

Classification is one of the most common machine learning tasks, where models predict discrete categories or classes. Examples include detecting fraud, diagnosing diseases, or filtering spam emails. To understand the true performance of such models, choosing the right evaluation metric is important. This post provides a comprehensive exploration of classification metrics, explaining each in easy-to-grasp terms with practical use cases.

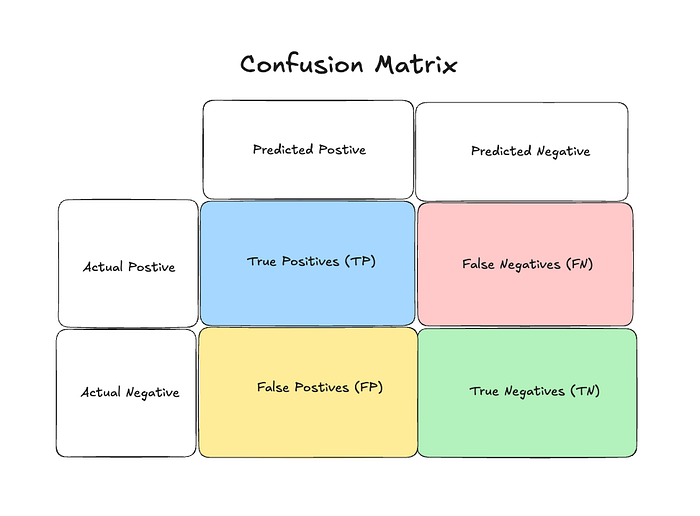

The Confusion Matrix: Foundation of Classification Metrics

The confusion matrix can be confusing at first, but it is worth understanding because most classification metrics are derived from it. It organizes predictions into four categories:

- True Positives (TP): Cases correctly predicted as positive

- False Positives (FP): Cases incorrectly predicted as positive (Type I error), i.e., the model predicts positive when the case is actually negative.

- True Negatives (TN): Cases correctly predicted as negative

- False Negatives (FN): Cases incorrectly predicted as negative (Type II error), i.e., the model predicts negative when the case is actually positive.

Different classification metrics emphasize different parts of this matrix.
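To make the four categories concrete, here is a minimal sketch that counts them for a binary problem. The function name `confusion_counts` and the label lists are made up for illustration, and labels are assumed to be encoded as 1 (positive) and 0 (negative).

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (TP, FP, TN, FN) for binary labels."""
    tp = fp = tn = fn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive:
            if actual == positive:
                tp += 1  # predicted positive, actually positive
            else:
                fp += 1  # predicted positive, actually negative (Type I error)
        else:
            if actual == positive:
                fn += 1  # predicted negative, actually positive (Type II error)
            else:
                tn += 1  # predicted negative, actually negative
    return tp, fp, tn, fn


# Made-up labels for illustration: 1 = positive, 0 = negative
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1, 0, 0, 1]

tp, fp, tn, fn = confusion_counts(y_true, y_pred)
print(f"TP={tp} FP={fp} TN={tn} FN={fn}")
```

Every metric in the rest of this post that is defined in terms of TP, FP, TN, and FN can be computed from these four counts.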

Accuracy

Accuracy measures the proportion of correct predictions (both true positives and true negatives) among the total number of predictions.

Accuracy = (True Positives + True Negatives) / (Total Predictions)

To put it simply, Accuracy tells you what percentage of your predictions were correct. If you made 100 predictions and got 85 right, your accuracy is 85%.

Case Study:

A company developed an email classification system to sort messages into “Important” and “Not Important” categories. Their model achieved 92% accuracy, meaning it correctly classified 92 out of every 100 emails.

When to Use:

- When classes are balanced (similar number of samples in each class). In our case study, the classes would be imbalanced if most emails were “Not Important”, say 92 out of 100. A naive model that always predicts “Not Important” would then still achieve 92% accuracy while failing to identify a single “Important” email.

- When false positives and false negatives have similar costs

- When you need a simple, intuitive metric for your stakeholders

Significance:

Accuracy is the most intuitive classification metric and is widely understood even by non-technical stakeholders. However, it can be misleading when classes are imbalanced or when certain types of errors are more costly than others.
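The imbalance caveat is easy to reproduce. The sketch below assumes a hypothetical inbox of 100 emails, 92 “Not Important” (encoded as 0) and 8 “Important” (encoded as 1), and scores a model that always predicts “Not Important”.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    correct = sum(1 for actual, predicted in zip(y_true, y_pred) if actual == predicted)
    return correct / len(y_true)


# Hypothetical inbox: 92 "Not Important" (0) and 8 "Important" (1) emails
y_true = [0] * 92 + [1] * 8
always_not_important = [0] * 100  # naive model ignores its input

naive_accuracy = accuracy(y_true, always_not_important)
important_found = sum(
    1 for actual, predicted in zip(y_true, always_not_important)
    if actual == 1 and predicted == 1
)

print(f"accuracy: {naive_accuracy:.2f}")
print(f"important emails found: {important_found}")
```

The accuracy looks healthy, yet the count of important emails found is zero, which is exactly the failure mode accuracy hides.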

Precision

Precision measures the proportion of positive identifications that were actually correct. It answers the question: “Of all instances predicted as positive, how many were actually positive?”

Precision = True Positives / (True Positives + False Positives)

For example, if your model identifies 100 emails as spam, and 90 of them are actually spam, your precision is 90%.
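As a quick check of the spam example, here is a small sketch of the formula. Returning 0.0 when the model makes no positive predictions is an assumed convention, not something the formula itself defines.

```python
def precision(tp, fp):
    """Precision = TP / (TP + FP)."""
    predicted_positive = tp + fp
    # Assumed convention: 0.0 when nothing was predicted positive
    return tp / predicted_positive if predicted_positive else 0.0


# 100 emails flagged as spam, 90 of them really are spam
spam_precision = precision(tp=90, fp=10)
print(f"precision: {spam_precision:.2f}")
```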

Case Study:

A content moderation system for a social media platform was designed to flag inappropriate content. With a precision of 95%, when the system flagged content as inappropriate, it was correct 95% of the time, i.e., of every 100 items flagged, 95 were actually inappropriate.

When to Use:

- When false positives are costly or problematic. In the case study, if a large amount of appropriate content is wrongly flagged, this can frustrate users, limit free expression, and damage trust in the platform, especially if the content is important or time-sensitive.

- When you want to be very confident in your positive predictions

- In information retrieval, recommendation systems, and content filtering

Significance:

Precision is crucial when the cost of false positives is high. For example, in spam detection, medical diagnostics, or legal document review, falsely identifying something as positive could have significant consequences.

Recall

Recall, also known as sensitivity, measures the proportion of actual positives that were correctly identified. It answers the question: “Of all actual positive instances, how many did we correctly identify?”

Recall = True Positives / (True Positives + False Negatives)

If there are 100 spam emails in your inbox, and your filter catches 85 of them, your recall is 85%.

Case Study:

A cancer screening test prioritized recall, achieving 98%. This meant that 98% of patients who actually had cancer received a positive test result, minimizing missed diagnoses. The high recall came at the cost of more false positives, but in this context, missing a cancer diagnosis was considered far worse than a false alarm.
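The trade-off described in the case study, higher recall bought with more false positives, can be sketched by moving the decision threshold on a model's scores. The scores and labels below are invented for illustration and `precision_recall_at` is a hypothetical helper.

```python
def precision_recall_at(y_true, scores, threshold):
    """Precision and recall when every score >= threshold is predicted positive."""
    tp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 1)
    fp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 0)
    fn = sum(1 for y, s in zip(y_true, scores) if s < threshold and y == 1)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Invented data: 4 positives, 6 negatives
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.6, 0.3, 0.7, 0.4, 0.35, 0.2, 0.1, 0.05]

strict = precision_recall_at(y_true, scores, threshold=0.5)
lenient = precision_recall_at(y_true, scores, threshold=0.25)

print(f"threshold 0.50 -> precision {strict[0]:.2f}, recall {strict[1]:.2f}")
print(f"threshold 0.25 -> precision {lenient[0]:.2f}, recall {lenient[1]:.2f}")
```

Lowering the threshold catches every positive in this toy data, but precision drops because more negatives are flagged along the way.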

When to Use:

- When false negatives are costly or dangerous

- When finding all positive instances is critical

- In medical screening, fraud detection, and safety systems

Significance:

Recall is essential when missing a positive case has serious consequences. In medical diagnostics, security applications, and predictive maintenance, high recall ensures that critical cases aren’t missed, even if it means more false alarms.

Specificity (True Negative Rate)

Specificity, also known as the True Negative Rate, measures the proportion of actual negatives that are correctly identified as such. It quantifies a model’s ability to correctly identify negative cases.

Specificity = True Negatives / (True Negatives + False Positives)

Specificity tells you what percentage of the negative cases a model correctly identified as negative. For example, if there are 100 healthy patients and a medical test correctly identifies 95 of them as healthy, the test’s specificity is 95%.

Case Study:

A hospital implemented an AI system to screen chest X-rays for signs of pneumonia in the emergency department. While the system had high sensitivity (98%), detecting almost all pneumonia cases, initial testing revealed a specificity of only 72%, meaning 28% of healthy patients were incorrectly flagged as potentially having pneumonia. This led to unnecessary additional testing, increased patient anxiety, and resource strain on the radiology department. After retraining the model with a more balanced dataset and implementing a specialized feature extraction network for distinguishing between pneumonia and similar-appearing conditions (like atelectasis and pulmonary edema), they improved specificity to 94% while maintaining high sensitivity at 96%. This improvement reduced false positive alerts by 79%, allowing radiologists to focus on true positive cases and reducing unnecessary follow-up procedures. The hospital estimated this saved approximately $1.2 million annually in avoided tests while improving patient experience and reducing radiologist workload.
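The 79% figure follows directly from the two specificity values. The sketch below assumes a hypothetical group of 1,000 healthy patients; only the 72% and 94% specificities come from the case study.

```python
def specificity(tn, fp):
    """Specificity = TN / (TN + FP)."""
    return tn / (tn + fp) if (tn + fp) else 0.0


# Hypothetical group of 1,000 healthy patients (the count is an assumption)
tn_before, fp_before = 720, 280  # 72% specificity
tn_after, fp_after = 940, 60     # 94% specificity

spec_before = specificity(tn_before, fp_before)
spec_after = specificity(tn_after, fp_after)

# Relative reduction in false positive alerts
fp_reduction = (fp_before - fp_after) / fp_before

print(f"specificity: {spec_before:.2f} -> {spec_after:.2f}")
print(f"false positives reduced by {fp_reduction:.0%}")
```

Because the false positive rate is 1 minus specificity, moving from 28% to 6% removes roughly 79% of the false alarms regardless of how many healthy patients are screened.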

When to Use:

- When the cost of false positives is high

- When resources for follow-up testing or treatment are limited

- When testing a large population with a low prevalence of the condition

- When false alarms would cause significant harm or disruption

- In screening contexts where further testing is expensive, invasive, or risky

- When you need to minimize unnecessary interventions

Significance:

Specificity complements sensitivity (recall) to provide a complete picture of a model’s discriminative ability. While sensitivity focuses on finding all positive cases, specificity ensures that negative cases are correctly identified. The relative importance of these metrics depends on the specific application context and the comparative costs of false positives versus false negatives.

In many real-world applications, the optimal balance between sensitivity and specificity is determined by ROC curve analysis (reviewed later in this post), which plots the trade-off between these metrics at different classification thresholds, allowing stakeholders to select the operating point that best meets their particular needs.

Balanced Accuracy Score

Balanced accuracy is the average of recall obtained on each class. It addresses the problem of imbalanced datasets by giving equal weight to the performance on each class.

Balanced Accuracy = (Sensitivity + Specificity) / 2

= ((True Positives / (True Positives + False Negatives)) + (True Negatives / (True Negatives + False Positives))) / 2

Balanced accuracy tells us how well our model performs across all classes, regardless of how many samples each class has. It’s like taking the average of performance on each class.

Case Study:

A medical diagnostic model for a rare disease (affecting only 1% of patients) achieved 99% accuracy by simply predicting “no disease” for every patient. However, its balanced accuracy was only 50%, revealing that the model had no predictive power for the minority class.
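The numbers in this case study can be reproduced with a few lines. The sketch assumes a hypothetical cohort of 1,000 patients with 1% prevalence and a model that predicts “no disease” (0) for everyone.

```python
# Hypothetical cohort: 10 patients with the disease (1), 990 without (0)
y_true = [1] * 10 + [0] * 990
y_pred = [0] * 1000  # model always predicts "no disease"

tp = sum(1 for a, p in zip(y_true, y_pred) if a == 1 and p == 1)
fn = sum(1 for a, p in zip(y_true, y_pred) if a == 1 and p == 0)
tn = sum(1 for a, p in zip(y_true, y_pred) if a == 0 and p == 0)
fp = sum(1 for a, p in zip(y_true, y_pred) if a == 0 and p == 1)

accuracy = (tp + tn) / len(y_true)
sensitivity = tp / (tp + fn)  # recall on the positive class
specificity = tn / (tn + fp)  # recall on the negative class
balanced_accuracy = (sensitivity + specificity) / 2

print(f"accuracy: {accuracy:.2f}")
print(f"balanced accuracy: {balanced_accuracy:.2f}")
```

Sensitivity is 0 and specificity is 1, so their average lands at 0.5, the same score a coin flip would earn.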

When to Use:

- When your classes are imbalanced

- When you care equally about performance on all classes

- When standard accuracy would be misleading

Significance:

Balanced accuracy prevents models from appearing artificially good by simply predicting the majority class. It’s particularly important in medical diagnostics, fraud detection, and other domains where the class of interest is rare but important.

F1 Score

The F1 score is the harmonic mean of precision and recall, providing a single metric that balances the two.

F1 = 2 × (Precision × Recall) / (Precision + Recall)

F1 score gives you a single number that represents how well your model balances making correct positive predictions and finding all positive cases.
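One way to see why the harmonic mean is used is to compare it with a plain average when precision and recall are far apart. The values 0.9 and 0.1 below are invented for illustration.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0  # assumed convention when both are zero
    return 2 * precision * recall / (precision + recall)


# Invented values: very precise model that misses most positives
precision, recall = 0.9, 0.1

arithmetic_mean = (precision + recall) / 2
f1 = f1_score(precision, recall)

print(f"arithmetic mean: {arithmetic_mean:.2f}")
print(f"F1 score:        {f1:.2f}")
```

The arithmetic mean suggests a mediocre model, while F1 stays close to the weaker of the two numbers, so a model cannot hide poor recall behind high precision (or the reverse).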

Case Study:

A fraud detection system at a financial institution used F1 score for evaluation because they needed to balance two concerns: minimizing false fraud alerts (precision) while still catching as many actual fraud cases as possible (recall). Their model achieved an F1 score of 0.82, indicating a good balance between these competing objectives.

When to Use:

- When you need to balance precision and recall

- When both false positives and false negatives have costs

- When you need a single metric for model comparison

- When classes are imbalanced

Significance:

F1 score is widely used in research and production because it provides a balanced assessment that considers both precision and recall. It’s particularly valuable when working with imbalanced datasets where accuracy might be misleading.

F-beta Score

F-beta is a generalization of the F1 score that allows you to give more weight to either precision or recall.

F-beta = (1 + beta²) × (Precision × Recall) / ((beta² × Precision) + Recall)

Where beta is a positive real number.

F-beta lets you customize how much you care about precision versus recall. If beta is greater than 1, recall is more important; if beta is less than 1, precision is more important. When beta equals 1, the formula reduces to the F1 score.
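The effect of beta can be sketched with a model whose recall is higher than its precision. The values 0.5 and 0.8 are invented for illustration.

```python
def fbeta_score(precision, recall, beta):
    """F-beta = (1 + beta^2) * P * R / (beta^2 * P + R)."""
    denominator = beta**2 * precision + recall
    if denominator == 0:
        return 0.0  # assumed convention when precision and recall are both zero
    return (1 + beta**2) * precision * recall / denominator


# Invented values: recall is the stronger of the two
precision, recall = 0.5, 0.8

f_half = fbeta_score(precision, recall, beta=0.5)  # leans toward precision
f1 = fbeta_score(precision, recall, beta=1.0)      # equal weight
f2 = fbeta_score(precision, recall, beta=2.0)      # leans toward recall

print(f"F0.5={f_half:.3f}  F1={f1:.3f}  F2={f2:.3f}")
```

Since recall is the higher value here, the score rises as beta increases and falls as beta decreases.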

Case Study:

A legal document review system used an F2 score (beta=2) to evaluate performance, giving recall twice the importance of precision. This reflected the business reality that missing relevant documents (false negatives) was more problematic than including some irrelevant ones (false positives).

When to Use:

- When either precision or recall is more important than the other

- When you can quantify the relative importance of these metrics

- When you need a single metric that reflects your specific business priorities

Significance:

F-beta allows for customization based on domain-specific needs, making it more flexible than F1. Common choices include F2 (emphasizing recall) and F0.5 (emphasizing precision).

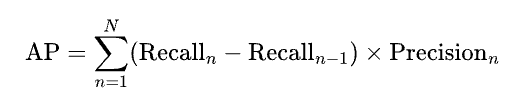

Average Precision Score

Average Precision (AP) summarizes a precision-recall curve as the weighted mean of precisions achieved at each threshold, with the increase in recall from the previous threshold used as the weight.

AP = Σ (R_n - R_{n-1}) × P_n, summed over n

Where P_n and R_n are the precision and recall at the n-th threshold. Average Precision tells you how well your model ranks positive instances above negative ones, considering all possible classification thresholds.
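The weighted sum can be sketched by walking down the ranking from the highest score to the lowest and treating each position as a threshold. This is a simplified illustration that assumes no tied scores; the data is invented.

```python
def average_precision(y_true, scores):
    """Sum of precision at each rank, weighted by the increase in recall.

    Simplified sketch: assumes binary labels (0/1) and no tied scores.
    """
    ranked = sorted(zip(scores, y_true), key=lambda pair: pair[0], reverse=True)
    total_positives = sum(y_true)
    true_positives = 0
    previous_recall = 0.0
    ap = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        true_positives += label
        precision = true_positives / rank
        recall = true_positives / total_positives
        ap += (recall - previous_recall) * precision
        previous_recall = recall
    return ap


# Invented ranking: positives sit at ranks 1, 3, and 4
y_true = [1, 0, 1, 1, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.5]

ap = average_precision(y_true, scores)
print(f"average precision: {ap:.3f}")
```

Recall only increases at positions holding a positive, so the result is the mean of the precision values at ranks 1, 3, and 4.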

Case Study:

A search engine evaluated its ranking algorithm using Average Precision. With an AP of 0.92, the system demonstrated excellent ability to rank relevant results higher than irrelevant ones, regardless of the specific threshold chosen to determine what constitutes a “match.”

When to Use:

- When you’re interested in rankings rather than binary classifications

- When you need to evaluate performance across all possible thresholds

- In information retrieval, search engines, and recommendation systems

Significance:

Average Precision is particularly valuable in ranking problems and when the threshold for classification might vary. It’s a core component of the popular Mean Average Precision (MAP) metric used in information retrieval and object detection.

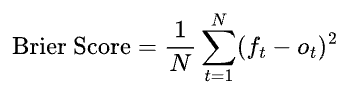

Brier Score Loss

Often, classification models are built to output probabilities rather than just class labels. The Brier Score measures the mean squared difference between predicted probabilities and actual outcomes. Lower values indicate better calibrated probabilities.

Brier Score = (1/N) × Σ (f_t - o_t)², summed over t = 1 to N

Where:

- N is the number of predictions

- f_t is the predicted probability for instance t

- o_t is the actual outcome (0 or 1) for instance t

Brier Score tells you how well your predicted probabilities match the actual outcomes. If you predict a 70% chance of rain and it rains 7 out of 10 times when you make that prediction, your probabilities are well-calibrated.
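A minimal sketch of the calculation, using invented forecasts. For comparison it also scores an uninformative forecaster that always says 50%.

```python
def brier_score(outcomes, probabilities):
    """Mean squared difference between predicted probabilities and 0/1 outcomes."""
    squared_errors = [(f - o) ** 2 for o, f in zip(outcomes, probabilities)]
    return sum(squared_errors) / len(squared_errors)


# Invented data: 1 = it rained, 0 = it did not
outcomes = [1, 0, 1, 1]
forecast = [0.9, 0.2, 0.7, 0.4]
coin_flip = [0.5, 0.5, 0.5, 0.5]

model_score = brier_score(outcomes, forecast)
baseline_score = brier_score(outcomes, coin_flip)

print(f"model:    {model_score:.3f}")
print(f"always 50%: {baseline_score:.3f}")
```

A forecaster that always predicts 50% scores 0.25 no matter what happens, which gives a rough reference point for judging other scores.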

Case Study:

A weather forecasting service used Brier Score to evaluate their precipitation predictions. With a score of 0.12 (on a scale from 0 to 1, where lower is better), they demonstrated well-calibrated probability estimates that correctly reflected the actual frequency of rain.

When to Use:

- When you care about probability calibration, not just binary outcomes

- When making decisions based on risk assessments

- In weather forecasting, sports predictions, and risk modeling

Significance:

Brier Score is unique in that it evaluates the quality of predicted probabilities rather than just binary classifications. Well-calibrated probabilities are important for risk assessment, decision-making under uncertainty, and communicating confidence levels.

ROC AUC Score

The Receiver Operating Characteristic Area Under Curve (ROC AUC) measures the area under the curve created by plotting the true positive rate against the false positive rate at various threshold settings.

The formula involves integrating the ROC curve, but conceptually:

ROC AUC = P(score(positive instance) > score(negative instance))

ROC AUC tells you the probability that your model will rank a randomly chosen positive instance higher than a randomly chosen negative instance. A perfect model has an AUC of 1, while a model that guesses randomly has an AUC of 0.5.
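The probabilistic reading can be computed directly by comparing every positive with every negative. The sketch below counts ties as half a win, a common convention that is assumed here; the scores are invented.

```python
def roc_auc(y_true, scores):
    """Fraction of (positive, negative) pairs where the positive scores higher.

    Ties count as half a win (assumed convention). Quadratic in the number of
    samples, so this is for illustration rather than large datasets.
    """
    positives = [s for y, s in zip(y_true, scores) if y == 1]
    negatives = [s for y, s in zip(y_true, scores) if y == 0]
    wins = 0.0
    for pos_score in positives:
        for neg_score in negatives:
            if pos_score > neg_score:
                wins += 1.0
            elif pos_score == neg_score:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Invented scores: 3 positives, 4 negatives
y_true = [1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.35, 0.7, 0.4, 0.3, 0.1]

auc = roc_auc(y_true, scores)
print(f"ROC AUC: {auc:.3f}")
```

Of the 12 positive-negative pairs, the positive is ranked higher in 10, which gives the printed value.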

Case Study:

A credit scoring model achieved an ROC AUC of 0.92. This meant that 92% of the time, the model assigned a higher credit risk score to a customer who defaulted than to one who didn’t default. This demonstrated strong discriminative ability regardless of the specific threshold chosen to approve or deny credit.

When to Use:

- When you want to evaluate model performance independent of any specific classification threshold

- When class distributions may change between training and deployment

- When you need to compare models at a high level

Significance:

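ROC AUC is one of the most popular classification metrics because it evaluates performance across all possible thresholds and does not depend on the class ratio in the way accuracy does. On heavily imbalanced data it can still look optimistic, since the false positive rate stays small when negatives are plentiful, so precision-recall based metrics such as Average Precision are a useful complement. It’s particularly valuable when the optimal classification threshold isn’t known in advance or might change over time.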

Jaccard Score

The Jaccard score (or Jaccard similarity coefficient) measures the similarity between predicted and actual label sets as the size of their intersection divided by the size of their union.

Jaccard Score = |A ∩ B| / |A ∪ B|

= True Positives / (True Positives + False Positives + False Negatives)

Jaccard score tells you what proportion of the combined positive predictions and actual positives were correctly identified. It ignores true negatives.
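Both forms of the formula give the same number, which the following sketch checks on invented species lists (the species names are placeholders).

```python
# Invented survey: species actually present vs. species the model predicted
actual = {"oak", "pine", "birch", "maple"}
predicted = {"oak", "pine", "elm"}

# Set form: |A ∩ B| / |A ∪ B|
jaccard_sets = len(actual & predicted) / len(actual | predicted)

# Confusion-matrix form: TP / (TP + FP + FN)
tp = len(actual & predicted)  # predicted and present
fp = len(predicted - actual)  # predicted but absent
fn = len(actual - predicted)  # present but missed
jaccard_counts = tp / (tp + fp + fn)

print(f"set form:   {jaccard_sets:.2f}")
print(f"count form: {jaccard_counts:.2f}")
```

Species that are absent and correctly left out (true negatives) never enter either calculation.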

Case Study:

A species identification model for ecological surveys used the Jaccard score to evaluate performance. With a score of 0.78, the model demonstrated good ability to identify which species were present in an area, without being unduly influenced by the large number of species correctly identified as absent (true negatives).

When to Use:

- When true negatives are not relevant or are overwhelmingly common

- In multi-label classification problems

- When evaluating the similarity between sets

- In document classification, image segmentation, and ecological presence/absence studies

Significance:

Jaccard score is particularly valuable in multi-label classification and when the number of true negatives is very large or not meaningful. It focuses on the model’s ability to find the positive class without being influenced by the often large number of true negatives.

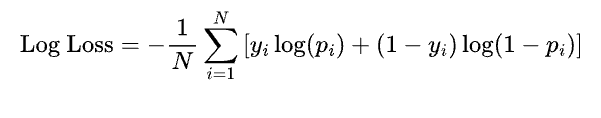

Log Loss

Log Loss (or cross-entropy loss) measures the performance of a classification model where the prediction is a probability value between 0 and 1. It increases as the predicted probability diverges from the actual label.

Log Loss = -(1/N) × Σ [y_i × log(p_i) + (1 - y_i) × log(1 - p_i)], summed over i = 1 to N

Where:

- N = total number of samples

- y_i = true label (0 or 1)

- p_i = predicted probability that y_i = 1

Log Loss heavily penalizes confident but wrong predictions. If you’re 99% confident in a prediction that turns out to be wrong, you’ll be penalized much more than if you were only 51% confident.
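The size of that penalty can be checked with a short sketch. Clipping probabilities away from exactly 0 and 1 is a common safeguard against taking log(0); the `eps` value used here is an assumption.

```python
import math


def log_loss(y_true, probabilities, eps=1e-15):
    """Binary cross-entropy using the natural logarithm."""
    total = 0.0
    for y, p in zip(y_true, probabilities):
        p = min(max(p, eps), 1 - eps)  # avoid log(0); eps is an assumed choice
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(y_true)


# The true label is 0 in both cases, so both predictions are wrong
confident_wrong = log_loss([0], [0.99])  # 99% sure of class 1
hesitant_wrong = log_loss([0], [0.51])   # 51% sure of class 1

print(f"99% confident and wrong: {confident_wrong:.3f}")
print(f"51% confident and wrong: {hesitant_wrong:.3f}")
```

The confident mistake costs several times more than the hesitant one, even though both would count as a single error under accuracy.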

Case Study:

A medical diagnostic algorithm was evaluated using Log Loss because confidence calibration was critical. With a Log Loss of 0.18, the model demonstrated well-calibrated probability estimates, providing doctors with reliable confidence levels for diagnoses.

When to Use:

- When you need well-calibrated probability outputs

- When confident but wrong predictions are particularly problematic

- In risk-sensitive applications

- When training models with gradient-based methods

Significance:

Log Loss is often used as the loss function during training of many classification algorithms. It provides a more nuanced view of model performance than accuracy by considering the confidence of predictions. It’s particularly valuable when the probability estimates themselves will be used for decision-making.

D² Log Loss Score

D² Log Loss Score measures the relative improvement in log loss compared to a baseline model that predicts the class probabilities based on the overall class distribution.

D² = 1 - (Log Loss of Model / Log Loss of Baseline)

D² Log Loss tells you how much better your model’s probability predictions are compared to simply guessing based on the overall frequency of each class.
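A minimal sketch of the comparison, assuming the baseline predicts the observed positive rate for every sample. The labels and probabilities are invented.

```python
import math


def log_loss(y_true, probabilities):
    """Binary cross-entropy (assumes probabilities strictly between 0 and 1)."""
    total = 0.0
    for y, p in zip(y_true, probabilities):
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(y_true)


def d2_log_loss(y_true, probabilities):
    """1 - (model log loss / baseline log loss)."""
    base_rate = sum(y_true) / len(y_true)  # baseline: same probability for everyone
    baseline = log_loss(y_true, [base_rate] * len(y_true))
    return 1 - log_loss(y_true, probabilities) / baseline


# Invented churn data: 1 = churned
y_true = [1, 0, 0, 0]
model_probs = [0.8, 0.1, 0.2, 0.1]

d2_model = d2_log_loss(y_true, model_probs)
d2_baseline = d2_log_loss(y_true, [0.25] * 4)

print(f"model D2:    {d2_model:.3f}")
print(f"baseline D2: {d2_baseline:.3f}")
```

The baseline scores 0 against itself by construction, a perfect model would approach 1, and a model worse than the baseline goes negative.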

Case Study:

A customer churn prediction model achieved a D² Log Loss Score of 0.42, indicating that it reduced the uncertainty about customer churn by 42% compared to simply using the overall churn rate as the prediction for every customer.

When to Use:

- When you want to measure improvement over a naive baseline

- When working with imbalanced datasets

- When you need a normalized metric that’s easier to interpret than raw Log Loss

Significance:

D² Log Loss provides context to the raw Log Loss value by comparing it to a baseline. This makes it easier to interpret across different datasets and problems. It’s particularly useful for communicating the value of a model to non-technical stakeholders.

P4-metric

The P4-metric is the harmonic mean of four key probabilities derived from the confusion matrix: precision (positive predictive value), recall (sensitivity), specificity, and negative predictive value. It was proposed as an extension of the F1 score that also accounts for true negatives, which makes it useful for imbalanced datasets.

P4 = 4 / (1/Precision + 1/Recall + 1/Specificity + 1/Negative Predictive Value)

= (4 × TP × TN) / (4 × TP × TN + (TP + TN) × (FP + FN))

P4 gives you a single number between 0 and 1 that is high only when all four quantities are high, so a model cannot score well by doing well on one class alone.
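The sketch below assumes the usual definition of P4 as the harmonic mean of precision, recall, specificity, and negative predictive value, and checks it against the equivalent closed form in terms of confusion-matrix counts. The counts are invented, and returning 0.0 when TP or TN is zero is an assumed convention.

```python
def p4_metric(tp, fp, tn, fn):
    """Harmonic mean of precision, recall, specificity, and NPV."""
    if tp == 0 or tn == 0:
        return 0.0  # assumed convention: any of the four being zero gives zero
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    specificity = tn / (tn + fp)
    npv = tn / (tn + fn)
    return 4 / (1 / precision + 1 / recall + 1 / specificity + 1 / npv)


# Invented intrusion-detection counts: intrusions are rare
tp, fp, tn, fn = 20, 10, 960, 10

p4 = p4_metric(tp, fp, tn, fn)
closed_form = (4 * tp * tn) / (4 * tp * tn + (tp + tn) * (fp + fn))

print(f"P4 (harmonic mean): {p4:.4f}")
print(f"P4 (closed form):   {closed_form:.4f}")
```

Specificity and negative predictive value are both near 0.99 in this toy data, yet P4 is pulled down toward the weaker precision and recall, which is the behaviour a harmonic mean is chosen for.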

Case Study:

A cybersecurity team evaluating intrusion detection systems used the P4-metric because they needed to balance detecting actual intrusions (recall) with minimizing false alarms (precision) in a highly imbalanced dataset where intrusions were rare.

When to Use:

- When dealing with highly imbalanced datasets

- When you need a comprehensive metric that considers multiple aspects of performance

- When performance on both the positive and the negative class matters

Significance:

The P4-metric addresses some of the limitations of other metrics for imbalanced datasets. Because it also accounts for true negatives, it provides a more holistic view than metrics like F1 score, especially when the positive class is rare but important.

Cohen’s Kappa

Cohen’s Kappa measures agreement between two raters (or a model and the ground truth), taking into account the agreement that would happen by chance.

κ = (po - pe) / (1 - pe)

Where po is the observed agreement and pe is the expected agreement by chance. Kappa tells you how much better your model is than random guessing, accounting for the class distribution. A kappa of 0 means your model is no better than random, while 1 means perfect agreement.
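For a binary problem, both agreement terms can be read off the confusion matrix. The sketch below uses invented counts; `cohens_kappa` is an illustrative helper, and returning 0.0 when chance agreement is already 1 is an assumed convention.

```python
def cohens_kappa(tp, fp, fn, tn):
    """Cohen's kappa for a 2x2 confusion matrix."""
    n = tp + fp + fn + tn
    p_observed = (tp + tn) / n
    # Chance agreement: both say positive by chance + both say negative by chance
    p_expected = ((tp + fp) * (tp + fn) + (tn + fn) * (tn + fp)) / (n * n)
    if p_expected == 1:
        return 0.0  # assumed convention when chance agreement is already total
    return (p_observed - p_expected) / (1 - p_expected)


# Invented counts for 100 reviews
tp, fp, fn, tn = 40, 10, 5, 45

kappa = cohens_kappa(tp, fp, fn, tn)
print(f"observed agreement: {(tp + tn) / 100:.2f}")
print(f"kappa: {kappa:.2f}")
```

Observed agreement is 0.85, but half of that would be expected by chance with these marginals, so kappa comes out lower than the raw agreement.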

Case Study:

A sentiment analysis model for customer reviews achieved a Cohen’s Kappa of 0.78 when compared to human annotators. This indicated substantial agreement beyond what would be expected by chance, suggesting the model was capturing genuine sentiment patterns rather than simply predicting the most common sentiment category.

When to Use:

- When you want to account for agreement that would occur by chance

- When comparing model performance to human raters

- When class distributions are skewed

- In inter-rater reliability studies and model evaluation

Significance:

Cohen’s Kappa provides a more robust measure than simple accuracy by accounting for the expected agreement due to chance. This is particularly important when classes are imbalanced or when evaluating agreement between different raters (human or algorithmic). Values are typically interpreted as:

- < 0: Worse than random

- 0.01–0.20: Slight agreement

- 0.21–0.40: Fair agreement

- 0.41–0.60: Moderate agreement

- 0.61–0.80: Substantial agreement

- 0.81–1.00: Almost perfect agreement

Phi Coefficient

The Phi Coefficient (also known as the Matthews Correlation Coefficient or MCC) measures the correlation between predicted and actual classifications. It returns a value between -1 and +1.

Phi = (TP × TN - FP × FN) / √[(TP + FP)(TP + FN)(TN + FP)(TN + FN)]

Where TP = True Positives, TN = True Negatives, FP = False Positives, FN = False Negatives.

Phi tells you how well your predictions correlate with the actual values, taking into account all four confusion matrix categories. A value of +1 represents perfect prediction, 0 represents random prediction, and -1 represents perfect disagreement.
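A minimal sketch of the formula with invented counts. Returning 0.0 when the denominator is zero (for example, when the model predicts only one class) is a common convention that is assumed here.

```python
import math


def phi_coefficient(tp, tn, fp, fn):
    """Phi / Matthews correlation coefficient from confusion-matrix counts."""
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denominator == 0:
        return 0.0  # assumed convention when a row or column of the matrix is empty
    return (tp * tn - fp * fn) / denominator


# Invented counts for a reasonably good classifier
good_model = phi_coefficient(tp=40, tn=45, fp=10, fn=5)

# A model that predicts "negative" for everything on imbalanced data
always_negative = phi_coefficient(tp=0, tn=990, fp=0, fn=10)

print(f"good model:      {good_model:.3f}")
print(f"always negative: {always_negative:.3f}")
```

The always-negative model would reach 99% accuracy on that data, yet its Phi is 0, matching the "no better than random" reading.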

Case Study:

A genomics research team used the Phi Coefficient to evaluate a gene function prediction model. With highly imbalanced data (few genes with the target function), traditional metrics were misleading. The model achieved a Phi Coefficient of 0.62, indicating good predictive power despite the imbalance.

When to Use:

- When you have imbalanced classes

- When all four confusion matrix categories (TP, TN, FP, FN) are meaningful

- When you need a single, balanced measure of classification performance

- In binary classification problems where both classes are equally important

Significance:

The Phi Coefficient is considered one of the most balanced measures of binary classification performance. Unlike F1 or precision/recall, it uses all four confusion matrix categories, and unlike accuracy, it is resistant to imbalanced datasets. It’s particularly valuable in computational biology, medical diagnostics, and other fields with naturally imbalanced classes.

Conclusion

Classification metrics are essential tools for evaluating and comparing model performance. The appropriate choice depends on your specific problem context, data characteristics, and business objectives. By understanding the strengths, weaknesses, and appropriate use cases for each metric, you can make more informed decisions about how to evaluate and improve your classification models.

It’s often valuable to consider multiple metrics simultaneously, as they can provide complementary insights into a model’s performance. A model that performs well across several relevant metrics is likely to be more robust and useful in real-world applications.

Which of these classification metrics have you used? Which are new to you? Share your thoughts in the comments.

Published via Towards AI
