Agent Control Patterns — Part 2: Reflection — A Simple Way to Improve Answer Quality

Last Updated on March 4, 2026 by Editorial Team

Author(s): Vahe Sahakyan

Originally published on Towards AI.

An agent can execute a workflow correctly and still produce a weak answer.

It may follow the graph, respect routing rules, and stop exactly where it should — yet return a response that lacks depth or misses important reasoning steps.

The output might be clear and well-structured, but incomplete. It may sound confident while relying on unstated assumptions.

Execution only tells us that the system followed the process.

It does not guarantee that the answer is good.

Introduction — Acting Correctly Isn’t the Same as Improving the Answer

In Part 1, we looked at how control patterns shape how an agent executes. Sequential flows, routing, parallelization, and orchestration determine where work goes, how tasks are split, and when the system stops.

But correct execution does not guarantee a good answer.

An agent can follow the workflow exactly as designed and still return a weak result. It may satisfy routing rules, reach the stop condition, and produce a clear response — yet miss key reasoning steps, rely on unstated assumptions, or lack depth.

This is the difference between execution and improvement.

Execution checks whether the task was completed.

Improvement asks whether the result is actually good.

Most LLM systems operate in a single pass: generate once, return the result, stop. If the output looks acceptable, the process ends. But many real-world tasks — analysis, writing, explanation, design — benefit from revision.

To improve quality, generation and evaluation must be separated.

One step produces an answer.

Another step reviews it.

The feedback guides revision.

This idea is similar in spirit to generator–discriminator setups in GANs — not in how models are trained, but in separating creation from evaluation. No weights change. No learning happens. The improvement occurs within the reasoning process.

When generation and evaluation happen in the same step, improvement tends to be inconsistent.

When they are separated, improvement becomes more reliable.

In Part 1, we changed how execution flows through a system.

In this article, we change how answers are evaluated before they are accepted.

That is the focus of reflection.

What Is Reflection?

Reflection separates generation from evaluation so a system can improve its own answer within a single interaction.

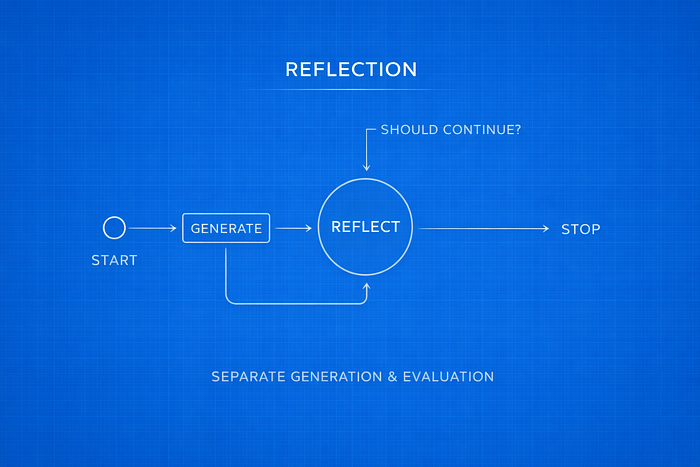

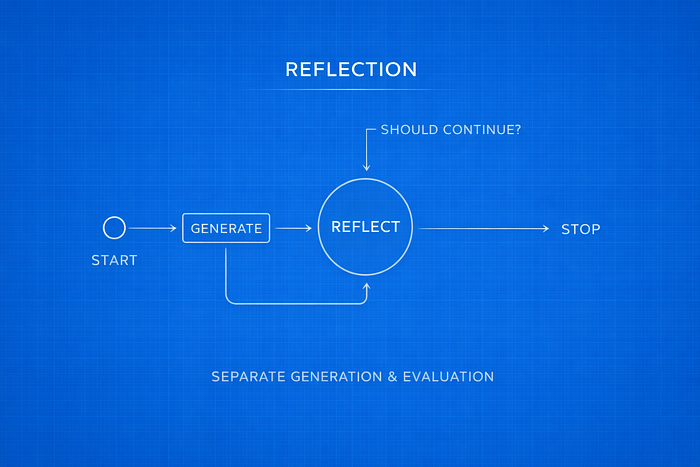

At a high level, the process looks like this:

Generate → Critique → Revise → Stop

The system starts by generating a response. A critic then reviews it using clear criteria, and the system revises the answer based on that feedback. This process continues until a stop condition is met.
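The following minimal Python sketch illustrates this cycle outside any framework. The callables `generate`, `critique`, and `is_good_enough` are hypothetical stand-ins for model calls, and `max_rounds` is an assumed safety cap. It shows the general pattern only. It is not the implementation used later in this article.

```python
from typing import Callable, List, Optional, Tuple


def reflection_loop(
    task: str,
    generate: Callable[[str, Optional[str], Optional[str]], str],
    critique: Callable[[str, str], str],
    is_good_enough: Callable[[str], bool],
    max_rounds: int = 3,
) -> Tuple[str, List[str]]:
    """Generate -> Critique -> Revise -> Stop, with a hard cap on revisions."""
    draft = generate(task, None, None)  # first draft, no feedback yet
    history = [draft]
    for _ in range(max_rounds):
        feedback = critique(task, draft)  # critic reviews; it does not rewrite
        if is_good_enough(feedback):  # stop: critic found nothing to fix
            break
        draft = generate(task, draft, feedback)  # revise using the feedback
        history.append(draft)
    return draft, history


# Toy stand-ins for model calls (hypothetical, for illustration only)
def toy_generate(task: str, previous: Optional[str], feedback: Optional[str]) -> str:
    if previous is None:
        return "Overfitting is when a model memorizes its training data."
    return previous + " Example: high training accuracy, low test accuracy."


def toy_critique(task: str, draft: str) -> str:
    return "OK" if "Example:" in draft else "Add a concrete example."


final, drafts = reflection_loop(
    "Explain overfitting", toy_generate, toy_critique, lambda fb: fb == "OK"
)
print(len(drafts))  # 2: the first draft plus one revision
```

The cap matters as much as the quality check. If the critic never approves, the loop still ends after `max_rounds` revisions.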

The model itself does not change. There is no training, no weight update, and nothing new is stored in memory. The improvement happens entirely within the reasoning process of that interaction.

This is not learning. It is structured review.

The generator and critic act as separate roles, even if they use the same model. One produces the answer. The other evaluates it.

Because evaluation is handled explicitly, weaknesses become easier to see — missing assumptions, gaps in logic, unclear explanations, weak structure. Instead of accepting the first response as final, the system checks whether it can be improved.

Reflection does not change how tasks are routed or broken down. It keeps the overall workflow the same. What it adds is a review step before the answer is accepted.

Reflection is one of the simplest ways to improve answer quality without changing the model itself.

Separation of Roles

Reflection works because it separates writing from reviewing.

In a single-pass system, generation and evaluation happen at the same time. The same prompt produces the answer and implicitly accepts it. There is no explicit review step.

But writing and reviewing are different tasks.

The generator’s job is to produce content — explain ideas, connect concepts, and organize the response clearly.

The critic’s job is to evaluate that content — find gaps, question assumptions, and point out unclear sections.

When both responsibilities are combined into a single instruction, the model often produces longer answers instead of better ones. Asking it to “write and critique at the same time” usually does not improve reasoning in a consistent way.

Separating the roles avoids that problem.

In a basic reflection setup, the generator and critic receive different instructions.

A simple generator prompt might say:

You are an AI that explains machine learning concepts to beginners. If feedback is provided, revise the explanation accordingly.

A critic prompt might say:

You are a reviewer of explanations written for beginners. Evaluate the most recent explanation for clarity and structure. Do not rewrite it. Provide strengths and specific improvements.

The generator writes or revises.

The critic reviews.

Even if both roles use the same model, separating them changes how the reasoning unfolds. The system alternates between producing an answer, reviewing it, and revising it.
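The sketch below makes the separation concrete. It builds two requests from the same conversation history, and the requests differ only in their system prompt. The sketch assumes an OpenAI-style list of `{"role", "content"}` dictionaries. `build_request` is a hypothetical helper, and the call to an actual model is left out.

```python
from typing import Dict, List

Message = Dict[str, str]

GENERATOR_PROMPT = (
    "You are an AI that explains machine learning concepts to beginners. "
    "If feedback is provided, revise the explanation accordingly."
)
CRITIC_PROMPT = (
    "You are a reviewer of explanations written for beginners. "
    "Evaluate the most recent explanation for clarity and structure. "
    "Do not rewrite it. Provide strengths and specific improvements."
)


def build_request(system_prompt: str, history: List[Message]) -> List[Message]:
    """Prepend a role-specific system prompt to the shared conversation history."""
    return [{"role": "system", "content": system_prompt}] + list(history)


history: List[Message] = [
    {"role": "user", "content": "Explain gradient descent."},
    {"role": "assistant", "content": "Gradient descent adjusts weights step by step..."},
]

# Same history, same (hypothetical) model, two different jobs
generator_request = build_request(GENERATOR_PROMPT, history)
critic_request = build_request(CRITIC_PROMPT, history)
```

Nothing about the model changes between the two requests. Only the instruction framing the shared history differs.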

The first draft is no longer automatically accepted. Weaknesses are made explicit, and revisions become targeted.

Without role separation, improvement depends on repeating the prompt and hoping for a better result. With separation, improvement becomes part of the design.

Reflection is not simply asking the model to “try again.” It organizes the task so that review and revision are distinct steps. That structure makes improvement more consistent.

The Minimal Reflection Loop

Reflection becomes concrete when we implement it as a simple execution loop over message history.

The simplest version uses LangGraph's MessageGraph, where the state is a growing list of BaseMessage objects. Each node reads the full message history and returns one new message, which the graph appends to that history.

Basic Graph Structure

We define two nodes:

- generate: produce or revise the explanation

- reflect: review the latest explanation and return feedback

Each node returns a list of messages to append to the state:

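```python
from langchain_core.messages import AIMessage, HumanMessage, BaseMessage
from typing import Sequence, List

# generator_chain and reflector_chain are not shown here; they are assumed to be
# prompt | model chains built from the generator and critic prompts above.


def generate_node(state: Sequence[BaseMessage]) -> List[BaseMessage]:
    # Write a first draft, or revise it if a critique is already in the history
    response = generator_chain.invoke({"messages": state})
    return [AIMessage(content=response.content)]


def reflect_node(state: Sequence[BaseMessage]) -> List[BaseMessage]:
    # Review the latest draft and return the critique as feedback
    critique = reflector_chain.invoke({"messages": state})
    return [HumanMessage(content=critique.content)]
```

Notice that the critique is returned as a HumanMessage. This allows it to act as feedback for the generator in the next step.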

We then connect the nodes using MessageGraph:

```python
from langgraph.graph import MessageGraph

graph = MessageGraph()
graph.add_node("generate", generate_node)
graph.add_node("reflect", reflect_node)

# After reflecting, generate a revised answer
graph.add_edge("reflect", "generate")

# Start by generating from the initial user prompt
graph.set_entry_point("generate")
```

This creates the loop: generate → reflect → generate.

Stop Rule

A reflection loop must include a clear stop condition. Without one, the system could continue revising forever.

In the simplest version, we stop after a fixed number of messages:

```python
from langgraph.graph import END


def should_continue(state: List[BaseMessage]):
    # Stop once the history holds 7 or more messages; otherwise request a critique
    if len(state) >= 7:
        return END
    return "reflect"
```

We attach this rule as a conditional edge:

```python
graph.add_conditional_edges("generate", should_continue)

workflow = graph.compile()
```

Now the loop runs:

generate → reflect → generate → … → stop

The key idea is simple: repetition alone does not improve quality. What matters is that repetition follows clear rules. The stop condition is part of the system design, not an informal “try again.”
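The following framework-free simulation shows what the rule `len(state) >= 7` does in practice. It mirrors the graph's routing: generate, check the stop rule, then reflect. It assumes that the run starts from a single user message, and it uses placeholder strings instead of model calls. `END` here is a local stand-in, not LangGraph's constant.

```python
from typing import List, Tuple

END = "end"  # local stand-in for LangGraph's END marker in this simulation
Message = Tuple[str, str]  # (role, content)


def should_continue(state: List[Message]) -> str:
    # Same rule as above: stop once the history holds 7 or more messages
    if len(state) >= 7:
        return END
    return "reflect"


def run(prompt: str) -> List[Message]:
    state: List[Message] = [("human", prompt)]  # the initial user prompt
    while True:
        # generate node: append the next draft as an AI message
        drafts_so_far = sum(role == "ai" for role, _ in state)
        state.append(("ai", f"draft {drafts_so_far + 1}"))
        # conditional edge evaluated after generate
        if should_continue(state) == END:
            return state
        # reflect node: append a critique as a human message (feedback)
        state.append(("human", "critique"))


history = run("Explain overfitting to a beginner.")
print(len(history))  # 8
print(sum(role == "ai" for role, _ in history))  # 4 drafts
print(sum(content == "critique" for _, content in history))  # 3 critiques
```

Under these assumptions, the loop produces four drafts and three critiques. The rule is checked only after `generate`, so the run always ends on a draft rather than on an unanswered critique.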

Structured Reflection (Typed-State Version)

The basic reflection loop works because it separates writing from reviewing and limits how many times the system can revise.

However, it has a limitation: the critic returns feedback as free-form text.

Free-form feedback can be vague. It may mix praise with suggestions, restate the problem, or fail to give clear guidance. When feedback is unclear, revisions become inconsistent.

To make reflection more reliable, we can structure the feedback itself.

Why Structure Helps

Instead of allowing the critic to respond with a paragraph, we require it to return feedback in a fixed format.

For example:

```python
from typing import List

from pydantic import BaseModel


class Critique(BaseModel):
    strengths: List[str]
    weaknesses: List[str]
    improvement_suggestions: List[str]
```

Now the critic must clearly state what works, what does not, and what should change.

This makes the feedback more precise.

The generator no longer has to interpret a general paragraph. It receives specific points to address. Instead of guessing what to revise, it can directly respond to listed weaknesses.
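One way to enforce the format is to validate the critic's reply before it reaches the generator. This sketch assumes that the critic is prompted to answer in JSON with the three `Critique` fields. It uses a standard-library dataclass instead of Pydantic so that it runs without dependencies. `parse_critique` and `revision_checklist` are hypothetical helpers. With the Pydantic model above, Pydantic v2's `Critique.model_validate_json(raw)` would perform similar validation.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass
class Critique:
    # Dataclass stand-in for the Pydantic Critique model above
    strengths: List[str]
    weaknesses: List[str]
    improvement_suggestions: List[str]


FIELDS = ("strengths", "weaknesses", "improvement_suggestions")


def parse_critique(raw: str) -> Critique:
    """Validate the critic's JSON reply and turn it into a Critique."""
    data = json.loads(raw)  # raises json.JSONDecodeError on invalid JSON
    if not isinstance(data, dict):
        raise ValueError("critique must be a JSON object")
    for name in FIELDS:
        value = data.get(name)
        if not isinstance(value, list) or not all(isinstance(v, str) for v in value):
            raise ValueError(f"{name!r} must be a list of strings")
    return Critique(**{name: data[name] for name in FIELDS})


def revision_checklist(critique: Critique) -> str:
    """Render weaknesses and suggestions as numbered targets for the generator."""
    items = critique.weaknesses + critique.improvement_suggestions
    return "\n".join(f"{i}. {item}" for i, item in enumerate(items, start=1))


reply = json.dumps({
    "strengths": ["Clear definition"],
    "weaknesses": ["No concrete example"],
    "improvement_suggestions": ["Compare training and test accuracy"],
})
print(revision_checklist(parse_critique(reply)))
# 1. No concrete example
# 2. Compare training and test accuracy
```

A malformed reply raises `ValueError`, which also covers `json.JSONDecodeError`. The system can then retry the critic instead of passing vague feedback along.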

Structuring the critique has several practical benefits:

- It reduces ambiguity by requiring concrete suggestions.

- It keeps each iteration consistent.

- It improves revisions by defining clear targets.

- It allows the system to inspect the feedback programmatically and decide whether another revision is needed.
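The last point can be as small as one function. In this sketch, `needs_revision` and the cap of three iterations are illustrative assumptions. The `Critique` fields are redeclared as a dataclass so that the snippet runs on its own.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Critique:
    strengths: List[str] = field(default_factory=list)
    weaknesses: List[str] = field(default_factory=list)
    improvement_suggestions: List[str] = field(default_factory=list)


def needs_revision(critique: Critique, iteration: int, max_iterations: int = 3) -> bool:
    """Return True if the critique asks for changes and the cap is not reached."""
    if iteration >= max_iterations:  # hard stop, whatever the feedback says
        return False
    # Revise only when the critic listed something concrete to change
    return bool(critique.weaknesses or critique.improvement_suggestions)


print(needs_revision(Critique(weaknesses=["No example"]), iteration=1))  # True
print(needs_revision(Critique(strengths=["Clear"]), iteration=1))  # False
print(needs_revision(Critique(weaknesses=["No example"]), iteration=3))  # False
```

A structured critique turns the stop decision into a check on data rather than a guess about free-form text.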

In this version, reflection becomes more predictable.

We can also represent the state explicitly:

```python
from typing import TypedDict


class ReflectionState(TypedDict):
    draft: str
    critique: Critique  # the structured critique model defined above
    iteration: int
```

Instead of passing around unstructured message history, the system now works with defined fields: the draft, the critique, and the current iteration count.

The loop remains the same:

Generate → Critique → Revise → Stop

But now the feedback is structured, and the process is easier to control.
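The sketch below puts the pieces together and runs the loop over an explicit `ReflectionState`. It relies on several assumptions and is not the article's code. `Critique` is a dataclass stand-in for the Pydantic model. `run_reflection`, `toy_generate`, and `toy_review` are hypothetical, and the toy functions replace real model calls.

```python
from dataclasses import dataclass, field
from typing import Callable, List, TypedDict


@dataclass
class Critique:
    # Dataclass stand-in for the Pydantic Critique model above
    strengths: List[str] = field(default_factory=list)
    weaknesses: List[str] = field(default_factory=list)
    improvement_suggestions: List[str] = field(default_factory=list)


class ReflectionState(TypedDict):
    draft: str
    critique: Critique
    iteration: int


def run_reflection(
    task: str,
    generate: Callable[[str, str, Critique], str],
    review: Callable[[str], Critique],
    max_iterations: int = 3,
) -> ReflectionState:
    """Generate -> Critique -> Revise -> Stop over an explicit typed state."""
    state: ReflectionState = {"draft": "", "critique": Critique(), "iteration": 0}
    state["draft"] = generate(task, "", state["critique"])  # first draft
    while state["iteration"] < max_iterations:
        state["critique"] = review(state["draft"])  # critique step
        c = state["critique"]
        if not (c.weaknesses or c.improvement_suggestions):  # stop: nothing to fix
            break
        state["draft"] = generate(task, state["draft"], c)  # revise
        state["iteration"] += 1
    return state


# Toy stand-ins for model calls (hypothetical, for illustration only)
def toy_generate(task: str, draft: str, critique: Critique) -> str:
    if not draft:
        return f"{task}: a first pass."
    # "Revise" by addressing each listed suggestion explicitly
    return draft + "".join(f" [{s}]" for s in critique.improvement_suggestions)


def toy_review(draft: str) -> Critique:
    if "[add an example]" in draft:
        return Critique(strengths=["includes an example"])
    return Critique(weaknesses=["no example"], improvement_suggestions=["add an example"])


final = run_reflection("Overfitting", toy_generate, toy_review)
print(final["iteration"])  # 1
print(final["draft"])  # Overfitting: a first pass. [add an example]
```

Each iteration reads and writes named fields. As a result, the draft, the latest critique, and the iteration count can be inspected or logged at any point.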

Structure does not make the model smarter. It makes improvement more consistent.

Why Reflection Works

Reflection improves answers by adding a review step before the response is finalized.

In a single-pass system, the model produces a response and stops, so the first draft is accepted as final.

When critique is separated from generation, weaknesses become easier to see. Missing assumptions, unclear explanations, and gaps in logic are pointed out instead of being left unnoticed.

Revision is no longer just generating a new version and hoping it is better. It becomes focused on specific issues raised in the critique.

This reduces the risk of accepting a weak first draft and helps the system produce clearer and more complete answers.

The model itself does not change, and no new information is added. Reflection works because it forces the system to review its own output before finishing.

Where Reflection Breaks

Reflection can improve the quality of reasoning, but it cannot add new knowledge.

In the setup described here, the generator and critic use the same model. If the model does not know a fact, neither role knows it. A critic cannot point out missing information that the model never learned.

If an answer is confidently wrong, reflection may improve how the mistake is explained instead of correcting it. The loop can make an answer clearer while it remains incorrect.

Reflection also struggles when a task requires external data. If the answer depends on up-to-date information or specialized facts outside the model’s training data, reviewing the answer internally is not enough.

Reflection can rethink an answer. It cannot look up new information.

It can improve structure, clarify assumptions, and strengthen reasoning. But it cannot introduce knowledge that the model does not already have.

That is its main limitation.

Transition to Reflexion

Reflection improves answers by adding a review step before they are accepted. It separates generation from critique and makes revision part of the process.

However, it works only with the knowledge already inside the model.

In this setup, the generator and critic share one model. They can reorganize reasoning, clarify explanations, and point out weaknesses, but they cannot add new information.

If an answer is incomplete because the model lacks knowledge, reviewing it again is not enough. At that point, the system needs access to external information.

In the next article, we look at Reflexion — a pattern that goes beyond internal review by allowing the agent to search for new information when reasoning alone is not sufficient.

Reflection improves what the model already knows.

Reflexion allows it to look beyond that knowledge.

Published via Towards AI
