Agent Control Patterns — Part 3: Reflexion — When Review Triggers Research

Last Updated on March 4, 2026 by Editorial Team

Author(s): Vahe Sahakyan

Originally published on Towards AI.

A model can review its own answer and still return incorrect information.

It may recognize uncertainty, improve its wording, and clarify its reasoning. But if the missing piece is factual, reviewing the answer again will not fix it.

A system cannot produce information it does not have.

At that point, improving the answer requires external evidence.

Introduction — Reflection Cannot Add Missing Knowledge

In Part 2, we introduced Reflection — a pattern that improves answers by separating generation from evaluation. Reflection works well when the issue is clarity, structure, or incomplete reasoning.

However, it only works with the knowledge already inside the model.

Reflection can improve how an answer is written. It cannot correct facts the model does not know.

Consider a time-sensitive question:

Who won the Nobel Prize in Physics in 2025 and why?

If the model was trained before the announcement, it does not contain that information. Reviewing the answer again will not produce the correct result.

A reflection loop can improve phrasing, point out uncertainty, and highlight possible gaps. But it cannot add new facts that are not already in the model.

Improving wording does not make an outdated answer correct.

This is the limit of reflection: when correctness depends on external evidence, reviewing the answer again is not enough.

At that point, the system needs access to new information.

That transition — from internal review to external research — is the focus of this article.

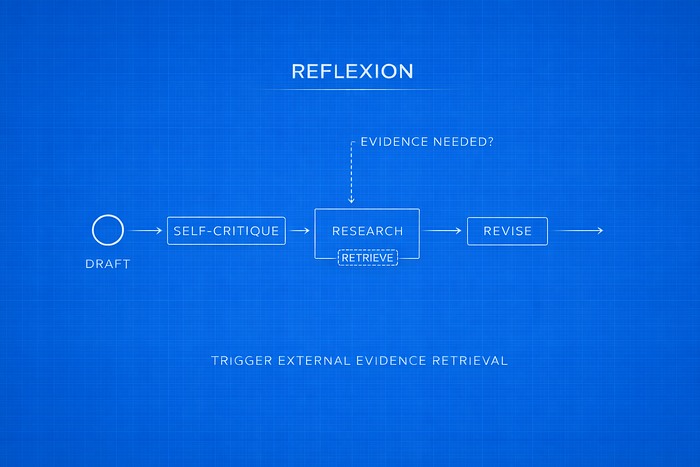

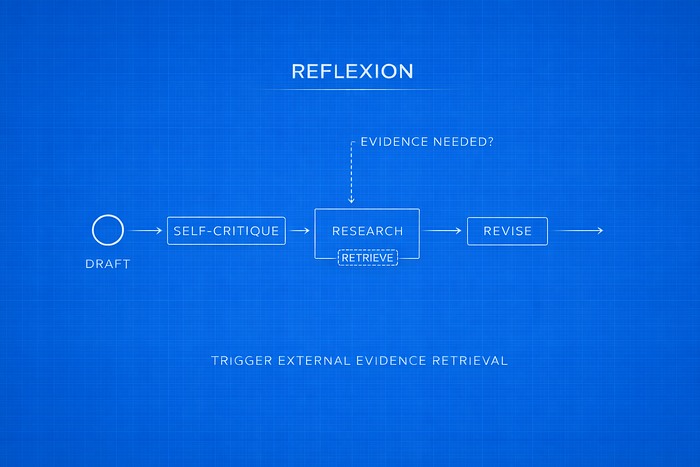

What Is Reflexion?

Reflexion builds on Reflection by allowing review to trigger research.

In Reflection, the loop stays internal:

Generate → Critique → Revise → Stop

In Reflexion, the process adds a research step:

Draft → Self-Critique → Generate Search Queries → Run Tools → Revise Using Evidence → Stop (or repeat)

The main difference is not just the use of tools. It is that research becomes part of the improvement process.

The workflow starts the same way as Reflection: the system produces a draft and reviews it to find gaps, uncertainty, or unsupported claims. However, instead of revising immediately, the critique produces structured search queries. Those queries are executed using external tools, and the retrieved results are added before revision happens.

Revision is no longer based only on internal reasoning. It uses new information gathered during the research step.

Reflection improves clarity.

Reflexion improves factual accuracy.

Research is not always required. It is triggered only when the critique identifies missing or uncertain information. This avoids unnecessary tool calls while still allowing the system to verify important claims.

Reflexion changes the loop from internal review to review followed by evidence collection.
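The loop described above can be sketched without any framework. This is a minimal illustration, not the article's implementation: `reflexion_loop`, `fake_draft`, `fake_search`, and `fake_revise` are hypothetical names, and the stubs stand in for real model and search calls.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical callables standing in for model and tool calls.
DraftFn = Callable[[str], Tuple[str, str, List[str]]]   # question -> (answer, critique, queries)
SearchFn = Callable[[str], str]                          # query -> evidence text
ReviseFn = Callable[[str, str, Dict[str, str]], Tuple[str, List[str]]]


def reflexion_loop(
    question: str,
    draft_fn: DraftFn,
    search_fn: SearchFn,
    revise_fn: ReviseFn,
    max_rounds: int = 2,
) -> Tuple[str, int]:
    """Draft once, then alternate research and revision while queries remain."""
    answer, critique, queries = draft_fn(question)
    rounds = 0
    while queries and rounds < max_rounds:
        # Research: run every query the critique proposed.
        evidence = {query: search_fn(query) for query in queries}
        # Revise with the evidence; the reviser may propose follow-up queries.
        answer, queries = revise_fn(answer, critique, evidence)
        rounds += 1
    return answer, rounds


# Stubs so the sketch runs without a model or a search API.
def fake_draft(question: str) -> Tuple[str, str, List[str]]:
    return ("Unsure.", "The draft lacks a verified fact.", ["example query"])


def fake_search(query: str) -> str:
    return f"stub evidence for: {query}"


def fake_revise(answer: str, critique: str, evidence: Dict[str, str]) -> Tuple[str, List[str]]:
    return (f"Revised using {len(evidence)} source(s).", [])


final_answer, rounds_used = reflexion_loop(
    "example question", fake_draft, fake_search, fake_revise
)
print(final_answer, rounds_used)
```

If the draft step returns no queries, the `while` condition fails immediately and the draft is returned unchanged. That is the "research only when needed" behavior in its simplest form.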

Difference from Reflection

Reflection and Reflexion may look similar because both include critique and revision.

The difference is simple.

In Reflection, the entire process stays inside the model. The system reviews the draft and revises it using only the knowledge it already has. No new information is added.

In Reflexion, review can trigger a research step. If the critique identifies missing or uncertain facts, the system generates search queries, retrieves external information, and then revises the draft using that evidence.

Here is the comparison:

Reflection improves answers that are unclear or incomplete.

Reflexion improves answers that may be factually wrong or outdated.

In Reflection, the question is: can this be expressed more clearly?

In Reflexion, the question is: is something missing, and do we need to verify it?

That is the core difference.

Structured Draft Package

Reflexion requires structure from the beginning.

If the initial draft is just free-form text, the system cannot reliably tell what is uncertain, what needs verification, or what should be searched. In that case, research becomes inconsistent and harder to control.

To connect critique to research in a predictable way, the first response must follow a defined format.

In the Reflexion pattern, the model returns three components:

- The draft answer

- A self-critique describing gaps or uncertainty

- A list of specific search queries

This is enforced using a Pydantic model:

```python
from pydantic import BaseModel, Field
from typing import List


class DraftOutput(BaseModel):
    """Structure for the first draft and intermediate revisions"""

    answer: str = Field(description="Initial answer to the user's question.")
    reflection: str = Field(description="Self-critique of the initial answer. Identify gaps and uncertainty.")
    search_queries: List[str] = Field(description="1-3 focused web search queries to fill gaps.")
```

This model defines how reasoning connects to research.
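To make the contract concrete, here is a dependency-free sketch of what checking a draft package might look like. It uses a plain dataclass as a stand-in for the Pydantic model so it runs with the standard library only. `DraftPackage` and `parse_draft` are hypothetical names, and the three-query cap is an assumption taken from the field description rather than something the schema itself enforces.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass
class DraftPackage:
    """Stand-in for the article's DraftOutput model (illustrative only)."""
    answer: str
    reflection: str
    search_queries: List[str]


def parse_draft(raw: str) -> DraftPackage:
    """Parse a JSON string and reject payloads that break the expected shape."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = {"answer", "reflection", "search_queries"} - data.keys()
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    queries = data["search_queries"]
    if not isinstance(queries, list) or not all(isinstance(q, str) for q in queries):
        raise ValueError("search_queries must be a list of strings")
    if len(queries) > 3:  # assumption: mirror the "1-3 queries" hint
        raise ValueError("expected at most 3 search queries")
    return DraftPackage(data["answer"], data["reflection"], queries)


package = parse_draft(
    '{"answer": "a", "reflection": "r", "search_queries": ["q1"]}'
)
print(package.search_queries)
```

A payload that is missing a field never reaches the research step, which is the point of fixing the format up front.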

Why Structure Helps

1. The critique becomes concrete

The reflection field forces the system to clearly describe what is uncertain or incomplete. Problems must be stated before they can be addressed.

2. Queries are ready to execute

The search_queries field turns critique into specific search instructions. Instead of deciding whether to search, the system already has defined queries tied to the draft.

3. Research can be triggered programmatically

Because queries are structured, the system can detect when research is required, execute the searches, and pass the results to the revision step. The workflow follows clear phases instead of relying on loose prompt behavior.

4. Easier debugging

Each part of the output is inspectable. If something goes wrong, you can check whether the issue is in the draft, the critique, or the search queries.

Reflexion is not simply “reflection plus search.” It defines a structured draft format that allows research to be triggered and handled in a controlled way.

Without that structure, the system cannot reliably move from critique to evidence.
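Because each field is separate, a small diagnostic can report which part of the package is weak before any search runs. The following is a rough sketch under that assumption. `diagnose` and its messages are hypothetical, and the package is passed as a plain dictionary to keep the example self-contained.

```python
from typing import Dict, List


def diagnose(package: Dict[str, object]) -> List[str]:
    """Return human-readable issues, grouped by the field they belong to."""
    issues: List[str] = []
    if not str(package.get("answer", "")).strip():
        issues.append("draft: answer is empty")
    if not str(package.get("reflection", "")).strip():
        issues.append("critique: reflection is empty")
    queries = package.get("search_queries") or []
    if not queries:
        issues.append("queries: none proposed, so research will be skipped")
    else:
        normalized = {str(q).strip().lower() for q in queries}
        if len(normalized) < len(queries):
            issues.append("queries: duplicates found")
    return issues


print(diagnose({"answer": "x", "reflection": "", "search_queries": []}))
```

An empty result means all three parts are present. Anything else points at the draft, the critique, or the queries specifically.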

Research Phase (Tool Integration)

Reflexion departs from Reflection at the point where review triggers research.

After the model returns a structured draft package — answer, reflection, and search_queries — the system moves to a research step. In this step, the proposed queries are executed using an external tool, and the results are added before revision.

What Tool Integration Means

In this example, the external tool is a web search API (Tavily) used to retrieve up-to-date facts.

The specific tool is not the main point. What matters is how responsibilities are split:

- The model does not run searches directly.

- It produces search queries as part of its critique.

- A separate step executes those queries and returns the results.

The model suggests what to search.

The system performs the search.

This separation allows the system to add new information instead of relying only on what the model already knows.

The Research Node

The research step is implemented as a node that reads the search_queries, executes them, and appends the results as ToolMessage objects. These results are then available during revision.

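```python
import json
from typing import List

from langchain_core.messages import BaseMessage, ToolMessage

# `tavily_client` is assumed to be a configured Tavily search client created elsewhere.


def run_searches(state: List[BaseMessage]) -> List[BaseMessage]:
    """Execute the search queries proposed in the latest AI message."""
    last_ai_message = state[-1]
    tool_messages = []
    for tool_call in last_ai_message.tool_calls:
        if tool_call["name"] in ["DraftOutput", "FinalOutput"]:
            call_id = tool_call["id"]
            search_queries = tool_call["args"].get("search_queries", [])
            # Run each proposed query and key the results by query text.
            query_results = {}
            for query in search_queries:
                result = tavily_client.search(query=query, max_results=1)
                query_results[query] = result
            # Return the evidence as a ToolMessage tied to the originating tool call.
            tool_messages.append(
                ToolMessage(content=json.dumps(query_results), tool_call_id=call_id)
            )
    return tool_messages
```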

Two points are important:

- The tool step executes exactly what the model proposed in search_queries.

- The retrieved evidence becomes part of the system state and is available for revision.
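The split between "the model suggests" and "the system performs" also makes the research step easy to test in isolation. Below is a minimal sketch that takes the search function as a parameter, so a stub can replace the real API. `collect_evidence` and `fake_search` are hypothetical names, the result shape returned by the stub is illustrative rather than any provider's actual response format, and recording failures instead of raising is a design choice of this sketch.

```python
import json
from typing import Callable, Dict, List


def collect_evidence(queries: List[str], search_fn: Callable[[str], dict]) -> str:
    """Run each query through search_fn and return the results as a JSON string."""
    results: Dict[str, dict] = {}
    for query in queries:
        try:
            results[query] = search_fn(query)
        except Exception as exc:
            # Record the failure and keep going so one bad query
            # does not discard the evidence from the others.
            results[query] = {"error": str(exc)}
    return json.dumps(results)


def fake_search(query: str) -> dict:
    """Stub search: fails for the query 'bad', succeeds otherwise."""
    if query == "bad":
        raise RuntimeError("timeout")
    return {"results": [{"url": "https://example.com", "content": f"about {query}"}]}


payload = json.loads(collect_evidence(["good", "bad"], fake_search))
print(sorted(payload))
```

Swapping `fake_search` for a real client call changes nothing else in the function, which is what the separation of responsibilities buys.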

Where It Sits in the Workflow

The research step sits between drafting and revision:

respond → execute_tools → revisor

The process becomes:

- Draft and critique (produce queries)

- Run research (collect evidence)

- Revise using that evidence

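Searches are not always performed. In the wiring shown in this article the execute_tools node runs on every pass, but it only issues searches for the queries the critique produced, so an empty search_queries list means no searches are run.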

The key idea is simple: revision can now use new information gathered during the workflow.

Evidence-Based Revision

After research results are retrieved, the system moves to the final step: revising the answer using that evidence.

Unlike Reflection, revision is no longer based only on internal reasoning. The model now works with three inputs:

- The original draft

- The self-critique

- The retrieved search results

To keep the output structured, the final response also follows a defined format:

```python
class FinalOutput(DraftOutput):
    """Structure for the final revised answer"""

    references: List[str] = Field(description="List of URLs that support your claims")
```

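The references field asks the model to list the sources that support its claims. Because the field is required, a revised answer that omits it fails validation against this schema. An empty list still validates, however, so rejecting answers that carry no citations at all requires an additional check.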
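One way to tighten the citation requirement is to check the references against the URLs that were actually retrieved. The sketch below assumes the system keeps a list of retrieved URLs. `unsupported_references` and `accept_revision` are hypothetical helpers, and the trailing-slash normalization is a simplification.

```python
from typing import Iterable, List


def _normalize(url: str) -> str:
    """Trim whitespace and a trailing slash so trivially different URLs match."""
    return url.strip().rstrip("/")


def unsupported_references(references: List[str], retrieved_urls: Iterable[str]) -> List[str]:
    """Return cited URLs that do not appear among the retrieved results."""
    known = {_normalize(url) for url in retrieved_urls}
    return [ref for ref in references if _normalize(ref) not in known]


def accept_revision(references: List[str], retrieved_urls: Iterable[str]) -> bool:
    """Accept only when at least one reference exists and all are traceable."""
    retrieved = list(retrieved_urls)
    return bool(references) and not unsupported_references(references, retrieved)


retrieved = ["https://example.com/a/", "https://example.com/b"]
print(accept_revision(["https://example.com/a"], retrieved))
print(accept_revision([], retrieved))
```

A check like this catches two failure modes the schema alone does not: an empty reference list, and a URL the model produced that no search returned.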

What Changes in This Step

In Reflection, revision improves clarity and structure.

In Reflexion, revision can:

- Correct unsupported claims

- Add missing facts

- Replace outdated information

- Cite sources

The goal is not just a clearer answer. It is an answer supported by evidence.

The revision step is instructed to:

- Use the retrieved evidence

- Address weaknesses identified in the critique

- Remove unsupported statements

- Provide references

Now you can compare the initial draft with the final answer and see exactly what changed. The difference comes from the integration of new information.
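A quick way to see what changed is a line-level diff. This sketch uses Python's standard `difflib`. `show_changes` is a hypothetical helper, and the sample strings are placeholders rather than real model output.

```python
import difflib


def show_changes(draft: str, final: str) -> str:
    """Return a unified diff between the draft and the final answer."""
    diff = difflib.unified_diff(
        draft.splitlines(),
        final.splitlines(),
        fromfile="draft",
        tofile="final",
        lineterm="",
    )
    return "\n".join(diff)


draft_text = "The winner is unknown.\nThe prize is awarded annually."
final_text = "The winner is <name from retrieved source>.\nThe prize is awarded annually."
changes = show_changes(draft_text, final_text)
print(changes)
```

Lines prefixed with `-` come from the draft and lines prefixed with `+` from the revision, so the evidence-driven edits stand out from the text that was left alone.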

Reflexion turns critique into verification and verification into evidence-based revision.

Control Strategy

Reflexion is implemented as a step-by-step workflow.

Instead of letting the model decide what to do next inside a single prompt, the process is broken into separate nodes. Each node performs one task and passes its result to the next step.

In this workflow, three nodes are defined:

- respond — produces the structured draft (DraftOutput)

- execute_tools — runs the external search queries

- revisor — produces the final answer (FinalOutput)

The graph wiring looks like this:

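```python
# `graph` is the workflow graph object created earlier (its construction is not shown here).
graph.add_node("respond", responder)
graph.add_node("execute_tools", run_searches)
graph.add_node("revisor", revisor)

# Fixed order: draft, then research, then revision.
graph.add_edge("respond", "execute_tools")
graph.add_edge("execute_tools", "revisor")
```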

This creates a clear order:

Draft → Research → Revision

After revision, a routing function decides whether to run another round. In the version shown here the only stopping condition is an iteration cap, so the loop repeats until the limit is reached:

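```python
MAX_ITERATIONS = 4

# END is the graph's terminal marker (in LangGraph: `from langgraph.graph import END`).


def event_loop(state: List[BaseMessage]) -> str:
    # Each research round appends a ToolMessage, so counting them counts rounds.
    count_tool_visits = sum(isinstance(item, ToolMessage) for item in state)
    num_iterations = count_tool_visits
    if num_iterations >= MAX_ITERATIONS:
        return END
    # Route back to the research node, using the name it was registered under.
    return "execute_tools"
```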

The overall cycle becomes:

Draft → Research → Revise → (optional repeat)

Two things matter here:

- Research happens in a separate step, not mixed with drafting.

- The number of iterations is limited.

The system does not rely on informal “try again” prompts. It follows a defined sequence of steps, and each step has a specific role.
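To see how the cap behaves, the routing logic can be simulated without a graph library. In this sketch the message history is reduced to a list of labels, and `next_step`, `simulate`, and the `END` placeholder are hypothetical stand-ins for the real routing function and terminal marker.

```python
from typing import List

MAX_ITERATIONS = 4
END = "__end__"  # placeholder stop marker for this sketch


def next_step(history: List[str]) -> str:
    """Stop once the number of research rounds reaches the cap."""
    tool_visits = sum(1 for kind in history if kind == "tool")
    return END if tool_visits >= MAX_ITERATIONS else "execute_tools"


def simulate() -> List[str]:
    """Replay the draft, research, revise cycle until routing says stop."""
    history = ["human", "ai"]      # the question, then the initial draft
    while True:
        history.append("tool")     # research round
        history.append("ai")       # revision
        if next_step(history) == END:
            return history


trace = simulate()
print(trace.count("tool"), trace.count("ai"))
```

With a cap of 4, the simulation performs four research rounds and produces five model outputs: one draft and four revisions. Because the check runs after revision, the first research round always happens.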

When to Use Reflexion

Use Reflexion when the answer depends on information the model may not have.

It is useful when:

- The question is time-sensitive (for example, recent awards, policy changes, or product releases).

- The answer requires up-to-date facts beyond the model’s training data.

- The task involves official documents, regulations, or technical specifications that need verification.

- Claims must be supported with clear sources.

In short, use Reflexion when accuracy depends on external evidence.

Reflection is still enough when the goal is to improve clarity, structure, or reasoning within what the model already knows.

A simple rule:

- If the answer can be improved by reorganizing reasoning, use Reflection.

- If the answer requires new information to be correct, use Reflexion.

Where Reflexion Breaks

Reflexion can add new information, but it also introduces new dependencies.

Its performance depends on several factors:

- Tool quality — If the search tool returns biased, incomplete, or low-quality results, the final answer will reflect those issues.

- Query quality — Weak or overly broad search queries lead to weak evidence.

- Latency and cost — External research requires additional model calls and tool execution time.

- Iteration control — Without clear limits, the research and revision loop can run longer than necessary.
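The latency and cost point can be made concrete with a back-of-the-envelope estimate. The sketch below assumes one model call for the draft plus one per revision, and that all calls run sequentially. `estimate_budget` is a hypothetical helper, and the latency figures in the example are made-up inputs, not measurements.

```python
from dataclasses import dataclass


@dataclass
class Budget:
    model_calls: int
    searches: int
    sequential_latency_s: float


def estimate_budget(
    rounds: int,
    queries_per_round: int,
    model_latency_s: float,
    search_latency_s: float,
) -> Budget:
    """Estimate call counts and total latency for a given number of rounds."""
    model_calls = 1 + rounds                 # one draft plus one revision per round
    searches = rounds * queries_per_round    # every round runs all its queries
    latency = model_calls * model_latency_s + searches * search_latency_s
    return Budget(model_calls, searches, latency)


# Illustrative inputs only: 4 rounds, 3 queries each, 2.0 s per model call, 0.5 s per search.
print(estimate_budget(4, 3, 2.0, 0.5))
```

Under these assumptions, four rounds with three queries each come to 5 model calls and 12 searches, which is why the iteration limit and the number of queries per round are the two settings to watch first.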

Reflexion improves factual accuracy, but it makes the system more complex.

It replaces a simple internal loop with a workflow that depends on external tools.

As a result, the system’s reliability depends on the quality and relevance of the evidence it retrieves.

Transition to ReAct

Reflexion improves answers by separating the process into clear steps: draft, research, and revise.

This works well when the workflow can be divided in advance.

However, not all tasks follow a fixed sequence.

In some problems, reasoning and action need to happen together. A decision may change what should be searched next. A tool result may change what the system should reason about next. The steps cannot always be defined before execution starts.

When the number of steps is unknown, separating the process into fixed phases can become limiting.

In those cases, the system needs to reason and act incrementally, letting each observation influence the next step.

In the next article, we examine ReAct — a pattern where reasoning and tool use are interleaved instead of separated into phases.

Reflection focuses on improving reasoning.

Reflexion adds research when needed.

ReAct combines reasoning and action step by step.


Published via Towards AI
