GenAI Interview Questions asked in different companies

Last Updated on March 3, 2026 by Editorial Team

Author(s): Sachin Soni

Originally published on Towards AI.

Q.1 Can you define encoder-only, decoder-only, and encoder-decoder architectures?

1. Encoder-Only Architecture

- Uses only the encoder stack of the Transformer.

- The encoder focuses on building deep contextual representations (generating dynamic contextual embeddings) of the input.

- It uses bidirectional self-attention → meaning the model can see both left and right context at the same time.

Examples: BERT, RoBERTa etc.

When to use

- Text classification (sentiment, toxicity, spam)

- Named entity recognition (NER)

- Question classification

- Embedding generation

Not suitable for:

- Any task requiring text generation (summaries, stories, answers).

2. Decoder-Only Architecture

- Uses only the decoder stack of the Transformer.

- The decoder uses causal / masked attention, meaning it can see only previous tokens, not future ones.

- It is optimized for autoregressive generation (predict next token).

Examples: GPT-2, GPT-3, LLaMA 3 etc.
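The difference between bidirectional and causal attention comes down to which positions each token is allowed to look at. A minimal NumPy sketch of the two masks (the helper name `attention_mask` is illustrative and not taken from any library):

```python
import numpy as np

def attention_mask(seq_len: int, causal: bool) -> np.ndarray:
    """Return a (seq_len, seq_len) 0/1 mask.

    Entry [i, j] == 1 means token i may attend to token j.
    """
    full = np.ones((seq_len, seq_len), dtype=int)
    # Causal masking keeps only the lower triangle (positions j <= i).
    return np.tril(full) if causal else full

bidirectional = attention_mask(4, causal=False)  # encoder-style: every token sees every token
causal = attention_mask(4, causal=True)          # decoder-style: no access to future tokens
print(bidirectional)
print(causal)
```

In the causal mask, row `i` has ones only up to column `i`, which is why a decoder-only model cannot use right-hand context.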

When to use

- Chatbots

- Story writing

- Code generation

- Autoregressive text continuation

- Reasoning tasks

- RAG-based generation

Why are decoder-only models inefficient for classification tasks?

Decoder-only models like GPT-3 or LLaMA 3 are generative, meaning they always try to produce text token by token.

Even if you just want a label, they still:

- Perform causal-attention computations across the entire prompt.

- Generate answer tokens one by one (autoregressive generation).

- Score every token in a large vocabulary (roughly 30k–100k tokens or more) at each step, which is unnecessary for small label sets.

Example

Input:

“Is this review positive or negative?

The product stopped working after two days.”

Encoder-only model (BERT):

- Output: Negative

- One forward pass → classification head → done.

Decoder-only model (GPT):

- Must interpret the prompt

- Must generate tokens like: "Negative"

- Uses autoregressive next-token prediction

- Pays the same per-token cost as any other generation, even though only a label is needed

Result

You waste compute, time, and memory, especially during training.
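As a rough back-of-the-envelope illustration, the output layers alone already differ by orders of magnitude. The sizes below are assumptions chosen for the arithmetic, not measurements of any particular model, and the comparison ignores the transformer body, which dominates total cost in practice.

```python
# Illustrative sizes only (assumptions, not taken from a specific model).
hidden_size = 768
num_labels = 2        # e.g. positive / negative
vocab_size = 50_000   # an assumed vocabulary size in the tens of thousands

# Multiply-adds needed to project ONE hidden vector to output scores.
classifier_head_ops = hidden_size * num_labels
lm_head_ops = hidden_size * vocab_size

ratio = lm_head_ops // classifier_head_ops
print(classifier_head_ops, lm_head_ops, ratio)  # 1536 38400000 25000
```

A decoder-only model also repeats its projection for every generated token, while the classification head runs once per input.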

3. Encoder–Decoder Architecture

- Combines encoder + decoder components.

- Encoder creates a rich representation of the input.

- Decoder generates output using cross-attention over encoder outputs.

Examples: T5, BART etc.
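Cross-attention is what connects the two halves: queries come from the decoder, while keys and values come from the encoder outputs. The sketch below is a simplified single-head version without learned projection matrices, using random arrays as stand-ins for real hidden states.

```python
import numpy as np

def softmax(x: np.ndarray) -> np.ndarray:
    x = x - x.max(axis=-1, keepdims=True)  # subtract the row max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def cross_attention(decoder_states: np.ndarray, encoder_outputs: np.ndarray) -> np.ndarray:
    """Single-head cross-attention without learned projections (simplified)."""
    d = decoder_states.shape[-1]
    # Queries: decoder states. Keys and values: encoder outputs.
    scores = decoder_states @ encoder_outputs.T / np.sqrt(d)
    weights = softmax(scores)          # (tgt_len, src_len), each row sums to 1
    return weights @ encoder_outputs   # (tgt_len, d)

rng = np.random.default_rng(0)
encoder_outputs = rng.normal(size=(5, 8))  # 5 source tokens
decoder_states = rng.normal(size=(3, 8))   # 3 target tokens generated so far
context = cross_attention(decoder_states, encoder_outputs)
print(context.shape)  # (3, 8)
```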

When to use

- Machine translation

- Summarization (abstractive)

- Image captioning

- Sequence-to-sequence labeling

- Multi-modal tasks (image → text)

Q.2 What are ROUGE, BLEU, METEOR, and BERTScore?

These are evaluation metrics used to measure how similar a generated text (LLM output, summary, caption) is to a human-written reference text.

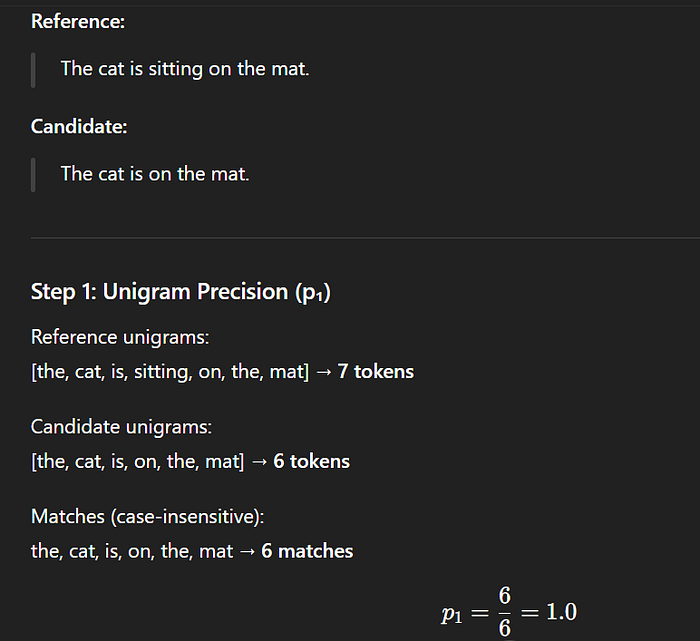

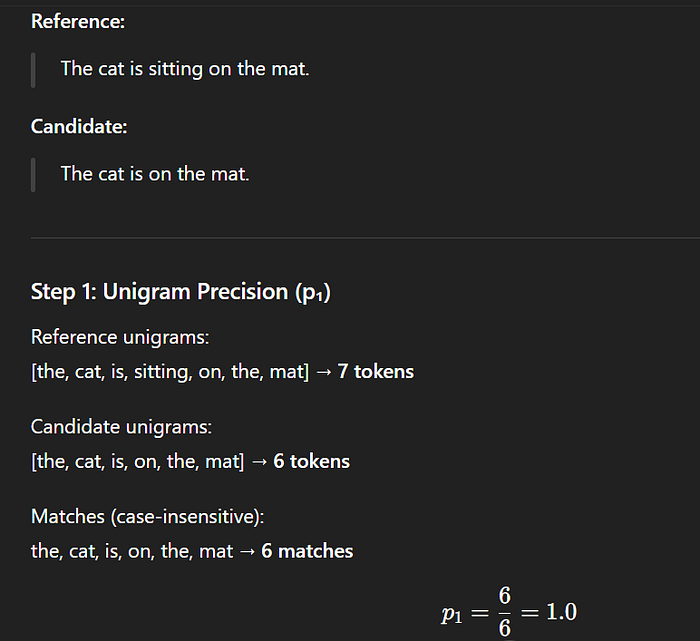

1. BLEU Score (Bilingual Evaluation Understudy)

- Checks how many word sequences (n-grams) in the generated output also appear in the reference text

- BLEU = measures PRECISION

Example :
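As a rough illustration, the core of BLEU is clipped n-gram precision, which is easy to compute by hand. The sketch below covers only that part; full BLEU additionally combines several n-gram orders with a geometric mean and applies a brevity penalty. The sentences and function names are made up for the example.

```python
from collections import Counter

def ngrams(tokens, n):
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def clipped_precision(candidate: str, reference: str, n: int = 1) -> float:
    cand = Counter(ngrams(candidate.lower().split(), n))
    ref = Counter(ngrams(reference.lower().split(), n))
    total = sum(cand.values())
    if total == 0:
        return 0.0
    # Each candidate n-gram is credited at most as often as it occurs in the reference.
    overlap = sum(min(count, ref[gram]) for gram, count in cand.items())
    return overlap / total

reference = "the cat is on the mat"
candidate = "the cat sat on the mat"
p1 = clipped_precision(candidate, reference, n=1)  # 5 of 6 unigrams match
p2 = clipped_precision(candidate, reference, n=2)  # 3 of 5 bigrams match
print(round(p1, 3), round(p2, 3))  # 0.833 0.6
```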

BLEU limitations :

- Doesn’t understand meaning

- BLEU drops drastically when synonyms or paraphrasing are used, because it rewards only exact matches, not meaning

2. ROUGE Score (Recall-Oriented Understudy for Gisting Evaluation)

How much of the reference text appears in my generated text?

ROUGE = measures RECALL

Most used variants:

- ROUGE-1: unigram overlap

- ROUGE-2: bigram overlap

- ROUGE-L: longest common subsequence (LCS)

Example :
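A small hand-rolled sketch of the recall side of ROUGE-1 and ROUGE-L is shown here. It is not the official scorer, which also reports precision and F-measure and can apply stemming. The sentences and function names are made up for the example.

```python
from collections import Counter

def rouge_1_recall(candidate: str, reference: str) -> float:
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    # Count reference unigrams that also appear in the candidate (clipped).
    overlap = sum(min(count, cand[word]) for word, count in ref.items())
    return overlap / max(sum(ref.values()), 1)

def lcs_length(a, b) -> int:
    # Classic dynamic-programming longest common subsequence.
    table = [[0] * (len(b) + 1) for _ in range(len(a) + 1)]
    for i, x in enumerate(a, 1):
        for j, y in enumerate(b, 1):
            if x == y:
                table[i][j] = table[i - 1][j - 1] + 1
            else:
                table[i][j] = max(table[i - 1][j], table[i][j - 1])
    return table[-1][-1]

def rouge_l_recall(candidate: str, reference: str) -> float:
    ref_tokens = reference.lower().split()
    return lcs_length(candidate.lower().split(), ref_tokens) / max(len(ref_tokens), 1)

reference = "the cat is on the mat"
candidate = "the cat sat on the mat"
r1 = rouge_1_recall(candidate, reference)  # 5 of 6 reference unigrams are covered
rl = rouge_l_recall(candidate, reference)  # LCS "the cat on the mat" has length 5
print(round(r1, 3), round(rl, 3))  # 0.833 0.833
```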

ROUGE limitations

- Still relies on exact words

- Does not capture semantics well

3. METEOR (Metric for Evaluation of Translation with Explicit Ordering)

- Uses exact matches + stemming + synonyms + paraphrase matching

- More flexible than BLEU/ROUGE

Example

Reference:

The boy is running.

Generated:

The kid runs.

BLEU fails (low overlap) while METEOR succeeds because:

- “boy” ≈ “kid” (synonym)

- “running” ≈ “runs” (stem match)

METEOR limitations

- Requires linguistic resources (WordNet)

- Still not as semantic as embedding-based methods like BERTScore
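The matching stages can be illustrated with a toy aligner for the example above. Everything here is a stand-in: the suffix-stripping `toy_stem` and the one-entry `TOY_SYNONYMS` table replace the real stemmer and WordNet lookups, and the real metric also applies a word-order (fragmentation) penalty that this sketch omits.

```python
# Toy resources (assumptions): real METEOR uses a proper stemmer and WordNet synsets.
TOY_SYNONYMS = {frozenset({"boy", "kid"})}

def toy_stem(word: str) -> str:
    for suffix in ("ning", "ing", "s"):
        if word.endswith(suffix) and len(word) > len(suffix) + 2:
            return word[: -len(suffix)]
    return word

def match_type(cand_word: str, ref_word: str):
    if cand_word == ref_word:
        return "exact"
    if toy_stem(cand_word) == toy_stem(ref_word):
        return "stem"
    if frozenset({cand_word, ref_word}) in TOY_SYNONYMS:
        return "synonym"
    return None

def align(candidate: str, reference: str):
    cand = candidate.lower().rstrip(".").split()
    ref = reference.lower().rstrip(".").split()
    used, matches = set(), []
    for c in cand:
        for j, r in enumerate(ref):
            kind = match_type(c, r)
            if kind and j not in used:  # each reference word is matched at most once
                used.add(j)
                matches.append((c, r, kind))
                break
    return matches, len(matches) / len(cand), len(matches) / len(ref)

matches, precision, recall = align("The kid runs.", "The boy is running.")
print(matches)
print(precision, recall)  # 1.0 0.75
```

All three candidate words find a partner, whereas an exact-match metric would credit only "the".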

4. BERTScore

BERTScore uses contextual embeddings from a pretrained model such as BERT to compare sentences by meaning, not just exact words.

Steps:

- Convert each token into an embedding

- Match each candidate token to its most similar reference token (and vice versa) using cosine similarity

- Compute precision, recall, F1 based on semantic closeness

Example :

Reference:

The man purchased a new car.

Generated:

He bought a new vehicle.

BLEU/ROUGE = low

BERTScore = high

Because:

- “purchased” ≈ “bought”

- “car” ≈ “vehicle”

- “man” ≈ “he”
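The greedy matching step can be sketched with NumPy. The 3-dimensional vectors below are made-up placeholders chosen so that paired words point in similar directions; a real run would use contextual embeddings from a pretrained model, and the reference implementation offers extras such as idf weighting that are left out here.

```python
import numpy as np

def greedy_bertscore(cand_emb: np.ndarray, ref_emb: np.ndarray):
    """cand_emb: (n_cand, d) and ref_emb: (n_ref, d) token embeddings."""
    cand = cand_emb / np.linalg.norm(cand_emb, axis=1, keepdims=True)
    ref = ref_emb / np.linalg.norm(ref_emb, axis=1, keepdims=True)
    sim = cand @ ref.T                   # pairwise cosine similarities
    precision = sim.max(axis=1).mean()   # best reference match for each candidate token
    recall = sim.max(axis=0).mean()      # best candidate match for each reference token
    f1 = 2 * precision * recall / (precision + recall)
    return float(precision), float(recall), float(f1)

# Placeholder embeddings (assumptions), one row per token.
ref_emb = np.array([[1.0, 0.1, 0.0], [0.0, 1.0, 0.1], [0.1, 0.0, 1.0]])   # man, purchased, car
cand_emb = np.array([[0.9, 0.2, 0.0], [0.1, 0.9, 0.0], [0.0, 0.1, 0.9]])  # he, bought, vehicle
precision, recall, f1 = greedy_bertscore(cand_emb, ref_emb)
print(round(precision, 3), round(recall, 3), round(f1, 3))
```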

Q.3 Can you explain different prompting techniques?

1. Zero-Shot Prompting :

You ask the model a question without giving any example.

Example: “What is the capital of India?”

2. Few-Shot Prompting :

You provide 2–3 examples to show the model how to answer.

This helps it learn the pattern before solving your actual query.
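A few-shot prompt is just the examples followed by the real query in the same format. A minimal sketch, where the "Review / Sentiment" layout and the function name are illustrative choices rather than a required format:

```python
def build_few_shot_prompt(examples, query: str) -> str:
    """examples: list of (input_text, expected_output) pairs shown before the real query."""
    blocks = [f"Review: {text}\nSentiment: {label}" for text, label in examples]
    # The final block leaves the label empty so the model completes it.
    blocks.append(f"Review: {query}\nSentiment:")
    return "\n\n".join(blocks)

examples = [
    ("I loved the battery life.", "Positive"),
    ("The screen cracked in a week.", "Negative"),
]
prompt = build_few_shot_prompt(examples, "The product stopped working after two days.")
print(prompt)
```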

3. Chain of Thought (CoT) Prompting

You instruct the AI to think step-by-step instead of giving the final answer directly. This is extremely useful for math, logical reasoning, and complex decision-making.

How does CoT work?

Example: Without CoT

Question:

“I had 5 apples. I ate 2 and bought 3 more. How many apples do I have now?”

AI (Direct Answer): “You have 6 apples.”

Example: With Chain of Thought

Question:

“I had 5 apples. I ate 2 and bought 3 more. How many apples do I have now?

Let’s think step by step.”

AI (CoT Response):

- You initially had 5 apples.

- You ate 2 → remaining apples = 5 − 2 = 3

- You bought 3 more → total apples = 3 + 3 = 6

Final Answer: You have 6 apples.

Two Types of Chain-of-Thought Prompting

1. Zero-Shot CoT : You simply add the magic phrase:

“Let’s think step by step.”

This alone often improves accuracy noticeably on multi-step reasoning problems.

2. Few-Shot CoT : You provide a worked-out example showing how to solve a problem step-by-step.

The AI will follow the same reasoning pattern for the next question.
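Both variants are simple string templates. A minimal sketch, with helper names and the "Q:/A:" layout as illustrative assumptions:

```python
COT_TRIGGER = "Let's think step by step."

def zero_shot_cot(question: str) -> str:
    # Zero-shot CoT: append the trigger phrase to the question.
    return f"{question}\n{COT_TRIGGER}"

def few_shot_cot(worked_examples, question: str) -> str:
    """worked_examples: list of (question, step_by_step_reasoning, final_answer)."""
    parts = [
        f"Q: {q}\nA: {steps} Final Answer: {answer}"
        for q, steps, answer in worked_examples
    ]
    parts.append(f"Q: {question}\nA:")
    return "\n\n".join(parts)

apples = (
    "I had 5 apples. I ate 2 and bought 3 more. How many apples do I have now?",
    "Start with 5. After eating 2: 5 - 2 = 3. After buying 3: 3 + 3 = 6.",
    "6 apples.",
)
zero_shot_prompt = zero_shot_cot(apples[0])
few_shot_prompt = few_shot_cot(
    [apples],
    "I had 10 pens. I lost 4 and bought 2 more. How many pens do I have now?",
)
print(few_shot_prompt)
```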

4. Role Prompting :

You assign the AI a specific role or identity.

Example: “You are a senior data scientist. Analyze this report.”

5. Iterative Prompting :

You start with a simple prompt, review the result, and then refine the prompt step-by-step until you get the perfect output.

Q.4 What are the different ways to evaluate the responses of a RAG-based chatbot?

Retrieval-Augmented Generation (RAG) systems do two major things:

- Retrieve relevant documents

- Generate an answer using those documents

So evaluation must measure retrieval quality + generation quality.

To standardize this, we use RAGAS (Retrieval Augmented Generation Assessment), a framework that provides automated metrics for evaluating RAG pipelines.

1. Answer Relevancy

Check if the generated answer is relevant to the user’s question.

Mechanism:

- Convert the query and the generated answer into embeddings using a sentence transformer (e.g., all-MiniLM-L6-v2).

- Compute cosine similarity between them.

- A high score means the semantic content matches strongly.
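The similarity step itself is a one-liner once the embeddings exist. In the sketch below the vectors are hand-written placeholders; in practice they would come from a sentence-embedding model. This illustrates the mechanism as described here and is not the RAGAS implementation.

```python
import numpy as np

def cosine_similarity(a, b) -> float:
    a, b = np.asarray(a, dtype=float), np.asarray(b, dtype=float)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

# Placeholder embeddings (assumptions), standing in for real model outputs.
query_vec = [0.2, 0.7, 0.1]
on_topic_answer_vec = [0.25, 0.65, 0.05]
off_topic_answer_vec = [0.9, 0.05, 0.4]

on_topic = cosine_similarity(query_vec, on_topic_answer_vec)
off_topic = cosine_similarity(query_vec, off_topic_answer_vec)
print(round(on_topic, 3), round(off_topic, 3))
```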

2. Answer Correctness

Check if the answer is factually correct w.r.t. ground truth.

Mechanism:

Uses semantic similarity between:

- Generated Answer embeddings

- Ground Truth Answer embeddings

3. Context Recall:

Check how much of the necessary ground-truth context was retrieved.

Mechanism:

- Convert ground-truth context pieces into embeddings.

- Convert retrieved context into embeddings.

- Compute the maximum similarity for each ground-truth chunk against the retrieved contexts.

4. Context Precision

Check how many retrieved chunks were actually relevant.

Mechanism:

For each retrieved chunk:

- Find the highest similarity between retrieved chunk and ground truth chunk.

- Mark as relevant if similarity > threshold (e.g., 0.5)
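Context recall and context precision can both be read off one similarity matrix. The sketch below follows the embedding-based description given here; applying the threshold to recall as well is an assumption of this sketch, and the 2-dimensional vectors are placeholders for real chunk embeddings.

```python
import numpy as np

def context_recall_precision(gt_emb: np.ndarray, retrieved_emb: np.ndarray, threshold: float = 0.5):
    gt = gt_emb / np.linalg.norm(gt_emb, axis=1, keepdims=True)
    ret = retrieved_emb / np.linalg.norm(retrieved_emb, axis=1, keepdims=True)
    sim = gt @ ret.T  # shape: (n_ground_truth, n_retrieved)
    # Recall: share of ground-truth chunks whose best retrieved match clears the threshold.
    recall = float((sim.max(axis=1) > threshold).mean())
    # Precision: share of retrieved chunks whose best ground-truth match clears the threshold.
    precision = float((sim.max(axis=0) > threshold).mean())
    return recall, precision

gt_emb = np.array([[1.0, 0.0], [0.0, 1.0]])                      # 2 ground-truth chunks
retrieved_emb = np.array([[1.0, 0.1], [0.9, 0.2], [-1.0, 0.05]])  # 3 retrieved chunks
recall, precision = context_recall_precision(gt_emb, retrieved_emb)
print(recall, round(precision, 3))  # 0.5 0.667
```

Here only the first ground-truth chunk is covered (recall 0.5), and two of the three retrieved chunks are relevant (precision 2/3).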

5. Faithfulness

Check if the answer is grounded in retrieved context or if the model added hallucinated facts.

Mechanism :

Step 1: Identify factual units

The LLM breaks the generated answer into atomic facts, like:

- “Newton discovered gravity.”

- “He did it in 1687.”

Step 2: Check grounding

For each claim:

- LLM checks:

“Does this fact exist in the retrieved context?”

If claim ∉ context → Hallucinated.

If claim ∈ context → Grounded.
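The two steps reduce to a ratio of grounded claims to total claims. In this sketch the LLM judgment is replaced by a pluggable `is_supported` callable, and `toy_judge` is a naive substring check included only so the example runs. The third claim is a deliberately invented hallucination.

```python
def faithfulness_score(claims, context, is_supported):
    """Return (share of grounded claims, list of hallucinated claims).

    is_supported(claim, context) -> bool stands in for the LLM grounding check.
    """
    if not claims:
        return 0.0, []
    hallucinated = [claim for claim in claims if not is_supported(claim, context)]
    return 1 - len(hallucinated) / len(claims), hallucinated

# Toy judge (assumption): substring match instead of an LLM call.
def toy_judge(claim: str, context: str) -> bool:
    return claim.lower().rstrip(".") in context.lower()

context = "Newton discovered gravity. He did it in 1687."
claims = [
    "Newton discovered gravity.",
    "He did it in 1687.",
    "He won a Nobel Prize for it.",  # not in the context, so flagged as hallucinated
]
score, hallucinated = faithfulness_score(claims, context, toy_judge)
print(round(score, 3), hallucinated)
```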

How to use RAGAS?

Step 1 — Create an evaluation dataset

You need:

- query

- retrieved_contexts

- generated_answer

- ground_truth_answer
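One way to hold such a dataset is a plain list of dictionaries with a small sanity check. The field names below simply mirror the list above; the column names that RAGAS itself expects depend on the installed version, so they may need to be mapped before evaluation.

```python
REQUIRED_FIELDS = ("query", "retrieved_contexts", "generated_answer", "ground_truth_answer")

def validate_record(record: dict) -> None:
    missing = [field for field in REQUIRED_FIELDS if field not in record]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    if not isinstance(record["retrieved_contexts"], list):
        raise TypeError("retrieved_contexts should be a list of strings")

eval_dataset = [
    {
        "query": "What is the capital of India?",
        "retrieved_contexts": ["New Delhi is the capital of India."],
        "generated_answer": "The capital of India is New Delhi.",
        "ground_truth_answer": "New Delhi",
    },
]

for record in eval_dataset:
    validate_record(record)  # raises if a record is malformed
print(f"{len(eval_dataset)} record(s) ready for evaluation")
```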

Step 2 — Feed the dataset into RAGAS

RAGAS computes all metrics automatically using:

- Embedding similarity

- LLM-based scoring

- Semantic matching

Step 3 — Analyze the scores

Use the output scores to:

- Identify which questions have poor retrieval

- Identify hallucinations

- Improve prompts, chunking, retrievers, rerankers, etc.

Q.5 What are the different ways to detect hallucination in an LLM response, and if hallucinations are found, what methods can we use to mitigate them?

Here are the main methods used :

1. Faithfulness Check (Grounding Check)

Check whether each statement in the answer is present in the retrieved context.

How:

- Break answer into atomic claims

- For each claim, check: “Does context support this?”

- Used in RAGAS Faithfulness Metric

- If a claim is not found → hallucination.

2. LLM-as-a-Judge (Verification Check)

Ask another LLM (or the same LLM with a verification prompt):

“Verify if the above answer is factually correct using only the given context.”

If the model flags unsupported statements → hallucination.

Ways to Mitigate Hallucinations :

1. Improve Retrieval Quality (For RAG Systems)

In RAG systems, many hallucinations happen because the relevant context is missing.

Fix this by:

- Better chunking (structure aware chunking + semantic chunking)

- Hybrid retrieval (BM25 + vector search)

- Reranking retrieved documents

- Using stronger embedding models

Better retrieval → fewer hallucinations.
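For hybrid retrieval, the BM25 and vector rankings have to be merged somehow. One common option is reciprocal rank fusion (RRF), sketched below; the choice of RRF and the constant `k=60` are assumptions of this sketch, and the document ids are made up.

```python
def reciprocal_rank_fusion(rankings, k: int = 60):
    """rankings: list of ranked lists of document ids, best first."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            # Documents ranked highly by several retrievers accumulate the largest score.
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)

bm25_ranking = ["d3", "d1", "d2"]    # keyword-based results
vector_ranking = ["d1", "d4", "d3"]  # embedding-based results
fused = reciprocal_rank_fusion([bm25_ranking, vector_ranking])
print(fused)  # ['d1', 'd3', 'd4', 'd2']
```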

2. Force the Model to Stay Grounded

Use grounding instructions:

Prompt:

“Answer only using the provided context. If the answer is not in the context, say ‘Not Available in the context’.”

This alone often reduces hallucination noticeably.

3. Apply LLM Verification Layer

Add a second LLM to validate or rewrite the answer.

Example pipeline:

- Model generates answer

- Second model checks fact accuracy

- If wrong → regenerate or correct the answer

Used in enterprise production systems.
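The pipeline above can be sketched as a small retry loop. `generate` and `verify` are placeholders for the two LLM calls; the stubs below are toy stand-ins so the example runs, and the fallback string is an illustrative choice.

```python
def answer_with_verification(question, context, generate, verify, max_attempts: int = 3):
    """generate(question, context) -> str; verify(answer, context) -> bool.

    Both callables stand in for LLM calls (assumptions of this sketch).
    """
    answer = generate(question, context)
    for _ in range(max_attempts - 1):
        if verify(answer, context):
            return answer
        answer = generate(question, context)  # regenerate after a failed check
    return answer if verify(answer, context) else "Not Available in the context"

# Toy stubs: the first draft is wrong, the second one is grounded.
drafts = iter(["Paris is in Germany.", "Paris is in France."])

def toy_generate(question, context):
    return next(drafts)

def toy_verify(answer, context):
    return answer in context

context = "Paris is in France."
result = answer_with_verification("Where is Paris?", context, toy_generate, toy_verify)
print(result)  # Paris is in France.
```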

4. Temperature Control

Lower temperature = less randomness in sampling, which tends to reduce (but not eliminate) hallucinations.
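Temperature works by dividing the logits before the softmax, so a low value concentrates probability on the top token. A minimal NumPy sketch with made-up logits:

```python
import numpy as np

def softmax_with_temperature(logits, temperature: float) -> np.ndarray:
    scaled = np.asarray(logits, dtype=float) / temperature
    scaled -= scaled.max()  # numerical stability
    probs = np.exp(scaled)
    return probs / probs.sum()

logits = [2.0, 1.0, 0.5]  # illustrative next-token scores
low = softmax_with_temperature(logits, 0.2)   # sharply peaked on the top token
high = softmax_with_temperature(logits, 1.5)  # flatter, more varied sampling
print(low.round(3), high.round(3))
```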

5. Add Citations or Traceability

Ask model:

“Provide the exact context sentence used to generate each part of the answer.”

If the model is forced to cite the source of every statement, hallucination tends to decrease.

Q.6 What are the key qualities that make a prompt effective and produce high-quality responses from an LLM?

- Tell the model exactly what you want and what you don’t want.

- Provide adequate context to reduce guessing.

- Add 1–2 examples to improve accuracy.

- Give the model a role to shape its behavior.

- Tell the model to stick to the provided information.

- Encourage the model to think before answering.

- Set constraints to control the output, for example: “Answer in 3 steps”, “Tone: professional”, “Output in JSON format”.

Q.7 What is Reranking in RAG?

In RAG, the retrieval system first fetches many candidate documents related to the query. But not all retrieved documents are equally relevant.

Reranking is the process of taking these retrieved documents and sorting them again with a more accurate, more semantically aware model, so that the most relevant documents appear at the top before they are passed to the LLM.

What Models Are Used as Rerankers?

The most common rerankers are cross-encoders, because they typically give the most accurate relevance score between a query and each document.

What Does a Cross Encoder Do?

A cross-encoder combines the query and document in the same input, like this:

[CLS] QUERY [SEP] DOCUMENT [SEP]

Then the transformer processes them together, so every query token can attend to every document token.

This allows:

- Deeper semantic matching

- Contextual interaction

Why Are Bi-Encoders Not Enough?

A bi-encoder creates two separate embeddings:

- One for the query

- One for each document

It then compares them using dot product or cosine similarity. Because each text is encoded on its own, detailed interactions between query and document tokens are missed.

Cross-encoders, by contrast, process both sentences together in a single input, allowing the model to analyze interactions between every word pair through the attention mechanism. This gives the model full visibility into how words in Sentence A relate to words in Sentence B. For example:

Sentence A:

“The bank raised interest rates.”

Sentence B1:

“The financial institution increased loan rates.”

Sentence B2:

“The river overflowed near the bank.”

Bi-Encoder Output:

It encodes A, B1, B2 separately and compares vectors.

- A ↔ B1 → similarity = medium/high (semantic match)

- A ↔ B2 → similarity = medium

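Because the word “bank” appears in both A and B2, the two embeddings can end up closer than their meanings warrant.

The bi-encoder never sees the two sentences together, so it cannot directly compare which sense of “bank” each one uses.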

Cross-Encoder Output:

Cross-Encoder reads them together, so:

- With B1:

“bank ↔ financial institution” → strong match

“interest rates ↔ loan rates” → strong match

➝ High similarity

- With B2:

“bank ↔ river bank” → mismatch

“interest rates ↔ water overflow” → mismatch

➝ Very low similarity

Cross-Encoder correctly identifies the right meaning of “bank”.

How Does a Cross-Encoder Work?

If you have 10 documents, you must call the Cross-Encoder 10 separate times (or send them as a batch of 10 pairs). The process looks like this:

- Input 1: [Query] + [Document 1] → Score (e.g., 0.95)

- Input 2: [Query] + [Document 2] → Score (e.g., 0.42)

- Input 3: [Query] + [Document 3] → Score (e.g., 0.88)

… and so on until Document 10.

Finally, the pipeline sorts the documents by these scores. The document with the highest score (e.g., Document 1) goes to the top.
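The score-then-sort loop can be sketched as follows. `score_pair` stands in for the cross-encoder, and `toy_score` is a word-overlap placeholder used only so the sketch runs without a model; it does not capture meaning the way a real cross-encoder would.

```python
def rerank(query: str, documents, score_pair, top_k: int = 3):
    """score_pair(query, document) -> float stands in for a cross-encoder."""
    # One scoring call per (query, document) pair.
    scored = [(score_pair(query, doc), doc) for doc in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]

# Toy scorer (assumption): fraction of query words found in the document.
def toy_score(query: str, document: str) -> float:
    q, d = set(query.lower().split()), set(document.lower().split())
    return len(q & d) / len(q)

documents = [
    "The river overflowed near the bank",
    "The bank raised interest rates",
    "Weather was sunny today",
]
ranked = rerank("bank interest rates", documents, toy_score)
for score, doc in ranked:
    print(f"{score:.2f}  {doc}")
```

With a real model, the scoring callable would wrap a cross-encoder (for example, the `CrossEncoder` class in sentence-transformers) and would usually score all pairs in one batch.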
