Why Your AI Product Isn’t Software: The New Rules of Uncertainty, Evidence, and Economics

Last Updated on March 3, 2026 by Editorial Team

Author(s): Sasha Apartsin

Originally published on Towards AI.

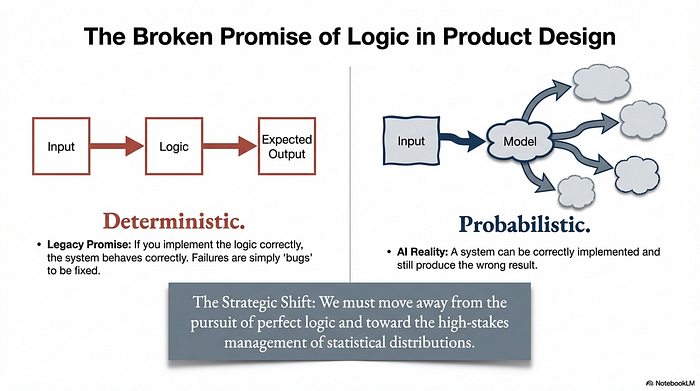

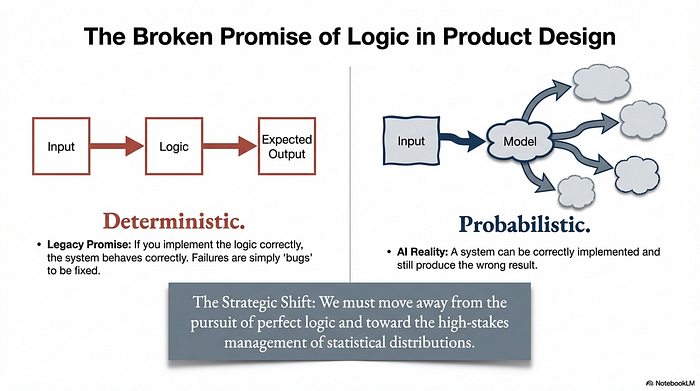

1. Introduction: The Broken Promise of Logic

Traditional software development is built on a comforting promise: if you implement the logic correctly, the system will behave correctly. In this deterministic world, failures are “bugs”: deviations from the intended logic that can be fixed by correcting the code.

AI breaks this promise entirely. In an AI-driven product, a system can be “correctly implemented” and still produce the wrong result because its core behavior is probabilistic. This represents a fundamental shift in the craft of product building. We are moving away from the pursuit of perfect logic and toward the high-stakes management of statistical distributions. To win in this space, we must stop chasing “correctness” and start mastering the triad of uncertainty, evidence, and economics.

2. Takeaway 1: Design for Survivable Failure, Not Perfect Accuracy

Perhaps the single greatest failure point for AI leaders is treating model errors as edge cases to be squashed. In a probabilistic system, errors are not bugs; they are an expected part of normal operation. If your product’s value proposition collapses when the model hallucinates, you haven’t built a business; you’ve built a fragile experiment.

The winning move is making errors survivable. This requires a UI that openly communicates uncertainty through confidence scores, source citations, and transparent assumptions.

Strategist’s Perspective: The UI Paradigm Shift. For decades, designers have been trained in error prevention — disabling buttons or validating inputs to keep the user on the “happy path.” AI demands a radical pivot toward error recovery. This is often a difficult psychological shift for product teams because it requires relinquishing total control. You must design for the “unhappy path” as if it were a core feature, focusing on how quickly a user can detect an error, recover, and still extract value.

- Graceful Refusal: The system must know when to abstain, ask a question, or escalate to a human.

- Progressive Autonomy: Don’t automate on day one. Start with decision support and earn the right to automate through statistical evidence.
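To make graceful refusal and progressive autonomy concrete, here is a minimal Python sketch of a confidence-based router. It assumes the model emits a confidence score between 0 and 1 that has been reasonably calibrated; the `Policy` thresholds and the `Action` names are hypothetical choices for illustration, not values from any real system.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    AUTO_APPLY = "auto_apply"  # act without human review
    SUGGEST = "suggest"        # decision support: show the answer, user confirms
    CLARIFY = "clarify"        # ask the user a follow-up question
    ESCALATE = "escalate"      # hand the case off to a human


@dataclass(frozen=True)
class Policy:
    # Hypothetical thresholds; a real system would calibrate them on held-out data.
    auto_threshold: float = 0.95
    suggest_threshold: float = 0.70
    clarify_threshold: float = 0.40
    # Progressive autonomy: automation stays off until evidence justifies it.
    automation_enabled: bool = False


def route(confidence: float, policy: Policy) -> Action:
    """Map a model confidence score to a survivable action."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    if policy.automation_enabled and confidence >= policy.auto_threshold:
        return Action.AUTO_APPLY
    if confidence >= policy.suggest_threshold:
        return Action.SUGGEST
    if confidence >= policy.clarify_threshold:
        return Action.CLARIFY
    return Action.ESCALATE
```

On day one, with `automation_enabled=False`, even a 0.97-confidence answer is only suggested to the user. Flipping that flag is the “earn the right to automate” step, and it should happen only after evaluation shows that the high-confidence band is reliable enough.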

3. Takeaway 2: Feasibility is a Data Metric, Not a Code Prototype

In classic software, logic generalizes: if a prototype works for 10 cases, it likely works for 10,000. In AI, a prototype proves almost nothing unless it is anchored by representative data. Feasibility is not a functional property of your code; it is a statistical property of your data distribution.

Redefining “Feasible” in AI:

- Target Error Rates: You must define acceptable error rates globally and per “critical sub-slice” — the specific user segments or use cases where failure is most damaging.

- Confidence in Estimation: Feasibility means you can estimate, with statistical confidence, whether your current data can meet those target rates.

- Continuous Evaluation: Readiness isn’t a one-time checkmark; it’s a rolling measurement system that monitors for drift as real-world conditions evolve.
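One way to make “confidence in estimation” operational is to judge each slice by a pessimistic bound on its error rate rather than by the raw observed rate. The sketch below uses the Wilson score interval, a standard interval for binomial proportions. The slice names, counts, and targets are made-up examples, and `SliceResult`, `wilson_upper`, and `feasibility_report` are hypothetical helpers.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class SliceResult:
    name: str
    errors: int    # wrong outputs observed in this slice
    total: int     # labeled examples evaluated in this slice
    target: float  # maximum acceptable error rate for this slice


def wilson_upper(errors: int, total: int, z: float = 1.96) -> float:
    """Upper end of the Wilson score interval for an error rate (z=1.96 ~ 95%, two-sided)."""
    if total <= 0:
        return 1.0  # no evidence at all: assume the worst
    p = errors / total
    denom = 1 + z * z / total
    center = (p + z * z / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total)) / denom
    return min(1.0, center + half)


def feasibility_report(slices: list[SliceResult]) -> dict[str, bool]:
    """A slice is feasible only if even the pessimistic error bound meets its target."""
    return {s.name: wilson_upper(s.errors, s.total) <= s.target for s in slices}


# Hypothetical evaluation counts, for illustration only.
slices = [
    SliceResult("all_users", errors=40, total=2000, target=0.03),
    SliceResult("medical_queries", errors=1, total=60, target=0.03),
]
report = feasibility_report(slices)
```

In this made-up example, the overall population (2% observed over 2,000 cases) clears its 3% target. The medical slice observes only 1 error in 60 cases, about 1.7%, yet it is reported as not feasible: with so few examples, the upper bound is roughly 8.9%. The remedy is more representative data for that slice, not more code, and rerunning the same report on fresh production samples is one simple form of rolling measurement.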

Strategist’s Perspective: The Data Milestone. Product leaders often treat data as an implementation detail delegated to engineering. This is a strategic error. Data availability and quality must be treated as a first-class milestone in the product lifecycle. A system isn’t production-ready because the demo looked “magical” in the boardroom; it’s ready when you can defend its error profile across critical sub-slices with empirical evidence.

4. Takeaway 3: Your Roadmap Should Prioritize Learning Loops Over Features

Standard roadmaps are lists of features to be shipped. AI roadmaps must be frameworks for risk reduction. This means treating data as a “product surface” that requires as much investment as the UI.

While any competitor can buy access to the same high-performance APIs you use, they cannot easily replicate your proprietary evaluation and feedback loops. By prioritizing instrumentation and labeling workflows, you turn every user interaction into evaluation and training signal, and that signal compounds into a competitive advantage.

“If your product doesn’t get measurably better with use, then competitors can catch up by buying the same models you do.”

Strategist’s Perspective: The Invisible Data Debt. In AI, technical debt isn’t just messy code; it’s an invisible “data debt” that prevents your model from ever maturing beyond the demo phase. If your product doesn’t get measurably better every week, you are building on sand. Your real competitive moat isn’t the model you use today; it’s the compounding system of data and feedback that makes your product harder to replicate with every passing month.
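A learning loop can start very small. The sketch below assumes, for illustration only, that a user accepting an output marks it as correct and that a user edit supplies the correct label. `FeedbackLog` and `accuracy` are hypothetical names, and exact string matching stands in for whatever task-specific metric a real product would need.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class FeedbackLog:
    """Hypothetical store that turns user interactions into labeled evaluation data."""
    examples: list[tuple[str, str]] = field(default_factory=list)  # (input, correct output)

    def record(self, prompt: str, model_output: str, user_correction: Optional[str]) -> None:
        # Assumption: an accepted output is correct, and an edited output supplies the label.
        label = user_correction if user_correction is not None else model_output
        self.examples.append((prompt, label))


def accuracy(model: Callable[[str], str], examples: list[tuple[str, str]]) -> float:
    """Fraction of logged examples the model reproduces exactly."""
    if not examples:
        raise ValueError("no labeled examples yet")
    correct = sum(model(x) == y for x, y in examples)
    return correct / len(examples)
```

Scoring every model version against the same accumulated examples is what turns “gets measurably better with use” into a number you can track week over week. Acceptances are a noisy signal, so in practice you would likely also keep a separately reviewed, held-out set; otherwise a model can score well simply by repeating its own past accepted outputs.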

5. Takeaway 4: Intelligence is Now a Variable Marginal Cost

Legacy SaaS economics scale with user seats and storage. AI economics scale with delivered intelligence. Every token generated, every tool call, and every “selective verification” step adds to the marginal cost. This creates a new reality where quality has a direct, fluctuating price tag.

The Strategic Quality-Cost Curve: As a product strategist, you must navigate the trade-offs between accuracy, latency, and cost. A more expensive model might offer 2% better accuracy but add 3 seconds of latency and triple your cloud bill. Is that 2% worth the degradation in user experience and margin?
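The quality-cost question can be turned into simple arithmetic. The sketch below prices each option as inference spend plus the expected cost of handling a wrong answer (a human fix, a refund, a support ticket). All figures are hypothetical: it reads “2% better accuracy” as two percentage points and “triple your cloud bill” as three times the per-request cost, and it leaves latency out of the formula because latency is a user-experience constraint rather than a line item.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    accuracy: float          # fraction of requests answered correctly
    cost_per_request: float  # inference spend in dollars (tokens, tool calls, verification)
    latency_s: float         # typical response time in seconds (tracked, not priced)


def expected_cost(m: ModelOption, cost_per_error: float) -> float:
    """Inference spend plus the expected downstream cost of a wrong answer."""
    return m.cost_per_request + (1 - m.accuracy) * cost_per_error


def break_even_error_cost(cheap: ModelOption, premium: ModelOption) -> float:
    """Per-error cost above which the premium model's extra accuracy pays for itself."""
    accuracy_gain = premium.accuracy - cheap.accuracy
    if accuracy_gain <= 0:
        return float("inf")  # no accuracy gain: premium never pays off on cost alone
    return (premium.cost_per_request - cheap.cost_per_request) / accuracy_gain


# Hypothetical options: +2 points of accuracy, 3x the spend, +3 seconds of latency.
small = ModelOption("small", accuracy=0.90, cost_per_request=0.002, latency_s=1.0)
large = ModelOption("large", accuracy=0.92, cost_per_request=0.006, latency_s=4.0)
```

With these numbers, the premium model only pays for itself when a single error costs more than about $0.20. At $0.50 per error it is cheaper overall; at $0.10 it is not. In both cases you still have to decide whether users will tolerate the extra 3 seconds.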

- Intelligence Tiers: Move beyond marketing bundles to tiers defined by performance metrics (e.g., a “Pro” tier that uses selective verification for higher reliability).

- Cost-Aware Architecture: Managing the cloud bill is now a core part of the user experience. This includes routing simpler tasks to smaller models and implementing aggressive caching.
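The two cost-aware patterns in the list above, routing and caching, fit in a few lines. This is a minimal sketch under stated assumptions: the models are plain functions standing in for real API clients, the per-call prices are invented, and the word-count rule is a toy difficulty heuristic that a real system might replace with a trained classifier or a confidence signal. `CostAwareRouter` is a hypothetical name.

```python
from typing import Callable

Model = Callable[[str], str]  # stand-in for a real model client


class CostAwareRouter:
    """Route simple prompts to a cheaper model and serve exact repeats from a cache."""

    def __init__(self, small: Model, large: Model,
                 small_cost: float, large_cost: float, max_words: int = 20) -> None:
        self.small, self.large = small, large
        self.small_cost, self.large_cost = small_cost, large_cost
        self.max_words = max_words       # toy difficulty threshold (assumption)
        self.cache: dict[str, str] = {}  # exact-match cache, unbounded for brevity
        self.spend = 0.0                 # running inference cost in dollars

    def is_simple(self, prompt: str) -> bool:
        # Placeholder heuristic: short prompts are treated as easy.
        return len(prompt.split()) <= self.max_words

    def answer(self, prompt: str) -> str:
        if prompt in self.cache:         # cache hit: no marginal model cost
            return self.cache[prompt]
        if self.is_simple(prompt):
            result, cost = self.small(prompt), self.small_cost
        else:
            result, cost = self.large(prompt), self.large_cost
        self.spend += cost
        self.cache[prompt] = result
        return result


router = CostAwareRouter(
    small=lambda p: "small-model answer",
    large=lambda p: "large-model answer",
    small_cost=0.001, large_cost=0.010, max_words=5,
)
router.answer("What is 2+2?")  # 3 words: routed to the small model
router.answer("What is 2+2?")  # cache hit: spend unchanged
router.answer("Summarize this forty page contract and flag risky clauses")  # large model
print(f"${router.spend:.3f}")  # $0.011
```

Exact-match caching is the simplest variant; more aggressive schemes normalize prompts or match semantically similar ones, trading some risk of stale or mismatched answers for a higher hit rate. Tracking `spend` per request is also the raw material for tiers defined by performance and cost.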

Strategist’s Perspective: The Bill as UX. In the AI era, your cloud bill is a product feature. If your AI features aren’t cost-aware, you will eventually be forced to choose between a smart but expensive product and a cheap, fast product that isn’t smart enough to be useful. Balancing the intelligence-cost curve is the new frontier of product management.

6. Conclusion: Building for the Long Haul

Building an AI product is not “software engineering plus a model.” It is a distinct discipline built on three pillars:

- Error: Expect it, design for it, and build trust through recovery.

- Evidence: Treat data distributions as the only credible measure of readiness.

- Economics: Productize your spend by navigating the intelligence-cost curve.

If your roadmap consists primarily of features that a competitor can replicate by calling the same API, your product is fragile. Lasting value is found only in compounding systems of data, evaluation, and cost-aware intelligence.

Final Thought: If your API provider doubled their prices or went offline tomorrow, would your product have any intrinsic value left? Are you building a static feature set or a compounding data moat that becomes more unassailable with every user interaction?

About the author

Sasha Apartsin (PhD) is an AI scientist and faculty member at the Holon Institute of Technology. His research focuses on deep generative models, synthetic data generation for training and evaluation, and anomaly detection, with applications in NLP, computer vision, and robotics. He brings over three decades of industry experience across roles from developer and architect to VP/Head of AI, including leadership positions in AI for public safety, finance, document management, and vehicle telematics, and has founded and co-founded startups and innovation initiatives. www.apartsin.com


Published via Towards AI


Note: Article content contains the views of the contributing authors and not Towards AI.