Your Sentence Has a Secret Structure. Here’s How GPT Sees It.

Last Updated on March 3, 2026 by Editorial Team

Author(s): Rohini Joshi

Originally published on Towards AI.

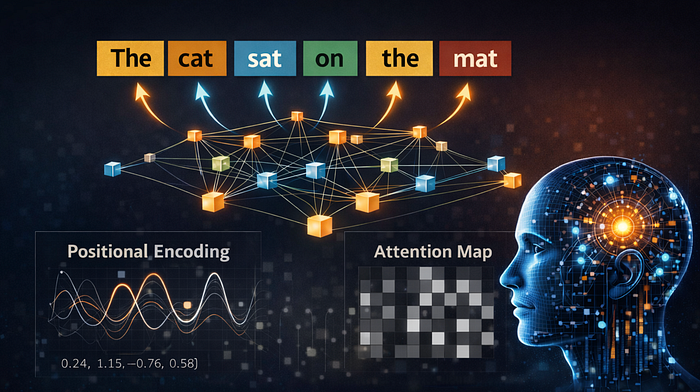

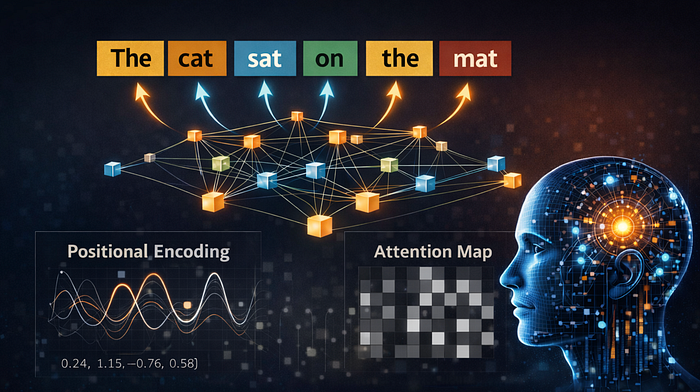

The sentences “dog bites man” and “man bites dog” contain exactly the same words. A Transformer without positional encoding would treat them as identical. Here’s how modern LLMs learn word order and then decide which words actually matter.

The previous article explained how embeddings convert words into numbers: vectors in a high-dimensional space where distance reflects meaning. But embeddings alone have a problem. They represent individual words in isolation. They do not capture where a word appears in a sentence, or how it relates to the words around it.

Two mechanisms fix this. Positional encoding tells the model where each word sits. Attention tells the model which words matter for understanding each other word. Together, they are what make Transformers work.

Part 1: Positional Encoding: Teaching Word Order to a Model

The Problem: Without Order, Words Are Just a Bag

Recurrent neural networks (RNNs and LSTMs) process words one at a time, left to right. Word order is built into the architecture: the model sees “the” before “cat” before “sat” because it literally processes them in sequence.

Transformers do not work this way. They process all words simultaneously, in parallel. This makes them much faster to train, but it creates a fundamental problem: without intervention, a Transformer has no idea that “the” comes before “cat” which comes before “sat.” Every word is just a floating vector with no address.

Consider these two sentences:

- The cat sat on the mat

- The mat sat on the cat

Both sentences contain exactly the same word embeddings, with each word appearing the same number of times. Without positional information, the two sentences are mathematically indistinguishable to the model. That is obviously unacceptable: one describes a normal cat and the other describes a very unusual mat.
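The sketch below makes the “bag of words” problem concrete. It makes several simplifying assumptions:

- a toy five-word vocabulary with random embeddings;
- a stripped-down self-attention that uses the embeddings directly as queries, keys, and values;
- hypothetical helper names (`toy_self_attention` and `sinusoidal_pe`), which are not from any library.

Without positions, “sat” receives exactly the same output vector in both sentences. Adding sinusoidal position vectors (the scheme described below) breaks the tie.

```python
import numpy as np

# Hypothetical toy vocabulary with random embeddings (not from any trained model)
rng = np.random.default_rng(0)
vocab = {"the": 0, "cat": 1, "sat": 2, "on": 3, "mat": 4}
d = 8
emb = rng.normal(size=(len(vocab), d))

def toy_self_attention(X):
    """Attention with identity Q/K/V projections, just to expose order-blindness."""
    scores = X @ X.T / np.sqrt(X.shape[1])
    w = np.exp(scores - scores.max(axis=1, keepdims=True))
    w /= w.sum(axis=1, keepdims=True)
    return w @ X

def sinusoidal_pe(n, d):
    """Sinusoidal position vectors for positions 0..n-1 (d must be even)."""
    pos = np.arange(n)[:, None]
    div = 10000 ** (np.arange(0, d, 2) / d)
    enc = np.zeros((n, d))
    enc[:, 0::2] = np.sin(pos / div)
    enc[:, 1::2] = np.cos(pos / div)
    return enc

s1 = "the cat sat on the mat".split()
s2 = "the mat sat on the cat".split()
X1 = emb[[vocab[w] for w in s1]]
X2 = emb[[vocab[w] for w in s2]]

# Without positions: "sat" (index 2 in both sentences) gets the same output vector
no_pos_same = np.allclose(toy_self_attention(X1)[2], toy_self_attention(X2)[2])

# With position vectors added, the two sentences are no longer interchangeable
toy_pe = sinusoidal_pe(len(s1), d)
with_pos_same = np.allclose(toy_self_attention(X1 + toy_pe)[2],
                            toy_self_attention(X2 + toy_pe)[2])

print("Without positional encoding, same output for 'sat':", no_pos_same)
print("With positional encoding, same output for 'sat':", with_pos_same)
```

The identity projections are a simplification. Real models use learned Q, K, V matrices, but the order-blindness is the same: without positions, attention only sees which vectors are present, not where they sit.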

The Solution: Add Position to the Embedding

The fix is elegant. Before feeding embeddings into the Transformer, a positional encoding vector is added to each word’s embedding. This vector encodes the word’s position in the sequence. After the addition, the embedding for “cat” in position 2 is numerically different from “cat” in position 5, even though the word is the same.

final_embedding = word_embedding + positional_encoding

That’s it. One addition. But the details of how the positional encoding is constructed make all the difference.

Sinusoidal Positional Encoding

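The original “Attention Is All You Need” paper used a mathematical approach based on sine and cosine waves at different frequencies. For each position and each dimension (each of the 300 numbers in the embedding vector from the previous article), the encoding is computed as:

$$PE_{(pos,\,2i)} = \sin\left(\frac{pos}{10000^{2i/d}}\right), \qquad PE_{(pos,\,2i+1)} = \cos\left(\frac{pos}{10000^{2i/d}}\right)$$

where pos is the word's position and i indexes pairs of dimensions: even dimension 2i gets the sine, and odd dimension 2i+1 gets the cosine. d is the total embedding dimension.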
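The sinusoidal encoding sets dimension 2i to sin(pos / 10000^(2i/d)) and dimension 2i+1 to cos(pos / 10000^(2i/d)). As a quick sanity check, the sketch below evaluates that rule entry by entry for position 1 in a tiny 4-dimensional embedding. The choice d = 4 is only to keep the arithmetic readable, and `pe_value` is a hypothetical helper. With d = 4, the first pair divides by 10000^0 = 1 and the second by 10000^(2/4) = 100.

```python
import math

def pe_value(pos, dim, d):
    """One entry of the sinusoidal encoding, computed straight from the formula."""
    i = dim // 2                           # index of the sin/cos pair
    angle = pos / (10000 ** (2 * i / d))
    return math.sin(angle) if dim % 2 == 0 else math.cos(angle)

# Position 1 in a 4-dimensional embedding:
# dims 0-1 use sin(1), cos(1); dims 2-3 use sin(1/100), cos(1/100)
vec = [pe_value(1, dim, d=4) for dim in range(4)]
print(", ".join(f"{v:.5f}" for v in vec))  # 0.84147, 0.54030, 0.01000, 0.99995
```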

This looks abstract, but the intuition is simple: each pair of dimensions oscillates at a different frequency. Low dimensions change rapidly from one position to the next (capturing fine-grained position), while high dimensions change slowly (capturing broad position information). Together, they create a unique fingerprint for every position.
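The frequency claim can be checked directly. In the original paper's encoding, pair i (dimensions 2i and 2i+1) oscillates with angular frequency 1/10000^(2i/d). It therefore completes one full cycle every 2π·10000^(2i/d) positions.

This sketch assumes d_model = 64, as in the heatmap example. It prints the shortest and longest of those wavelengths. The first pair repeats every 2π ≈ 6.3 positions, while the last takes tens of thousands of positions to complete a cycle.

```python
import numpy as np

d_model = 64
pair = np.arange(d_model // 2)               # index i of each sin/cos pair
freq = 1.0 / 10000 ** (2 * pair / d_model)   # angular frequency of pair i
wavelength = 2 * np.pi / freq                # positions needed for one full cycle

print(f"pair 0  (dims 0-1):   one cycle every {wavelength[0]:.1f} positions")
print(f"pair 31 (dims 62-63): one cycle every {wavelength[-1]:,.0f} positions")
```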

```python
import numpy as np
import matplotlib.pyplot as plt

def sinusoidal_positional_encoding(max_len, d_model):
    """Generate positional encodings as described in 'Attention Is All You Need'"""
    pe = np.zeros((max_len, d_model))
    position = np.arange(max_len)[:, np.newaxis]  # shape: (max_len, 1)

    # Compute the division term: 10000^(2i/d_model) (assumes d_model is even)
    div_term = 10000 ** (np.arange(0, d_model, 2) / d_model)

    # Apply sin to even indices, cos to odd indices
    pe[:, 0::2] = np.sin(position / div_term)
    pe[:, 1::2] = np.cos(position / div_term)
    return pe

# Generate encodings for 50 positions in a 64-dimensional space
pe = sinusoidal_positional_encoding(max_len=50, d_model=64)

plt.figure(figsize=(14, 6))
plt.imshow(pe, cmap="RdBu", aspect="auto")
plt.xlabel("Embedding Dimension")
plt.ylabel("Word Position in Sentence")
plt.title("Sinusoidal Positional Encoding: Each Position Gets a Unique Pattern")
plt.colorbar(label="Value")
plt.tight_layout()
plt.savefig("positional_encoding_heatmap.png", dpi=150, bbox_inches="tight")
plt.show()
```

Each row is one word position. In the columns on the left side (low dimensions), the values flip quickly between positive and negative as you move down the rows, producing tight stripes that capture exact position. On the right side (high dimensions), the values barely change across all 50 positions, capturing broad position. Every row has a unique pattern, which is exactly what the model needs to distinguish positions.

Why Sine and Cosine?

Three properties make this design effective:

Unique positions. No two positions get the same encoding. The model can always tell position 3 from position 17.

Relative distance is learnable. The relationship between positions 5 and 8 is the same as the relationship between positions 15 and 18, or any other two positions 3 apart. This follows from a property of sinusoidal functions: for any fixed offset k, PE(pos + k) can be expressed as a linear function of PE(pos), and that function depends only on k. The model can therefore learn to detect “3 positions apart” as a pattern.

Generalizes to unseen lengths. Since the encoding is computed from a formula (not looked up from a table), it can produce an encoding for any position, including positions beyond the longest sequence seen during training.
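The linear relationship in the second property can be checked numerically. By the angle-addition identities, shifting the position by k rotates each sin/cos pair through the angle k·ω. Stacking those 2×2 rotations gives a matrix M_k that depends only on k.

This sketch uses hypothetical helpers (`sinusoidal_pe` and `shift_matrix`). It builds M_3 for a 64-dimensional encoding and checks that the same matrix maps several positions 3 steps forward. It also confirms that no two of the first 50 positions share an encoding.

```python
import numpy as np

def sinusoidal_pe(max_len, d_model):
    pos = np.arange(max_len)[:, None]
    div = 10000 ** (np.arange(0, d_model, 2) / d_model)
    enc = np.zeros((max_len, d_model))
    enc[:, 0::2] = np.sin(pos / div)
    enc[:, 1::2] = np.cos(pos / div)
    return enc

def shift_matrix(k, d_model):
    """Block-diagonal M_k with M_k @ PE(pos) == PE(pos + k) for every pos."""
    freqs = 1.0 / 10000 ** (np.arange(0, d_model, 2) / d_model)
    M = np.zeros((d_model, d_model))
    for j, w in enumerate(freqs):
        c, s = np.cos(k * w), np.sin(k * w)
        # sin(a+b) = sin a cos b + cos a sin b;  cos(a+b) = cos a cos b - sin a sin b
        M[2 * j:2 * j + 2, 2 * j:2 * j + 2] = [[c, s], [-s, c]]
    return M

enc = sinusoidal_pe(50, 64)
M3 = shift_matrix(3, 64)

# One matrix maps 5 -> 8, 15 -> 18 and 40 -> 43
ok = all(np.allclose(M3 @ enc[p], enc[p + 3]) for p in (5, 15, 40))

# Pairwise distances between all 50 encodings, ignoring each position vs. itself
dists = np.linalg.norm(enc[:, None, :] - enc[None, :, :], axis=-1)
min_gap = dists[~np.eye(50, dtype=bool)].min()

print("M_3 shifts every tested position by 3:", ok)
print(f"Smallest distance between two different positions: {min_gap:.3f}")
```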

```python
# Demonstrating that relative distances are captured
pos_5 = pe[5]
pos_8 = pe[8]
pos_15 = pe[15]
pos_18 = pe[18]

# Distance between position 5 and 8
dist_5_8 = np.linalg.norm(pos_5 - pos_8)

# Distance between position 15 and 18 (same gap, different location)
dist_15_18 = np.linalg.norm(pos_15 - pos_18)

print(f"Distance between position 5 and 8: {dist_5_8:.4f}")
print(f"Distance between position 15 and 18: {dist_15_18:.4f}")
print(f"Difference: {abs(dist_5_8 - dist_15_18):.4f}")

# Distance between adjacent positions vs. far-apart positions
dist_1_2 = np.linalg.norm(pe[1] - pe[2])
dist_1_30 = np.linalg.norm(pe[1] - pe[30])

print(f"\nAdjacent positions (1,2): {dist_1_2:.4f}")
print(f"Far-apart positions (1,30): {dist_1_30:.4f}")
```

```
Distance between position 5 and 8: 3.5813
Distance between position 15 and 18: 3.5813
Difference: 0.0000

Adjacent positions (1,2): 1.4718
Far-apart positions (1,30): 5.6980
```

Nearby positions have smaller distances than far-apart positions. And the same gap (3 positions apart) produces the same distance regardless of absolute position. This is exactly the structure the model needs.
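The zero difference is not a coincidence. Take one sin/cos pair with frequency ω:

(sin(ω(p+k)) - sin(ωp))² + (cos(ω(p+k)) - cos(ωp))² = 2 - 2cos(kω)

The right-hand side does not involve p at all. Summing over pairs gives a closed form for the distance that depends only on the gap k.

The sketch below implements that closed form. `pe_distance` is a hypothetical helper, and its output should agree with the directly computed distances up to floating-point rounding.

```python
import numpy as np

def pe_distance(k, d_model):
    """Closed-form ||PE(pos + k) - PE(pos)||; the position pos never appears."""
    freqs = 1.0 / 10000 ** (np.arange(0, d_model, 2) / d_model)
    return np.sqrt(np.sum(2 - 2 * np.cos(k * freqs)))

print(f"gap 3: {pe_distance(3, 64):.4f}")
print(f"gap 1: {pe_distance(1, 64):.4f}")
```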

Learned vs. Sinusoidal Encodings

The original Transformer used the fixed sinusoidal approach described above. But later models such as BERT, GPT-2, and GPT-3 use learned positional embeddings instead: they treat position as another parameter that gets optimized during training, just like word embeddings.

Both approaches work. The sinusoidal version is mathematically principled and can be computed for any position. The learned version is more flexible and can capture position patterns specific to the training data. However, it only has entries up to a fixed maximum position (512 in BERT). The original Transformer paper reported nearly identical results with the two. Which works better in practice depends on the model and the data.
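The length difference shows up in a small sketch. It places a learned table with 512 rows (the size BERT uses) next to the sinusoidal formula. The table here is random rather than trained, and `sinusoidal_row` is a hypothetical helper.

The formula produces a vector for position 600 without complaint, while the table has no row to return. Whether a trained model makes good use of such unseen positions is a separate question that this check does not answer.

```python
import numpy as np

max_positions = 512   # learned table sized like BERT's
d_model = 64
learned_table = np.random.default_rng(0).normal(0, 0.02, size=(max_positions, d_model))

def sinusoidal_row(pos, d_model):
    """Sinusoidal encoding for a single position, computed on demand."""
    div = 10000 ** (np.arange(0, d_model, 2) / d_model)
    row = np.zeros(d_model)
    row[0::2] = np.sin(pos / div)
    row[1::2] = np.cos(pos / div)
    return row

# The formula happily produces a vector for position 600...
far_vector = sinusoidal_row(600, d_model)
print("Sinusoidal encoding at position 600:", far_vector.shape)

# ...but a learned table simply has no row for it
try:
    learned_table[600]
except IndexError:
    print("Learned table has no entry for position 600")
```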

```python
# Simulating what learned positional embeddings look like
# In reality, these are trained; here we show the concept
vocab_size = 30000
d_model = 64
max_positions = 512

# Word embedding table: each word gets a vector
word_embeddings = np.random.randn(vocab_size, d_model) * 0.02

# Position embedding table: each position gets a vector
position_embeddings = np.random.randn(max_positions, d_model) * 0.02

# For word "cat" at position 3:
word_id = 4237  # arbitrary ID for "cat"
position = 3
final_vector = word_embeddings[word_id] + position_embeddings[position]

print(f"Word embedding shape: {word_embeddings[word_id].shape}")
print(f"Position embedding shape: {position_embeddings[position].shape}")
print(f"Final vector shape: {final_vector.shape}")
print(f"\n'cat' at position 3 and 'cat' at position 7 are now different vectors.")
```

```
Word embedding shape: (64,)
Position embedding shape: (64,)
Final vector shape: (64,)

'cat' at position 3 and 'cat' at position 7 are now different vectors.
```

The key takeaway: after positional encoding, the model no longer sees isolated word meanings. It sees word meanings at specific positions. “Cat” at the start of a sentence is a different vector from “cat” at the end, and the model can use that difference.

Part 2: Attention: Deciding What Matters

The Problem Positional Encoding Doesn’t Solve

Positional encoding tells the model where words are. It does not tell the model how words relate to each other. Knowing that “bank” is at position 5 and “money” is at position 3 is useful, but the real question is: should the model use “money” to help interpret “bank”?

That question, which words should influence the interpretation of which other words, is what the attention mechanism answers.

The Core Idea

In a sentence like “The animal did not cross the street because it was too tired,” what does “it” refer to? A human instantly knows that “it” means “the animal,” because a street cannot be tired. But how would a model know?

The answer is attention. When processing “it,” the model should “attend to” (pay attention to) “animal” more than “street.” The attention mechanism computes exactly this: for every word, it produces a set of weights indicating how much every other word matters.

Query, Key, Value: The Three Roles

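The attention mechanism works by giving each word three roles at once. Each role is its own vector, produced by multiplying the word's embedding by a separate learned weight matrix: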

Query (Q): “What am I looking for?” When processing “it,” the query represents the question: “What should I attend to?”

Key (K): “What do I contain?” Every other word broadcasts a key that says what information it offers. “Animal” has a key that says “I am a noun, I am a subject, I am an entity.”

Value (V): “What information do I give?” Once attention decides that “it” should attend to “animal,” the value is the actual information that gets passed along.
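In the standard Transformer, a word does not store three separate vectors up front. Its Query, Key, and Value are each computed by multiplying the same embedding by a different learned weight matrix.

A minimal sketch follows. Both the embeddings and the matrices are random stand-ins, the names `W_Q`, `W_K`, `W_V` follow common convention, and the sizes are arbitrary.

```python
import numpy as np

rng = np.random.default_rng(0)
seq_len, d_model, d_k = 6, 16, 8   # e.g. "The cat sat on the mat": 6 words

X = rng.normal(size=(seq_len, d_model))   # one embedding per word (random stand-ins)

# Three weight matrices; random here, learned in a trained model
W_Q = rng.normal(size=(d_model, d_k))
W_K = rng.normal(size=(d_model, d_k))
W_V = rng.normal(size=(d_model, d_k))

Q = X @ W_Q   # what each word is looking for
K = X @ W_K   # what each word offers for matching
V = X @ W_V   # what each word passes along once selected

print(Q.shape, K.shape, V.shape)  # (6, 8) (6, 8) (6, 8)
```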

The process:

Step 1: Compute the similarity between the Query of one word and the Keys of all words (including itself) using a dot product. A high dot product means two words are relevant to each other; a low one means they are not. For a sentence with 6 words, this produces a 6×6 grid of scores, with every word scored against every word.

For the word “sat,” this might produce:

sat → The: 1.2

sat → cat: 4.2

sat → sat: 1.2

sat → on: 0.8

sat → the: 0.8

sat → mat: 3.4

“Cat” scores highest because subjects are closely tied to their verbs. “Mat” scores moderately because it’s the object in the scene. Function words like “The” and “on” score low.
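In matrix form, Step 1 is a single product between the stacked queries and the transposed keys. The sketch below uses random stand-in queries and keys for the six words, so its numbers will not match the illustrative scores listed above. It only shows where the 6×6 grid comes from, and that the “sat” row is that word's query dotted with every key.

```python
import numpy as np

rng = np.random.default_rng(1)
words = ["The", "cat", "sat", "on", "the", "mat"]
d_k = 8
Q = rng.normal(size=(len(words), d_k))   # random stand-ins for learned queries
K = rng.normal(size=(len(words), d_k))   # random stand-ins for learned keys

# Entry [i, j] is the dot product of word i's query with word j's key
scores = Q @ K.T
print(scores.shape)                       # (6, 6)

# The "sat" row holds the six scores for "sat" against every word
sat_row = scores[words.index("sat")]
print(np.isclose(sat_row[1], Q[2] @ K[1]))  # True: this entry is sat -> cat
```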

Step 2: Normalize these scores into weights using softmax. Raw scores can be any number; softmax converts them into a probability distribution (non-negative weights that sum to 1), so the model knows the proportion of attention each word deserves.

The raw scores above become:

sat → The: 0.03 (3%)

sat → cat: 0.62 (62%)

sat → sat: 0.03 (3%)

sat → on: 0.02 (2%)

sat → the: 0.02 (2%)

sat → mat: 0.28 (28%)

Now the model knows: “When understanding ‘sat,’ get 62% of the context from ‘cat’ and 28% from ‘mat.’ Mostly ignore the rest.”
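Softmax exponentiates each score and divides by the total. The sketch below uses hypothetical scores, not the ones above.

It also shows why implementations subtract the maximum before exponentiating. Shifting every score by the same constant leaves the weights unchanged, so the subtraction only guards against overflow in `exp`. `softmax_1d` is a hypothetical helper name.

```python
import numpy as np

def softmax_1d(x):
    # Subtracting the max leaves the result unchanged but avoids overflow in exp
    e = np.exp(x - np.max(x))
    return e / e.sum()

scores = np.array([2.0, 1.0, 0.1])   # hypothetical raw scores
probs = softmax_1d(scores)

print(probs.round(3))                                  # [0.659 0.242 0.099]
print(np.isclose(probs.sum(), 1.0))                    # True: weights sum to 1
print(np.allclose(softmax_1d(scores + 100.0), probs))  # True: only differences matter
```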

Step 3: Multiply each word’s Value by its weight and sum them up. Each word’s Value vector carries its actual information. The weights from Step 2 decide how much of each word’s information to pull in.

new "sat" = 0.03 × Value("The")

+ 0.62 × Value("cat")

+ 0.03 × Value("sat")

+ 0.02 × Value("on")

+ 0.02 × Value("the")

+ 0.28 × Value("mat")

The result is a new vector for “sat” that is no longer just the verb “to sit” in isolation. It now carries something closer to “the action performed by the cat, located on the mat.” One word’s embedding has absorbed context from the entire sentence.
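Step 3 is a weighted sum, which becomes a vector-matrix product when written compactly. The sketch below plugs in the Step 2 weights for “sat” along with random stand-in Value vectors (4 dimensions each, an arbitrary choice). It checks that the term-by-term sum and the matrix product agree.

```python
import numpy as np

words = ["The", "cat", "sat", "on", "the", "mat"]
weights_sat = np.array([0.03, 0.62, 0.03, 0.02, 0.02, 0.28])  # Step 2 weights for "sat"

rng = np.random.default_rng(2)
V = rng.normal(size=(len(words), 4))   # random stand-in Value vectors, 4 dims each

# Step 3 written out term by term...
new_sat_loop = sum(w * V[j] for j, w in enumerate(weights_sat))
# ...is the same as one vector-matrix product
new_sat = weights_sat @ V

print(np.allclose(new_sat_loop, new_sat))  # True
print(new_sat.shape)                       # (4,)
```

Stacking one such weight row per word turns this into the full `weights @ V` used in the code below.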

Scaled Dot-Product Attention in Code

```python
import numpy as np

def softmax(x, axis=-1):
    """Compute softmax along the specified axis"""
    e_x = np.exp(x - np.max(x, axis=axis, keepdims=True))
    return e_x / e_x.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V):
    """
    Q, K, V: matrices of shape (seq_len, d_k)
    Returns: attention output and attention weights
    """
    d_k = Q.shape[-1]

    # Step 1: Dot product of Q and K^T
    scores = Q @ K.T

    # Step 2: Scale by sqrt(d_k) to prevent vanishing gradients
    scores = scores / np.sqrt(d_k)

    # Step 3: Softmax to get weights (each row sums to 1)
    weights = softmax(scores)

    # Step 4: Multiply weights by V
    output = weights @ V
    return output, weights

# Simulate a 4-word sentence: "The cat sat quietly"
np.random.seed(42)
seq_len = 4
d_k = 8  # dimension of Q, K, V

# Random Q, K, V stand in for learned projections,
# so the resulting weights carry no linguistic meaning
Q = np.random.randn(seq_len, d_k)
K = np.random.randn(seq_len, d_k)
V = np.random.randn(seq_len, d_k)

output, weights = scaled_dot_product_attention(Q, K, V)

words = ["The", "cat", "sat", "quietly"]
print("Attention weights (each row = how much one word attends to others):\n")
for i, word in enumerate(words):
    print(f" {word:>10} → ", end="")
    for j, target in enumerate(words):
        print(f"{target}: {weights[i][j]:.3f} ", end="")
    print()
```

```
Attention weights (each row = how much one word attends to others):

        The → The: 0.084 cat: 0.255 sat: 0.515 quietly: 0.145
        cat → The: 0.641 cat: 0.133 sat: 0.017 quietly: 0.209
        sat → The: 0.470 cat: 0.088 sat: 0.111 quietly: 0.331
    quietly → The: 0.178 cat: 0.492 sat: 0.201 quietly: 0.130
```

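Each row of the weight matrix shows the attention distribution for one word, and each row sums to 1. Because Q, K, and V here are random rather than learned, the specific numbers carry no meaning. In this run, “cat” happens to attend mostly to “The” (0.641) and barely to “sat” (0.017). In a trained model, one would expect the opposite tendency: “cat” attending more strongly to “sat” (subjects tend to attend to their verbs) and less to “The” (a function word carrying less semantic information).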

Visualizing Attention

```python
plt.figure(figsize=(8, 6))
plt.imshow(weights, cmap="Blues", vmin=0, vmax=1)
plt.xticks(range(len(words)), words, fontsize=12)
plt.yticks(range(len(words)), words, fontsize=12)
plt.xlabel("Attending To (Keys)", fontsize=12)
plt.ylabel("Current Word (Queries)", fontsize=12)
plt.title("Attention Weights: Who Pays Attention to Whom?")
plt.colorbar(label="Attention Weight")

# Add text annotations
for i in range(len(words)):
    for j in range(len(words)):
        plt.text(j, i, f"{weights[i][j]:.2f}", ha="center", va="center",
                 fontsize=11, color="white" if weights[i][j] > 0.5 else "black")

plt.tight_layout()
plt.savefig("attention_weights.png", dpi=150, bbox_inches="tight")
plt.show()
```

This heatmap is the fundamental visualization of attention. Darker cells mean stronger attention. In trained models, individual heads often show interpretable patterns: verbs attending to their subjects, pronouns to their antecedents, adjectives to the nouns they modify.

Why Scale by √d_k When Calculating Scores?

The scaling step (scores / np.sqrt(d_k)) is easy to overlook but critical. If the entries of the query and key vectors have roughly unit variance, their dot product has variance d_k, so its typical magnitude grows like √d_k. When d_k is large, the scores become very large. Large values pushed through softmax produce distributions that are nearly one-hot: one word gets almost all the attention and everything else gets nearly zero. In that regime the softmax gradients are close to zero, which stalls training.

Dividing by √d_k keeps the dot products in a range where softmax produces useful, distributed weights.
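The growth is easy to see empirically. If query and key entries are independent with mean 0 and variance 1, their dot product has variance d_k, so its standard deviation is √d_k. Dividing by √d_k brings it back to about 1.

The sketch below samples random vectors at three sizes. Unit-normal entries are an idealized assumption; learned vectors are not exactly unit-normal.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 2000     # number of random query/key pairs per size
stds = {}    # dim -> (std of raw dot products, std after scaling)

for dim in (8, 64, 512):
    q = rng.normal(size=(n, dim))
    k = rng.normal(size=(n, dim))
    dots = np.sum(q * k, axis=1)      # n independent dot products
    scaled = dots / np.sqrt(dim)
    stds[dim] = (dots.std(), scaled.std())
    print(f"d_k={dim:4d}  std(q.k)={dots.std():6.2f}  std(q.k/sqrt(d_k))={scaled.std():.2f}")
```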

```python
# Demonstrating the scaling problem
d_k_large = 512
Q_large = np.random.randn(4, d_k_large)
K_large = np.random.randn(4, d_k_large)

scores_unscaled = Q_large @ K_large.T
scores_scaled = scores_unscaled / np.sqrt(d_k_large)

print("Without scaling:")
print(f" Score range: [{scores_unscaled.min():.1f}, {scores_unscaled.max():.1f}]")
print(f" Softmax output: {softmax(scores_unscaled)[0]}")
print(f" Max attention weight: {softmax(scores_unscaled).max():.4f}")

print("\nWith scaling:")
print(f" Score range: [{scores_scaled.min():.1f}, {scores_scaled.max():.1f}]")
print(f" Softmax output: {softmax(scores_scaled)[0]}")
print(f" Max attention weight: {softmax(scores_scaled).max():.4f}")
```

```
Without scaling:
 Score range: [-27.1, 35.4]
 Softmax output: [0.04987816 0.54085002 0.36455102 0.0447208 ]
 Max attention weight: 1.0000

With scaling:
 Score range: [-1.2, 1.6]
 Softmax output: [0.23819953 0.26466056 0.26008658 0.23705333]
 Max attention weight: 0.5932
```

Without scaling, the softmax saturates: at least one row of the attention matrix collapses onto a single word (a maximum weight of 1.0000). With scaling, attention is distributed more evenly, so the model can draw on several words at once.
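Why do near-one-hot weights stall training? The gradient of softmax with respect to its input scores is the Jacobian J = diag(p) - p pᵀ, whose entries are p_i(δ_ij - p_j). When one p_i is close to 1 and the rest are close to 0, every entry is close to 0.

The sketch below compares a hypothetical row of large unscaled scores with the same row divided by √512. `softmax_1d` and `softmax_jacobian` are hypothetical helper names.

```python
import numpy as np

def softmax_1d(x):
    e = np.exp(x - np.max(x))
    return e / e.sum()

def softmax_jacobian(x):
    """d softmax(x)_i / d x_j = p_i * (delta_ij - p_j)."""
    p = softmax_1d(x)
    return np.diag(p) - np.outer(p, p)

# Hypothetical score rows: same ordering, very different spread
unscaled = np.array([35.0, 10.0, -5.0, -27.0])
scaled = unscaled / np.sqrt(512)

results = {}
for name, row in [("unscaled", unscaled), ("scaled", scaled)]:
    J = softmax_jacobian(row)
    results[name] = (softmax_1d(row).max(), np.abs(J).max())
    print(f"{name:9s} max weight={results[name][0]:.4f}  "
          f"largest |gradient| entry={results[name][1]:.2e}")
```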

Part 2.1: Multi-Head Attention: Looking at Different Relationships

A single attention head captures one type of relationship. But language has many simultaneous relationships: syntactic (subject-verb), semantic (pronoun-antecedent), positional (adjacent words), and more.

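Multi-head attention addresses this by running several attention computations in parallel, each with its own Q, K, V projections. During training, each head can learn to focus on a different type of relationship. The heads' outputs are then concatenated and passed through a final linear projection.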
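Looping over heads, as in the code that follows, is the clearest way to show the idea. Many implementations instead compute all heads with one larger matrix multiply and then split the result into heads.

The sketch below shows why the two are equivalent, using random stand-in weights. Exact memory layouts differ between libraries.

```python
import numpy as np

rng = np.random.default_rng(0)
seq_len, d_model, n_heads = 4, 32, 4
d_k = d_model // n_heads
X = rng.normal(size=(seq_len, d_model))

# One projection matrix per head, as in a loop-based implementation...
per_head_W = [rng.normal(size=(d_model, d_k)) for _ in range(n_heads)]
per_head_Q = [X @ W for W in per_head_W]

# ...matches one big projection (per-head matrices side by side),
# followed by splitting the output into n_heads chunks of width d_k
W_big = np.concatenate(per_head_W, axis=1)                               # (d_model, n_heads*d_k)
Q_split = (X @ W_big).reshape(seq_len, n_heads, d_k).transpose(1, 0, 2)  # (heads, seq, d_k)

print(all(np.allclose(Q_split[h], per_head_Q[h]) for h in range(n_heads)))  # True
```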

```python
def multi_head_attention(X, n_heads, d_model):
    """
    X: input embeddings (seq_len, d_model)
    n_heads: number of attention heads
    d_model: embedding dimension
    """
    assert d_model % n_heads == 0, "d_model must be divisible by n_heads"
    d_k = d_model // n_heads  # dimension per head
    seq_len = X.shape[0]

    all_head_outputs = []
    all_head_weights = []

    for head in range(n_heads):
        # Each head gets its own random projection matrices
        # In a real model, these are learned parameters
        W_Q = np.random.randn(d_model, d_k) * 0.1
        W_K = np.random.randn(d_model, d_k) * 0.1
        W_V = np.random.randn(d_model, d_k) * 0.1

        Q = X @ W_Q
        K = X @ W_K
        V = X @ W_V

        head_output, head_weights = scaled_dot_product_attention(Q, K, V)
        all_head_outputs.append(head_output)
        all_head_weights.append(head_weights)

    # Concatenate all heads: (seq_len, n_heads * d_k) == (seq_len, d_model)
    concatenated = np.concatenate(all_head_outputs, axis=-1)

    # Final linear projection
    W_O = np.random.randn(d_model, d_model) * 0.1
    output = concatenated @ W_O
    return output, all_head_weights
```

```python
# Simulate with 4 heads
np.random.seed(42)
d_model = 32
n_heads = 4
X = np.random.randn(4, d_model)  # 4 words, 32-dim embeddings

output, head_weights = multi_head_attention(X, n_heads, d_model)

# Visualize each head's attention pattern
fig, axes = plt.subplots(1, 4, figsize=(20, 4))
words = ["The", "cat", "sat", "quietly"]

for h in range(n_heads):
    ax = axes[h]
    im = ax.imshow(head_weights[h], cmap="Blues", vmin=0, vmax=1)
    ax.set_xticks(range(len(words)))
    ax.set_xticklabels(words, fontsize=10)
    ax.set_yticks(range(len(words)))
    ax.set_yticklabels(words, fontsize=10)
    ax.set_title(f"Head {h + 1}", fontsize=12)

    for i in range(len(words)):
        for j in range(len(words)):
            ax.text(j, i, f"{head_weights[h][i][j]:.2f}", ha="center", va="center",
                    fontsize=9, color="white" if head_weights[h][i][j] > 0.5 else "black")

plt.suptitle("Multi-Head Attention: Each Head Learns Different Patterns", fontsize=14)
plt.tight_layout()
plt.savefig("multi_head_attention.png", dpi=150, bbox_inches="tight")
plt.show()
```

Each head develops a different attention pattern. In this demo the differences come only from the random projection matrices. In a trained model, however, one head might track subject-verb relationships, another might focus on adjacent words, and another might capture long-range dependencies. The model learns this specialization entirely from data.

Part 2.2: Self-Attention vs. Cross-Attention

Everything described above is self-attention, a sequence attending to itself. In the encoder of a Transformer, each word looks at every other word in the same sentence.

There is also cross-attention, used in encoder-decoder models (like translation). In cross-attention, the decoder’s words (the output being generated) attend to the encoder’s words (the input sentence). The queries come from the decoder, but the keys and values come from the encoder. This is how a translation model knows which source words to focus on when generating each target word.
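The only change from self-attention is where Q, K, and V come from. The sketch below uses random stand-ins for the encoder and decoder states and for the projections, and all sizes are arbitrary. The attention matrix is no longer square, because each of the 3 target-side queries gets a distribution over the 5 source-side keys.

```python
import numpy as np

def attention(Q, K, V):
    """Scaled dot-product attention; returns (output, weights)."""
    scores = Q @ K.T / np.sqrt(Q.shape[-1])
    w = np.exp(scores - scores.max(axis=-1, keepdims=True))
    w /= w.sum(axis=-1, keepdims=True)
    return w @ V, w

rng = np.random.default_rng(0)
d_k = 8
encoder_states = rng.normal(size=(5, d_k))   # e.g. a 5-word source sentence
decoder_states = rng.normal(size=(3, d_k))   # 3 target words generated so far

# Projections: random here, learned in a real model
W_Q, W_K, W_V = (rng.normal(size=(d_k, d_k)) for _ in range(3))

Q = decoder_states @ W_Q   # queries come from the decoder
K = encoder_states @ W_K   # keys come from the encoder
V = encoder_states @ W_V   # values come from the encoder

out, w = attention(Q, K, V)
print(w.shape)    # (3, 5): each target word gets a distribution over 5 source words
print(out.shape)  # (3, 8): one context vector per target word
```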

Putting It Together: From Raw Text to Contextual Understanding

The full pipeline chains everything together: raw text is tokenized, each token gets an embedding vector, positional encoding is added, and the result passes through multi-head attention, where every word absorbs context from every other word. This happens not once but across multiple layers (12 in BERT-base, 96 in GPT-3), each layer refining the representation further. By the final layer, the vector for each word is no longer just “what this word means.” It is “what this word means in this specific sentence, given every other word around it.”

```python
# Simulating the full pipeline
np.random.seed(42)
sentence = ["The", "cat", "sat", "quietly"]
d_model = 32
n_heads = 4

# Step 1-2: Token embeddings (random here, learned in real models)
token_emb = np.random.randn(len(sentence), d_model) * 0.1

# Step 3: Add positional encoding
pos_enc = sinusoidal_positional_encoding(len(sentence), d_model)
combined = token_emb + pos_enc

print("After token embedding only:")
print(f" 'cat' at position 1: first 5 values = {token_emb[1][:5].round(3)}")
print(f" Norm: {np.linalg.norm(token_emb[1]):.3f}")

print("\nAfter adding positional encoding:")
print(f" 'cat' at position 1: first 5 values = {combined[1][:5].round(3)}")
print(f" Norm: {np.linalg.norm(combined[1]):.3f}")

# Step 4: Pass through attention
attended, weights = multi_head_attention(combined, n_heads, d_model)

print("\nAfter attention:")
print(f" 'cat' at position 1: first 5 values = {attended[1][:5].round(3)}")
print(f" Norm: {np.linalg.norm(attended[1]):.3f}")

print("\nThe vector for 'cat' has changed at every step, absorbing position and context.")
```

```
After token embedding only:
 'cat' at position 1: first 5 values = [-0.001 -0.106 0.082 -0.122 0.021]
 Norm: 0.497

After adding positional encoding:
 'cat' at position 1: first 5 values = [0.84 0.435 0.615 0.724 0.332]
 Norm: 3.927

After attention:
 'cat' at position 1: first 5 values = [ 0.138 0.139 -0.102 -0.155 -0.162]
 Norm: 1.087

The vector for 'cat' has changed at every step, absorbing position and context.
```

The Key Takeaways

Positional encoding and attention are the two mechanisms that turn static word embeddings into dynamic, context-aware representations. Without positional encoding, a Transformer cannot distinguish word order. Without attention, it cannot determine which words are relevant to each other.

Together, they enable what makes Transformers remarkable: the ability to process an entire sequence at once while still understanding that order matters and that meaning is shaped by context. Every time a model generates a response, translates a sentence, or answers a question, it is positional encoding and attention doing the work, thousands of times per second, across dozens of layers, over every word.

The embeddings are the vocabulary. The positions are the grammar. The attention is the understanding.


Note: Article content contains the views of the contributing authors and not Towards AI.