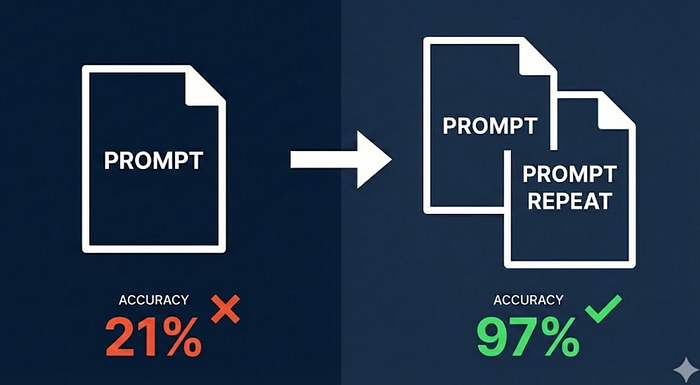

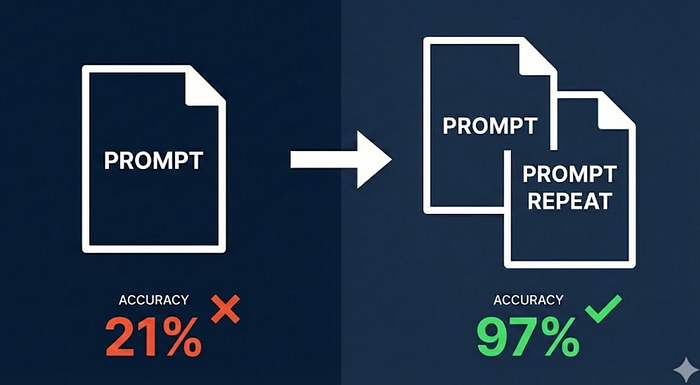

Prompt Repetition Boosts LLM Accuracy 76% Without Latency Increase

Last Updated on January 20, 2026 by Editorial Team

Author(s): MKWriteshere

Originally published on Towards AI.

How sending the prompt twice raises non-reasoning model accuracy from 21% to 97% with zero latency overhead

I avoid reasoning models in production. Latency kills user experience, and the token costs add up quickly when processing thousands of requests daily. But here’s the problem: when I use non-reasoning models from GPT, Claude, or Gemini, I get the speed I need but sacrifice the one thing I can’t afford to lose — accuracy.

The article discusses a technique developed by Google researchers that significantly boosts the accuracy of non-reasoning language models by simply repeating the input prompt. In the best reported case, accuracy rises by up to 76 percentage points (from 21% to 97%) while response time stays the same. The article examines the trade-off between latency and accuracy in AI applications, explores the structural limitations of language models, and argues that prompt design should play to a model's strengths. It closes by urging readers to rethink how they use these models in light of what prompt repetition reveals.
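The technique itself is simple enough to sketch. The Python below is a minimal illustration under stated assumptions: `repeat_prompt` and `full_prompt_visibility` are hypothetical helpers, and the blank-line separator is an assumption, not the exact template from the Google paper. The second helper illustrates a common explanation for why repetition helps. Decoder-only models use causal attention, so each token can attend only to the tokens before it. Within a single copy of the prompt, early tokens never see later ones; in the second copy, every position can attend to the entire first copy.

```python
from typing import List


def repeat_prompt(prompt: str, times: int = 2, separator: str = "\n\n") -> str:
    """Return `prompt` repeated `times` times, joined by `separator`.

    Hypothetical helper: the separator and the default of two copies are
    illustrative assumptions, not the paper's exact prompt template.
    """
    if times < 1:
        raise ValueError("times must be at least 1")
    return separator.join([prompt] * times)


def full_prompt_visibility(num_prompt_tokens: int, times: int = 2) -> List[bool]:
    """For each position in the repeated token sequence, report whether a
    causal (left-to-right) attention mask lets that position attend to every
    token of the original prompt. Separator tokens are ignored for simplicity.

    Under causal masking, position i sees only positions 0..i, so it has seen
    the whole prompt once i >= num_prompt_tokens - 1.
    """
    total = num_prompt_tokens * times
    return [i >= num_prompt_tokens - 1 for i in range(total)]


if __name__ == "__main__":
    # Illustrative prompt; any single-turn question is handled the same way.
    question = "Items: apple, pear, fig. Which item comes second?"
    print(repeat_prompt(question))

    # A 3-token prompt sent twice: only positions from the end of the first
    # copy onward have attended to the whole prompt.
    print(full_prompt_visibility(3))  # [False, False, True, True, True, True]
```

The repeated string is sent as the user message to whichever non-reasoning model you already use. Because the extra copy is part of the input, it is processed in the parallel prefill pass rather than generated token by token, which is consistent with the article's claim of no added latency. It still counts toward input tokens, though, so the technique is latency-neutral rather than free.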

Read the full blog for free on Medium.

Note: Article content contains the views of the contributing authors and not Towards AI.