mHC: Rethinking the Neural Highway

Author(s): Revanth Madamala

Originally published on Towards AI.

If you’ve been following the evolution of Deep Learning, you know that for the last decade, we’ve been obsessed with Residual Connections (ResNets). They are the “highways” of a neural network — the bypass lanes that allow information to skip over layers, preventing the dreaded “vanishing gradient” problem.

But as we push toward trillion-parameter models, these highways are starting to feel like narrow, one-lane country roads.

Recently, a team from DeepSeek-AI released a paper titled “mHC: Manifold-Constrained Hyper-Connections” (arXiv:2512.24880). It’s a fascinating piece of research that essentially argues we shouldn’t just build longer highways; we should build multi-lane super-highways — but only if we can keep the traffic from crashing.

The Evolution of the Connection

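To understand why this matters, look at how we’ve been building these connections. In the paper, Figure 1 provides a side-by-side comparison of the three major paradigms: the standard residual connection, Hyper-Connections (HC), and Manifold-Constrained Hyper-Connections (mHC).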

The Problem: The “Single-Lane” Bottleneck

In a standard Transformer, the residual stream is a single vector. Every layer adds something to that vector.

In 2024, a concept called Hyper-Connections (HC) was introduced. The idea was simple: instead of one residual stream, why not have four? Or eight? By widening the residual stream, you increase “topological complexity,” basically giving the model more “thinking space” to carry different types of information simultaneously.
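To make the difference concrete, here is a minimal sketch of a single-stream residual update next to a multi-stream update. It is a toy under stated assumptions, not the paper's implementation: `toy_layer` stands in for an attention or MLP block, and the names `h_res`, `h_pre`, and `h_post` are illustrative labels for the stream-mixing matrix, the read weights, and the write weights.

```python
# Toy comparison: one residual stream vs. several mixed streams.
# All names here (toy_layer, h_res, h_pre, h_post) are illustrative assumptions.

def toy_layer(x):
    """Stand-in for an attention or MLP block: halves each feature."""
    return [0.5 * v for v in x]


def residual_step(x):
    """Classic residual update: output = input + layer(input)."""
    fx = toy_layer(x)
    return [a + b for a, b in zip(x, fx)]


def hyper_connection_step(streams, h_res, h_pre, h_post):
    """Multi-stream update with n parallel residual streams.

    streams: list of n vectors, each of length d
    h_res:   n x n matrix that mixes the streams with each other
    h_pre:   n weights that blend the streams into one layer input
    h_post:  n weights that write the layer output back to each stream
    """
    n, d = len(streams), len(streams[0])
    # Read: blend all streams into a single input for the layer.
    layer_in = [sum(h_pre[j] * streams[j][k] for j in range(n)) for k in range(d)]
    layer_out = toy_layer(layer_in)
    new_streams = []
    for i in range(n):
        # Mix: each new stream is a weighted combination of the old streams.
        mixed = [sum(h_res[i][j] * streams[j][k] for j in range(n)) for k in range(d)]
        # Write: add the layer output, scaled per stream.
        new_streams.append([m + h_post[i] * o for m, o in zip(mixed, layer_out)])
    return new_streams


x = [1.0, 2.0]
single = residual_step(x)
# With one stream and all weights set to 1, the multi-stream step
# collapses to the classic residual update.
collapsed = hyper_connection_step([x], [[1.0]], [1.0], [1.0])[0]
```

The point of the last two lines is that the single-lane highway is just a special case of the multi-lane one. Everything new lives in the mixing matrix `h_res`, which is also where the trouble starts.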

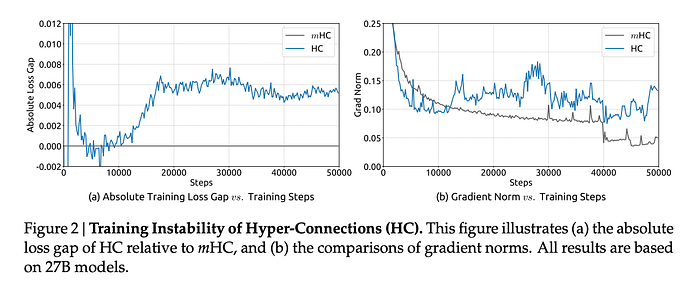

The catch? It was incredibly unstable. In my view, HC was like opening a four-lane highway but removing all the speed limits and lane markers. Without constraints, the mixing between lanes causes the signal to explode.

The Breakthrough: Manifold-Constrained Hyper-Connections (mHC)

This is where DeepSeek’s innovation comes in. They realized that the problem wasn’t the multiple lanes; it was the uncontrolled mixing.

DeepSeek’s solution is Manifold Constraints. They force the mixing matrices to live on a specific mathematical shape called the Birkhoff Polytope.

1. The Birkhoff Polytope (The “Fair-Mixing” Rule)

They use doubly stochastic matrices. In layman’s terms, these matrices follow three rules:

- Every row sums to 1.

- Every column sums to 1.

- No entry is negative.

Think of it like a perfectly balanced mixing bowl. No matter how much you stir (mix the streams), the total amount of “stuff” stays the same. You aren’t adding or losing content; you’re just redistributing it.
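The three rules and the mixing-bowl intuition can be checked in a few lines. This is a small illustration with a hand-picked 3x3 matrix and scalar stream values. The helper names `is_doubly_stochastic` and `mix` are hypothetical, chosen only for this example.

```python
def is_doubly_stochastic(m, tol=1e-9):
    """Check the three rules: rows sum to 1, columns sum to 1, no negative entries."""
    n = len(m)
    rows_ok = all(abs(sum(row) - 1.0) <= tol for row in m)
    cols_ok = all(abs(sum(m[i][j] for i in range(n)) - 1.0) <= tol for j in range(n))
    nonneg = all(v >= 0.0 for row in m for v in row)
    return rows_ok and cols_ok and nonneg


def mix(m, streams):
    """Mix scalar stream values: out[i] = sum_j m[i][j] * streams[j]."""
    return [sum(w * s for w, s in zip(row, streams)) for row in m]


# Hand-picked example matrix (an assumption for illustration).
mixing = [
    [0.5, 0.3, 0.2],
    [0.2, 0.5, 0.3],
    [0.3, 0.2, 0.5],
]
streams = [4.0, 1.0, 1.0]
mixed = mix(mixing, streams)

total_before = sum(streams)
total_after = sum(mixed)
```

Because every column sums to 1, `total_after` matches `total_before`: the content is redistributed, not created or destroyed. Because every row sums to 1 with no negative entries, each mixed value is a weighted average of the inputs, so no single stream can exceed the largest input.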

2. The 1967 Time Machine: Sinkhorn-Knopp

To force the model to stay on this “manifold,” they reach back to an algorithm from 1967 called Sinkhorn-Knopp. During training, the model tries to learn a mixing matrix, and the Sinkhorn-Knopp algorithm “squishes” it until it becomes doubly stochastic.
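The “squishing” can be sketched as alternating row and column normalization. The version below is a plain-Python illustration, not DeepSeek's kernel: the exponential step used to make entries positive, the default of 20 iterations, and the example scores are all assumptions of this sketch.

```python
import math


def sinkhorn_knopp(raw, num_iters=20):
    """Push a square matrix of unconstrained scores toward a doubly stochastic one."""
    n = len(raw)
    # Exponentiate so every entry is strictly positive (an assumption of this sketch).
    m = [[math.exp(v) for v in row] for row in raw]
    for _ in range(num_iters):
        # Step 1: rescale each row so it sums to 1.
        m = [[v / sum(row) for v in row] for row in m]
        # Step 2: rescale each column so it sums to 1.
        col_sums = [sum(m[i][j] for i in range(n)) for j in range(n)]
        m = [[m[i][j] / col_sums[j] for j in range(n)] for i in range(n)]
    return m


# Arbitrary "learned" scores for a 4-stream mixing matrix (illustrative values).
raw_scores = [
    [0.2, -0.5, 0.1, 0.4],
    [0.4, 0.0, -0.3, 0.1],
    [-0.1, 0.3, 0.5, -0.2],
    [0.0, -0.4, 0.2, 0.3],
]
projected = sinkhorn_knopp(raw_scores)
row_sums = [sum(row) for row in projected]
col_sums = [sum(projected[i][j] for i in range(4)) for j in range(4)]
```

Each pass slightly disturbs the rows while fixing the columns, and vice versa, but for a strictly positive matrix the two steps converge toward a matrix where both hold at once. After the loop, `row_sums` and `col_sums` should all sit very close to 1.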

As seen in Figure 3 of the paper, the results are night and day. While the old HC exploded, the mHC signal gain (the blue line) stays flat, near 1.0, just like a stable, old-school ResNet.
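A toy experiment shows why the constraint keeps the gain flat. The sketch below stacks the same 4x4 mixing matrix across 30 layers and measures the largest absolute row sum of the combined mapping. Both the metric and the reuse of one matrix at every layer are simplifying assumptions here, not the paper's exact setup, and the two example matrices are hand-built.

```python
def matmul(a, b):
    """Multiply two square matrices given as lists of lists."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)] for i in range(n)]


def composite_gain(layer_matrix, depth):
    """Largest absolute row sum after applying the same mixing matrix `depth` times."""
    n = len(layer_matrix)
    total = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for _ in range(depth):
        total = matmul(layer_matrix, total)
    return max(sum(abs(v) for v in row) for row in total)


n = 4
# Unconstrained mixing: identity plus a little leakage, so each row sums to 1.3.
unconstrained = [[1.0 if i == j else 0.1 for j in range(n)] for i in range(n)]
# Doubly stochastic mixing: 70% stay in lane, 30% shift to the next lane.
constrained = [
    [0.7 if i == j else (0.3 if j == (i + 1) % n else 0.0) for j in range(n)]
    for i in range(n)
]

gain_unconstrained = composite_gain(unconstrained, 30)
gain_constrained = composite_gain(constrained, 30)
```

A row sum of 1.3 looks harmless for one layer, but it compounds multiplicatively with depth, so `gain_unconstrained` ends up in the thousands. The product of doubly stochastic matrices is itself doubly stochastic, so `gain_constrained` stays at 1 no matter how many layers are stacked.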

Results: Performance without the Crash

You might ask, “Is this just a math trick to stop crashes?” It’s more than that. By stabilizing these “Hyper-Connections,” DeepSeek has unlocked a new scaling axis.

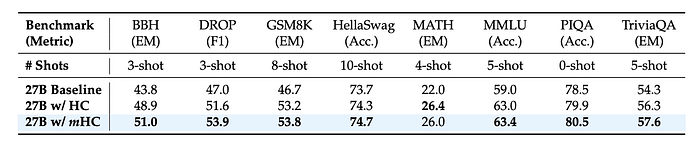

Traditionally, we scale models by making them deeper or wider. mHC adds Connectivity as a third option. Looking at the table below, the 27B model results across benchmarks are striking:

The Engineering Feat

Usually, adding complex math like Sinkhorn-Knopp to every layer would tank training speed. DeepSeek solved this through Infrastructure Optimization.

They used a tool called TileLang to write custom GPU kernels that “fuse” the mixing and the normalization into a single step. The result? A 4x wider residual stream only added about 6.7% to the training time. For a massive boost in stability and performance, that is a bargain.

My Takeaway

The “mHC” paper represents a shift from brute-force scaling to geometric scaling. We are moving away from treating neural networks as simple stacks of blocks and moving toward treating them as complex topological structures.

By using the Birkhoff Polytope as a “safety rail,” DeepSeek has shown that we can build much more intricate, multi-lane architectures that are just as stable as the simple ones we’ve used for years. The future of AI isn’t just bigger; it’s better connected.

Reference: Xie et al., “mHC: Manifold-Constrained Hyper-Connections,” arXiv:2512.24880, 2025.
