Why A/B Testing Fails in Two-Sided Marketplaces (and How to Fix It with Switchback Testing)

Author(s): Swaroop Hadke

Originally published on Towards AI.

1. Introduction: The Hidden Trap of Network Effects

Standard A/B testing is dangerous in two-sided marketplaces. If you treat a ride-hailing app or a delivery network like a standard e-commerce store, you aren’t just getting noisy data: your treatment and control groups compete for the same supply, so the experiment itself distorts the result it is trying to measure.

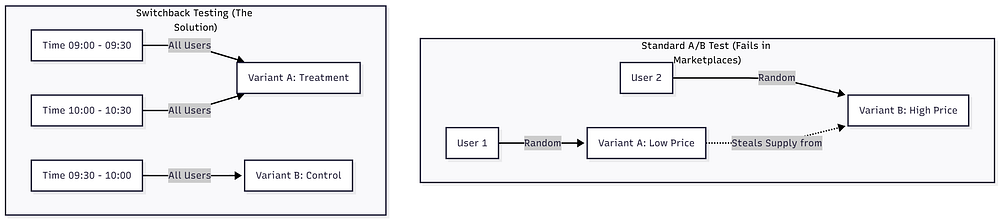

In a typical A/B test (say, testing a “Buy Now” button color), we rely on SUTVA (the Stable Unit Treatment Value Assumption). This is the assumption that the treatment assigned to User A doesn’t affect the outcome of User B.

In a marketplace, SUTVA rarely holds.

Here is the scenario that breaks standard testing: You want to test a new dynamic pricing algorithm designed to lower prices and boost conversion.

- Group A (Treatment) sees lower prices. They naturally flood the system with orders.

- Group B (Control) sees normal prices.

Because your supply (drivers/couriers) is finite, Group A’s extra orders absorb a disproportionate share of the available drivers. When Group B users try to book, they face longer wait times or “No Drivers Available” errors. Group B’s performance degrades artificially because of Group A’s activity.
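A back-of-the-envelope model makes this mechanism concrete. The sketch below is illustrative only: the user count, fleet capacity, request rates, and the proportional-allocation rule are all assumptions chosen for the example, not figures from a real marketplace.

```python
def rides_per_user(n_users_by_group, request_rate_by_group, capacity):
    """Completed rides per user for each group when all requests compete
    for one shared driver pool (hypothetical proportional-allocation model)."""
    requests = {g: n_users_by_group[g] * request_rate_by_group[g]
                for g in n_users_by_group}
    total = sum(requests.values())
    # If demand exceeds supply, every request is equally likely to be served.
    fill_rate = min(1.0, capacity / total) if total > 0 else 1.0
    return {g: requests[g] * fill_rate / n_users_by_group[g]
            for g in n_users_by_group}

RATES = {"control": 0.5, "treatment": 0.8}  # assumed requests per user
CAPACITY = 600                              # assumed rides the fleet can serve
N_USERS = 1000                              # assumed number of active users

# Counterfactual worlds: the whole marketplace gets a single variant.
all_control = rides_per_user({"control": N_USERS}, RATES, CAPACITY)["control"]
all_treatment = rides_per_user({"treatment": N_USERS}, RATES, CAPACITY)["treatment"]
true_effect = all_treatment - all_control

# User-level A/B test: both variants draw on the same drivers at the same time.
split = rides_per_user(
    {"control": N_USERS // 2, "treatment": N_USERS // 2}, RATES, CAPACITY
)
naive_effect = split["treatment"] - split["control"]

print(f"true effect:  {true_effect:+.3f} rides per user")
print(f"naive effect: {naive_effect:+.3f} rides per user")
```

Under these assumptions the user-level split reports an effect of roughly 0.28 rides per user, while switching the whole marketplace would yield only about 0.10. The gap comes entirely from the control group being starved of drivers.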

If you look at the raw data, your algorithm looks like a massive success. In reality, you simply stole resources from your control group. This phenomenon is called Interference, and to solve it, we have to stop randomizing by User and start randomizing by Time.

This is where Switchback Testing becomes a requirement, not a luxury.

2. The Solution: Changing the Unit of Randomization

Switchback testing (or Time-Split testing) solves the interference problem by switching the entire marketplace between “Control” and “Treatment” at specific time intervals.

Instead of User A getting one price and User B getting another simultaneously, everyone in the marketplace gets the Treatment logic for a 30-minute window, and then everyone gets the Control logic for the next 30 minutes.

This clusters the interference within time blocks, allowing us to compare the performance of “Treatment Windows” against “Control Windows.”

Visualizing the Split

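Here is how the randomization logic differs: a standard A/B test assigns each user to a variant for the whole experiment, while a switchback test assigns each time window to a variant and applies it to every user active in that window.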

By isolating the variants in time, we ensure that the supply available during a “Control” period is (mostly) reflective of natural market conditions, not distorted by a simultaneous competing algorithm.
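The two assignment schemes can be sketched in a few lines of Python. This is a minimal sketch, not the project's actual scheduler: the function names, the fixed seed, and the hash-based user split are assumptions, and it assigns variants to windows at random (a common choice) rather than strictly alternating them. The `window_start` and `variant` column names follow the ones used in the article's code.

```python
import zlib

import numpy as np
import pandas as pd

def user_split_variant(user_id):
    """Classic A/B: the variant depends only on who the user is."""
    return "Treatment" if zlib.crc32(str(user_id).encode()) % 2 else "Control"

def build_switchback_schedule(start, days=14, window_minutes=30, seed=42):
    """Return one row per time window with a randomly assigned variant.
    Assumes `start` is aligned to a window boundary (e.g. midnight)."""
    rng = np.random.default_rng(seed)
    n_windows = days * 24 * 60 // window_minutes
    window_starts = pd.date_range(start=start, periods=n_windows,
                                  freq=pd.Timedelta(minutes=window_minutes))
    # Shuffle a balanced list so each variant gets half of the windows.
    variants = np.array(["Control", "Treatment"] * (n_windows // 2)
                        + ["Control"] * (n_windows % 2))
    rng.shuffle(variants)
    return pd.DataFrame({"window_start": window_starts, "variant": variants})

def variant_at(schedule, timestamp, window_minutes=30):
    """Switchback: the variant depends only on when the request happens."""
    window_start = pd.Timestamp(timestamp).floor(f"{window_minutes}min")
    match = schedule.loc[schedule["window_start"] == window_start, "variant"]
    return match.iloc[0]

schedule = build_switchback_schedule("2024-01-01")
print(len(schedule), "windows")
print(schedule["variant"].value_counts())
print("Variant at 09:17 on day 3:", variant_at(schedule, "2024-01-03 09:17"))
```

Every request that lands in the same 30-minute window gets the same answer from `variant_at`, regardless of which user sent it. That is the property that keeps the two variants from competing for drivers at the same moment.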

3. The Build: Simulating a Marketplace in Python

Testing experimental frameworks on live production traffic is risky (and expensive). To demonstrate this architecture safely, I built a Python-based Experimentation Engine.

The core of this project is the MarketplaceSimulator class. It models the delicate balance of a two-sided economy:

- Drivers (Supply): Prefer higher earnings (Surge pricing).

- Users (Demand): Prefer lower costs.

The goal of the simulation was to test a “Surge Pricing” algorithm. The hypothesis? Increasing the price slightly would lower User Conversion, but increase Driver Acceptance enough to result in a higher overall Order Completion Rate (OCR).

Below is the core logic from my simulation engine. It demonstrates how the system “switches” behavior based on the time window variant.

```python
# Simplified logic from simulation.py
import numpy as np

# `schedule` (one row per time window) and `base_price` are assumed
# to be defined earlier in simulation.py.
for _, row in schedule.iterrows():
    variant = row['variant']  # 'Control' or 'Treatment' based on time window

    # ... (Demand generation logic omitted for brevity) ...

    # The core trade-off mechanism
    if variant == 'Treatment':
        # Treatment: surge pricing applied
        # Result: users convert less, but drivers accept more
        price = base_price * np.random.uniform(1.0, 1.2)
        driver_acceptance_prob = 0.85
        user_conversion_prob = 0.70
    else:
        # Control: base pricing
        # Result: users convert more, but drivers are pickier
        price = base_price
        driver_acceptance_prob = 0.75
        user_conversion_prob = 0.75

    # Determine the outcome
    # A ride is only 'Completed' if BOTH sides agree
    driver_found = bool(np.random.random() < driver_acceptance_prob)
    user_accepted = bool(np.random.random() < user_conversion_prob)
    is_completed = bool(driver_found and user_accepted)
```

In this simulation, the Treatment creates a friction point for the user (price) to solve a friction point for the marketplace (supply availability).
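Because the driver and user draws in the snippet are independent, the expected completion rate for each variant is simply the product of the two probabilities. The short calculation below uses only the hard-coded probabilities from the simulation. It describes what the simulator is built to produce, not an empirical result.

```python
# Probabilities copied from the simulation snippet.
variants = {
    "Control":   {"driver_acceptance_prob": 0.75, "user_conversion_prob": 0.75},
    "Treatment": {"driver_acceptance_prob": 0.85, "user_conversion_prob": 0.70},
}

# The two draws are independent, so P(completed) is their product.
expected_ocr = {
    name: p["driver_acceptance_prob"] * p["user_conversion_prob"]
    for name, p in variants.items()
}
relative_lift = expected_ocr["Treatment"] / expected_ocr["Control"] - 1

for name, ocr in expected_ocr.items():
    print(f"{name}: expected OCR = {ocr:.4f}")
print(f"Relative lift = {relative_lift:.1%}")
```

The expected OCR is 0.5625 for Control and 0.595 for Treatment, a relative lift of about 5.8%. The statistical analysis therefore has a modest built-in effect to detect.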

4. The Math: Why Aggregation is Key

If you run this simulation for two weeks, you might generate 10,000 individual ride requests.

- 5,000 Treatment rides

- 5,000 Control rides

A junior Data Scientist might be tempted to run a t-test on these 10,000 rows. This is statistically invalid.

Why? Because rides happening within the same 30-minute window are correlated. They share the same variant and the same market conditions: if it starts raining at 9:15 AM, all rides in that window are affected. They are not independent samples, so a row-level test overstates the effective sample size and understates the standard error.

To calculate the p-value correctly, we must aggregate our data up to the Unit of Randomization, which, in Switchback testing, is the Time Window.

Instead of comparing 10,000 rides, we compare the per-window means of the N time windows (e.g., 14 days × 24 hours = 336 hours, or 672 windows of 30 minutes).
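One way to see the cost of ignoring this is an A/A simulation, where both arms behave identically and any "significant" result is a false positive. The sketch below is a simplified stand-in for the article's simulator. The window-level noise (`window_sd`), the number of windows and rides, and the function name are assumptions chosen to make the effect visible.

```python
import numpy as np
from scipy import stats

def aa_false_positive_rates(n_sims=400, n_windows=100, rides_per_window=50,
                            window_sd=0.10, alpha=0.05, seed=0):
    """Simulate A/A switchback tests (no true effect) and return the share of
    'significant' results for a row-level and a window-level Welch t-test."""
    rng = np.random.default_rng(seed)
    row_hits = window_hits = 0
    for _ in range(n_sims):
        # Each window gets its own completion probability (weather, traffic, ...).
        p_window = np.clip(rng.normal(0.6, window_sd, n_windows), 0.05, 0.95)
        # Rides inside a window share that probability, so they are correlated.
        completed = rng.random((n_windows, rides_per_window)) < p_window[:, None]
        # Randomly label half the windows "Treatment"; both arms are identical.
        is_treatment = rng.permutation(n_windows) < n_windows // 2

        # Row-level test: treats every ride as an independent sample.
        rows_t = completed[is_treatment].ravel().astype(float)
        rows_c = completed[~is_treatment].ravel().astype(float)
        p_row = stats.ttest_ind(rows_t, rows_c, equal_var=False).pvalue

        # Window-level test: one OCR value per window.
        ocr = completed.mean(axis=1)
        p_win = stats.ttest_ind(ocr[is_treatment], ocr[~is_treatment],
                                equal_var=False).pvalue

        row_hits += int(p_row < alpha)
        window_hits += int(p_win < alpha)
    return row_hits / n_sims, window_hits / n_sims

row_fp, window_fp = aa_false_positive_rates()
print(f"Row-level false positive rate:    {row_fp:.1%}")
print(f"Window-level false positive rate: {window_fp:.1%}")
```

With these settings the row-level test should flag far more than the nominal 5% of A/A experiments as significant, while the window-level test stays close to 5%. The exact figures depend on the assumed noise level and window size.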

The Analysis Implementation

Here is how I implemented the aggregation and statistical testing in analysis.py. Note how we group by window_start before running the test.

```python
from scipy import stats

def analyze_experiment(df):
    # 1. CRITICAL: Aggregate metrics by the window
    #    (the unit of randomization)
    window_metrics = df.groupby(['window_start', 'variant']).agg({
        'request_id': 'count',
        'is_completed': 'sum',
        'order_value': 'sum'
    }).reset_index()

    # Calculate ratios per window (e.g., Order Completion Rate)
    window_metrics['ocr'] = window_metrics['is_completed'] / window_metrics['request_id']

    # 2. Separate groups
    control_windows = window_metrics[window_metrics['variant'] == 'Control']
    treatment_windows = window_metrics[window_metrics['variant'] == 'Treatment']

    # 3. Statistical test (Welch's t-test on window means)
    # We test the means of the *windows*, not the individual rides.
    t_stat, p_value = stats.ttest_ind(
        treatment_windows['ocr'],
        control_windows['ocr'],
        equal_var=False
    )

    return p_value, window_metrics
```

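By averaging the metrics within each window, we analyze the data at the level at which it was randomized, which is what the independence assumption of Welch’s t-test requires. Adjacent windows can still influence each other, a limitation covered in Section 6.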

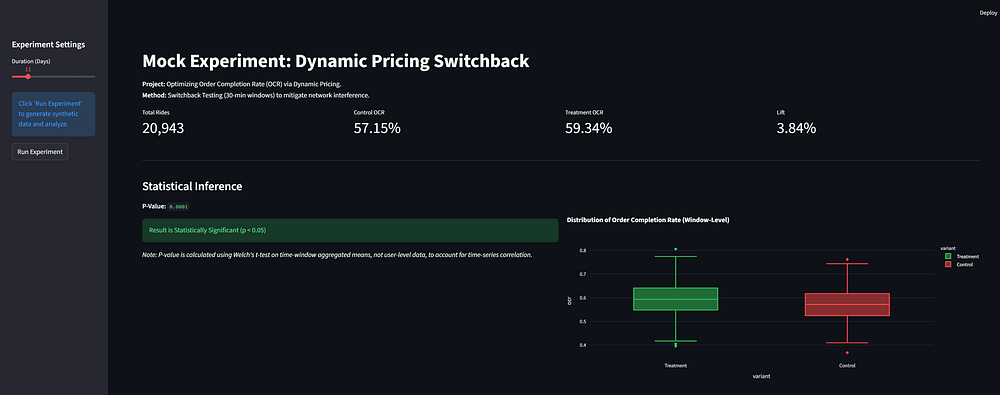

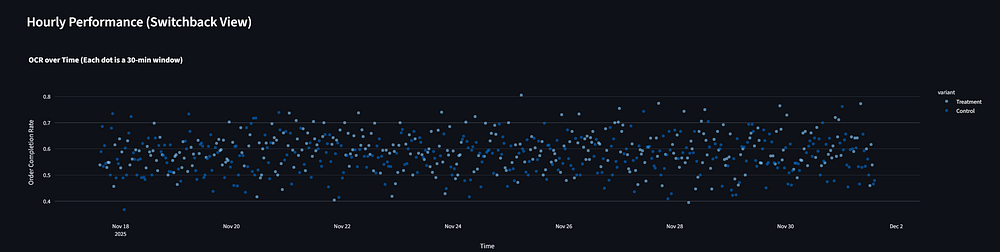

5. Visualizing the Results

To make these results accessible to stakeholders, the final step of the project was piping these metrics into a Streamlit dashboard.

The dashboard visualizes:

- Global Lift: The percentage improvement in Order Completion Rate (OCR) and Gross Merchandise Value (GMV).

- Significance: A clear indicator of whether the P-value is < 0.05.

- Time Series: A chart showing how the metrics fluctuated over the 14-day period.
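The article does not spell out how the dashboard computes lift. One plausible definition, sketched below as an assumption, is the relative difference between the Treatment and Control means of the per-window metrics. The `relative_lift` helper and the tiny hand-made table are illustrative only. The column names mirror the `window_metrics` frame returned by `analyze_experiment`.

```python
import pandas as pd

def relative_lift(window_metrics, metric):
    """Relative lift of Treatment over Control, based on per-window means."""
    means = window_metrics.groupby("variant")[metric].mean()
    return means["Treatment"] / means["Control"] - 1

# Hand-made example with two windows per variant (illustrative values).
window_metrics = pd.DataFrame({
    "variant": ["Control", "Treatment", "Control", "Treatment"],
    "ocr": [0.55, 0.60, 0.57, 0.59],
    "order_value": [1000.0, 1150.0, 1100.0, 1160.0],
})

ocr_lift = relative_lift(window_metrics, "ocr")
gmv_lift = relative_lift(window_metrics, "order_value")
print(f"OCR lift: {ocr_lift:.2%}")
print(f"GMV lift: {gmv_lift:.2%}")
```

Reporting lift from window-level means keeps the headline number consistent with the unit the significance test is run on.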

6. Advanced Considerations & Limitations

While Switchback testing solves the interference problem, it introduces a new one: Carryover Effects.

If you surge prices at 9:55 AM (Treatment) to attract drivers, those drivers are likely still in the area at 10:05 AM, after the system has switched back to normal prices (Control). The “Control” window benefits from the supply accumulated by the “Treatment” window.

The Fix: Sophisticated experimentation platforms implement a “Burn-in” (or Washout) Period. When calculating metrics, we drop the data from the first 5–10 minutes of every window. This allows the marketplace state to reset and stabilize before we start measuring the impact of the new variant.
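A burn-in filter can be applied before the window-level aggregation. The sketch below is a minimal version under stated assumptions: the `request_time` column and the `drop_burn_in` helper are hypothetical names, and the 10-minute default is just the upper end of the range mentioned above.

```python
import pandas as pd

def drop_burn_in(df, burn_in_minutes=10,
                 time_col="request_time", window_col="window_start"):
    """Remove requests made during the first `burn_in_minutes` of each window."""
    elapsed = pd.to_datetime(df[time_col]) - pd.to_datetime(df[window_col])
    return df[elapsed >= pd.Timedelta(minutes=burn_in_minutes)].copy()

# Four example requests: three in the 10:00 window, one in the 10:30 window.
rides = pd.DataFrame({
    "request_id": [1, 2, 3, 4],
    "window_start": pd.to_datetime(
        ["2024-01-01 10:00"] * 3 + ["2024-01-01 10:30"]
    ),
    "request_time": pd.to_datetime([
        "2024-01-01 10:02",  # 2 minutes into its window: dropped
        "2024-01-01 10:10",  # exactly 10 minutes in: kept
        "2024-01-01 10:25",  # 25 minutes in: kept
        "2024-01-01 10:34",  # 4 minutes into the next window: dropped
    ]),
})

kept = drop_burn_in(rides)
print(kept["request_id"].tolist())
```

The trade-off is that a longer burn-in removes more carryover but also discards a larger share of each window's data, which reduces the precision of the per-window metrics.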

7. Conclusion: Moving Beyond “Move Fast and Break Things.”

Building this simulation highlighted a critical shift in how we approach data science in mature tech sectors. In the early days, simple predictive modeling and “move fast” A/B testing were enough to capture low-hanging fruit.

Today, the margin for error in marketplaces is razor-thin. Algorithms that manage pricing and dispatching are too interconnected to test with naive splitting methods. We need to move toward Causal Inference engines that respect the physics of supply and demand.

The Python code I’ve shared here — specifically the shift from row-level analysis to window-aggregated analysis — isn’t just a statistical safeguard; it’s a requirement for validity. If you can’t trust your Control group, you can’t trust your Lift. And in a business operating at scale, a false positive on a pricing algorithm isn’t just a bad experiment — it’s millions in lost revenue.

This article is based on a portfolio project demonstrating Causal Inference in Python. The full source code for the simulation and analysis engine is available on my GitHub.
