Data Imputation in Machine Learning: A Practical, No-Nonsense Guide (ML Chapter -2, Module-2)

Last Updated on December 2, 2025 by Editorial Team

Author(s): Sayan Chowdhury

Originally published on Towards AI.

Missing data shows up everywhere: surveys, logs, sensors, medical records, finance datasets, you name it. And if you feed missing values directly into most ML models, they’ll crash or behave unpredictably. That’s why data imputation is one of the most important preprocessing steps in any ML pipeline.

This article breaks down why missing data happens, how it affects models, and the most practical imputation techniques you should use in real projects. No fluff. No jargon overload. Just clarity.

Why Missing Data Happens

There are three major types of missingness. Understanding them helps you choose the right imputation strategy:

1. MCAR – Missing Completely At Random

Data disappears due to pure randomness.

Example: A survey response wasn’t recorded because of a glitch.

Best-case scenario: almost any imputation works.

2. MAR – Missing At Random

Missing values depend on other observed data.

Example: Younger users skip income fields more than older users.

Most real-world datasets fall here.

3. MNAR – Missing Not At Random

Missingness depends on the value itself.

Example: People with high incomes intentionally skip “salary.”

Hardest to impute. Often requires domain knowledge or advanced models.

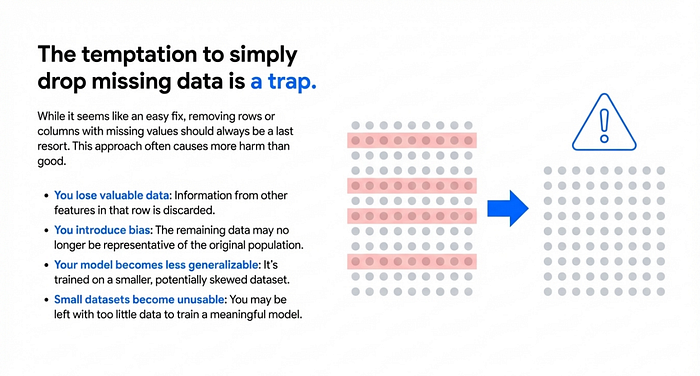
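To make the three mechanisms concrete, here is a minimal simulation sketch. The data is synthetic, and the column names, missingness rates, and the income formula are assumptions chosen purely for illustration. It hides `income` values under each mechanism and compares the mean of what remains observed with the true mean.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)
n = 10_000

# Synthetic data (illustrative assumption): income rises with age.
age = rng.integers(18, 70, size=n)
income = 20_000 + 800 * age + rng.normal(0, 10_000, size=n)
df = pd.DataFrame({"age": age, "income": income})

# MCAR: every income value has the same 20% chance of being missing.
mcar_mask = rng.random(n) < 0.20

# MAR: missingness depends on an observed column (age), not on income itself.
mar_mask = rng.random(n) < np.where(df["age"] < 30, 0.40, 0.10)

# MNAR: missingness depends on the hidden value itself (top incomes go missing).
high_income = df["income"] > df["income"].quantile(0.80)
mnar_mask = rng.random(n) < np.where(high_income, 0.60, 0.05)

true_mean = df["income"].mean()
observed_means = {
    name: df.loc[~mask, "income"].mean()
    for name, mask in [("MCAR", mcar_mask), ("MAR", mar_mask), ("MNAR", mnar_mask)]
}

print(f"true mean: {true_mean:,.0f}")
for name, value in observed_means.items():
    print(f"{name} observed mean: {value:,.0f}")
```

In this setup the MCAR mean stays close to the truth, while the MAR and MNAR means drift. The MAR drift can in principle be corrected using `age`, because `age` is observed; the MNAR drift cannot be corrected from the observed data alone.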

Why You Shouldn’t Just Drop Missing Data

A common instinct is: “I’ll drop all missing rows and save the hassle.”

That’s a trap.

- You lose valuable data

- You introduce bias

- Your model becomes less generalizable

- Small datasets become unusable

Dropping should always be the last option.
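A quick sketch shows how fast row-dropping eats a dataset. The data here is synthetic, and the 5% per-cell missing rate and 10 columns are assumptions for illustration. With those numbers, the chance that a row is fully observed is 0.95^10, so roughly 40% of rows are expected to disappear.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(42)
n_rows, n_cols = 1_000, 10
data = rng.normal(size=(n_rows, n_cols))

# Knock out about 5% of the cells, independently in every column.
data[rng.random(data.shape) < 0.05] = np.nan
df = pd.DataFrame(data, columns=[f"f{i}" for i in range(n_cols)])

cell_missing_rate = df.isna().to_numpy().mean()
rows_kept = len(df.dropna())          # dropna() removes any row with at least one NaN
row_loss_rate = 1 - rows_kept / len(df)

print(f"missing cells: {cell_missing_rate:.1%}")
print(f"rows lost by dropna(): {row_loss_rate:.1%}")
```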

Popular Imputation Strategies

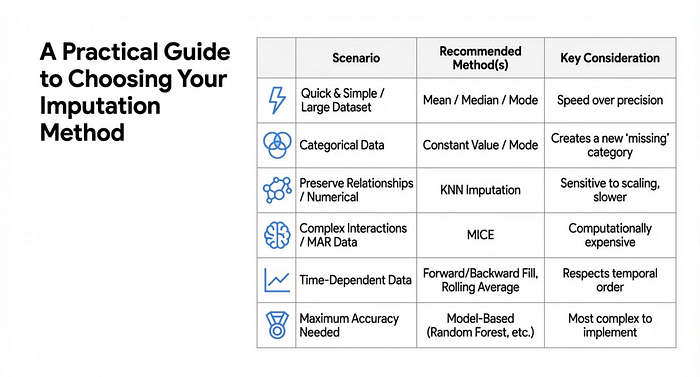

1. Mean, Median, Mode Imputation

Simple and popular.

- Mean for roughly normally distributed numerical data

- Median for skewed numerical data

- Mode for categorical data

Pros

- Fast

- Easy

- Works well with large datasets

Cons

- Shrinks variance

- Can distort distribution
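Here is a minimal sketch of all three strategies using scikit-learn's `SimpleImputer`. The tiny table and its column names are made up for illustration. The last lines also check the "shrinks variance" point on the `age` column.

```python
import numpy as np
import pandas as pd
from sklearn.impute import SimpleImputer

# Hypothetical toy data: salary is skewed by one very large value.
df = pd.DataFrame({
    "age": [25.0, 32.0, np.nan, 41.0, 29.0, np.nan],
    "salary": [30_000.0, 42_000.0, 39_000.0, np.nan, 250_000.0, 36_000.0],
    "city": pd.Series(["Pune", "Delhi", np.nan, "Delhi", "Mumbai", "Delhi"], dtype=object),
})

mean_imputer = SimpleImputer(strategy="mean")
median_imputer = SimpleImputer(strategy="median")
mode_imputer = SimpleImputer(strategy="most_frequent")

age_filled = mean_imputer.fit_transform(df[["age"]]).ravel()
salary_filled = median_imputer.fit_transform(df[["salary"]]).ravel()
city_filled = mode_imputer.fit_transform(df[["city"]]).ravel()

# Filling with the mean adds points with zero deviation, so variance drops.
variance_before = df["age"].var(ddof=0)   # computed on observed values only
variance_after = age_filled.var()

print(age_filled, salary_filled, city_filled, sep="\n")
print(f"age variance before: {variance_before:.2f}, after: {variance_after:.2f}")
```

Note that the median fill for `salary` ignores the 250,000 outlier, which is why median is the safer choice for skewed columns.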

2. Constant Value Imputation

Fill missing values with placeholders:

- "Unknown"

- 0

- "Other"

Great for categorical data and tree-based models.

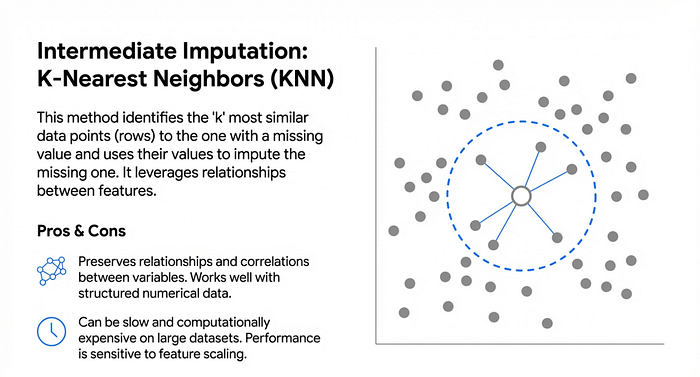
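A minimal sketch of constant-value imputation with `SimpleImputer(strategy="constant")`. The columns are hypothetical, and filling a numeric column with 0 is only sensible when zero is a meaningful value for that feature (an assumption made here for `num_purchases`).

```python
import numpy as np
import pandas as pd
from sklearn.impute import SimpleImputer

# Hypothetical toy data with gaps in a categorical and a numeric column.
df = pd.DataFrame({
    "city": pd.Series(["Pune", np.nan, "Delhi", np.nan], dtype=object),
    "num_purchases": [3.0, np.nan, 1.0, np.nan],
})

# A dedicated placeholder category keeps "missing" visible to the model.
cat_imputer = SimpleImputer(strategy="constant", fill_value="Unknown")
# Assumes a missing purchase count really means "no purchases".
num_imputer = SimpleImputer(strategy="constant", fill_value=0)

df["city"] = cat_imputer.fit_transform(df[["city"]]).ravel()
df["num_purchases"] = num_imputer.fit_transform(df[["num_purchases"]]).ravel()

print(df)
```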

3. k-Nearest Neighbors (KNN) Imputation

For each missing point, find similar rows and use their values.

Pros

- Preserves relationships

- Works well with structured numerical data

Cons

- Slow on large datasets

- Sensitive to scaling

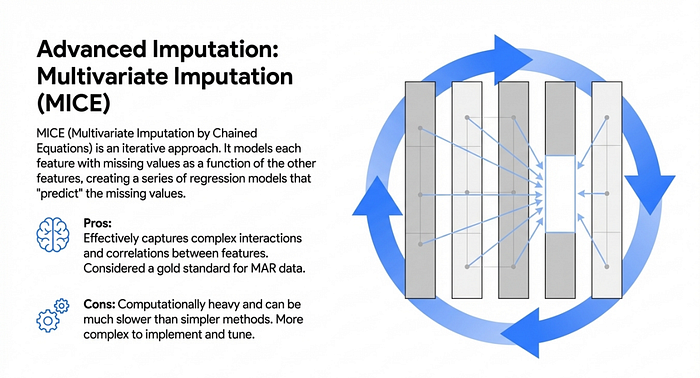
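The sketch below uses scikit-learn's `KNNImputer` on a made-up two-column array (age, salary). Because KNN is sensitive to scaling, the features are standardized first; otherwise the salary column would dominate the distance. The data and `n_neighbors=2` are illustrative assumptions.

```python
import numpy as np
from sklearn.impute import KNNImputer
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Columns: age, salary. Row 2 has a missing salary.
X = np.array([
    [25.0, 30_000.0],
    [27.0, 32_000.0],
    [26.0, np.nan],      # closest in age to the first two rows
    [52.0, 90_000.0],
    [55.0, 95_000.0],
])

# StandardScaler ignores NaNs when fitting and keeps them when transforming.
knn_pipeline = make_pipeline(StandardScaler(), KNNImputer(n_neighbors=2))
X_scaled_filled = knn_pipeline.fit_transform(X)

# Map the result back to the original units for readability.
scaler = knn_pipeline.named_steps["standardscaler"]
X_filled = scaler.inverse_transform(X_scaled_filled)

print(X_filled[2])
```

The missing salary is filled with the average of its two nearest neighbours (the rows with ages 25 and 27), not with the global mean, which is what "preserves relationships" means in practice.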

4. Multivariate Imputation (MICE)

Each feature with missing values is modeled using other features.

Uses regressions iteratively.

Pros

- Captures feature interactions

- Works well for MAR data

Cons

- Computationally heavy

- Harder to tune

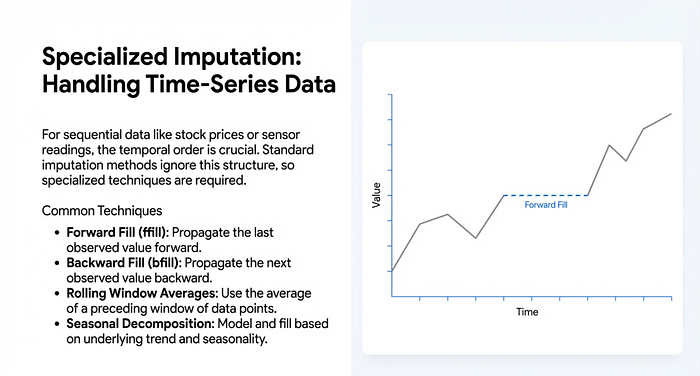
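scikit-learn offers a MICE-inspired tool, `IterativeImputer`. It is still marked experimental, so it needs an explicit enabling import, and by default it returns a single imputation rather than the multiple imputed datasets of classic MICE. The sketch below uses synthetic data where one feature is almost a linear function of the other (an assumption chosen so the benefit is easy to see) and compares the error against a plain mean fill.

```python
import numpy as np
from sklearn.experimental import enable_iterative_imputer  # noqa: F401 (required to enable the import below)
from sklearn.impute import IterativeImputer

rng = np.random.default_rng(0)
n = 500
x1 = rng.normal(size=n)
x2 = 2.0 * x1 + rng.normal(scale=0.1, size=n)   # x2 is strongly related to x1
X_true = np.column_stack([x1, x2])

# Hide about 20% of x2 at random.
X_missing = X_true.copy()
missing_rows = rng.random(n) < 0.2
X_missing[missing_rows, 1] = np.nan

# Each incomplete feature is regressed on the others (BayesianRidge by default).
imputer = IterativeImputer(max_iter=10, random_state=0)
X_filled = imputer.fit_transform(X_missing)

def rmse(predicted, actual):
    return float(np.sqrt(np.mean((predicted - actual) ** 2)))

iterative_rmse = rmse(X_filled[missing_rows, 1], X_true[missing_rows, 1])
mean_rmse = rmse(np.nanmean(X_missing[:, 1]), X_true[missing_rows, 1])

print(f"iterative RMSE: {iterative_rmse:.3f}, mean-fill RMSE: {mean_rmse:.3f}")
```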

5. Time-Series Specific Imputation

For sequential data:

- Forward fill

- Backward fill

- Rolling window averages

- Seasonal trend decomposition

These techniques respect temporal order, which is crucial for forecasting tasks.

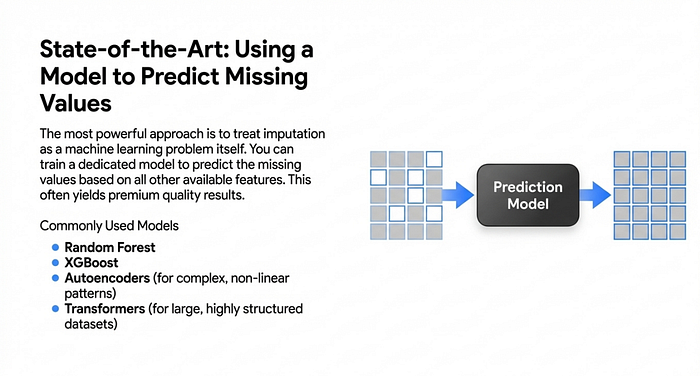
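The first three techniques map directly onto pandas calls. The sketch below uses a short made-up daily series and also shows time-based interpolation as a related option; seasonal decomposition is left out to keep it short. The rolling fill uses a trailing window shifted by one step, so each gap is filled only from past values.

```python
import numpy as np
import pandas as pd

idx = pd.date_range("2025-01-01", periods=8, freq="D")
s = pd.Series([10.0, np.nan, np.nan, 16.0, 18.0, np.nan, 22.0, 24.0], index=idx)

forward_filled = s.ffill()                          # carry the last observation forward
backward_filled = s.bfill()                         # pull the next observation backward
time_interpolated = s.interpolate(method="time")    # straight line between known neighbours

# Rolling-window fill: mean of the observed values in the previous 3 time steps.
trailing_mean = s.rolling(window=3, min_periods=1).mean().shift(1)
rolling_filled = s.fillna(trailing_mean)

print(pd.DataFrame({
    "original": s,
    "ffill": forward_filled,
    "bfill": backward_filled,
    "interpolate": time_interpolated,
    "rolling": rolling_filled,
}))
```

Backward fill uses future values, so it is usually unsuitable for forecasting features where that information would not be available at prediction time.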

6. Model-Based Imputation

You can train a machine learning model just to predict missing values.

(Don’t be put off by the heavy names; I will cover all of them in upcoming chapters.)

Common choices:

- Random forest

- XGBoost

- Autoencoders (deep learning)

- Transformers (for large structured datasets)

This often gives high-quality imputations, at the cost of extra compute.

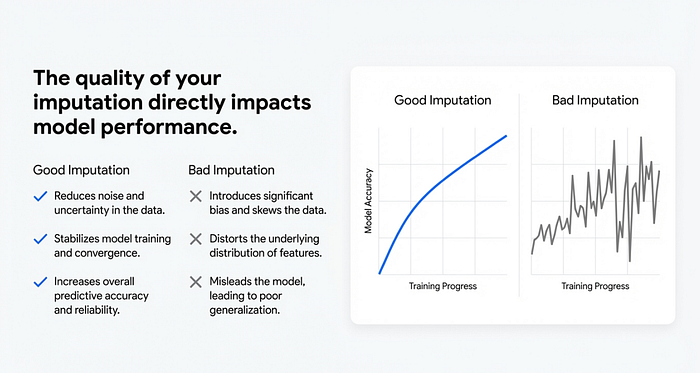
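As a rough sketch of the idea, the snippet below trains a random forest on the rows where `salary` is known and uses it to predict the rows where it is missing. The dataset, column names, and the salary formula are synthetic assumptions; a real project would also need to handle missing values in the predictor columns.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(1)
n = 400

# Synthetic data (illustrative assumption): salary depends on experience and age.
df = pd.DataFrame({
    "age": rng.integers(20, 60, size=n).astype(float),
    "years_experience": rng.integers(0, 30, size=n).astype(float),
})
df["salary"] = (20_000 + 1_500 * df["years_experience"] + 300 * df["age"]
                + rng.normal(0, 2_000, size=n))

true_salary = df["salary"].copy()
missing = rng.random(n) < 0.25
df.loc[missing, "salary"] = np.nan

predictors = ["age", "years_experience"]
observed = df["salary"].notna()

# Train only on rows where the target column is known.
model = RandomForestRegressor(n_estimators=200, random_state=0)
model.fit(df.loc[observed, predictors], df.loc[observed, "salary"])

# Predict the missing entries and write them back.
df.loc[~observed, "salary"] = model.predict(df.loc[~observed, predictors])

rf_rmse = float(np.sqrt(np.mean((df.loc[missing, "salary"] - true_salary[missing]) ** 2)))
mean_rmse = float(np.sqrt(np.mean((true_salary[observed].mean() - true_salary[missing]) ** 2)))

print(f"random forest RMSE: {rf_rmse:,.0f}, mean-fill RMSE: {mean_rmse:,.0f}")
```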

How Imputation Affects Model Performance

Good imputation:

- reduces noise

- stabilizes model training

- increases predictive accuracy

Bad imputation:

- introduces bias

- changes distribution

- misleads the model

That’s why it’s crucial to evaluate models before and after imputation.

Practical Workflow Using Scikit-Learn

(We will discuss the scikit-learn library in detail later. This is just an overview of the code.)

```python
from sklearn.compose import ColumnTransformer
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline

# Split columns by type, because each type needs a different strategy.
numeric_features = ['age', 'salary']
categorical_features = ['gender', 'city']

# Median is robust to outliers in numeric columns.
numeric_transformer = Pipeline(steps=[
    ('imputer', SimpleImputer(strategy='median'))
])

# The most frequent category fills gaps in categorical columns.
categorical_transformer = Pipeline(steps=[
    ('imputer', SimpleImputer(strategy='most_frequent'))
])

# Apply each imputer only to its own group of columns.
preprocessor = ColumnTransformer(
    transformers=[
        ('num', numeric_transformer, numeric_features),
        ('cat', categorical_transformer, categorical_features)
    ]
)
```

How to Compare Imputation Methods

Build a consistent evaluation loop:

- Train models with each imputation method

- Use cross-validation

- Compare metrics like accuracy, RMSE, F1

- Pick the method with the best trade-off

Often you’ll find:

- Mean/median works well for simple datasets

- KNN/MICE work best for complex ones

- Model-based imputation wins when you need high accuracy
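The evaluation loop described above could look like the sketch below. It uses scikit-learn's bundled diabetes dataset with artificially injected gaps; the 15% missing rate, the `Ridge` model, and the choice of imputers are assumptions for illustration. Each imputer sits inside the pipeline, so it is refit on the training folds only during cross-validation.

```python
import numpy as np
from sklearn.datasets import load_diabetes
from sklearn.experimental import enable_iterative_imputer  # noqa: F401 (required to enable IterativeImputer)
from sklearn.impute import IterativeImputer, KNNImputer, SimpleImputer
from sklearn.linear_model import Ridge
from sklearn.model_selection import KFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_diabetes(return_X_y=True)

# Inject about 15% missing cells completely at random (illustrative assumption).
rng = np.random.default_rng(0)
X_missing = X.copy()
X_missing[rng.random(X.shape) < 0.15] = np.nan

imputers = {
    "mean": SimpleImputer(strategy="mean"),
    "median": SimpleImputer(strategy="median"),
    "knn": KNNImputer(n_neighbors=5),
    "iterative": IterativeImputer(max_iter=10, random_state=0),
}

# Same folds and same model for every imputer, so only the imputation differs.
cv = KFold(n_splits=5, shuffle=True, random_state=0)
results = {}
for name, imputer in imputers.items():
    pipeline = make_pipeline(imputer, StandardScaler(), Ridge(alpha=1.0))
    scores = cross_val_score(pipeline, X_missing, y, cv=cv,
                             scoring="neg_root_mean_squared_error")
    results[name] = -scores.mean()   # flip the sign back to a plain RMSE

for name, rmse in sorted(results.items(), key=lambda item: item[1]):
    print(f"{name:>10}: RMSE = {rmse:.2f}")
```

Which method comes out ahead depends on the dataset and the missingness pattern, so treat the ranking from any single run as a starting point rather than a rule.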

Best Practices

- Understand your missingness pattern first

- Avoid dropping unless necessary

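- Never impute before splitting into train/test: fit the imputer on the training set only, then apply it to the test set, so test-set statistics do not leak into training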

- Use domain knowledge wherever possible

- Evaluate multiple techniques, not just one
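The split-before-impute rule can be sketched as follows, on synthetic single-column data (the values and the 20% missing rate are assumptions). The imputer learns its median from the training rows only and reuses that same value on the test rows.

```python
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
X = rng.normal(loc=50.0, scale=10.0, size=(200, 1))
X[rng.random(X.shape) < 0.2] = np.nan

# Split first, while the data still contains its gaps.
X_train, X_test = train_test_split(X, test_size=0.25, random_state=0)

imputer = SimpleImputer(strategy="median")
X_train_filled = imputer.fit_transform(X_train)   # median learned from train only
X_test_filled = imputer.transform(X_test)         # same median reused, no refit

train_median = np.nanmedian(X_train)
print(f"median used for both sets: {imputer.statistics_[0]:.2f}")
```

Wrapping the imputer in a `Pipeline` together with the model achieves the same separation automatically during cross-validation.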

Data imputation is more than just filling blanks. It’s a crucial modeling decision that directly shapes your model’s performance.

If you found this helpful, consider subscribing to get my upcoming articles where I break down other preprocessing topics and model training tricks in a practical way.

Check out the previous editions of the Artificial Intelligence and Machine Learning chapters:

Tensors in Machine Learning (ML Chapter-1) : https://medium.com/@sayanwrites/tensors-in-machine-learning-the-clearest-explanation-youll-ever-read-ml-chapter-1-8c26de28b386

Data Preprocessing in Machine Learning (ML Chapter -2: Module-1) : https://medium.com/@sayanwrites/data-preprocessing-in-machine-learning-the-complete-guide-ml-chapter-2-module-1-eca7b37ed9d2

