Attention = Soft k-NN

Last Updated on September 23, 2025 by Editorial Team

Author(s): Joseph Robinson, Ph.D.

Originally published on Towards AI.

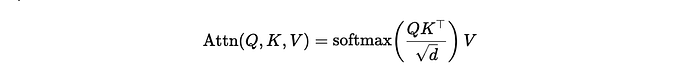

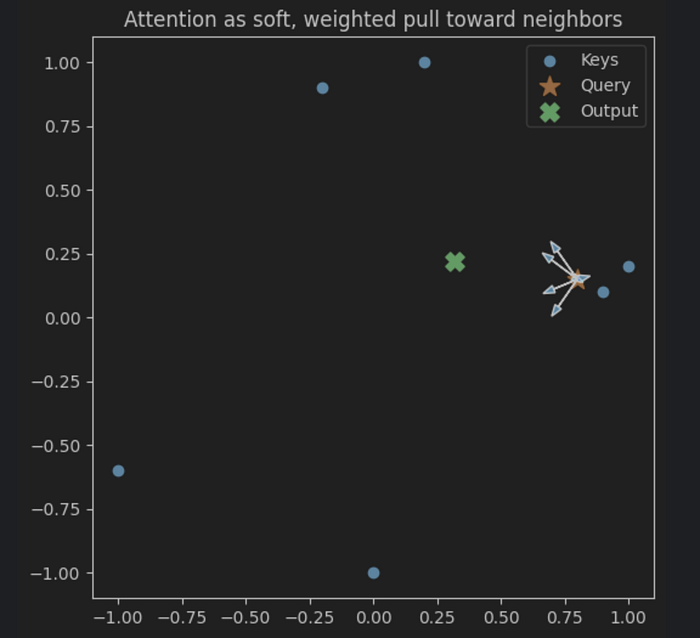

Transformers = soft k-NN. One query asks, “Who’s like me?” Softmax votes, and the neighbors whisper back a weighted average. That’s an attention head; a soft, differentiable k-NN: measure similarity → turn scores into weights (softmax) → average your neighbors (weighted sum of values).

TL;DR

- Scale by 1/sqrt(d) to keep softmax from saturating as the dimension grows.

- Masks decide who you’re allowed to look at (causal, padding, etc.).

Intuition (60 seconds)

- A query vector 𝐪 asks: “Which tokens are like me?”

- Compare 𝐪 with each key 𝒌ᵢ to get similarity scores.

- Softmax turns scores into a probability‐like distribution (heavier weight ⇢ more similar).

- Take the weighted average of value vectors 𝐯ᵢ.

That’s attention: a soft neighbor average. On its own it ignores token order; order enters only through positional information and masks.
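To make the four steps concrete, here is a minimal sketch for a single query. The numbers and the names `q`, `keys`, and `values` are made up for illustration; they are not the toy data used later in the article, and the scores are left unscaled to keep the arithmetic easy to follow.

```python
import numpy as np

# Hypothetical toy inputs (illustrative values only).
q = np.array([1.0, 0.0])                  # step 1: one query
keys = np.array([[1.0, 0.0],              # points the same way as q
                 [0.0, 1.0],              # orthogonal to q
                 [-1.0, 0.0]])            # opposite to q
values = np.array([[10.0], [20.0], [30.0]])

# Step 2: similarity scores (plain dot products, no scaling yet).
scores = keys @ q                         # [ 1.,  0., -1.]

# Step 3: softmax turns scores into non-negative weights that sum to 1.
weights = np.exp(scores - scores.max())
weights = weights / weights.sum()         # roughly 0.665, 0.245, 0.090

# Step 4: the output is the weighted average of the value vectors.
output = weights @ values                 # roughly 14.25

print(np.round(weights, 3))
print(np.round(output, 3))
```

The output lands closest to the value of the most similar key (10) but is pulled toward the others, which is the "soft" part of soft k-NN.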

The math (plain, minimal)

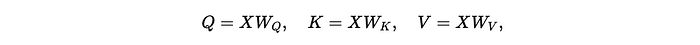

Single head, key/query dim 𝒅, value dim 𝒅ᵥ. With

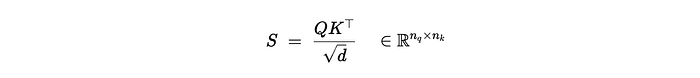

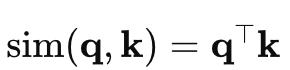

- Similarity (scaled dot product):

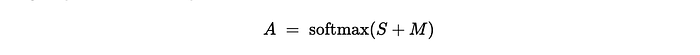

- (Optional) mask: add 𝐌 to the scores before the softmax.

- Weights (softmax row-wise)

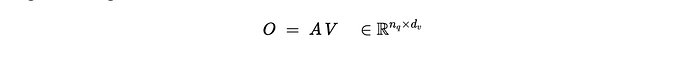

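- Weighted average of values: each output row is the weighted average of the rows of V, i.e. the matrix product AV, where A holds the softmax weights.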
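Written out in one place, the three steps read roughly as follows. This assumes the row-stacked shapes used in the NumPy code later in the article (Q is n_q × d, K is n_k × d, V is n_k × d_v). The symbol O for the output is a label introduced here for convenience, and M is the all-zero matrix when no mask is used.

```latex
\begin{aligned}
S &= \frac{Q K^{\top}}{\sqrt{d}} \in \mathbb{R}^{n_q \times n_k}, \\
A_{ij} &= \frac{\exp(S_{ij} + M_{ij})}{\sum_{j'} \exp(S_{ij'} + M_{ij'})}, \\
O &= A V \in \mathbb{R}^{n_q \times d_v}.
\end{aligned}
```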

Why the 1/sqrt{d} scaling?

Dot products of random d-dimensional vectors (zero-mean, unit-variance entries) have variance 𝒅, so their typical magnitude grows like sqrt{𝒅}. Unscaled scores push softmax toward winner-take-all (one weight ≈1, others ≈0), which shrinks the gradients flowing through the softmax. Dividing by sqrt{𝒅} keeps the score variance, and thus the entropy of the softmax, in a healthy range.
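A short derivation of that growth rate, under the assumption that the entries of q and k are mutually independent with zero mean and unit variance (an idealization; trained projections only approximately satisfy it):

```latex
\begin{aligned}
q^{\top}k &= \sum_{i=1}^{d} q_i k_i, \qquad
\mathbb{E}[q_i k_i] = \mathbb{E}[q_i]\,\mathbb{E}[k_i] = 0, \\
\operatorname{Var}(q_i k_i) &= \mathbb{E}[q_i^2]\,\mathbb{E}[k_i^2] = 1, \\
\operatorname{Var}(q^{\top}k) &= \sum_{i=1}^{d} \operatorname{Var}(q_i k_i) = d
\;\Longrightarrow\;
\operatorname{Var}\!\left(\frac{q^{\top}k}{\sqrt{d}}\right) = 1 .
\end{aligned}
```

The standard deviation of the raw score is therefore sqrt(d), which is exactly the factor the scaling removes.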

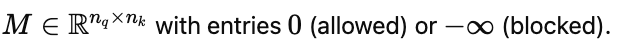

Masks (causal or padding)

Add 𝐌 before softmax:

- Causal (language modeling): block positions j>t for each timestep t.

- Padding: block tokens that are placeholders.

Formally, A=softmax(S+M) with Mᵢⱼ=0 if allowed, −∞ otherwise.
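As a small illustration of the formula above, the sketch below builds a causal M with literal −∞ entries and applies it to made-up scores that are all tied. The all-zero `S` is an assumption chosen so the effect of the mask is easy to read off: each row ends up uniform over the positions it is allowed to see.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - np.max(x, axis=axis, keepdims=True)   # numerical stability
    ex = np.exp(x)
    return ex / np.sum(ex, axis=axis, keepdims=True)

n = 4
S = np.zeros((n, n))                              # hypothetical scores: all ties

# M[i, j] = 0 where j <= i (allowed), -inf where j > i (future, blocked).
allowed = np.tril(np.ones((n, n), dtype=bool))
M = np.where(allowed, 0.0, -np.inf)

A = softmax(S + M, axis=-1)
print(np.round(A, 3))
# Row 0 attends only to itself; row 3 spreads 0.25 over all four positions.
```

Because exp(−∞) is exactly 0, blocked positions receive exactly zero weight and the remaining weights still sum to 1.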

Soft k-NN view (and variants)

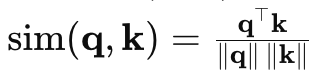

Swap the similarity and you swap the inductive bias:

- Dot product (direction and magnitude): sᵢ = 𝐪·𝒌ᵢ / sqrt{𝒅}

- Cosine (angle only; length-invariant): sᵢ = 𝐪·𝒌ᵢ / (‖𝐪‖ ‖𝒌ᵢ‖ τ)

- Negative distance, a Gaussian/RBF flavor (Euclidean neighborhoods): sᵢ = −‖𝐪 − 𝒌ᵢ‖² / (2τ²)

Then softmax → weights → weighted average as before. Temperature τ (or an implicit scale) controls softness: lower τ ⇒ sharper, more argmax-like behavior.
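The link to ordinary k-NN can be checked numerically. The sketch below is an illustration under made-up data: `soft_knn` and `hard_knn` are hypothetical helper names, and the scores are plain dot products divided by τ. As τ shrinks, the soft average approaches the value of the single best-matching key (hard 1-NN); as τ grows, it approaches the plain mean of all values.

```python
import numpy as np

def soft_knn(q, K, V, tau):
    """Softmax-weighted average of V with scores (K @ q) / tau."""
    scores = (K @ q) / tau
    w = np.exp(scores - scores.max())
    w = w / w.sum()
    return w @ V, w

def hard_knn(q, K, V, k):
    """Plain average of the values belonging to the k highest-scoring keys."""
    top = np.argsort(K @ q)[-k:]
    return V[top].mean(axis=0)

# Hypothetical keys, values, and query (illustrative only).
K = np.array([[1.0, 0.0], [0.8, 0.6], [0.0, 1.0], [-1.0, 0.0]])
V = np.array([[1.0], [2.0], [3.0], [4.0]])
q = np.array([1.0, 0.0])

for tau in [10.0, 1.0, 0.1, 0.01]:
    out, w = soft_knn(q, K, V, tau)
    print(f"tau={tau:<5} weights={np.round(w, 3)} out={np.round(out, 3)}")

print("hard 1-NN:", hard_knn(q, K, V, k=1))
```

Note that a low temperature recovers 1-NN specifically; a softmax has no built-in k, so "soft k-NN" here means every neighbor votes with a weight that decays with dissimilarity.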

Minimal NumPy (single head, clarity over speed)

Goal: clarity over speed. This is the whole operation you’ve seen in papers.

    import numpy as np

    def softmax(x, axis=-1):
        x = x - np.max(x, axis=axis, keepdims=True)  # numerical stability
        ex = np.exp(x)
        return ex / np.sum(ex, axis=axis, keepdims=True)

    def attention(Q, K, V, mask=None):
        """
        Q: (n_q, d), K: (n_k, d), V: (n_k, d_v)
        mask: (n_q, n_k) with 0=keep, -inf=block (or None)
        Returns: (n_q, d_v), (n_q, n_k)
        """
        d = Q.shape[-1]
        scores = (Q @ K.T) / np.sqrt(d)  # (n_q, n_k)
        if mask is not None:
            scores = scores + mask
        weights = softmax(scores, axis=-1)  # (n_q, n_k)
        return weights @ V, weights

A tiny toy: 6 tokens in 2-D

We’ll create 6 token embeddings, a single query, and watch the weights behave like a soft neighbor pick.

    # Toy data
    np.random.seed(7)
    n_tokens, d, d_v = 6, 2, 2

    K = np.array([[ 1.0,  0.2],
                  [ 0.9,  0.1],
                  [ 0.2,  1.0],
                  [-0.2,  0.9],
                  [ 0.0, -1.0],
                  [-1.0, -0.6]])

    # Values as a simple linear map of keys (intuition)
    Wv = np.array([[0.7, 0.1],
                   [0.2, 0.9]])
    V = K @ Wv

    # Query near the first cluster
    Q = np.array([[0.8, 0.15]])  # (1, d)

    out, W = attention(Q, K, V)
    print("Attention weights:", np.round(W, 3))
    print("Output vector:", np.round(out, 3))
    # -> weights ~ heavier on the first two neighbors

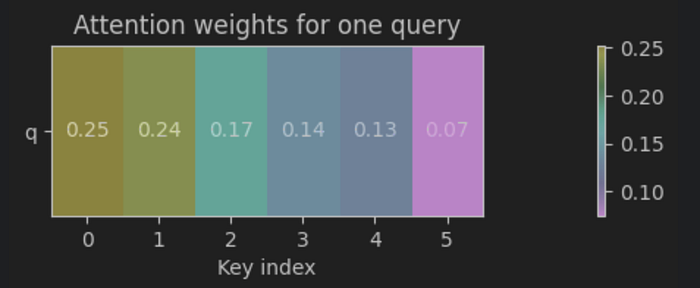

Attention weights: [[0.252 0.236 0.174 0.138 0.126 0.075]]

Output vector: [[0.318 0.221]]

Let’s visualize the weights from the above toy example:

Additionally, we can visualize this geometrically.

Cosine vs dot product vs RBF (soft k-NN flavors)

Try swapping the similarity and observe the heatmap change.

    def attention_with_sim(Q, K, V, sim="dot", tau=1.0, eps=1e-9):
        """Attention with a pluggable similarity; tau is used by "cos" and "rbf" only."""
        if sim == "dot":
            # Fixed 1/sqrt(d) scale; tau is ignored for this branch.
            scores = (Q @ K.T) / np.sqrt(K.shape[-1])
        elif sim == "cos":
            Qn = Q / (np.linalg.norm(Q, axis=-1, keepdims=True) + eps)
            Kn = K / (np.linalg.norm(K, axis=-1, keepdims=True) + eps)
            scores = (Qn @ Kn.T) / tau
        elif sim == "rbf":
            # scores = -||q-k||^2 / (2*tau^2)
            q2 = np.sum(Q**2, axis=-1, keepdims=True)    # (n_q, 1)
            k2 = np.sum(K**2, axis=-1, keepdims=True).T  # (1, n_k)
            qk = Q @ K.T                                 # (n_q, n_k)
            d2 = q2 + k2 - 2*qk
            scores = -d2 / (2 * tau**2)
        else:
            raise ValueError("sim must be one of {dot, cos, rbf}")
        W = softmax(scores, axis=-1)
        return W @ V, W, scores

    for sim in ["dot", "cos", "rbf"]:
        out_s, W_s, _ = attention_with_sim(Q, K, V, sim=sim, tau=0.5)
        print(sim, "weights:", np.round(W_s, 3), "out:", np.round(out_s, 3))

dot weights: [[0.252 0.236 0.174 0.138 0.126 0.075]] out: [[0.318 0.221]]

cos weights: [[0.397 0.394 0.113 0.05 0.037 0.008]] out: [[0.576 0.287]]

rbf weights: [[0.443 0.471 0.055 0.021 0.01 0. ]] out: [[0.651 0.268]]

Takeaway: similarity choice = inductive bias. Cosine focuses on angle; RBF on Euclidean neighborhoods; dot mixes both magnitude and direction.
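One way to see the difference in inductive bias is to lengthen a single key without changing its direction. The sketch below is an illustration only: `dot_weights` and `cos_weights` are hypothetical helpers that mirror the "dot" and "cos" branches above, and the 10x factor is arbitrary. Dot-product attention shifts toward the longer key, while cosine attention is essentially unchanged.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - np.max(x, axis=axis, keepdims=True)
    ex = np.exp(x)
    return ex / np.sum(ex, axis=axis, keepdims=True)

def dot_weights(Q, K):
    return softmax(Q @ K.T / np.sqrt(K.shape[-1]))

def cos_weights(Q, K, tau=1.0, eps=1e-9):
    Qn = Q / (np.linalg.norm(Q, axis=-1, keepdims=True) + eps)
    Kn = K / (np.linalg.norm(K, axis=-1, keepdims=True) + eps)
    return softmax(Qn @ Kn.T / tau)

Q = np.array([[0.8, 0.15]])
K = np.array([[1.0, 0.2], [0.2, 1.0], [-1.0, -0.6]])

K_big = K.copy()
K_big[1] *= 10.0   # same direction as before, ten times the length

print("dot:", np.round(dot_weights(Q, K), 3), "->", np.round(dot_weights(Q, K_big), 3))
print("cos:", np.round(cos_weights(Q, K), 3), "->", np.round(cos_weights(Q, K_big), 3))
```

If key norms carry meaning in your data (for example, confidence or frequency), the dot product uses that signal; if they are noise, cosine removes it.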

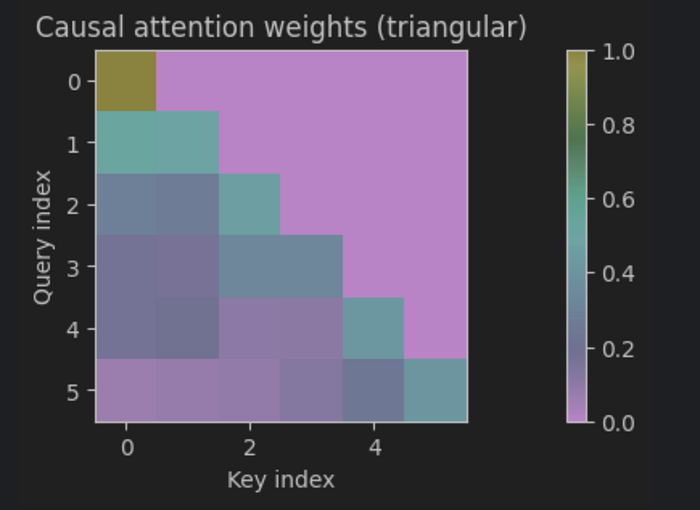

Causal and padding masks (language modeling)

- Causal: prevent peeking at future tokens. For position t, block every position j > t.

- Padding: placeholder (pad) tokens shouldn’t receive attention.

    # Causal mask for sequence length n (upper-triangular blocked)
    n = 6
    mask = np.triu(np.ones((n, n)) * -1e9, k=1)

    # Visualize structure by setting Q=K=V (toy embeddings)
    X = K
    out_seq, A = attention(X, X, X, mask=mask)

    # Row sums stay 1.0 (softmax is row-wise):
    print(np.allclose(np.sum(A, axis=1), 1.0))

True

Padding mask tip: start from a boolean mask of padding positions, turn it into an additive matrix with −∞ (or a large negative number such as −1e9) at those positions, and reuse the same attention function.
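A minimal sketch of that tip, assuming a sequence of five positions whose last two are padding. The helper `padding_mask`, the `is_pad` array, and the random embeddings `X` are hypothetical; `softmax` and `attention` are repeated from above only so the block runs on its own.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - np.max(x, axis=axis, keepdims=True)
    ex = np.exp(x)
    return ex / np.sum(ex, axis=axis, keepdims=True)

def attention(Q, K, V, mask=None):
    scores = (Q @ K.T) / np.sqrt(Q.shape[-1])
    if mask is not None:
        scores = scores + mask
    weights = softmax(scores, axis=-1)
    return weights @ V, weights

def padding_mask(is_pad, n_q):
    """is_pad: (n_k,) bool, True at padded key positions.
    Returns an additive (n_q, n_k) mask: 0 = keep, -inf = block."""
    row = np.where(is_pad, -np.inf, 0.0)   # (n_k,)
    return np.tile(row, (n_q, 1))          # same blocked columns for every query

# Hypothetical example: positions 3 and 4 are padding.
is_pad = np.array([False, False, False, True, True])
rng = np.random.default_rng(0)
X = rng.normal(size=(5, 4))

out, A = attention(X, X, X, mask=padding_mask(is_pad, n_q=X.shape[0]))
print(np.round(A, 3))   # the last two columns are exactly 0
```

One caveat with this implementation: if every key in a row is blocked, the softmax has nothing to normalize over and the row becomes NaN, so a mask should always leave at least one allowed position per query. Rows that belong to padded queries are still computed here and are typically ignored downstream.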

Quick demo: why the scaling works

Generate random 𝐐, 𝐊 with large 𝒅; compare softmax entropy with vs. without 1/sqrt{𝒅}.

    def entropy(p, axis=-1, eps=1e-12):
        p = np.clip(p, eps, 1.0)
        return -np.sum(p * np.log(p), axis=axis)

    nq = nk = 64
    dims = [256*(2**i) for i in range(7)]  # 256..16,384
    trials = 5
    H_max = np.log(nk)

    for dim in dims:
        H_u = []
        H_s = []
        for _ in range(trials):
            Q = np.random.randn(nq, dim)
            K = np.random.randn(nk, dim)
            S_unscaled = Q @ K.T
            S_scaled = S_unscaled / np.sqrt(dim)
            H_u.append(entropy(softmax(S_unscaled, axis=-1), axis=-1).mean())
            H_s.append(entropy(softmax(S_scaled, axis=-1), axis=-1).mean())
        print(f"{dim:>6} | unscaled: {np.mean(H_u):.3f} scaled: {np.mean(H_s):.3f} (max={H_max:.3f})")

       256 | unscaled: 0.280 scaled: 3.686 (max=4.159)
       512 | unscaled: 0.165 scaled: 3.672 (max=4.159)
      1024 | unscaled: 0.124 scaled: 3.682 (max=4.159)
      2048 | unscaled: 0.078 scaled: 3.669 (max=4.159)
      4096 | unscaled: 0.063 scaled: 3.689 (max=4.159)
      8192 | unscaled: 0.041 scaled: 3.685 (max=4.159)
     16384 | unscaled: 0.024 scaled: 3.694 (max=4.159)

Why is this important?

Short answer: the scale keeps softmax from collapsing.

- The numbers above show entropy of roughly 0.02–0.28 (unscaled) vs. ~3.68 (scaled). With 64 keys, the maximum possible entropy is ln(64)≈4.16.

- Unscaled ⇒ near-one-hot distributions: one key hogs the mass, others ~0. Vanishing/unstable gradients, brittle attention.

- Scaled by 1/sqrt{𝒅} ⇒ high-entropy, well-spread weights: multiple neighbors contribute; gradients remain healthy.

Why this happens: For random vectors with independent and identically distributed (iid) zero-mean, unit-variance entries, qᵀk has variance 𝒅, so its typical magnitude is sqrt{𝒅}. As 𝒅 grows, the logits’ scale grows and softmax saturates. Dividing by sqrt{𝒅} normalizes the logit variance to O(1), keeping the “temperature” of the softmax roughly constant across dimensionalities.

Net: 1/sqrt{𝒅} preserves learnability and stability — attention remains a soft k-NN instead of degenerating into hard argmax.
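The variance claim can be checked empirically. The sketch below assumes standard normal entries, the same distribution as in the entropy demo; the sample size `n_pairs` and the list of dimensions are arbitrary choices. The ratio var(q·k)/d should hover around 1 for every d, which is the same as saying the scaled score has variance close to 1.

```python
import numpy as np

rng = np.random.default_rng(0)
n_pairs = 2000                       # number of (q, k) pairs sampled per dimension
ratios = {}

for d in [16, 64, 256, 1024]:
    q = rng.standard_normal((n_pairs, d))
    k = rng.standard_normal((n_pairs, d))
    dots = np.sum(q * k, axis=1)     # one dot product per pair
    ratios[d] = dots.var() / d       # equals the variance of dots / sqrt(d)
    print(f"d={d:>5}  var(q.k)={dots.var():8.1f}  var(q.k)/d={ratios[d]:.3f}")
```

Printed values fluctuate with the seed and sample size, so treat them as estimates, not exact constants.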

Failure modes (and simple fixes)

1️⃣ Flat similarities ⇒ blurry outputs.

- Fix: lower temperature / raise scale; learn projections W_Q, W_K to separate tokens.

2️⃣ One token dominates (over-confident softmax) ⇒ brittle.

- Fix: temperature tuning, attention dropout, and multi-head diversity.

3️⃣ Wrong metric.

- Fix: cosine for angle-only; RBF for Euclidean locality; dot when magnitude carries signal.
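The first two failure modes can be spotted by looking at the entropy of each attention row. The sketch below is one possible diagnostic, not an established recipe: `diagnose` is a hypothetical helper, the thresholds `low` and `high` are arbitrary illustration values, and the score rows are made up to show one flat, one peaky, and one healthy case.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - np.max(x, axis=axis, keepdims=True)
    ex = np.exp(x)
    return ex / np.sum(ex, axis=axis, keepdims=True)

def row_entropy(A, eps=1e-12):
    A = np.clip(A, eps, 1.0)
    return -np.sum(A * np.log(A), axis=-1)

def diagnose(scores, tau=1.0, low=0.2, high=0.95):
    """Label each row 'peaky', 'flat', or 'ok' by normalized entropy.
    low/high are arbitrary thresholds chosen for illustration."""
    A = softmax(scores / tau, axis=-1)
    h = row_entropy(A) / np.log(A.shape[-1])   # 0 = one-hot, 1 = uniform
    labels = np.where(h < low, "peaky", np.where(h > high, "flat", "ok"))
    return h, labels

scores = np.array([[0.01, 0.00, -0.01, 0.02],   # nearly tied logits
                   [9.0, 0.0, 0.0, 0.0],        # one dominant logit
                   [1.5, 0.5, 0.0, -1.0]])      # moderate spread

for tau in [1.0, 0.01, 5.0]:
    h, labels = diagnose(scores, tau)
    print(f"tau={tau:<5}", np.round(h, 2), labels)
```

In this toy case, lowering the temperature un-flattens the first row and raising it softens the second, which matches the fixes listed above; a single global temperature cannot do both at once, which is part of why learned projections and multiple heads matter.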

From this to “Transformer attention”

Add learned projections: Q = XW_Q, K = XW_K, V = XW_V, where X holds the token embeddings as rows.

Then replicate heads h=1…H, concatenate the outputs, and mix with W_O. Same core: soft neighbor averaging, just in multiple learned subspaces.
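A minimal multi-head sketch in NumPy, for shape intuition only. It makes several assumptions: square d_model × d_model projection matrices, d_model divisible by the number of heads, random untrained weights, and no masks, biases, or dropout. The function name `multi_head_attention` and its argument names are illustrative.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - np.max(x, axis=axis, keepdims=True)
    ex = np.exp(x)
    return ex / np.sum(ex, axis=axis, keepdims=True)

def multi_head_attention(X, Wq, Wk, Wv, Wo, n_heads):
    """X: (n, d_model); Wq, Wk, Wv, Wo: (d_model, d_model)."""
    n, d_model = X.shape
    d_head = d_model // n_heads                  # assumes an exact split

    def split(M):                                # (n, d_model) -> (H, n, d_head)
        return M.reshape(n, n_heads, d_head).transpose(1, 0, 2)

    Q, K, V = split(X @ Wq), split(X @ Wk), split(X @ Wv)
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(d_head)    # (H, n, n)
    A = softmax(scores, axis=-1)                 # one soft k-NN per head
    heads = A @ V                                # (H, n, d_head)
    concat = heads.transpose(1, 0, 2).reshape(n, d_model)  # stitch heads together
    return concat @ Wo, A

rng = np.random.default_rng(0)
n, d_model, H = 5, 8, 2
X = rng.normal(size=(n, d_model))
Wq, Wk, Wv, Wo = (rng.normal(size=(d_model, d_model)) / np.sqrt(d_model)
                  for _ in range(4))

out, A = multi_head_attention(X, Wq, Wk, Wv, Wo, n_heads=H)
print(out.shape, A.shape)   # (5, 8) (2, 5, 5)
```

Each head scales by the square root of its own dimension (d_head, not d_model), since that is the length of the vectors actually being dotted.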

Conclusion

Attention isn’t mystical — it’s soft k-NN with learnable projections. A query asks “who’s like me,” softmax turns similarities into a distribution, and the output is a weighted neighbor average. Two knobs make it work in practice:

- Scale by 1/sqrt{𝒅} to keep logits O(1) and preserve entropy — our demo shows saturation without it (near-argmax), and healthy, dimension-invariant softness with it.

- Masks are routing rules: causal for “no peeking,” padding for “ignore blanks.”

Your similarity choice is an inductive bias: dot (magnitude+direction), cosine (angle), RBF (Euclidean neighborhoods). Multi-head runs this in parallel subspaces and mixes them.

When things fail: flat sims ⇒ blurry; peaky sims ⇒ brittle; wrong metric ⇒ misfocus. Fix with temperature/scale, dropout, better projections, or a metric that matches the geometry of your data.

Bottom line: think of attention as probabilistic neighbor averaging with a thermostat. Get the temperature right (1/sqrt{𝒅}), pick the right neighborhood (similarity + masks), and the rest is engineering.
