Author(s): Przemek Chojecki

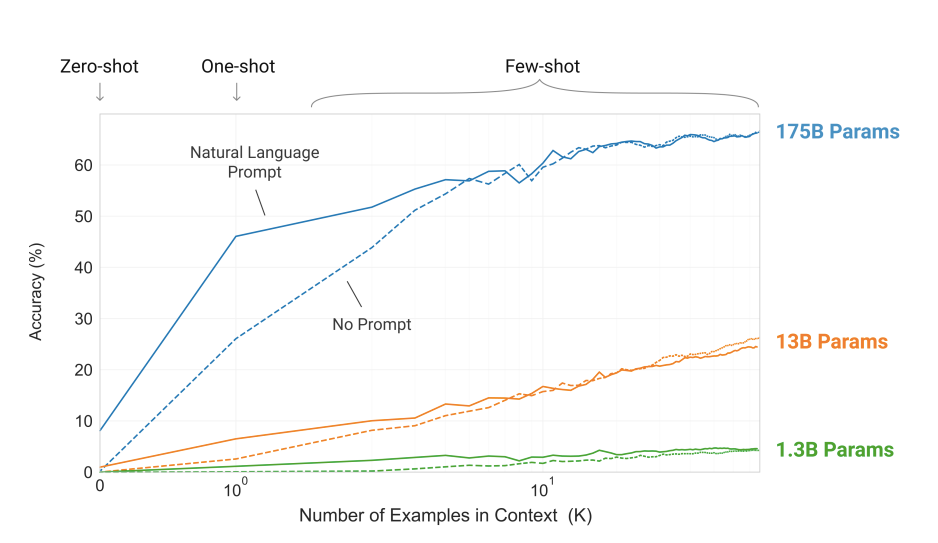

With 175 billion parameters, 10 times more than the previous largest model, GPT-3 is the largest Transformer trained to date.

Continue reading on Towards AI — Multidisciplinary Science Journal »

Published via Towards AI
